A training dashboard should do one thing exceptionally well: help leadership decide whether learning investments are improving workforce capability and business performance. If your dashboard only shows course completions, attendance, or satisfaction scores, it is reporting activity—not proving impact.

For L&D leaders, HR business partners, operations directors, and executives, the real challenge is not collecting training data. It is connecting learning activity to outcomes leadership actually cares about: productivity, quality, readiness, retention, compliance, and revenue contribution. A strong dashboard closes that gap.

Leadership does not need more learning data. Leadership needs answers to business questions.

A useful training dashboard should start by clarifying what leaders want to know across four areas:

This distinction matters because many organizations confuse training reporting with learning impact measurement.

Reporting activity is straightforward. It tells you:

That information is useful, but it is not enough for executive decision-making.

Proving learning impact requires a higher standard. It means your training dashboard can show:

In other words, activity explains what happened in the learning program. Impact explains why it mattered to the business.

A single dashboard rarely serves every stakeholder equally well. The best approach is to design views for specific audiences.

Typical dashboard audiences include:

When you know the audience, you can reduce clutter and present only the metrics that support real decisions.

A training dashboard becomes powerful when it supports decisions, not status updates. That requires explicit success criteria.

Before you choose charts or data fields, define:

For example, instead of saying, “We launched onboarding training,” define success as, “Reduce time to proficiency for new hires by 20% within 90 days of onboarding completion.”

That level of precision gives your training dashboard a clear job: measure progress against a business outcome.
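To make the onboarding example concrete, here is a minimal sketch of checking the 20% target. It assumes you already have days-to-proficiency figures for a baseline cohort hired before the program and for hires who completed onboarding. The function name and sample numbers are hypothetical and only illustrate the arithmetic.

```python
from statistics import mean


def time_to_proficiency_reduction(baseline_days, post_program_days):
    """Return the percentage reduction in average days to proficiency.

    baseline_days: days to proficiency for a hypothetical pre-program cohort
    post_program_days: days to proficiency for hires who completed onboarding
    """
    baseline = mean(baseline_days)
    current = mean(post_program_days)
    return (baseline - current) / baseline * 100


# Illustrative figures only, not real results.
baseline_cohort = [60, 55, 65, 60]  # average: 60 days
trained_cohort = [45, 47, 43, 45]   # average: 45 days

reduction = time_to_proficiency_reduction(baseline_cohort, trained_cohort)
target_met = reduction >= 20.0  # the success criterion stated above
print(f"Reduction: {reduction:.1f}% (target met: {target_met})")
```

In this made-up case the average drops from 60 to 45 days, a 25% reduction, so the target is met. The 90-day window would be enforced upstream by only counting hires whose proficiency date falls within 90 days of completion.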

Building a credible dashboard is not a design exercise first. It is a measurement design exercise. Start with strategic alignment, then define metrics, then build the reporting layer.

Every training dashboard should begin with leadership priorities, not with whatever data is easiest to extract from the LMS.

Map each training initiative to:

For example:

Once that mapping is complete, narrow your dashboard to a small set of high-value metrics leadership can scan quickly.

This is the foundation of a dashboard that can stand up in leadership reviews.

A high-performing training dashboard combines learning data with workforce and business data. If you only pull from the LMS, your dashboard will stay trapped at the activity level.

You need a balanced measurement model that includes:

Most organizations need to integrate data from several systems, such as:

If these sources are not aligned, trust in the training dashboard will decline fast. Standardized definitions and consistent joins are essential.
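A minimal sketch of what "consistent joins" can mean in practice: one shared rule for the join key, one shared definition of completion, and an explicit list of records that failed to match. The record layouts, field names, and helper functions are hypothetical; real LMS and HRIS extracts will look different.

```python
# Hypothetical extracts: LMS completion records and HRIS employee records.
lms_records = [
    {"employee_id": "E001", "course": "Onboarding", "status": "completed"},
    {"employee_id": "E002", "course": "Onboarding", "status": "in_progress"},
    {"employee_id": "e003 ", "course": "Onboarding", "status": "Completed"},
    {"employee_id": "E999", "course": "Onboarding", "status": "completed"},
]
hris_records = {
    "E001": {"department": "Sales"},
    "E002": {"department": "Support"},
    "E003": {"department": "Sales"},
}


def normalize_id(raw_id):
    # One shared rule for the join key so every system matches the same way.
    return raw_id.strip().upper()


def is_completed(status):
    # One shared definition of "completion" (assumed: status text equals "completed").
    return status.strip().lower() == "completed"


def join_learning_to_hr(lms, hris):
    joined, unmatched = [], []
    for row in lms:
        key = normalize_id(row["employee_id"])
        hr = hris.get(key)
        if hr is None:
            # Surface join failures instead of silently dropping them.
            unmatched.append(key)
            continue
        joined.append({
            "employee_id": key,
            "department": hr["department"],
            "completed": is_completed(row["status"]),
        })
    return joined, unmatched


joined, unmatched = join_learning_to_hr(lms_records, hris_records)
```

Reporting the unmatched IDs alongside the dashboard is one way to make data-quality gaps visible before stakeholders find them.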

A training dashboard should help leaders answer three questions in seconds:

That means your design priorities should be clarity, credibility, and actionability.

A polished visual with weak governance is dangerous. It looks credible while creating confusion. A simpler dashboard with strong definitions and clear accountability is far more useful to leadership.

Not every metric belongs in an executive-facing training dashboard. To prove impact, focus on metrics that connect learner progress to workforce and business outcomes.

These are the essential building blocks. They tell you whether training reached the intended audience and whether learners progressed through the experience.

Core learning metrics:

These metrics help identify reach, adoption, and execution consistency. They do not prove impact alone, but they are necessary inputs.
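As a sketch, three of the learning metrics that executive views commonly use (participation rate, completion rate, and assessment pass rate) can be computed from simple program counts. The denominators here are assumptions: participation against the eligible audience, completion against enrolled learners, and pass rate against learners who took the assessment. Your own definitions may differ.

```python
from dataclasses import dataclass


@dataclass
class ProgramCounts:
    eligible: int   # employees in the intended audience
    enrolled: int   # employees who started the program
    completed: int  # employees who finished it
    assessed: int   # employees who took the assessment
    passed: int     # employees who passed the assessment


def safe_rate(numerator, denominator):
    # Avoid division by zero for programs with no eligible or enrolled learners yet.
    return numerator / denominator if denominator else 0.0


def core_learning_metrics(counts: ProgramCounts) -> dict:
    return {
        "participation_rate": safe_rate(counts.enrolled, counts.eligible),
        "completion_rate": safe_rate(counts.completed, counts.enrolled),
        "assessment_pass_rate": safe_rate(counts.passed, counts.assessed),
    }


# Hypothetical program counts.
metrics = core_learning_metrics(
    ProgramCounts(eligible=200, enrolled=160, completed=120, assessed=118, passed=100)
)
```

Choosing "enrolled" rather than "eligible" as the completion denominator changes the number leadership sees, which is exactly why these rules belong in a shared metric definition.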

This is where the training dashboard becomes strategic. Link learning participation and performance to downstream outcomes leadership already tracks.

Business indicators commonly tied to learning impact:

To make these indicators meaningful, include structured comparisons such as:

These views help leadership distinguish random movement from meaningful improvement.
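One common way to structure such a comparison is to measure the change in a KPI for the trained group and subtract the change for a comparable untrained group over the same period (a simple difference-in-differences). The sketch assumes you have average KPI values for both groups before and after the program. The function name and the figures are hypothetical.

```python
def outcome_lift(trained_pre, trained_post, comparison_pre, comparison_post):
    """Change in the trained group minus change in the comparison group."""
    trained_change = trained_post - trained_pre
    comparison_change = comparison_post - comparison_pre
    return {
        "trained_change": trained_change,
        "comparison_change": comparison_change,
        "lift": trained_change - comparison_change,
    }


# Hypothetical KPI: average orders processed per hour.
result = outcome_lift(
    trained_pre=20.0, trained_post=24.0,
    comparison_pre=20.5, comparison_post=21.5,
)
```

Here the trained group improves by 4.0 and the comparison group by 1.0, so about 3.0 units of improvement are not explained by the shared trend. This is still not proof of causation: it assumes both groups would otherwise have moved in parallel, so stating assumptions and other contributing factors remains important.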

A mature training dashboard uses both leading and lagging indicators.

Leading indicators show early momentum. They help you predict whether training is on track to influence outcomes.

Examples include:

Lagging indicators confirm whether business results improved after training had time to take effect.

Examples include:

The key is to interpret movement responsibly. If business outcomes improve after training, that is a strong signal—but not automatic proof of causation. Leadership will trust the dashboard more when you explicitly state:

This balanced interpretation builds credibility, especially in enterprise environments where multiple initiatives are running at once.

A good training dashboard structure depends on who uses it, how often it is reviewed, and how mature your data environment is.

The most effective training dashboard programs usually include multiple views, each designed for a different decision-maker.

Best for senior leadership and HR executives.

Include:

This view should be highly concise. Think scorecard plus trend highlights.
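A "scorecard plus trend" row can be reduced to a few fields: the latest value, its status against target, and the direction of the most recent change. The sketch below is one hypothetical way to compute them. It assumes each KPI series has at least two values and that you know whether higher or lower is better.

```python
def scorecard_row(name, values, target, higher_is_better=True):
    """Summarize a KPI series as latest value, status vs target, and trend direction.

    values must contain at least two periods, oldest first.
    """
    latest, previous = values[-1], values[-2]
    on_target = latest >= target if higher_is_better else latest <= target
    if latest == previous:
        trend = "flat"
    else:
        improving = (latest > previous) == higher_is_better
        trend = "improving" if improving else "declining"
    return {
        "kpi": name,
        "latest": latest,
        "target": target,
        "status": "on target" if on_target else "off target",
        "trend": trend,
    }


# Hypothetical executive scorecard rows.
rows = [
    scorecard_row("Completion rate", [0.70, 0.74, 0.78], target=0.80),
    scorecard_row("Days to proficiency", [62, 58, 55], target=56, higher_is_better=False),
]
```

Keeping the status and trend as separate fields lets the executive view show a KPI that is improving but still off target, which is often the most decision-relevant case.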

Best for L&D managers and program owners.

Include:

This view supports optimization and intervention.

Best for people managers and department heads.

Include:

This makes the dashboard actionable beyond the learning function.

Several structures consistently work well:

Choose the format based on the decision the user needs to make—not on visual preference alone.

Not every organization needs an advanced BI stack on day one. The right choice depends on skills, reporting frequency, and data complexity.

These are useful for:

They work well when data volume is manageable and the goal is to validate metric definitions before automation.

Limitations include:

BI platforms are the better fit when you need:

For most mid-sized and large organizations, this is where a training dashboard becomes sustainable and credible.

Studying sample dashboards is useful, but copying them rarely works.

Instead, review examples to learn:

The best templates are starting points, not final answers. Your training dashboard should reflect your own business goals, data maturity, governance model, and leadership expectations.

Many training dashboards fail for predictable reasons. The good news is that these mistakes are preventable.

A dashboard overloaded with metrics creates noise and weakens decision-making.

How to avoid it:

Limit executive views to the few KPIs tied directly to leadership decisions. Keep supporting detail in drill-down tabs.

Completion rates alone do not tell leadership whether training mattered.

How to avoid it:

Always pair learning data with a business baseline, comparison group, trend, or downstream performance metric.

If “completion,” “active learner,” or “proficiency” mean different things to different teams, trust disappears.

How to avoid it:

Create a metric dictionary with standardized definitions, owners, and calculation rules.
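A metric dictionary can live in a spreadsheet, but keeping it next to the calculation logic helps ensure every report uses the same rule. The sketch below is a hypothetical structure: the metric names, owners, and the proficiency threshold are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    definition: str                     # plain-language meaning agreed by stakeholders
    owner: str                          # team accountable for the number
    calculate: Callable[[dict], float]  # the single calculation rule every report uses


METRIC_DICTIONARY: Dict[str, MetricDefinition] = {
    "completion_rate": MetricDefinition(
        name="completion_rate",
        definition="Share of enrolled learners who finished all required modules",
        owner="L&D Operations",
        calculate=lambda d: d["completed"] / d["enrolled"] if d["enrolled"] else 0.0,
    ),
    "proficiency_rate": MetricDefinition(
        name="proficiency_rate",
        # Hypothetical rule: proficiency means an assessment score of 80 or higher.
        definition="Share of assessed learners scoring 80 or higher",
        owner="HR Analytics",
        calculate=lambda d: d["scored_80_plus"] / d["assessed"] if d["assessed"] else 0.0,
    ),
}


def compute(metric_name, data):
    # Every dashboard view calls the same rule instead of re-implementing it.
    return METRIC_DICTIONARY[metric_name].calculate(data)
```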

A single point-in-time snapshot is easy to misread because it offers no baseline or trend for context.

How to avoid it:

Use pre/post comparisons, cohort analysis, and benchmark views wherever possible.
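Cohort analysis can start very simply: group learners by when they completed the program and compare a post-training KPI across those groups. This sketch assumes each record carries a completion date and a single KPI value. The record layout is hypothetical.

```python
from collections import defaultdict
from datetime import date


def cohort_averages(records):
    """Group learners by completion month and average a post-training KPI per cohort."""
    totals = defaultdict(lambda: [0.0, 0])  # cohort -> [running sum, count]
    for record in records:
        cohort = record["completed_on"].strftime("%Y-%m")
        totals[cohort][0] += record["kpi"]
        totals[cohort][1] += 1
    return {cohort: total / count for cohort, (total, count) in sorted(totals.items())}


# Hypothetical records: completion date and a post-training quality score.
records = [
    {"completed_on": date(2024, 1, 10), "kpi": 80.0},
    {"completed_on": date(2024, 1, 25), "kpi": 90.0},
    {"completed_on": date(2024, 2, 5), "kpi": 92.0},
]
by_cohort = cohort_averages(records)
```

Comparing cohorts over time shows whether later groups, who may have received an improved version of the program, perform differently from earlier ones.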

A dashboard without refresh rules, ownership, or validation processes quickly becomes stale or disputed.

How to avoid it:

Define:

If you want a training dashboard that leadership actually trusts and uses, follow these practical steps:

Start with one priority use case.

Focus on one strategic program such as onboarding, compliance, sales enablement, or leadership development before scaling.

Build a KPI map before building visuals.

Define business goals, learning outcomes, measures, data sources, and owners in one document.

Validate definitions with stakeholders early.

Align L&D, HR, operations, and executives on what each KPI means before publishing anything.

Pilot with a limited audience.

Test the dashboard with a small group of leaders and managers to refine usability, relevance, and trust.

Review and iterate quarterly.

Business priorities change. Your training dashboard should evolve with them.
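The KPI map from the second step can be kept as structured rows, which makes it easy to check for gaps before any visuals are built. The sketch below is a hypothetical layout using the five fields named in that step. The sample rows are invented for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class KpiMapRow:
    business_goal: str
    learning_outcome: str
    measure: str
    data_source: str
    owner: str


def missing_fields(rows):
    """Return (row index, field name) pairs that are still blank."""
    gaps = []
    for index, row in enumerate(rows):
        for field in fields(row):
            if not getattr(row, field.name).strip():
                gaps.append((index, field.name))
    return gaps


kpi_map = [
    KpiMapRow("Faster new-hire ramp-up", "New hires apply core workflows unaided",
              "Days to proficiency", "HRIS + LMS", "Onboarding program owner"),
    KpiMapRow("Fewer compliance incidents", "Staff follow updated procedures",
              "Audit findings per quarter", "", "Compliance lead"),
]
print(missing_fields(kpi_map))  # [(1, 'data_source')]
```

A blank data source or owner is usually the clearest sign that a KPI is not ready to appear on a leadership dashboard.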

Even the best training dashboard can fail if it is presented as a data dump. Leadership responds to a clear narrative tied to business decisions.

When presenting the dashboard, structure the discussion in this order:

A practical example:

That is a leadership story. It uses dashboard data to support decisions.

A training dashboard is not a one-time project. It is an operating tool.

To keep it relevant:

The highest-performing organizations treat dashboards as products: governed, iterated, and aligned to changing business needs.

Building a reliable training dashboard manually is possible—but it is often slow, fragmented, and difficult to scale. Data lives across LMS platforms, HRIS tools, survey systems, and operational databases. Definitions drift. Refresh cycles break. Stakeholders question the numbers.

That is why many teams move beyond manual reporting.

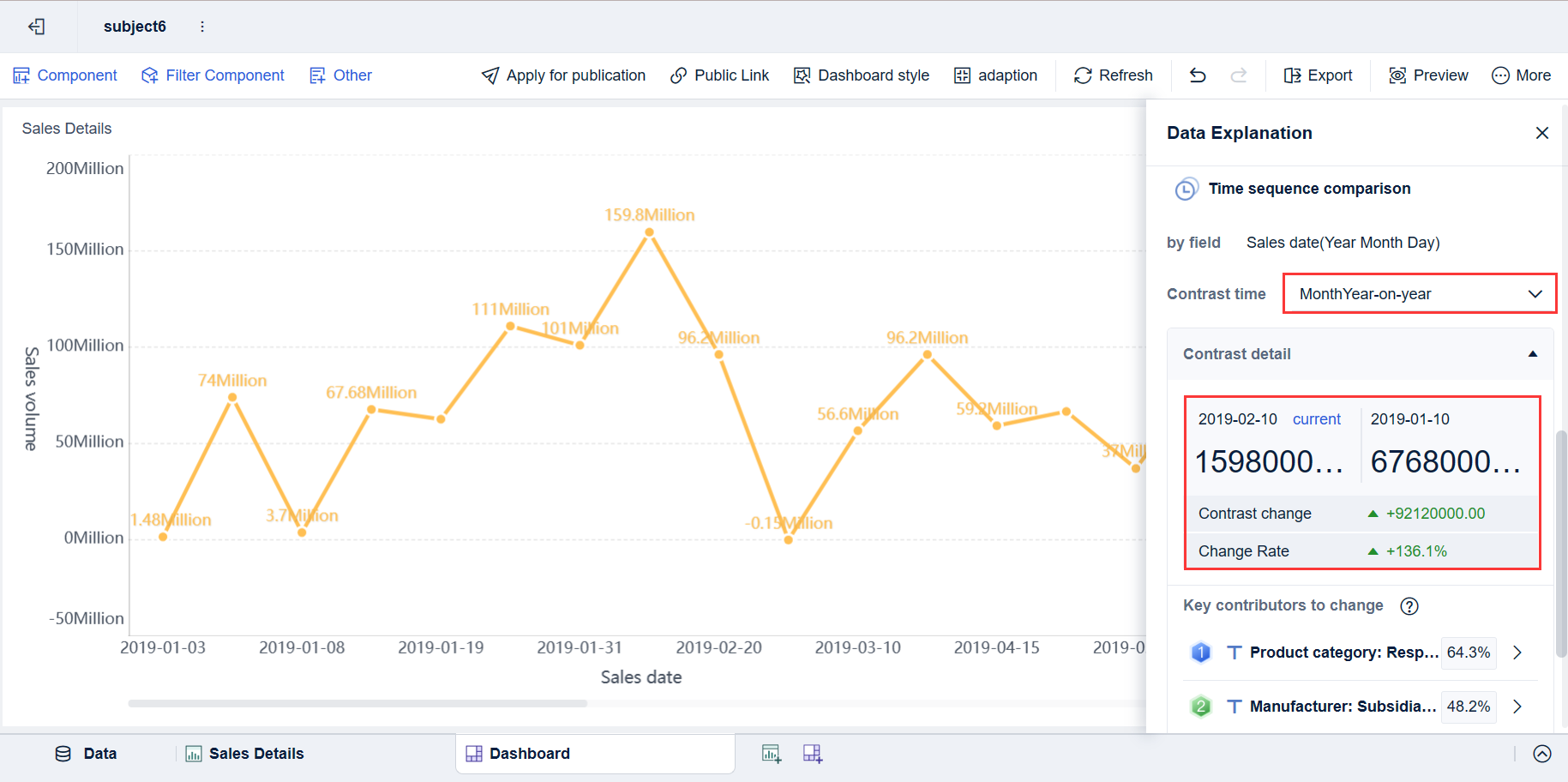

Building this manually is complex. With FineBI, you can start from ready-made templates and automate this entire workflow. FineBI helps teams connect cross-system training data, standardize KPIs, create role-based dashboard views, and deliver interactive analysis without rebuilding reports every cycle.

With FineBI, you can:

If leadership expects learning data that is credible, fast, and decision-ready, a modern BI approach is the practical next step. FineBI gives you the structure and automation to turn your training dashboard from a reporting asset into a business performance tool.

A strong training dashboard should connect participation and completion data to capability growth, behavior change, and business KPIs. Leadership needs to see whether training improved outcomes like productivity, quality, compliance, retention, or revenue.

Basic reports show activity such as enrollments, completions, and satisfaction scores. A training dashboard built for leadership goes further by showing whether learning changed performance and supported business goals.

Executive views usually focus on participation rate, completion rate, assessment pass rate, skill progression, time to proficiency, and business outcome lift. The best KPI set depends on the program's stated goal and the business metric it is meant to influence.

Start by defining the business objective, target learner group, expected behavior change, and the KPI you want to improve before building the dashboard. Then compare post-training results against the pre-training baseline, and where possible against a comparable untrained group, to see whether trained groups show measurable improvement.

Training dashboards are most useful for executives, L&D leaders, HR partners, line managers, and operations leaders. Each audience should have a tailored view so they can quickly act on the metrics that matter to their decisions.

The Author

Yida Yin

FanRuan Industry Solutions Expert
