Data quality monitoring tools continuously track, detect, and alert you to issues in your data—such as errors, inconsistencies, or anomalies—to ensure accuracy, reliability, and trustworthiness.

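Here are the top 10 data quality monitoring tools you should know in 2025:

1. FineDataLink by FanRuan
2. DQLabs
3. Talend Data Quality
4. Informatica Data Quality
5. Ataccama ONE
6. IBM InfoSphere QualityStage
7. Collibra Data Quality & Observability
8. SAS Data Quality
9. Bigeye
10. Great Expectations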

You can expect advanced features like AI-driven insights, real-time monitoring, seamless integration, and easy scalability from these data quality monitoring tools. Selecting the right platform helps you maintain accurate data and supports smarter business decisions.

You rely on accurate data to make smart decisions every day. Data quality monitoring tools help you avoid costly mistakes by improving data integrity and standardization.

You face strict rules in many industries. Data quality monitoring helps you meet these requirements by identifying, assessing, and reducing data-related risks. These tools perform data profiling, auditing, and lineage tracking, which support your data governance efforts and lower the chance of problems.

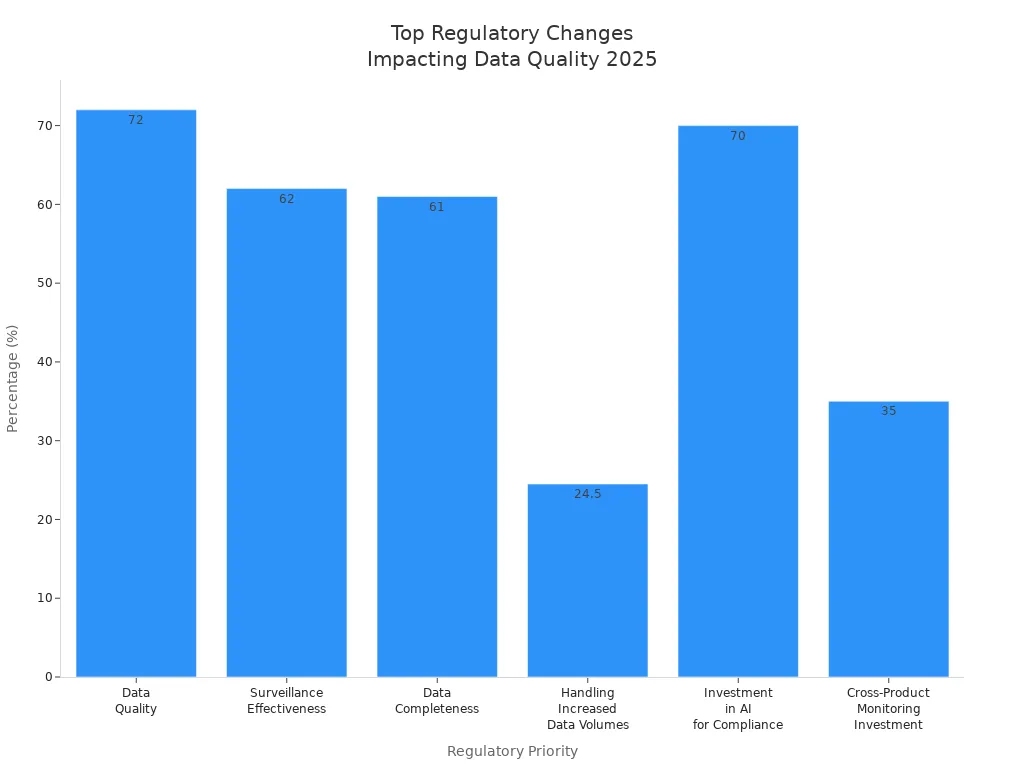

Regulations such as HIPAA, GDPR, and SOX require you to keep data accurate, complete, and consistent. In 2025, compliance leaders are placing more emphasis on data quality and surveillance effectiveness, and the accompanying chart shows how important these priorities have become.

You want your organization to stay ahead. Data quality monitoring supports innovation by making sure your data is accurate and up to date. AI-driven tools can spot errors, detect changes in data patterns, and keep your models reliable. This means you can trust your AI results and adapt quickly when things change.

Here is how AI-driven data quality monitoring helps you:

| Role of AI-driven Data Quality Monitoring Tools | Description |
|---|---|
| Ensures data accuracy | Finds mistakes that could lead to bad predictions and costly errors. |
| Detects data drift | Spots changes in data over time, so you can respond fast. |
| Enhances model reliability | Keeps your AI results consistent and trustworthy. |
| Supports compliance | Helps you follow data rules and avoid risks. |
| Utilizes automated tools | Saves time and lets you focus on bigger goals. |

You can use these tools to drive new ideas and keep your business moving forward.
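To make the "Detects data drift" idea more concrete, here is a minimal sketch of one common drift statistic, the Population Stability Index (PSI), in plain Python. It is not taken from any tool in this article; the bin count, the small epsilon used for empty bins, and the 0.25 alert threshold are illustrative assumptions (0.25 is a widely used rule of thumb, not a standard).

```python
import math

def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a new sample.

    Bin edges come from the baseline's min/max. A small epsilon replaces empty
    bin shares so the logarithm is always defined.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the first/last bin.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

baseline = [float(x % 100) for x in range(1000)]      # values 0..99, evenly spread
shifted = [float(x % 100) + 30 for x in range(1000)]  # same shape, shifted up by 30

print(round(psi(baseline, baseline), 4))  # 0.0 (identical distributions)
print(psi(baseline, shifted) > 0.25)      # True (flagged as significant drift)
```

In practice you would compute the baseline once from a trusted period and recompute PSI on each new batch, alerting when it crosses your chosen threshold.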

You need to spot data issues as soon as they happen. Real-time monitoring lets you track and analyze your data the moment it is created. This means you can catch problems right away and fix them before they affect your business. When you use real-time monitoring, you make decisions based on the most current and accurate information.

Automated alerts help you stay on top of your data without checking it all the time. These alerts notify you when something goes wrong, like missing or incorrect data. In health records, for example, automated alerts increased data completeness from 11.9% to 26.7%. You can use alerts to improve your data entry and keep your records accurate.

Tip: Set up alerts for your most important data fields to catch errors early.
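The tip above can be sketched as a completeness check on a few critical fields. This is a minimal plain-Python illustration; `FieldRule`, `check_completeness`, and the sample health-record fields are hypothetical names, and in practice the alert would be routed to email, chat, or an incident tool rather than printed.

```python
from dataclasses import dataclass

@dataclass
class FieldRule:
    field: str
    min_completeness: float  # fraction of records that must have a non-empty value

def check_completeness(records, rules):
    """Return alert messages for fields whose completeness falls below the threshold."""
    alerts = []
    total = len(records)
    for rule in rules:
        filled = sum(1 for r in records if r.get(rule.field) not in (None, ""))
        ratio = filled / total if total else 0.0  # an empty batch counts as 0% complete
        if ratio < rule.min_completeness:
            alerts.append(
                f"ALERT {rule.field}: {ratio:.0%} complete (minimum {rule.min_completeness:.0%})"
            )
    return alerts

# Hypothetical records and thresholds for two critical fields.
records = [
    {"patient_id": "P1", "diagnosis_code": "E11.9"},
    {"patient_id": "P2", "diagnosis_code": ""},
    {"patient_id": "P3", "diagnosis_code": None},
    {"patient_id": "P4", "diagnosis_code": "I10"},
]
rules = [FieldRule("patient_id", 1.0), FieldRule("diagnosis_code", 0.9)]

for message in check_completeness(records, rules):
    print(message)  # ALERT diagnosis_code: 50% complete (minimum 90%)
```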

You want your data quality tools to work with all your systems. Integration capabilities let you connect data warehouses, ETL pipelines, transformation tools, and BI platforms. This table shows how integration supports your workflow:

| Integration Capabilities | Description |
|---|---|
| Data Warehouses | Works with existing data warehouses to ensure data quality across all sources. |
| ETL Pipelines | Integrates with ETL processes to maintain data quality during extraction, transformation, and loading. |
| Transformation Tools | Compatible with tools like dbt for seamless data transformation and quality checks. |
| BI Platforms | Ensures data quality is maintained in business intelligence reporting and analytics. |

Integration helps you apply data quality rules everywhere, not just in one place.
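As a rough sketch of what applying data quality rules inside an ETL pipeline can look like, the plain-Python example below validates each row after transformation and quarantines failures instead of loading them. The sample rows, rules, and function names are hypothetical; a real pipeline would read from and write to actual source and warehouse systems.

```python
# Hypothetical sample rows standing in for a source system.
SOURCE_ROWS = [
    {"order_id": 1, "amount": "19.99", "currency": "USD"},
    {"order_id": 2, "amount": "-5", "currency": "USD"},
    {"order_id": 3, "amount": "abc", "currency": "EUR"},
    {"order_id": 4, "amount": "42.50", "currency": "usd"},
]
VALID_CURRENCIES = {"USD", "EUR"}

def transform(row):
    """Cast types and normalize values; raises ValueError on unparseable amounts."""
    return {**row, "amount": float(row["amount"]), "currency": row["currency"].upper()}

def validate(row):
    """Return the list of rule violations for one transformed row."""
    errors = []
    if row["amount"] < 0:
        errors.append("amount must be non-negative")
    if row["currency"] not in VALID_CURRENCIES:
        errors.append("unknown currency")
    return errors

def run_pipeline(source_rows):
    """Extract -> transform -> validate -> load, quarantining rows that fail."""
    loaded, quarantined = [], []
    for raw in source_rows:
        try:
            row = transform(raw)
        except ValueError as exc:
            quarantined.append((raw, [f"transform failed: {exc}"]))
            continue
        errors = validate(row)
        if errors:
            quarantined.append((raw, errors))
        else:
            loaded.append(row)  # stand-in for a write to the warehouse
    return loaded, quarantined

loaded, quarantined = run_pipeline(SOURCE_ROWS)
print([r["order_id"] for r in loaded])  # [1, 4]
print([(r["order_id"], e) for r, e in quarantined])
```

Quarantining, rather than silently dropping, keeps failed rows available for review and reprocessing.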

Your business grows, and your data grows with it. You need tools that can handle more data and more users without slowing down. Scalable data quality tools let you add new data sources and users easily. You keep your data quality high, even as your company expands.

AI and machine learning make your data quality monitoring smarter. These features check your data for errors, clean up mistakes, and spot patterns you might miss. Most data professionals say that improving data quality is their top goal. AI-powered tools help you reach that goal faster and with less effort.

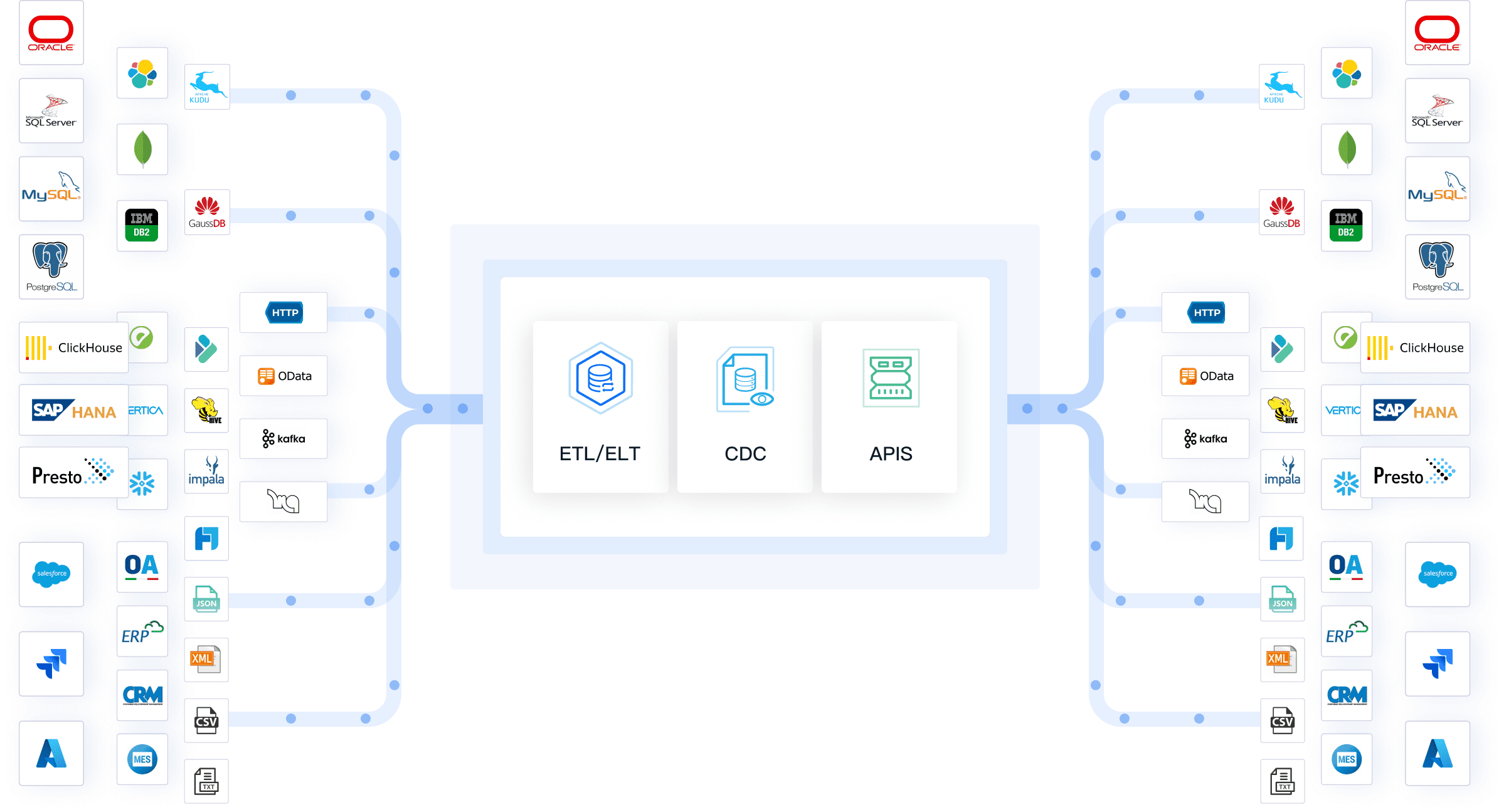

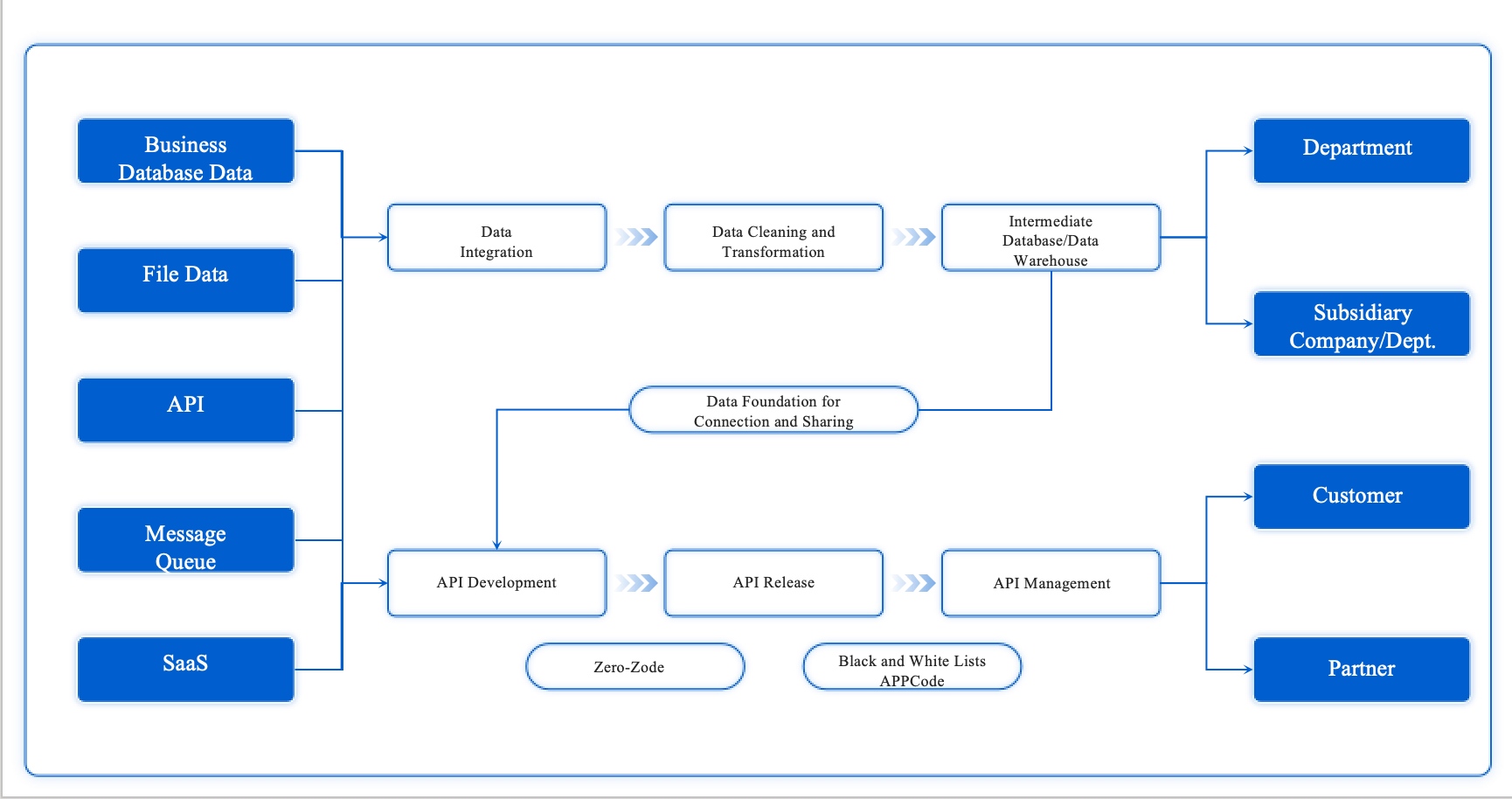

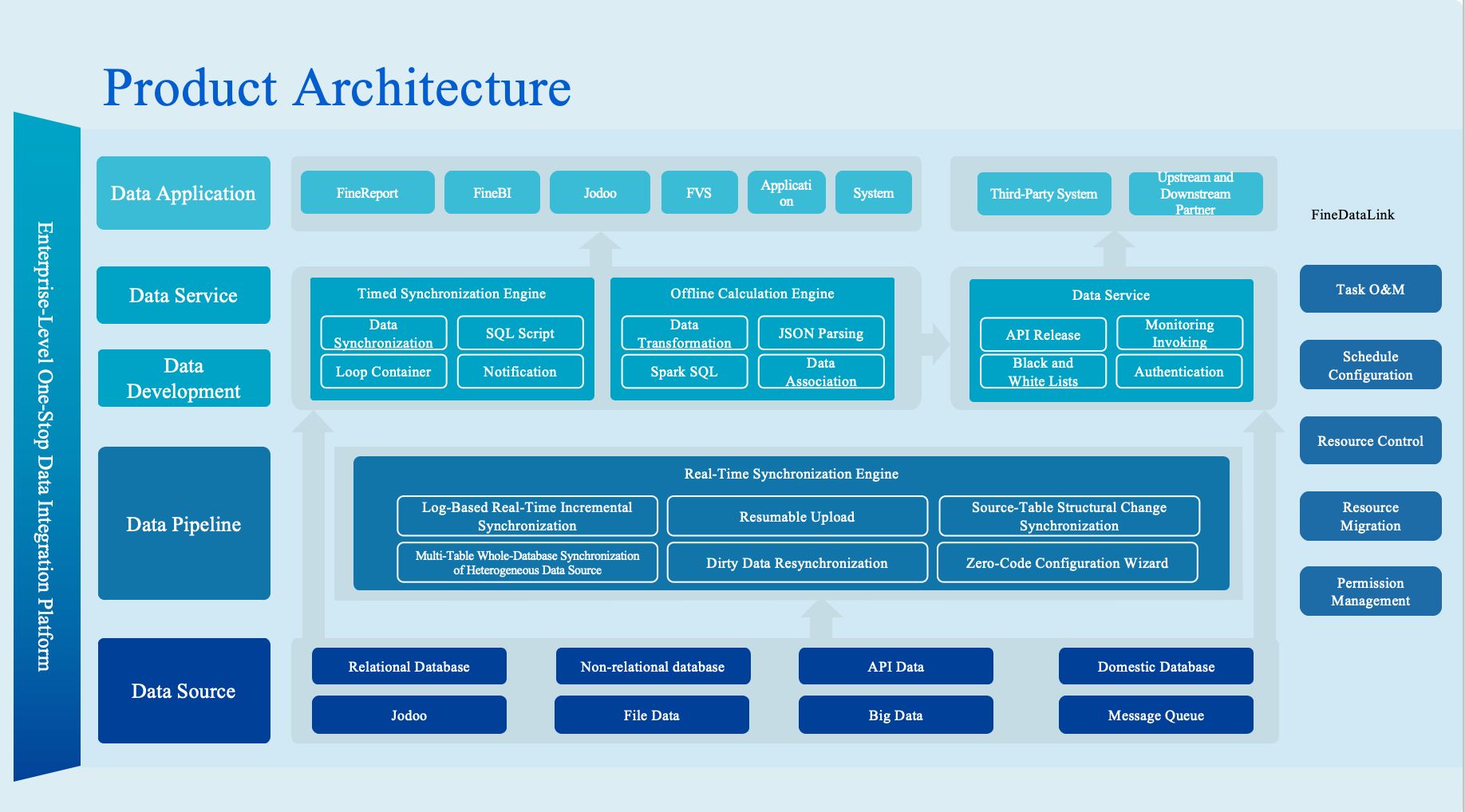

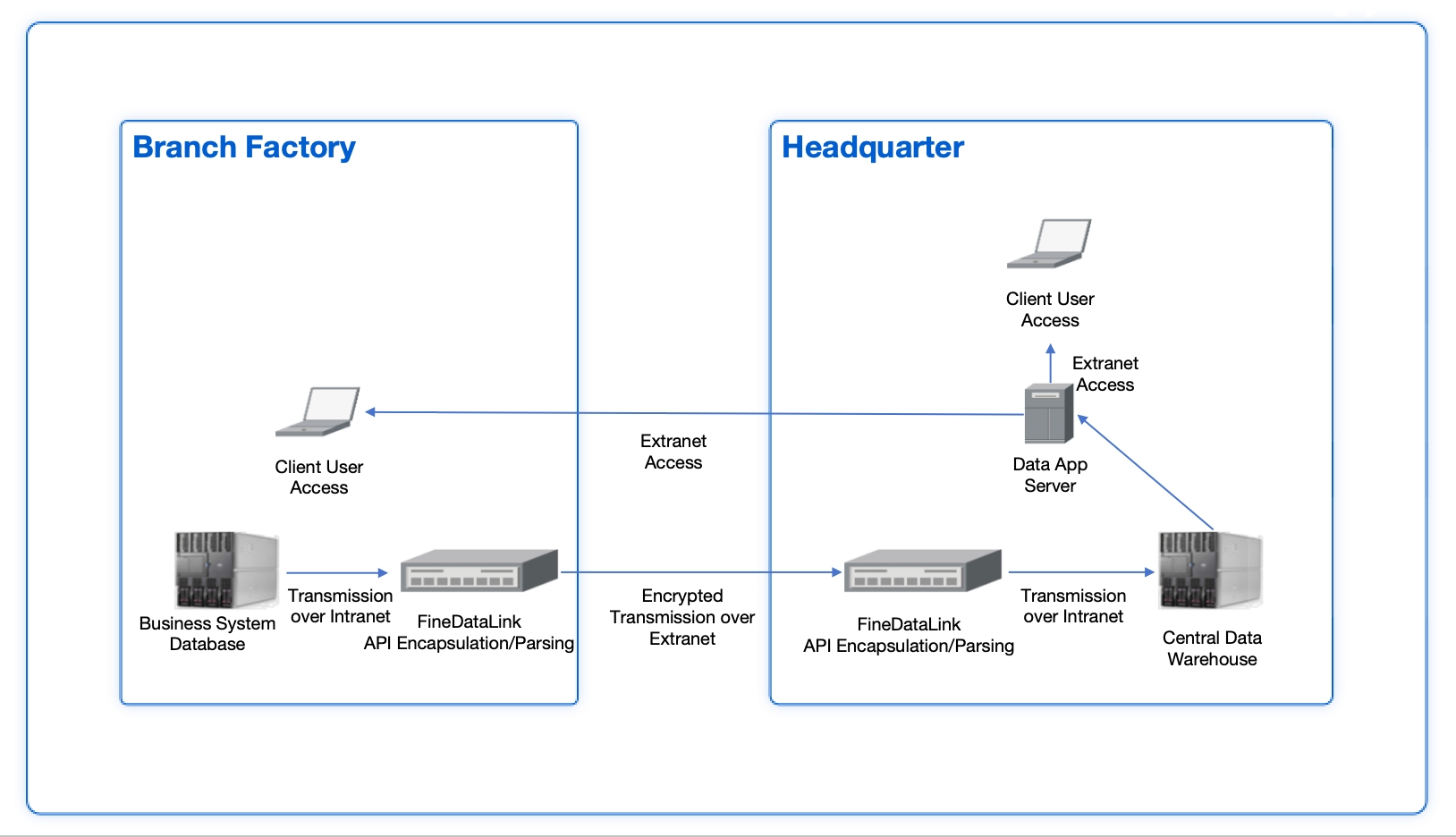

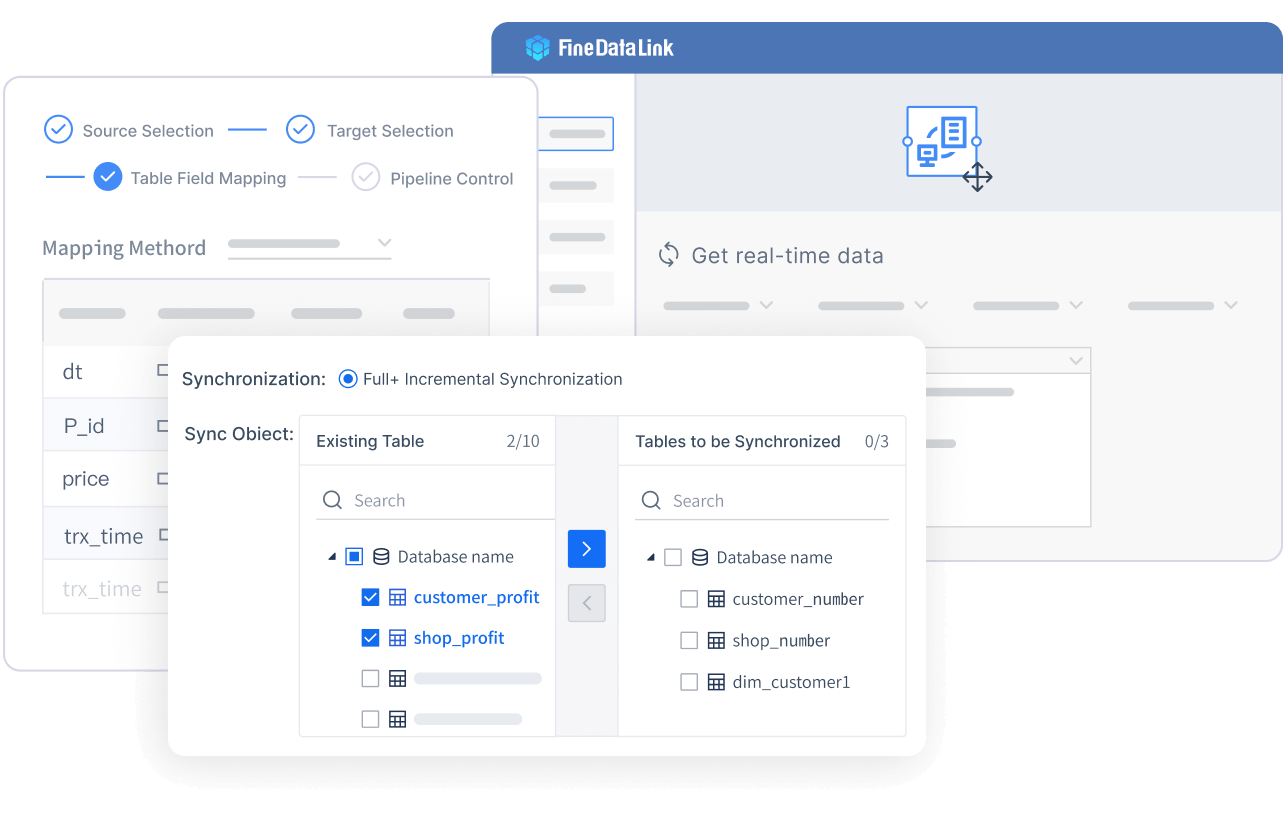

You can use FineDataLink by FanRuan to build a strong foundation for your business intelligence. This platform helps you integrate and transform data from over 100 sources. You get real-time data synchronization, advanced ETL and ELT features, and a low-code interface. The drag-and-drop design makes it easy for you to set up data pipelines and automate workflows.

FineDataLink supports both database migration and real-time data warehouse construction. You can launch APIs in minutes without writing code, which helps you connect different systems quickly. The platform reduces manual work and improves data quality by automating data integration and monitoring. FineDataLink is a cost-effective choice for companies that want to break down data silos and manage data efficiently.

Website: https://www.fanruan.com/en/blog/data-warehouse-solutions

Tip: FineDataLink offers a free trial, so you can test its features before making a decision.

DQLabs stands out among data quality monitoring tools for its ability to operationalize data observability from day one. You start monitoring data quality metrics immediately after deployment, without writing code. The platform uses machine learning to detect anomalies in real time and sends you alerts about unusual events. DQLabs works across cloud and on-premise systems, so you can monitor thousands of tables at once. You get AI-driven insights and recommendations that help you make better decisions. The platform adapts to different industries and lets you customize rules for your needs.

Website: https://www.dqlabs.ai/

| Unique Selling Point | Description |
|---|---|
| Operationalize Data Observability from Day One | Start monitoring data quality metrics immediately after deployment. |
| Scalable Cloud-Native Deployment | Scale with your data landscape, supporting SaaS or on-premise deployments. |
| Real-Time Anomaly Detection | Detect anomalies from day one and receive real-time alerts. |
| Hybrid and Multi-Cloud Integration | Monitor data across various cloud platforms and on-premise systems. |
| Flexibility Across Industries | Customize with industry-specific rules and terminology. |
| AI-Driven Insights and Recommendations | Get intelligent insights and recommendations for better decisions. |
| Comprehensive Monitoring Capabilities | Identify and resolve data issues effectively. |
| Significant Business Benefits | Reduce data downtime and improve data trust. |

DQLabs was recognized as a High Performer in the Summer 2025 Data Observability Grid® Report. Users report high satisfaction with its effectiveness, and its market presence is growing.
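DQLabs' machine-learning models are proprietary, so the sketch below does not reproduce them. It shows a much simpler statistical baseline for the same idea: flag a day whose row count deviates strongly from the trailing week. The window size, the 3-standard-deviation threshold, and the sample counts are assumptions for illustration.

```python
import statistics

def detect_anomalies(values, window=7, threshold=3.0):
    """Return indexes whose value lies more than `threshold` standard deviations
    from the mean of the preceding `window` values (a trailing z-score)."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mean = statistics.mean(history)
        stdev = statistics.stdev(history)
        if stdev == 0:
            # A perfectly flat history: any change at all is unusual.
            if values[i] != mean:
                anomalies.append(i)
            continue
        if abs(values[i] - mean) / stdev > threshold:
            anomalies.append(i)
    return anomalies

# Hypothetical daily row counts for one table; day 8 loaded only 120 rows.
daily_rows = [1000, 1020, 990, 1010, 1005, 995, 1015, 1008, 120, 1012]
print(detect_anomalies(daily_rows))  # [8]
```

A production detector would usually also exclude already-flagged points from the baseline and account for weekly seasonality.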

Talend Data Quality gives you a complete suite of data quality tools for profiling, cleansing, and standardizing your data. You can connect to many data sources using built-in connectors, which makes integration flexible and easy. Talend’s cloud-native architecture supports on-premises, cloud, or hybrid environments, so you can scale as your business grows. The platform helps you maintain data accuracy and consistency with automated tools.

Website: https://www.talend.com/products/data-quality/

| Feature | Description |
|---|---|
| Data Integration | Integrate data from multiple sources with various connectors. |
| Cloud-Native | Supports on-premises, cloud, or hybrid environments for scalability. |
| Data Quality | Profile, cleanse, and standardize data to maintain accuracy and consistency. |

You can rely on Talend to keep your data clean and ready for analysis, no matter where it lives.
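Cleansing and standardizing can sound abstract, so here is what a few simple standardization rules might look like in plain Python. This is not Talend's API; the field names, the country alias table, and the rules themselves are illustrative assumptions, and `str.title()` is deliberately crude (it turns "McDonald" into "Mcdonald").

```python
import re

# Hypothetical alias table mapping free-text country values to ISO 3166 codes.
COUNTRY_ALIASES = {"usa": "US", "united states": "US", "u.s.": "US",
                   "uk": "GB", "united kingdom": "GB"}

def standardize(record):
    """Return a cleansed copy of a customer record using simple, illustrative rules."""
    name = " ".join(record.get("name", "").split()).title()  # collapse spaces, title-case
    email = record.get("email", "").strip().lower()          # emails compared case-insensitively
    phone = re.sub(r"\D", "", record.get("phone", ""))       # keep digits only
    country_raw = record.get("country", "").strip().lower()
    country = COUNTRY_ALIASES.get(country_raw, country_raw.upper())
    return {"name": name, "email": email, "phone": phone, "country": country}

print(standardize({"name": "  jane   DOE ", "email": " Jane.Doe@Example.COM ",
                   "phone": "(555) 010-2030", "country": "United States"}))
# {'name': 'Jane Doe', 'email': 'jane.doe@example.com', 'phone': '5550102030', 'country': 'US'}
```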

Informatica Data Quality helps you manage, monitor, and improve your data across the enterprise. You get tools for profiling, cleansing, matching, and monitoring data in real time. Informatica supports both cloud and on-premise deployments, so you can choose what fits your needs. The platform uses AI and machine learning to automate data quality tasks and reduce manual work. You can set up rules to catch errors and inconsistencies before they affect your business. Informatica also integrates with many data sources and business applications, making it a flexible choice for large organizations.

Website: https://www.informatica.com/products/data-quality.html
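Data profiling, the first capability listed for Informatica, boils down to computing summary statistics for each column. Here is a minimal plain-Python sketch; it is not Informatica's implementation, and treating `None` and empty strings as nulls is an assumption you would adapt to your data.

```python
from collections import Counter

def profile_column(values):
    """Basic profile of one column: row count, nulls, distinct values, top values, range."""
    non_null = [v for v in values if v is not None and v != ""]
    profile = {
        "count": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(set(non_null)),
        "top": Counter(non_null).most_common(2),  # most frequent values with their counts
    }
    numeric = [v for v in non_null if isinstance(v, (int, float))]
    if numeric:
        profile["min"], profile["max"] = min(numeric), max(numeric)
    return profile

print(profile_column([3, 7, None, 7, 12, "", 7]))
```

Comparing these profiles between runs is a cheap way to spot columns that suddenly gain nulls or change range.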

Ataccama ONE is a modern platform that uses AI-driven anomaly detection to improve data quality. You can use its free Data Quality app within Snowflake AI Data Cloud for easy access. The platform embeds data quality functions directly within master data management, so you can handle large data volumes without slowing down. Ataccama ONE offers a modern user interface that works well for both technical and business users. You can deploy it in the cloud, on a private cloud, or manage it yourself.

Website: https://www.ataccama.com/platform

| Feature | Description |
|---|---|
| AI-driven anomaly detection | Reduces manual efforts and improves data quality efficiently. |
| Free Data Quality app | Enhances ease-of-use within Snowflake AI Data Cloud. |
| Flexibility and scalability | Handles large data volumes seamlessly within MDM. |
| Modern user interface | Designed for ease of use across technical and business users. |
| Deployment options | Supports cloud-native, private cloud, and self-managed deployments. |
| Proactive customer support | Offers technical and strategic advisory services. |

Ataccama ONE has helped companies consolidate customer portfolios and establish strong data governance. Reported results include a 348% average ROI, a $12.07M net present value, and payback in under 12 months.
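For context on the ROI figure: ROI figures like this are conventionally defined as net benefits divided by costs, both in present-value terms. Assuming that definition applies here (the underlying methodology is not given in this article), a 348% ROI means discounted benefits are roughly 4.48 times discounted costs:

$$
\mathrm{ROI} = \frac{PV_{\text{benefits}} - PV_{\text{costs}}}{PV_{\text{costs}}} = 3.48
\quad\Longrightarrow\quad
PV_{\text{benefits}} = 4.48 \times PV_{\text{costs}}
$$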

IBM InfoSphere QualityStage focuses on data quality and information governance. You can use it to profile, standardize, and match data with advanced algorithms. The platform supports compliance and regulatory requirements by enforcing information governance policies across your organization. You get cross-organization capabilities that help you meet regulations like GDPR and HIPAA. IBM InfoSphere QualityStage works well for companies that need strong compliance support and want to ensure high-quality data for analytics and reporting.

Website: https://www.ibm.com/products/infosphere-qualitystage
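Record matching is easiest to picture with a small example. The sketch below standardizes names and then scores pairs with Python's standard-library `difflib.SequenceMatcher`. It is a simple stand-in for the far more sophisticated matching algorithms in tools like QualityStage, and the 0.85 threshold and sample records are assumptions.

```python
from difflib import SequenceMatcher

def normalize(name):
    """Standardize before matching: lowercase, drop punctuation, collapse whitespace."""
    cleaned = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(cleaned.split())

def find_matches(records, threshold=0.85):
    """Return (id_a, id_b, score) for record pairs whose normalized names look alike.

    Compares every pair (O(n^2)); real matchers use blocking to limit comparisons.
    """
    pairs = []
    for i in range(len(records)):
        for j in range(i + 1, len(records)):
            a, b = normalize(records[i]["name"]), normalize(records[j]["name"])
            score = SequenceMatcher(None, a, b).ratio()
            if score >= threshold:
                pairs.append((records[i]["id"], records[j]["id"], round(score, 2)))
    return pairs

customers = [
    {"id": 1, "name": "Acme Corp."},
    {"id": 2, "name": "ACME  Corp"},
    {"id": 3, "name": "Acme Corporation"},
    {"id": 4, "name": "Globex Ltd"},
]
print(find_matches(customers))  # [(1, 2, 1.0)]
```

"Acme Corporation" scores 0.72 against "Acme Corp" and is missed at this threshold, which is why production matchers add rules for abbreviations and other domain-specific variants.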

Collibra Data Quality & Observability brings together data quality, governance, and observability in one platform. You can unify your data quality efforts and ensure your data is accurate, complete, and consistent. Collibra automates profiling and rule enforcement, so you catch issues like duplicates and anomalies before they become problems. The platform monitors data pipelines in real time, which helps you detect anomalies quickly and track performance.

Website: https://www.collibra.com/products/data-quality-and-observability

| Advantage | Description |
|---|---|
| Unification of Data Quality | Integrates data quality and observability with governance. |
| Automation Features | Automated profiling and rule enforcement for proactive issue detection. |
| Real-time Monitoring | Continuous monitoring of data pipelines for quick anomaly detection. |

You benefit from a single platform that eliminates the need for multiple tools. This integration boosts productivity and builds confidence in your data.
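One simple signal behind pipeline monitoring is freshness: how long ago each table was last loaded. The sketch below is plain Python, not Collibra's API; the table names, load timestamps, and per-table limits are hypothetical.

```python
from datetime import datetime, timedelta, timezone

def check_freshness(last_loaded, max_age, now=None):
    """Return {table: (is_fresh, lag)} given each table's last successful load time."""
    now = now or datetime.now(timezone.utc)
    report = {}
    for table, loaded_at in last_loaded.items():
        lag = now - loaded_at
        report[table] = (lag <= max_age[table], lag)
    return report

# Fixed "now" so the example is reproducible; hypothetical tables and limits.
now = datetime(2025, 6, 1, 12, 0, tzinfo=timezone.utc)
last_loaded = {
    "orders": now - timedelta(minutes=10),
    "inventory": now - timedelta(hours=7),
}
max_age = {"orders": timedelta(hours=1), "inventory": timedelta(hours=6)}

for table, (fresh, lag) in check_freshness(last_loaded, max_age, now).items():
    print(f"{table}: {'OK' if fresh else 'STALE'} (last load {lag} ago)")
# orders: OK (last load 0:10:00 ago)
# inventory: STALE (last load 7:00:00 ago)
```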

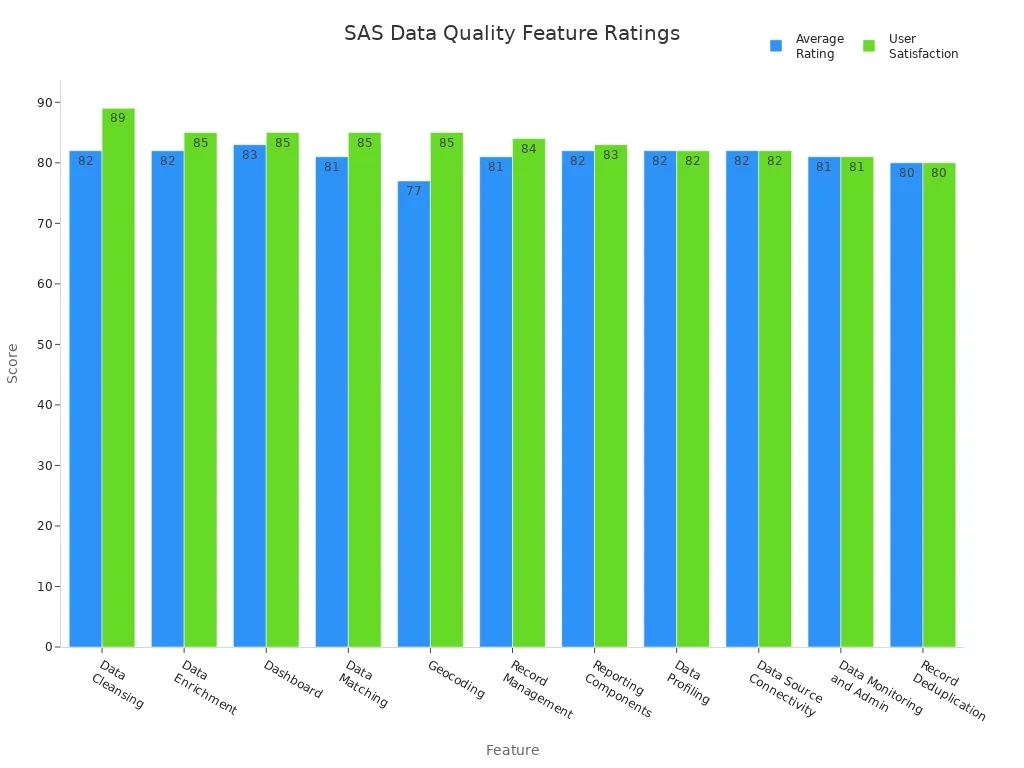

SAS Data Quality gives you a complete framework for managing, cleansing, and enriching your data. You can use dashboards, data matching, geocoding, and record management to keep your data accurate and up to date. The platform supports strong integration and scalability, so you can handle large datasets with ease. Users rate SAS Data Quality highly for its performance, trustworthiness, and efficient service.

Website: https://support.sas.com/en/software/sas-data-quality-support.html

You can rely on SAS Data Quality for end-to-end data management, from profiling to reporting.

Bigeye focuses on real-time monitoring and alerting for your data pipelines. You get tailored incident resolution steps and proactive suggestions for improving your data flows. The platform uses anomaly detection to prioritize alerts based on the importance of your datasets. Bigeye summarizes incidents in plain language, so everyone on your team understands what happened. The system learns from past incidents to improve future recommendations. You can also analyze upstream patterns in your ETL and dbt code to prevent future issues.

Website: https://www.bigeye.com/

| Feature | Description |
|---|---|
| bigAI | Provides tailored incident resolution steps and proactive improvements to data pipelines. |
| Anomaly Detection | Prioritizes alerts for critical datasets. |
| Incident Summarization | Summarizes incidents in plain language for clarity. |
| Historical Context | Uses past incidents to improve future recommendations. |
| Upstream Pattern Analysis | Suggests defensive fixes by analyzing ETL and dbt code patterns. |
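Bigeye's exact prioritization logic is not described here, so the following is only a hypothetical sketch of the general idea: weight each failed check by how important its dataset is, then surface the highest scores first. The importance weights, severity scale, and dataset names are made up for illustration.

```python
from dataclasses import dataclass

@dataclass
class Alert:
    dataset: str
    check: str
    severity: int  # hypothetical scale: 1 = minor, 3 = critical

# Hypothetical importance weights, e.g. based on how many dashboards read each dataset.
DATASET_IMPORTANCE = {"finance.revenue": 5, "marketing.clicks": 2, "sandbox.tmp": 1}

def priority(alert, importance):
    """Score = check severity x dataset importance (unknown datasets default to 1)."""
    return alert.severity * importance.get(alert.dataset, 1)

def prioritize(alerts, importance):
    """Return alerts ordered from most to least urgent."""
    return sorted(alerts, key=lambda a: priority(a, importance), reverse=True)

alerts = [
    Alert("sandbox.tmp", "null_rate", 3),
    Alert("finance.revenue", "row_count", 2),
    Alert("marketing.clicks", "freshness", 3),
]
for a in prioritize(alerts, DATASET_IMPORTANCE):
    print(priority(a, DATASET_IMPORTANCE), a.dataset, a.check)
```

Here a moderate failure on the revenue table (score 10) outranks a critical failure on a sandbox table (score 3).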

Great Expectations is an open-source library that helps you validate, document, and profile your data. You can use it to set clear expectations for your datasets and check if your data meets those standards. The platform makes it easy to document data quality rules, which improves communication among your team members. You can profile your data to understand its structure and quality better.

Website: https://docs.greatexpectations.io/docs/home/

| Use Case | Description |
|---|---|
| Data Validation | Validate datasets to ensure they meet specified criteria. |
| Data Documentation | Document data quality expectations for better team communication. |
| Data Profiling | Profile data to understand its structure and quality. |

You can use Great Expectations to validate data integrity, document quality expectations, and profile data for better understanding.
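To show the idea behind expectations without tying it to a specific Great Expectations release (its API has changed across major versions), here is a plain-Python sketch of an expectation suite. The function names are hypothetical and are not the library's API; each expectation both checks the data and documents the rule through its description.

```python
def not_null(column):
    """Expectation: every row has a value in `column`."""
    def check(rows):
        failed = [i for i, r in enumerate(rows) if r.get(column) is None]
        return {"expectation": f"{column} is not null", "success": not failed, "failed_rows": failed}
    return check

def between(column, low, high):
    """Expectation: non-null values in `column` lie in [low, high]."""
    def check(rows):
        failed = [i for i, r in enumerate(rows)
                  if r.get(column) is not None and not low <= r[column] <= high]
        return {"expectation": f"{column} is between {low} and {high}",
                "success": not failed, "failed_rows": failed}
    return check

def validate(rows, suite):
    """Run every expectation in the suite and summarize the outcome."""
    results = [expectation(rows) for expectation in suite]
    return {"success": all(r["success"] for r in results), "results": results}

suite = [not_null("age"), between("age", 0, 120)]
rows = [{"age": 34}, {"age": None}, {"age": 150}]

report = validate(rows, suite)
print(report["success"])  # False
for result in report["results"]:
    outcome = "pass" if result["success"] else f"fail at rows {result['failed_rows']}"
    print(result["expectation"], "->", outcome)
```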

You have now seen how each of these data quality monitoring tools brings unique strengths to the table. You can choose the right solution based on your needs, whether you want real-time monitoring, AI-driven insights, or strong integration capabilities.

You want data quality assessment tools that deliver more than just basic checks. FineDataLink by FanRuan gives you a low-code platform, real-time integration, and support for over 100 data sources. You can build efficient data pipelines with drag-and-drop simplicity. Many tools now offer automated data quality features, including automated anomaly detection and data validation. These features help you spot errors instantly and keep your data accurate.

Here is a quick look at the main benefits you get from leading data quality assessment tools:

| Benefit | Description |
|---|---|
| Enhanced Compliance | Ensures adherence to regulatory requirements |
| Actionable Insights | Provides valuable data for informed decisions |
| Operational Efficiency | Streamlines data management processes |
| Data Security | Protects sensitive financial information |

You also gain from data cleansing and data enrichment, which improve the value and usability of your datasets.

You can apply data quality assessment tools in many industries. In manufacturing and finance, these tools support advanced analytics, machine learning for risk assessment, and time series analysis. The table below shows how organizations use these tools:

| Application | Percentage of Use | Impact |
|---|---|---|
| Advanced Analytics | 75% | Improved data analysis |
| Machine Learning in Risk Assessment | 22% | Enhanced predictive modeling |
| Time Series Analysis | 45% | Better financial forecasting |

You can also use data quality assessment tools for data validation, data cleansing, and data enrichment in every workflow.

You need tools that stand out for their strengths. FineDataLink by FanRuan offers a user-friendly interface, cost-effectiveness, and real-time integration. You can connect to many data sources and automate data validation and data cleansing. Other top tools provide automated anomaly detection, strong data governance, and seamless integration with BI platforms.

You can rely on data quality assessment tools to keep your data accurate, secure, and ready for analysis.

You want to choose the right data quality management tools for your business. Each tool offers unique features that help you monitor and improve your data. The table below shows how the top data quality management tools compare in their main features:

| Tool Name | Key Features |
|---|---|
| FineDataLink by FanRuan | Real-time synchronization, low-code ETL/ELT, supports 100+ data sources, user-friendly UI |
| Informatica Data Quality | Data profiling, cleansing, monitoring, AI automation |
| Talend Data Quality | Data integration, transformation, profiling, cloud-native |
| Ataccama ONE | AI-powered anomaly detection, modular design, scalability |
| IBM InfoSphere QualityStage | Profiling, cleansing, governance, compliance |
| SAS Data Quality | Advanced analytics, reporting, data enrichment |
| Collibra Data Quality | Unified governance, automated profiling, real-time monitoring |
| Bigeye | Real-time monitoring, incident management, BI integration |
| DQLabs | AI-driven insights, real-time alerts, hybrid integration |
| Great Expectations | Open-source validation, data profiling, documentation |

FineDataLink stands out for its real-time synchronization, low-code ETL/ELT, and support for over 100 data sources. You can build data pipelines quickly and monitor data quality with a visual interface.

You need to know how much data quality management tools cost before you decide. Most enterprise-grade data quality management tools start at $50,000 per year. Some tools cost $75,000 or more, depending on your data volume and number of users. Mid-sized teams can find options from $15,000 to $30,000 per year. Pricing often depends on the features you need and how many systems you want to monitor.

| Pricing Range | Description |
|---|---|
| $15-30K annually | For mid-sized teams with focused needs |
| Starts at $50K+ | Annual cost based on users and data sources |
| Starts at $75K+ | Annual cost for large data volumes |
| Starts at $100K+ | Annual cost for enterprise-wide deployments |

You should always check for free trials or demos. FineDataLink offers a free trial so you can test its features before you buy.

You want data quality management tools that connect with your current systems. Integration is key for smooth data flow and accurate monitoring. The table below shows how some tools handle integration:

| Tool Name | Integration Capabilities | Key Features |
|---|---|---|
| FineDataLink by FanRuan | 100+ data sources, real-time sync, API integration | Low-code, drag-and-drop, ETL/ELT |
| Bigeye | BI platform integration, automated monitoring | Incident management, data rules |
| Collibra Data Quality | Unified platform, real-time pipeline monitoring | Governance, automation |
| Ataccama ONE | Modular, scalable, cloud and on-premise | AI-powered, anomaly detection |

FineDataLink gives you flexible integration. You can monitor data across cloud, on-premise, and hybrid environments. The platform’s low-code interface makes it easy for you to set up connections and automate workflows.

Tip: Choose data quality management tools that match your current and future integration needs. This helps you scale and monitor data quality as your business grows.

You should start by understanding what your organization requires from a data quality monitoring tool. Every business has different priorities. Some focus on accuracy, while others need fast access to data. You can use the table below to help you identify the most important dimensions for your team:

| Dimension | Description |
|---|---|
| Accessibility | The data is available when needed. |
| Accuracy | Data matches the original intent and is precise. |
| Completeness | All required data attributes are present. |
| Coverage | All necessary data records are included. |
| Conformity | Data follows required standards. |
| Consistency | Data uses the same patterns and rules everywhere. |
| Integrity | Data relationships are correct. |
| Timeliness | Data is up to date. |
| Uniqueness | Each record is unique and not duplicated. |

You can use this checklist to compare tools and see which one matches your needs best.
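Several of these dimensions can be turned into simple ratios you can track over time. The plain-Python sketch below scores completeness, uniqueness, conformity, and timeliness for a small table. The sample rows, the deliberately simple email pattern, and the 30-day freshness window are assumptions; each score is the share of rows (or, for uniqueness, distinct keys per row) that pass.

```python
import re
from datetime import date

# Deliberately simple format rule for the conformity check (an assumption, not RFC 5322).
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def dimension_scores(rows, key, today, max_age_days=30):
    """Score a list of dict rows on four quality dimensions (each between 0.0 and 1.0)."""
    n = len(rows)
    return {
        # Completeness: rows with no missing values.
        "completeness": sum(all(v not in (None, "") for v in r.values()) for r in rows) / n,
        # Uniqueness: distinct keys relative to row count.
        "uniqueness": len({r[key] for r in rows}) / n,
        # Conformity: rows whose email matches the required format.
        "conformity": sum(bool(r["email"] and EMAIL_PATTERN.match(r["email"])) for r in rows) / n,
        # Timeliness: rows updated within the allowed window.
        "timeliness": sum((today - r["updated"]).days <= max_age_days for r in rows) / n,
    }

rows = [
    {"id": 1, "email": "a@example.com", "updated": date(2025, 1, 10)},
    {"id": 2, "email": "not-an-email", "updated": date(2025, 1, 5)},
    {"id": 2, "email": "b@example.org", "updated": date(2024, 10, 1)},
    {"id": 3, "email": None, "updated": date(2025, 1, 12)},
]
print(dimension_scores(rows, key="id", today=date(2025, 1, 15)))
# {'completeness': 0.75, 'uniqueness': 0.75, 'conformity': 0.5, 'timeliness': 0.75}
```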

You want a tool that grows with your business. Scalability means the tool can handle more data and users without slowing down. Look for features like parallel processing, resource management, and load balancing. These help you keep performance high as your data grows.
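Parallel processing is one of the scalability features mentioned above. As a minimal sketch (plain Python, not any specific product's engine), you can fan checks for many tables out to a thread pool. The table data and the `null_rate` check are hypothetical; threads help mainly when each check waits on I/O such as a warehouse query, while CPU-heavy checks written in pure Python would need a `ProcessPoolExecutor` to work around the GIL.

```python
from concurrent.futures import ThreadPoolExecutor

def null_rate(table_name, rows, column):
    """Share of rows where `column` is missing (stands in for a warehouse query)."""
    if not rows:
        return table_name, 0.0
    nulls = sum(1 for r in rows if r.get(column) is None)
    return table_name, nulls / len(rows)

def run_checks_in_parallel(tables, column, max_workers=4):
    """Run the same check on every table concurrently and collect results by table."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(null_rate, name, rows, column) for name, rows in tables.items()]
        return dict(f.result() for f in futures)

tables = {
    "orders": [{"customer_id": 1}, {"customer_id": None}],
    "returns": [{"customer_id": 7}],
    "archive": [],
}
print(run_checks_in_parallel(tables, "customer_id"))
# {'orders': 0.5, 'returns': 0.0, 'archive': 0.0}
```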

Flexibility is also important. You need a tool that connects to many data sources and works with your current systems. Check for API connectivity, native integrations, and support for both batch and real-time processing. FineDataLink by FanRuan stands out here. It supports over 100 data sources, offers real-time synchronization, and provides a low-code interface. This makes it a strong choice for manufacturing and enterprise data management, where you often deal with complex and growing data environments.

You should consider your budget before making a decision. Some tools cost more because they offer advanced features or support large teams. Others provide affordable options for smaller businesses. Always look for a free trial or demo to test the tool first.

Support matters, too. Choose a provider that offers clear documentation, training, and responsive customer service. FanRuan provides detailed guides, step-by-step videos, and ongoing support. This helps you get started quickly and solve problems fast.

Tip: Make a list of your must-have features, then compare pricing and support options. This will help you find the best fit for your organization.

You have explored the top data quality monitoring and observability tools for 2025. FineDataLink by FanRuan stands out for its real-time integration and low-code platform. When you compare tools, focus on automated alerts, scalability, and integration options, and choose one that matches your business needs and supports growth. The right tool helps you maintain data accuracy and compliance, monitor data pipelines in real time, reduce manual work through automation, and support analytics and reporting through a user-friendly interface. Try free trials or demos, such as FineDataLink's, to find the best fit for your organization.

Tip: Test several data observability tools before making your final decision.

The Author

Howard

Data Management Engineer & Data Research Expert at FanRuan
