A UAT (user acceptance testing) dashboard gives BI teams one place to control testing progress, data accuracy, defect risk, and stakeholder sign-off before release. For IT managers, BI product owners, analytics leads, and operations directors, this is not a reporting nicety. It is a release-control mechanism.

Without a structured UAT view, teams usually face the same operational failures: test cases scattered across spreadsheets, unresolved data issues hidden in email threads, unclear ownership, and last-minute business objections that delay go-live. A well-designed UAT dashboard solves that by making release readiness visible and measurable.

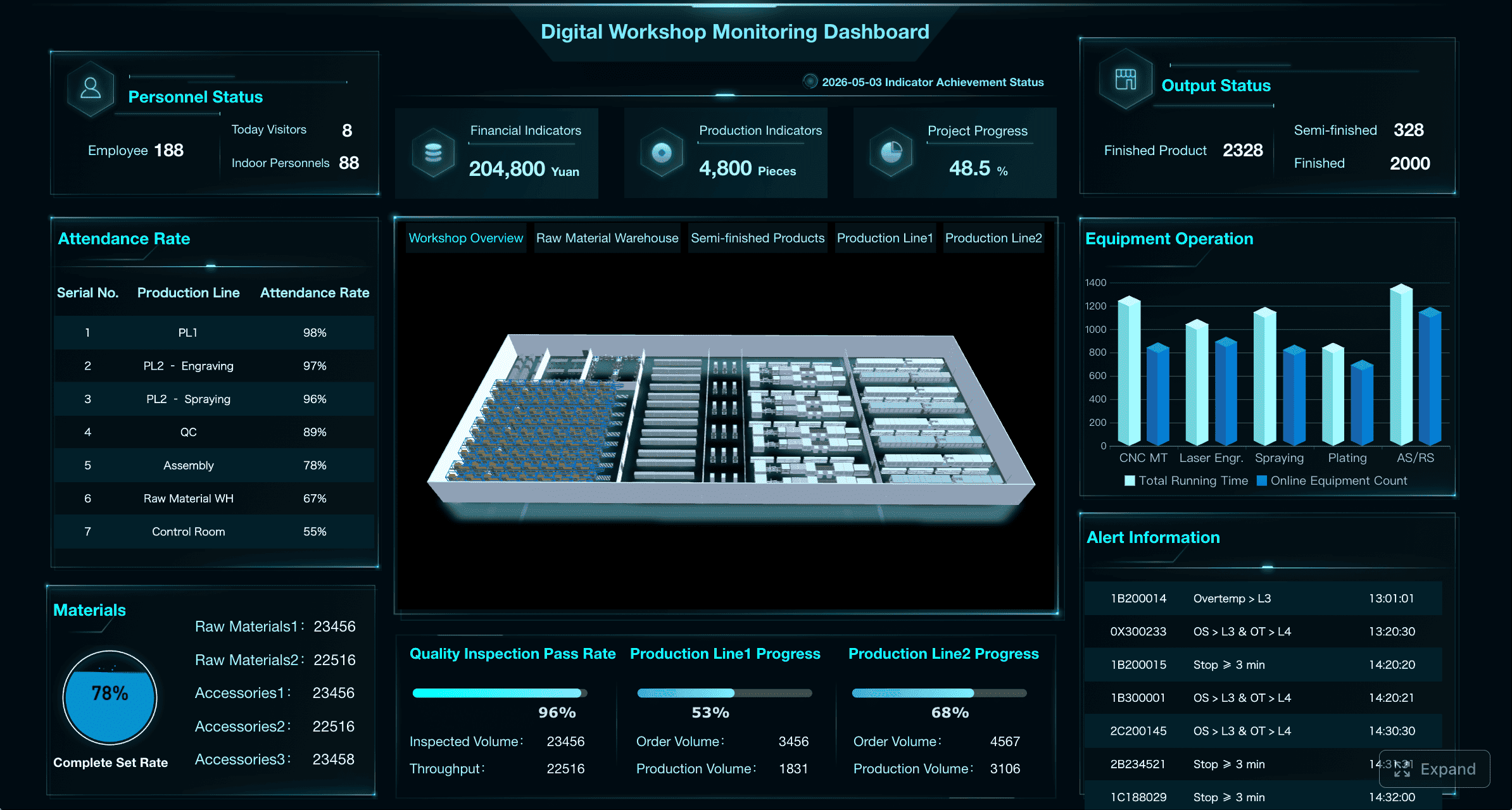

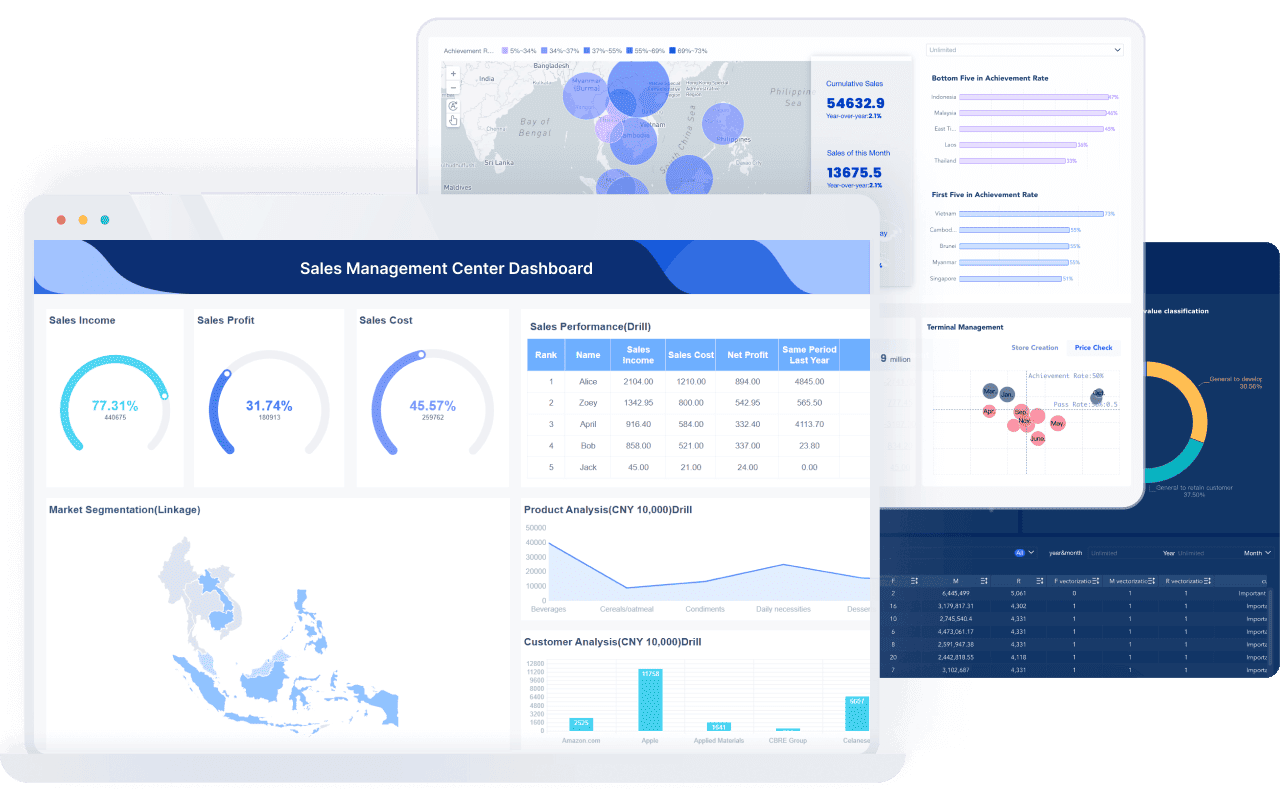

All dashboards in this article were created with FineBI.

A UAT dashboard in a BI project is a centralized view that tracks whether dashboards, reports, metrics, and data flows have been tested and accepted by business users. Its purpose is to help teams answer three critical questions quickly:

In business intelligence programs, this matters because UAT is where technical delivery meets business trust. A report can pass developer QA and still fail in production if users do not trust the numbers, cannot navigate filters correctly, or discover broken drill-down logic during decision-making.

A strong UAT dashboard connects three domains that are often managed separately:

Dashboard created with FineBI

It is also important to separate UAT from adjacent activities that BI teams sometimes blur together:

That distinction matters because enterprise BI failures rarely come from just one layer. A dashboard may be technically functional, numerically correct in sample cases, and still not acceptable for business use because workflow context, usability, or role-specific access was missed.

A useful UAT dashboard should focus on release readiness, not vanity reporting. The goal is to show whether the BI asset is testable, trustworthy, and sign-off ready.

Execution metrics tell you whether UAT is progressing at a pace that supports the project timeline. More importantly, they reveal whether the team is testing broadly enough across the full BI estate.
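As a minimal sketch of how execution metrics might be derived, the snippet below computes execution, pass, and blocked rates from a list of test-case statuses. The record layout, the status names, and the `execution_metrics` helper are illustrative assumptions, not the format of any particular tracker.

```python
from collections import Counter

# Hypothetical test-case statuses exported from a UAT tracker.
test_cases = [
    {"id": "TC-01", "status": "passed"},
    {"id": "TC-02", "status": "failed"},
    {"id": "TC-03", "status": "blocked"},
    {"id": "TC-04", "status": "not_run"},
    {"id": "TC-05", "status": "passed"},
]


def execution_metrics(cases):
    """Return execution, pass, and blocked rates as fractions of 1."""
    counts = Counter(case["status"] for case in cases)
    total = len(cases)
    executed = counts["passed"] + counts["failed"]
    return {
        "execution_rate": executed / total if total else 0.0,
        # Pass rate is measured against executed cases, not all planned cases.
        "pass_rate": counts["passed"] / executed if executed else 0.0,
        "blocked_rate": counts["blocked"] / total if total else 0.0,
    }


metrics = execution_metrics(test_cases)
print(metrics)
```

Measuring pass rate against executed cases rather than planned cases is a design choice: it keeps a slow-moving cycle from looking like a failing one, while the execution rate reports the pace separately.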

Track the following as standard:

This is especially important in complex BI environments where a single dashboard may support multiple user groups. Coverage should not stop at “dashboard tested.” It should show whether key personas and decision flows were validated.

For example, a sales dashboard may need separate scenario coverage for:

A heatmap or matrix works well here because leadership can instantly see under-tested areas without digging through case-level detail.
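One way to feed such a matrix is to count executed scenarios per dashboard and persona, then list the cells with no coverage. The sketch below assumes hypothetical dashboard names, persona names, and an `executed` log of (dashboard, persona) pairs; none of these come from a real project.

```python
# Hypothetical dashboards, personas, and executed scenarios.
dashboards = ["Sales Overview", "Pipeline Detail"]
personas = ["Regional Manager", "Sales Rep", "Finance Analyst"]
executed = [
    ("Sales Overview", "Regional Manager"),
    ("Sales Overview", "Sales Rep"),
    ("Sales Overview", "Regional Manager"),
    ("Pipeline Detail", "Sales Rep"),
]


def coverage_matrix(dashboards, personas, executed):
    """Count executed scenarios for every dashboard/persona cell."""
    matrix = {d: {p: 0 for p in personas} for d in dashboards}
    for dashboard, persona in executed:
        matrix[dashboard][persona] += 1
    return matrix


def untested_cells(matrix):
    """Return (dashboard, persona) pairs with zero executed scenarios."""
    return [
        (dashboard, persona)
        for dashboard, row in matrix.items()
        for persona, count in row.items()
        if count == 0
    ]


matrix = coverage_matrix(dashboards, personas, executed)
gaps = untested_cells(matrix)
print(gaps)
```

The counts in `matrix` can drive the heatmap colors, while `gaps` gives leadership the short list of under-tested combinations.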

Defect metrics in a BI UAT dashboard should show not only the count of issues, but their impact on release confidence.

The most important indicators include:

BI projects require special emphasis on data quality defects, because many failures are not software bugs in the traditional sense. Common high-risk issues include:

Aging matters because old defects usually signal one of three deeper problems: unclear ownership, unresolved business-rule conflict, or a dependency on upstream data engineering. All three can derail release decisions if not surfaced early.
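Defect aging can be surfaced with a simple rule: flag any open defect that is older than a severity-specific limit or that has no owner. The sketch below is illustrative only; the `MAX_AGE_DAYS` limits, the field names, and the sample defects are assumptions that a team would replace with its own values.

```python
from datetime import date

# Hypothetical open defects; "owner" is None when nobody has picked it up.
defects = [
    {"id": "D-101", "severity": "critical", "opened": date(2024, 3, 1), "owner": "data-eng"},
    {"id": "D-102", "severity": "low", "opened": date(2024, 3, 10), "owner": None},
    {"id": "D-103", "severity": "high", "opened": date(2024, 3, 12), "owner": "bi-dev"},
]

# Assumed aging limits in days per severity; tune these to your own context.
MAX_AGE_DAYS = {"critical": 2, "high": 5, "medium": 10, "low": 20}


def aged_defects(defects, as_of):
    """Flag defects that exceed their age limit or have no owner."""
    flagged = []
    for defect in defects:
        age = (as_of - defect["opened"]).days
        unowned = defect["owner"] is None
        if age > MAX_AGE_DAYS[defect["severity"]] or unowned:
            flagged.append({"id": defect["id"], "age_days": age, "unowned": unowned})
    return flagged


report = aged_defects(defects, as_of=date(2024, 3, 15))
print(report)
```

Including unowned defects in the same report reflects the point above: age is often a symptom, and missing ownership is one of the causes worth exposing directly.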

Many BI teams stop at test execution and defect counts. That is not enough. UAT exists to validate business acceptance, so the dashboard must also measure readiness and adoption signals.

Include metrics such as:

These indicators help answer the executive question behind every UAT cycle: Can the business safely rely on this dashboard after release?

If participation is low, sign-off is incomplete, or acceptance criteria remain unverified, a high pass rate can be misleading. A dashboard that has been tested mostly by technical users is not the same as a dashboard accepted by the people who will use it to make decisions.
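To illustrate why a headline pass rate can mislead, the sketch below splits results by tester role and reports business participation next to the overall figure. The `role` field, the tester names, and the invited roster are hypothetical.

```python
# Hypothetical UAT results; "role" separates business testers from technical ones.
results = [
    {"case": "TC-01", "tester": "dev_kim", "role": "technical", "passed": True},
    {"case": "TC-02", "tester": "dev_kim", "role": "technical", "passed": True},
    {"case": "TC-03", "tester": "qa_omar", "role": "technical", "passed": True},
    {"case": "TC-04", "tester": "ana", "role": "business", "passed": False},
]
# Business users who were asked to take part in UAT (assumed roster).
invited_business_testers = {"ana", "raj", "li"}


def pass_rate(rows):
    """Share of rows that passed; 0.0 when there are no rows."""
    return sum(r["passed"] for r in rows) / len(rows) if rows else 0.0


def readiness_signals(results, invited):
    """Compare the overall pass rate with business-only results and participation."""
    business = [r for r in results if r["role"] == "business"]
    active = {r["tester"] for r in business} & invited
    return {
        "overall_pass_rate": pass_rate(results),
        "business_pass_rate": pass_rate(business),
        "business_participation": len(active) / len(invited) if invited else 0.0,
    }


signals = readiness_signals(results, invited_business_testers)
print(signals)
```

In this sample the overall pass rate is 75 percent, yet the only business-run case failed and just one of three invited business users took part, which is the gap the paragraph above warns about.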

A successful UAT dashboard depends on a disciplined workflow behind it. If the process is informal, the dashboard becomes a passive status board. If the process is structured, the dashboard becomes an active control system.

Start by defining the full UAT lifecycle before building metrics. In most BI environments, the workflow should include:

Each stage needs clear ownership and measurable conditions.

Entry criteria should confirm the BI asset is stable enough for business testing. Typical requirements include:

Test cycles should be structured rather than ad hoc. For example:

Exit criteria should be equally explicit:

Responsibilities should also be mapped clearly across delivery roles:

A practical cadence for enterprise BI programs pairs a weekly steering review with a more frequent operational checkpoint, often daily during active UAT.

The most effective BI UAT does not test every visual element in isolation. It tests whether users can make the decisions the dashboard was built to support.

That means scenarios should be based on actual use cases, such as:

This approach improves efficiency and makes testing more meaningful for business stakeholders.

Prioritize scenarios around the highest-risk areas:

A seasoned consultant will also validate three layers together rather than separately:

That combination is critical. A number may be correct in a data table but misleading in the dashboard because the filter behavior or default view creates the wrong impression.
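A small reconciliation check can make this kind of gap visible. The sketch below compares a KPI total computed from all source rows with the total a user would see under a default filter. The rows, the `status` filter, and the `kpi_total` helper are hypothetical stand-ins for a real source query and dashboard view.

```python
# Hypothetical source rows behind a revenue KPI.
source_rows = [
    {"region": "EMEA", "status": "closed", "revenue": 120.0},
    {"region": "EMEA", "status": "open", "revenue": 30.0},
    {"region": "APAC", "status": "closed", "revenue": 50.0},
]


def kpi_total(rows, filters=None):
    """Sum revenue over rows that match every key/value pair in filters."""
    filters = filters or {}
    return sum(
        row["revenue"]
        for row in rows
        if all(row[key] == value for key, value in filters.items())
    )


# Total as it appears in the underlying data table (no filters applied).
table_total = kpi_total(source_rows)
# Total under an assumed default dashboard view that shows closed deals only.
default_view_total = kpi_total(source_rows, {"status": "closed"})
gap = table_total - default_view_total
print(table_total, default_view_total, gap)
```

Both totals are arithmetically correct. A UAT scenario would record which one the business expects to see on first load and whether the default filter is clearly labeled.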

Evidence capture is what turns UAT from a conversation into a defensible release record. This is essential in regulated industries, high-stakes executive reporting, and any environment where post-release disputes are likely.

A robust approach should log:

Just as important is traceability. Every test case should link back to:

This traceability makes root-cause analysis faster when issues are discovered after release. It also helps teams avoid re-litigating KPI definitions during future dashboard iterations.

For regulated or audit-sensitive BI environments, maintain a document package that includes:

The biggest UAT governance mistake is allowing sign-off to become subjective. Teams need measurable rules so the go/no-go decision is based on defined thresholds, not optimism.

Your UAT dashboard should display release thresholds in a way that leaders can evaluate quickly. Common acceptance conditions include:

A practical threshold model often looks like this:

The exact thresholds vary by business context, but the principle remains constant: acceptance criteria must be visible before UAT starts, not negotiated at the end.
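Thresholds that are agreed before UAT starts can be written down as data and evaluated mechanically. The following sketch assumes three example thresholds and a hypothetical metrics snapshot; the specific limits are placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float
    direction: str  # "min": value must be >= limit; "max": value must be <= limit


# Assumed thresholds for illustration; agree on real values before UAT starts.
THRESHOLDS = [
    Threshold("pass_rate", 0.95, "min"),
    Threshold("open_critical_defects", 0, "max"),
    Threshold("signoff_completion", 1.0, "min"),
]


def evaluate(snapshot, thresholds):
    """Return a go/no-go decision and the list of violated thresholds."""
    failures = []
    for t in thresholds:
        value = snapshot[t.metric]
        ok = value >= t.limit if t.direction == "min" else value <= t.limit
        if not ok:
            failures.append(f"{t.metric}={value} violates {t.direction} {t.limit}")
    return ("GO" if not failures else "NO-GO", failures)


# Hypothetical end-of-cycle snapshot.
snapshot = {"pass_rate": 0.97, "open_critical_defects": 1, "signoff_completion": 1.0}
decision, failures = evaluate(snapshot, THRESHOLDS)
print(decision, failures)
```

Keeping the thresholds in one declared list means the dashboard and the steering review read the same rules, so the go/no-go discussion starts from the violated items rather than from opinion.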

Business rule confirmation is another non-negotiable area. Sign-off should explicitly verify that:

A structured sign-off process removes ambiguity and protects both the delivery team and business sponsors.

At minimum, identify:

Then use a sign-off checklist that covers more than defect count. It should include:

A disciplined sign-off workflow should also document:

This makes the final release discussion far more productive. Instead of asking, “Are we comfortable?” leaders can ask, “Have all release criteria been satisfied or formally excepted?”
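That question can be answered directly from a sign-off checklist if unmet criteria are only allowed through with a recorded exception. The sketch below is one possible shape; the criterion names, the exception fields, and the `release_decision` helper are assumptions.

```python
# Hypothetical sign-off checklist: True means the criterion was satisfied.
criteria = {
    "critical_scenarios_executed": True,
    "kpi_definitions_confirmed": True,
    "no_open_critical_defects": False,
}
# Formally approved exceptions, keyed by the criterion they cover (assumed format).
exceptions = {
    "no_open_critical_defects": {
        "approved_by": "Release sponsor",
        "reason": "Workaround documented",
    },
}


def release_decision(criteria, exceptions):
    """A release is ready when every unmet criterion has a recorded exception."""
    unmet = [name for name, satisfied in criteria.items() if not satisfied]
    excepted = [name for name in unmet if name in exceptions]
    unresolved = [name for name in unmet if name not in exceptions]
    return {"ready": not unresolved, "excepted": excepted, "unresolved": unresolved}


outcome = release_decision(criteria, exceptions)
print(outcome)
```

Listing excepted items separately from satisfied ones keeps the accepted risk visible in the release record instead of folding it into a single green status.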

Complex BI UAT usually breaks down in predictable ways. Knowing those failure modes in advance helps you design the UAT dashboard and operating model to prevent them.

The most common issues include inconsistent test data and unclear ownership. If business users validate against one export while developers compare against another data snapshot, disputes multiply quickly.
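A lightweight guard against this is to record which data snapshot each validation used and flag test cases checked against more than one. The sketch below assumes a hypothetical `snapshot_id` field on each validation record.

```python
from collections import defaultdict

# Hypothetical validation log; snapshot_id identifies the data extract used.
validations = [
    {"test_case": "TC-07", "checked_by": "business", "snapshot_id": "2024-03-14"},
    {"test_case": "TC-07", "checked_by": "developer", "snapshot_id": "2024-03-15"},
    {"test_case": "TC-08", "checked_by": "business", "snapshot_id": "2024-03-15"},
    {"test_case": "TC-08", "checked_by": "developer", "snapshot_id": "2024-03-15"},
]


def snapshot_mismatches(validations):
    """Return test cases that were validated against more than one snapshot."""
    seen = defaultdict(set)
    for record in validations:
        seen[record["test_case"]].add(record["snapshot_id"])
    return {case: sorted(ids) for case, ids in seen.items() if len(ids) > 1}


mismatches = snapshot_mismatches(validations)
print(mismatches)
```

Any test case that appears in `mismatches` should be re-checked against a single agreed snapshot before its result is debated.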

Another recurring problem is late feedback from business users. Many business stakeholders treat UAT as something they will “review later,” which compresses issue discovery into the final days before release.

Fragmented communication is another major risk. Defects may be logged in one tool, screenshots saved in another, and sign-off comments shared over email or chat. When information is spread across systems, no one has a reliable readiness view.

BI-specific edge cases are also frequently missed, especially in:

These issues often surface only after a dashboard is used under real-world conditions, which is exactly why scenario-based UAT is so important.

From a consulting standpoint, a few practices consistently improve BI UAT quality and speed.

1. Start with a pilot dashboard before scaling.

Do not try to industrialize UAT across dozens of assets on day one. Prove the framework on one high-value dashboard, refine the metrics, then standardize.

2. Keep KPIs focused on release decisions.

A UAT dashboard is not a project vanity board. If a metric does not help determine readiness, risk, or ownership, remove it.

3. Review usability alongside accuracy.

Business users care about trust and ease of use together. A technically accurate dashboard that is confusing to navigate will still fail adoption.

4. Time-box defect triage and escalation.

Set a fixed rhythm for issue review. Critical and high-severity defects should never sit untriaged for days.

5. Make business ownership explicit.

Every dashboard, KPI, and subject area should have a business owner who can resolve definition disputes quickly.

The right tooling depends on the maturity of your BI delivery process.

Spreadsheets can work for very small teams or one-off UAT cycles, but they quickly become fragile when you need version control, defect traceability, or multi-stakeholder visibility.

Project trackers are better for workflow discipline and issue management, especially when you need ownership, due dates, and escalation paths. However, they often lack business-friendly visual summaries unless you build separate reporting.

BI-native views are ideal when you want to visualize UAT progress like any other operational process. They allow teams to combine execution metrics, defect data, and sign-off status in one interactive experience.

Lightweight apps can also work if your process is relatively standardized and you need simple forms, evidence capture, and approval routing.

A practical template for dashboard-level testing should include:

For executive reporting, keep the summary simple:

After the first release cycle, evolve the UAT dashboard by reviewing where the process slowed down. Typical improvement opportunities include:

Building this manually is complex. FineBI provides ready-made templates and can automate this workflow.

For enterprise BI teams, the challenge is not understanding what a good UAT dashboard should contain. The challenge is operationalizing it without creating another fragile reporting process on top of your existing delivery work.

FineBI helps by enabling teams to:

That matters because a UAT process only works when the reporting layer is reliable, fast to update, and easy for decision-makers to consume. If teams must manually reconcile test logs, defect tickets, and approval status every day, the process becomes slow and error-prone.

With FineBI, analysts can move from fragmented UAT administration to a repeatable operating model. Start with a pilot dashboard, define your KPI set, map your sign-off process, and use FineBI to turn that framework into a scalable, audit-ready management view.

If your BI release process still depends on disconnected spreadsheets and manual status updates, that is your first improvement opportunity. Build a UAT dashboard that measures what matters, enforces accountability, and gives stakeholders the confidence to sign off with clarity.

A BI UAT dashboard should track test execution, pass and fail rates, blocked cases, coverage by asset or role, open defects by severity, defect aging, stakeholder participation, and sign-off progress. These metrics help teams judge release readiness in one place.

QA reporting focuses on whether the report or dashboard works as designed, while data validation checks whether numbers and logic are correct. A UAT dashboard adds the business perspective by showing whether real users trust the output and are ready to approve release.

The most important KPIs are execution rate, pass rate, blocked rate, open critical defects, defect aging, retest success rate, acceptance criteria completion, and sign-off status. Together, they show whether issues are under control and whether business approval is realistic.

Focus on high-risk business workflows, critical user roles, and the most important calculations instead of testing every possible click path equally. A coverage matrix by dashboard, process, and persona helps teams find gaps without creating unmanageable test volumes.

A BI solution is usually ready for sign-off when critical test scenarios are completed, acceptance criteria are met, major defects are resolved or formally accepted, and required stakeholders have reviewed the results. The dashboard should make those conditions visible before go-live approval.

The Author

Lewis Chou

Senior Data Analyst at FanRuan

Related Articles

How to Build a UAT Dashboard for BI Projects: KPIs, Workflow, and Sign-Off Criteria

A uat dashboard gives BI teams one place to control testing progress, $1, defect risk, and stakeholder sign off before release. For IT managers, BI product owners, analytics leads, and operations directors, this is not a

Eric

Jan 01, 1970

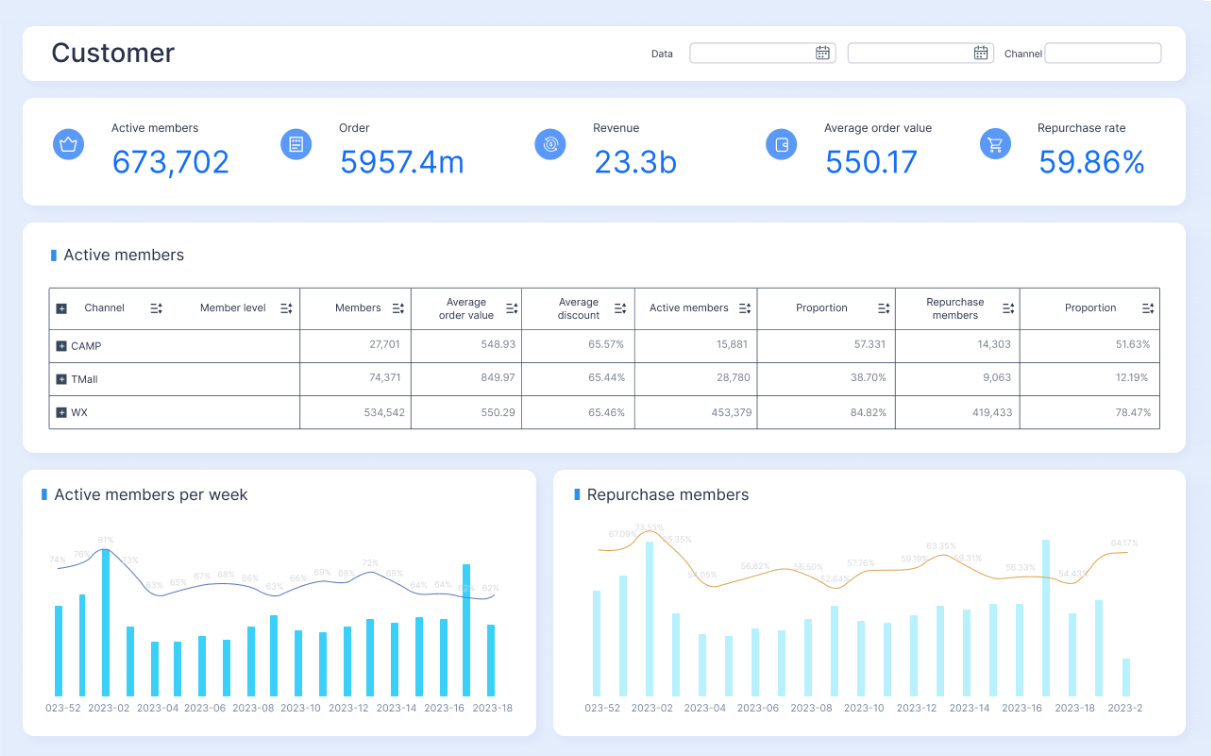

Customer Insights Dashboard: What Enterprise Teams Should Track and Why It Matters

Learn what enterprise teams should track in a customer insights dashboard to centralize data, improve decisions, and drive revenue and retention.

Lewis Chou

May 01, 2026

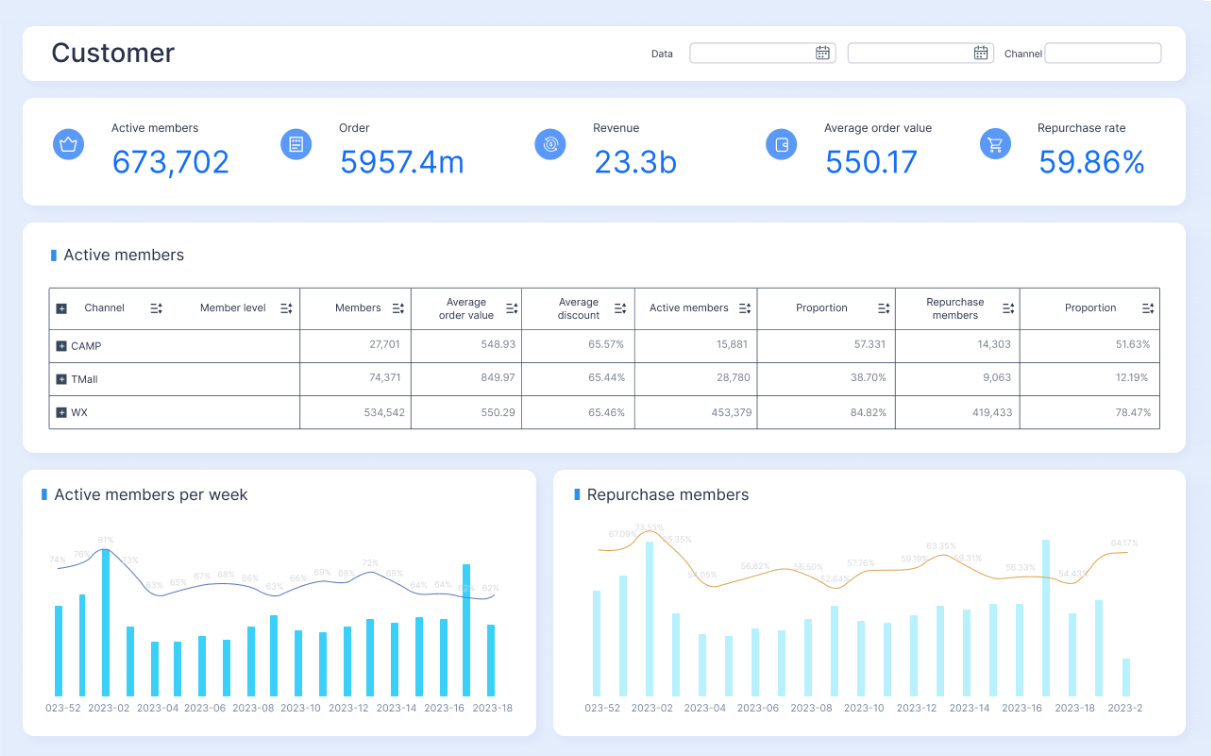

Customer 360 Dashboard: What It Is, What It Tracks, and Why Enterprises Need One

Learn what a Customer 360 Dashboard is, what it tracks across the customer lifecycle, and why enterprises need one for unified data and better decisions.

Lewis Chou

Apr 28, 2026