Overall Equipment Effectiveness is one of the most useful manufacturing metrics when plant leaders need a clear answer to a simple question: how much of planned production time actually became good output. For operations directors, plant managers, maintenance leaders, and continuous improvement teams, that answer matters because hidden losses rarely show up in ERP reports, shift summaries, or top-line throughput alone.

If your line appears busy but output is still disappointing, if one shift always “looks fine” on paper but misses schedule, or if downtime discussions go nowhere because every team defines losses differently, Overall Equipment Effectiveness gives you a structured way to isolate what is really happening. Used correctly, it exposes where capacity disappears: stoppages, speed loss, and defects. Used poorly, it creates false confidence.

Overall Equipment Effectiveness, or OEE, is a manufacturing metric that measures the percentage of planned production time that is truly productive. In plain language, it tells you how much scheduled time was converted into good parts, at the right speed, with the equipment actually running.

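This is why plant leaders rely on it. OEE does not just show whether a machine was on. It shows whether the asset was running when it was scheduled to run, running at the right speed, and producing good parts.

OEE is built from three factors: Availability, Performance, and Quality.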

These three factors matter because they separate different failure modes that often get mixed together in operations reviews. A line can have high uptime but poor OEE because of slow cycles. Another can run fast but waste too much output in scrap or rework. A third may have strong quality but lose hours to changeovers and breakdowns.

When definitions are consistent and data is reliable, Overall Equipment Effectiveness helps uncover:

For plant leadership, this makes OEE valuable not just as a metric, but as an operational language. Maintenance, production, engineering, and quality can all use the same framework to discuss loss.

This is where many organizations get into trouble. OEE is powerful, but it is not a complete operating system.

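OEE can tell you where productivity is being lost through downtime, speed loss, and defects.

OEE cannot tell you by itself whether your product mix, labor plan, schedule, or profitability is optimized.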

A strong OEE score with weak schedule attainment can still be a business problem. Likewise, a lower OEE in a high-mix environment may be operationally acceptable. The metric needs context.

The standard formula is straightforward:

OEE = Availability × Performance × Quality

That simplicity is useful, but it also creates risk. Many misleading OEE results come from incorrect inputs, inconsistent time rules, or mixing units carelessly.
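As a minimal sketch of the formula, the Python function below multiplies the three factors and rejects any factor outside the 0 to 1 range. The function name `oee`, the range check, and the sample factors are illustrative assumptions, not part of any standard library or of a specific plant's rules.

```python
def oee(availability: float, performance: float, quality: float) -> float:
    """Return OEE as a fraction, given three factors expressed as fractions (0 to 1)."""
    factors = (
        ("availability", availability),
        ("performance", performance),
        ("quality", quality),
    )
    for name, value in factors:
        # A factor outside 0..1 usually signals a bad input, not an exceptional shift.
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name}={value} is outside the range 0 to 1")
    return availability * performance * quality


# Hypothetical factors, for illustration only.
print(f"{oee(0.90, 0.95, 0.98):.2%}")  # prints 83.79%
```

Failing loudly on an out-of-range factor is one way to stop incorrect inputs from reaching a dashboard unnoticed.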

To calculate Overall Equipment Effectiveness correctly, you need a tight definition for each input: planned production time, stop time, ideal cycle time, total count, and good count.

The math only works if units are aligned.

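For example, suppose ideal cycle time is recorded in seconds per unit while run time is logged in minutes.

Correct approach: convert run time to seconds (or ideal cycle time to minutes) before calculating Performance.

Common bad approach: divide the seconds-based figure directly by run time in minutes, which overstates Performance by a factor of 60.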

A mathematically correct formula can still produce an operationally useless answer if definitions are weak.
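To make the unit problem concrete, here is a small Python sketch. The run time (370 minutes) and total count (13,200 units) come from the worked example later in this article; the 1.5-second ideal cycle time is inferred from that example's arithmetic, and the function name is hypothetical.

```python
def performance(ideal_cycle_time_s: float, total_count: int, run_time_s: float) -> float:
    """Performance as a fraction. Every time input is expected in seconds."""
    return (ideal_cycle_time_s * total_count) / run_time_s


ideal_cycle_time_s = 1.5   # seconds per unit (inferred, see lead-in)
total_count = 13_200       # units produced
run_time_min = 370         # minutes the line actually ran

# Correct: convert minutes to seconds before dividing.
correct = performance(ideal_cycle_time_s, total_count, run_time_min * 60)

# Common mistake: passing minutes where seconds are expected.
wrong = performance(ideal_cycle_time_s, total_count, run_time_min)

print(f"correct: {correct:.2%}")  # correct: 89.19%
print(f"wrong:   {wrong:.2%}")    # wrong:   5351.35%
```

Putting the unit in the parameter name (`run_time_s`) is a cheap convention that makes this kind of mismatch easier to spot in review.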

Availability answers the question: during the time we planned to produce, how much time did the machine actually run?

Formula:

Availability = Run Time ÷ Planned Production Time

If a line is scheduled for 420 minutes and loses 60 minutes to downtime, then run time is 360 minutes and Availability = 360 ÷ 420 = 0.8571, or 85.71%.

Availability is where breakdowns, changeovers, waiting for material, and other stop events typically show up. The key risk is inconsistency. If one line includes changeovers and another excludes them, comparison becomes meaningless.
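In practice the downtime figure is often the sum of many logged stop events. The sketch below, in Python, assumes a simple stop log of (reason, minutes) pairs; the log entries and reason names are hypothetical and chosen to total the 60 minutes used in the example above.

```python
from collections import defaultdict


def availability(planned_min: float, stops: list[tuple[str, float]]):
    """Return (availability fraction, downtime minutes grouped by reason)."""
    by_reason: dict[str, float] = defaultdict(float)
    for reason, minutes in stops:
        by_reason[reason] += minutes
    downtime = sum(by_reason.values())
    if downtime > planned_min:
        raise ValueError("logged downtime exceeds planned production time")
    run_time = planned_min - downtime
    return run_time / planned_min, dict(by_reason)


# Hypothetical stop log: 60 minutes of stops against a 420-minute plan.
stops = [
    ("breakdown", 25),
    ("changeover", 20),
    ("waiting for material", 10),
    ("breakdown", 5),
]
ratio, by_reason = availability(420, stops)
print(f"{ratio:.2%}")  # prints 85.71%
print(by_reason)
```

Keeping the per-reason totals next to the ratio makes it harder for the same score to hide different stop rules on different lines.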

Performance answers: when the line was running, how close was it to the ideal speed?

Formula:

Performance = (Ideal Cycle Time × Total Count) ÷ Run Time

This captures both slow running and minor stops that do not get coded as downtime. If your line “never stops” but still under-delivers output, Performance usually explains the gap.

The biggest trap here is a bad ideal cycle time. If it is set too generously, meaning the ideal cycle is longer than what the equipment can actually achieve, Performance looks better than reality. If Performance exceeds 100%, your standard is likely wrong.

Quality answers: of everything produced, how much was good the first time, without rework?

Formula:

Quality = Good Count ÷ Total Count

If the line made 10,000 units and 300 were rejected or required rework, then good count is 9,700 and Quality = 9,700 ÷ 10,000 = 0.97, or 97%.

The phrase “first time” matters. If you count reworked parts as good without tracking the original defect, Quality becomes artificially inflated.
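The effect of counting rework as good can be shown with a short Python sketch. The split of the 300 defective units into 120 scrapped and 180 reworked is a hypothetical assumption for illustration, as are the function and parameter names.

```python
def quality(total_count: int, rejected: int, reworked: int,
            count_rework_as_good: bool = False) -> float:
    """Quality as a fraction of total count.

    By default both rejected and reworked units are excluded from good count
    (a first-pass view). Setting count_rework_as_good=True reproduces the
    inflated figure that results when rework is silently treated as good.
    """
    if rejected + reworked > total_count:
        raise ValueError("defects exceed total count")
    defects = rejected if count_rework_as_good else rejected + reworked
    return (total_count - defects) / total_count


# Hypothetical split of 300 defective units: 120 scrapped, 180 reworked.
first_pass = quality(10_000, rejected=120, reworked=180)
inflated = quality(10_000, rejected=120, reworked=180, count_rework_as_good=True)

print(f"first-pass: {first_pass:.2%}")  # first-pass: 97.00%
print(f"inflated:   {inflated:.2%}")    # inflated:   98.80%
```

The same shift reports either 97.00% or 98.80% depending on one counting rule, which is why the rule needs to be written down.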

Let’s walk through a single shift.

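Assume a planned production time of 420 minutes, a run time of 370 minutes (50 minutes of downtime), an ideal cycle time of 1.5 seconds per unit, a total count of 13,200 units, and a good count of 12,800 units.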

Availability = Run Time ÷ Planned Production Time

= 370 ÷ 420

= 0.8810

= 88.10%

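First convert run time into seconds so the units match the ideal cycle time: 370 minutes × 60 = 22,200 seconds. The ideal time for the output produced is ideal cycle time × total count, which is 1.5 seconds × 13,200 units = 19,800 seconds.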

Performance = 19,800 ÷ 22,200

= 0.8919

= 89.19%

Quality = Good Count ÷ Total Count

= 12,800 ÷ 13,200

= 0.9697

= 96.97%

OEE = Availability × Performance × Quality

= 0.8810 × 0.8919 × 0.9697

= 0.7620

= 76.20%

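This result is mathematically correct. But the operational insight is more valuable than the number alone: Availability (88.10%) and Performance (89.19%) account for most of the loss, while Quality (96.97%) is a comparatively small contributor.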

That tells leadership where to focus first. In this case, improving uptime alone will not solve the full problem if speed loss remains chronic.
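The whole shift calculation can be reproduced in a few lines of Python. This is a sketch, not a reference implementation: the class and field names are hypothetical, and the 1.5-second ideal cycle time is inferred from the 19,800-second figure in the Performance step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShiftData:
    planned_min: float     # planned production time, minutes
    run_min: float         # run time, minutes
    ideal_cycle_s: float   # ideal cycle time, seconds per unit
    total_count: int       # all units produced
    good_count: int        # units good the first time

    @property
    def availability(self) -> float:
        return self.run_min / self.planned_min

    @property
    def performance(self) -> float:
        # Convert run time to seconds so it matches the ideal cycle time.
        return self.ideal_cycle_s * self.total_count / (self.run_min * 60)

    @property
    def quality(self) -> float:
        return self.good_count / self.total_count

    @property
    def oee(self) -> float:
        return self.availability * self.performance * self.quality


shift = ShiftData(planned_min=420, run_min=370, ideal_cycle_s=1.5,
                  total_count=13_200, good_count=12_800)

for name in ("availability", "performance", "quality", "oee"):
    print(f"{name:<13}{getattr(shift, name):.2%}")
```

Run as written, this reports 88.10%, 89.19%, 96.97%, and 76.19%. The last figure differs slightly from 76.20% because the code multiplies unrounded factors, while the hand calculation multiplies factors already rounded to four decimals.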

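The most frequent OEE calculation errors are basic but damaging: inconsistent time rules, incorrect ideal cycle times, missing stop reasons, and mixed units such as seconds and minutes.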

These mistakes can make a dashboard look polished while hiding deeply flawed logic.

Most OEE failures are not formula failures. They are governance failures. The formula is easy. The discipline behind it is hard.

If Line A logs changeovers as downtime but Line B excludes them, Availability becomes non-comparable. The same problem occurs across shifts and plants when local teams create their own stop codes or thresholds.
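A short Python sketch shows how far the two rules can diverge for an identical shift. The shift figures are hypothetical, and the sketch assumes that "excluding" changeovers means removing them from planned production time; a site could also exclude them in other ways.

```python
def availability(planned_min: float, breakdown_min: float,
                 changeover_min: float, changeover_is_downtime: bool) -> float:
    """Availability under two different changeover rules."""
    if changeover_is_downtime:
        # Rule A: changeovers stay in planned time and count as stops.
        planned = planned_min
        downtime = breakdown_min + changeover_min
    else:
        # Rule B (assumed): changeovers are removed from planned time entirely.
        planned = planned_min - changeover_min
        downtime = breakdown_min
    return (planned - downtime) / planned


# Same hypothetical shift on both lines: 480 min planned,
# 30 min of breakdowns, 40 min of changeovers.
line_a = availability(480, 30, 40, changeover_is_downtime=True)
line_b = availability(480, 30, 40, changeover_is_downtime=False)

print(f"Line A: {line_a:.2%}")  # Line A: 85.42%
print(f"Line B: {line_b:.2%}")  # Line B: 93.18%
```

Both lines ran for the same 410 minutes, yet the reported Availability differs by almost eight points purely because of the rule.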

This is especially common in multi-site operations where legacy practices remain in place. Leadership sees one normalized OEE figure, but the underlying rules are different.

Performance depends on one critical standard: ideal cycle time. If that standard is too slow, Performance improves on paper without any real operational gain.

This creates one of the most dangerous situations in reporting: a metric that rewards low expectations. When Performance looks healthy despite operator complaints, missed schedules, and chronic minor stops, the ideal rate is often the problem.
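The sketch below, in Python, holds output and run time constant and changes only the ideal cycle time. All three cycle times and their labels are hypothetical, and the "check the standard" flag is just one possible way to surface a Performance value above 100%.

```python
def performance(ideal_cycle_s: float, total_count: int, run_time_s: float) -> float:
    """Performance as a fraction, with all times in seconds."""
    return ideal_cycle_s * total_count / run_time_s


def review(ideal_cycle_s: float, total_count: int, run_time_s: float):
    """Return (performance, note); flag values above 100% as a suspect standard."""
    p = performance(ideal_cycle_s, total_count, run_time_s)
    note = "check the standard" if p > 1.0 else "ok"
    return p, note


run_time_s = 400 * 60    # 400 minutes of run time
total_count = 12_000     # units produced

# Hypothetical ideal cycle times for the same machine and the same output.
standards = {
    "engineering standard": 1.6,
    "padded standard": 1.9,
    "badly padded standard": 2.1,
}

for label, ideal_cycle_s in standards.items():
    p, note = review(ideal_cycle_s, total_count, run_time_s)
    print(f"{label:<22}{p:>8.2%}  {note}")
```

Nothing about the shift changes between rows; Performance moves from 80% to 95% to 105% only because the standard was relaxed.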

Quality is another area where definitions get bent. Teams may:

The result is a Quality score that appears stable while first-pass yield is deteriorating.

Manual production logs are vulnerable to:

Disconnected systems make this worse. The machine count may say one thing, the MES another, and the quality log a third. If timestamps do not reconcile, your Overall Equipment Effectiveness result becomes a rough approximation, not a trusted metric.
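One simple way to catch this is a routine count reconciliation. The Python sketch below compares each source to the median count and flags outliers; the source names, counts, and the 0.5% tolerance are all hypothetical assumptions.

```python
from statistics import median


def reconcile(counts: dict[str, int], tolerance: float = 0.005) -> dict[str, float]:
    """Return sources whose count deviates from the median by more than tolerance."""
    reference = median(counts.values())
    flagged = {}
    for source, count in counts.items():
        deviation = abs(count - reference) / reference
        if deviation > tolerance:
            flagged[source] = deviation
    return flagged


# Hypothetical total counts for the same shift from three systems.
counts = {"machine counter": 13_200, "MES": 13_150, "quality log": 12_900}

for source, deviation in reconcile(counts).items():
    print(f"{source}: {deviation:.1%} away from the median count")
# prints: quality log: 1.9% away from the median count
```

The median is used as the reference here only because no single system is assumed to be authoritative; a plant with a trusted system of record would compare against that instead.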

A dashboard can be visually impressive and still misleading.

A daily average may show decent Performance, while the line actually suffers from frequent five-to-fifteen-second interruptions that operators clear without escalation. Those losses accumulate fast, but aggregated views can hide them.
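A rough Python sketch shows how quickly short interruptions add up. The stop durations, the 60-second threshold for a "minor stop", and the 420-minute shift length are hypothetical assumptions.

```python
def minor_stop_loss(stop_durations_s: list[float], threshold_s: float = 60):
    """Return (number of minor stops, total minutes lost to them)."""
    minor = [d for d in stop_durations_s if d <= threshold_s]
    return len(minor), sum(minor) / 60


# Hypothetical shift: 180 interruptions of 5 to 15 seconds each.
stops_s = [5, 8, 10, 12, 15] * 36

count, lost_min = minor_stop_loss(stops_s)
print(f"{count} minor stops, {lost_min:.0f} minutes lost, "
      f"{lost_min / 420:.1%} of a 420-minute shift")
# prints: 180 minor stops, 30 minutes lost, 7.1% of a 420-minute shift
```

None of these events would individually justify a downtime code, yet together they cost half an hour of a single shift.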

If planned maintenance, extended changeovers, startup losses, or waiting events are excluded without a clear rule, OEE improves artificially. Leaders may believe an asset is performing at an elite level when real capacity is still leaking away.

An OEE score of 78% can come from many different patterns:

Managing by the headline number alone creates bad decisions. Improvement requires loss visibility, not score obsession.
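To illustrate, the Python sketch below builds three hypothetical factor combinations that all round to 78% and reports which factor is the largest loss in each. The numbers and labels are invented for illustration and do not describe any real line.

```python
FACTORS = ("Availability", "Performance", "Quality")

# Hypothetical (availability, performance, quality) combinations.
patterns = {
    "downtime-heavy": (0.80, 0.99, 0.985),
    "speed-heavy": (0.98, 0.81, 0.983),
    "quality-heavy": (0.97, 0.96, 0.838),
}


def dominant_loss(factors: tuple[float, float, float]) -> str:
    """Name the lowest factor, which is the largest single source of loss."""
    return FACTORS[factors.index(min(factors))]


for name, (a, p, q) in patterns.items():
    print(f"{name}: OEE {a * p * q:.0%}, largest loss in {dominant_loss((a, p, q))}")
```

All three rows print an OEE of 78%, but the first points to maintenance and changeovers, the second to speed and minor stops, and the third to defect prevention.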

If you want trustworthy Overall Equipment Effectiveness, you need standardization before automation. This is where many plants reverse the sequence. They deploy dashboards first, then argue over definitions later.

That approach almost guarantees false comparability.

Before benchmarking, define planned production time, downtime, ideal cycle time, good count, and exception handling.

Document these rules and make them mandatory across sites. Without this, cross-line or cross-plant OEE comparisons are not credible.

Daily, weekly, and monthly OEE should be built from the same calculation rules. If one report uses shift-level data and another uses summarized ERP output, discrepancies will appear. The same applies to timezone issues, shift boundaries, and delayed quality confirmation.
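One common source of such discrepancies is the aggregation method itself. The Python sketch below compares a simple average of shift OEE percentages with an OEE computed from pooled totals. It assumes a single product with one ideal cycle time, in which case Availability × Performance × Quality simplifies to (ideal cycle time × good count) ÷ planned production time. The shift figures are hypothetical.

```python
IDEAL_CYCLE_S = 1.5  # seconds per unit, assumed identical for both shifts


def oee_from_totals(ideal_cycle_s: float, good_count: int, planned_min: float) -> float:
    """OEE as (ideal cycle time x good count) / planned production time."""
    return ideal_cycle_s * good_count / (planned_min * 60)


# Hypothetical shifts of different lengths.
shifts = [
    {"planned_min": 480, "good_count": 15_000},
    {"planned_min": 240, "good_count": 4_800},
]

per_shift = [
    oee_from_totals(IDEAL_CYCLE_S, s["good_count"], s["planned_min"]) for s in shifts
]
simple_average = sum(per_shift) / len(per_shift)

pooled = oee_from_totals(
    IDEAL_CYCLE_S,
    sum(s["good_count"] for s in shifts),
    sum(s["planned_min"] for s in shifts),
)

print(f"average of shift OEEs: {simple_average:.2%}")  # 64.06%
print(f"OEE from pooled data:  {pooled:.2%}")          # 68.75%
```

Neither number is a typo; they answer slightly different questions. The point is to pick one aggregation rule and use it in every report.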

A metric becomes trusted only when the logic remains stable period after period.

Keep these categories distinct:

Blending them may simplify reporting, but it weakens diagnosis. Improvement teams need to know whether to focus on maintenance, setup reduction, operator standard work, process stability, or defect prevention.

A reliable OEE program includes periodic validation of:

This audit discipline matters more than most teams expect. A dashboard can automate bad logic just as efficiently as good logic.

Assign a clear owner for each key field:

If no one owns a field, it will drift.

Decide how you will treat:

Do not leave these decisions to end-of-shift interpretation.

Automation improves speed and consistency, but it can still miss context. Sensors may show that a machine is technically running while operators know the line is starved, blocked, or cycling abnormally. Use regular reconciliation between system records and shop-floor experience.

Every OEE model contains assumptions. Capture them explicitly:

This makes future comparisons defensible and prevents “metric drift.”
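One lightweight way to capture assumptions is to store them as a versioned, read-only record next to the calculation code. The Python sketch below is only an illustration: the class name, every field, and every value are hypothetical, and a real rulebook would likely hold more entries.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class OeeRules:
    """A versioned, immutable record of the assumptions behind an OEE figure."""
    version: str
    changeovers_count_as_downtime: bool
    planned_maintenance_in_planned_time: bool
    minor_stop_threshold_s: int
    rework_counts_as_good: bool
    ideal_cycle_time_source: str


# Hypothetical rule set, for illustration only.
rules = OeeRules(
    version="v1",
    changeovers_count_as_downtime=True,
    planned_maintenance_in_planned_time=False,
    minor_stop_threshold_s=60,
    rework_counts_as_good=False,
    ideal_cycle_time_source="engineering time study",
)

print(rules)

# Frozen dataclasses reject in-place edits, so a rule change needs a new version.
try:
    rules.rework_counts_as_good = True
except FrozenInstanceError:
    print("rules are read-only; publish a new version instead")
```

Stamping each reported OEE figure with the rule version it was calculated under keeps period-to-period comparisons honest when a definition changes.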

The best plants do not use OEE as a vanity KPI. They use it as a decision tool.

Overall Equipment Effectiveness becomes more useful when paired with:

This combination helps leadership distinguish between apparent efficiency and actual business performance.

When OEE is broken down properly, it reveals where to attack first:

This sounds obvious, but many plants still launch broad improvement programs without first identifying the dominant loss mechanism.

The moment OEE becomes a score people are punished for, behavior changes:

That is why mature organizations use OEE to learn, not just to judge. The purpose is to expose loss honestly enough to remove it.

A strong operating cadence usually includes:

This review rhythm is where Overall Equipment Effectiveness stops being a dashboard number and becomes a management process.

Here are four practical steps I recommend in almost every plant deployment:

Create a written OEE rulebook first. Lock definitions for planned production time, downtime, ideal cycle time, good count, and exception handling before any visualization goes live.

Pilot in a stable area where standards are easier to maintain. Validate data quality there before scaling to a site-wide or enterprise rollout.

During implementation, compare sensor events, production records, and operator logs daily. The gaps you uncover early will prevent long-term reporting credibility issues.

Every improvement meeting should look at Availability, Performance, Quality, and the top recurring loss reasons. If the review only discusses the headline score, the process will stall.

Overall Equipment Effectiveness works best in environments with:

That makes it especially effective for many discrete and repetitive manufacturing operations.

It is less straightforward in:

In those environments, other measures may be more useful for decision-making, such as:

The key leadership principle is simple: do not manage by one number alone. Use OEE where it fits, and always keep operational context attached.

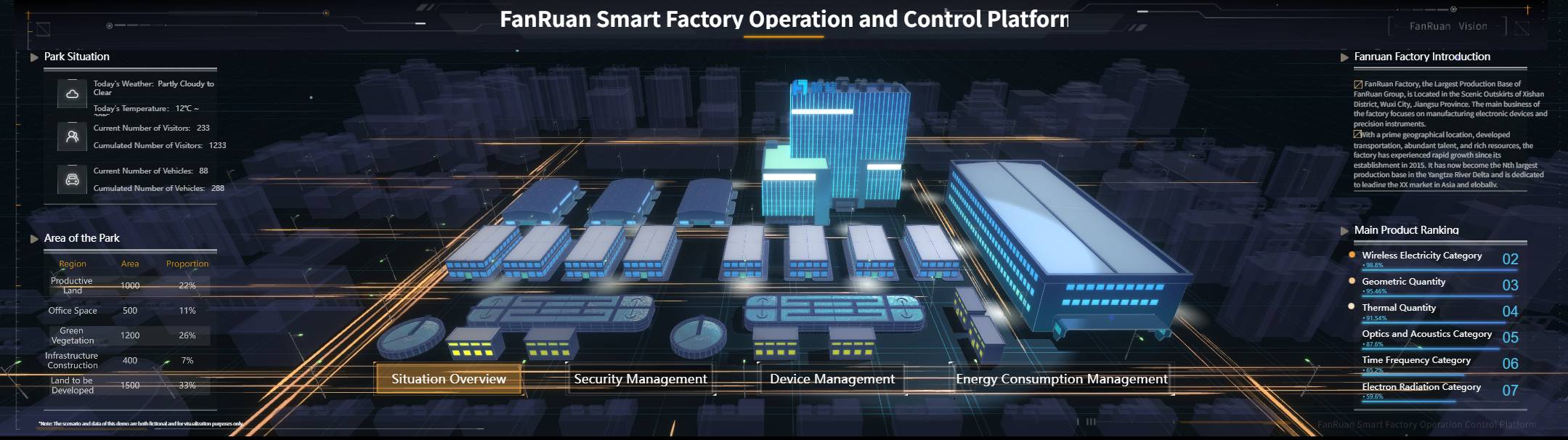

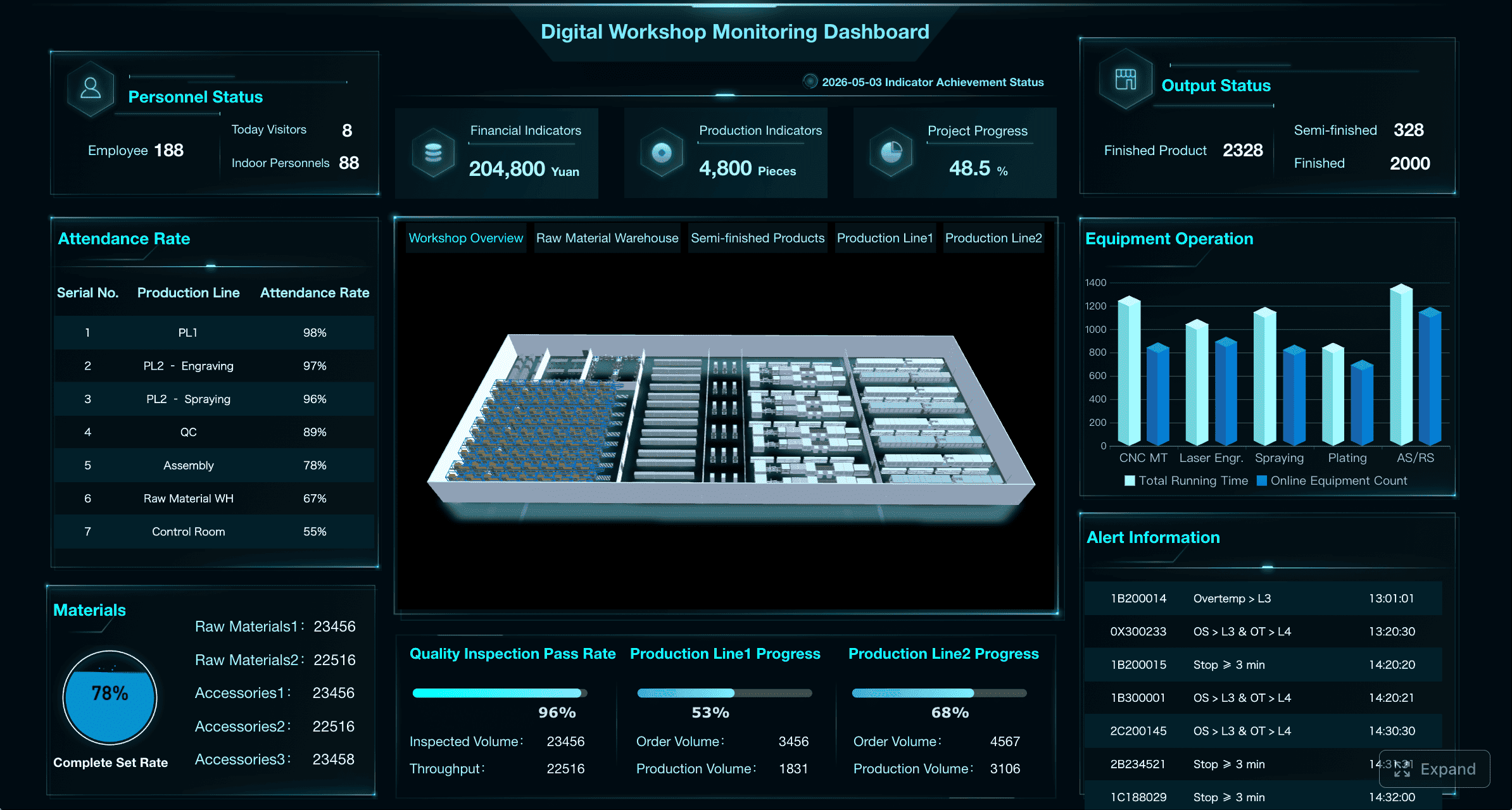

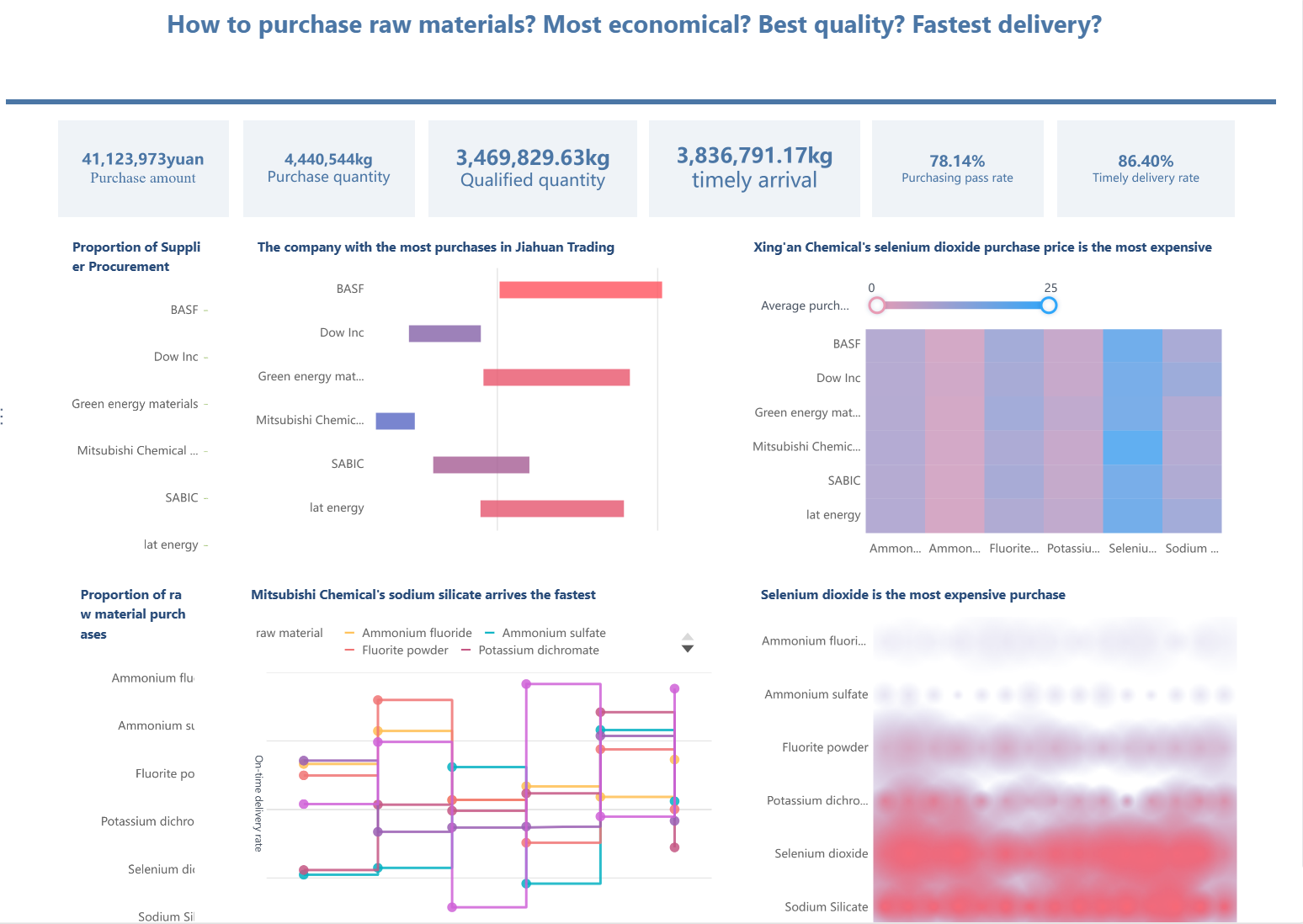

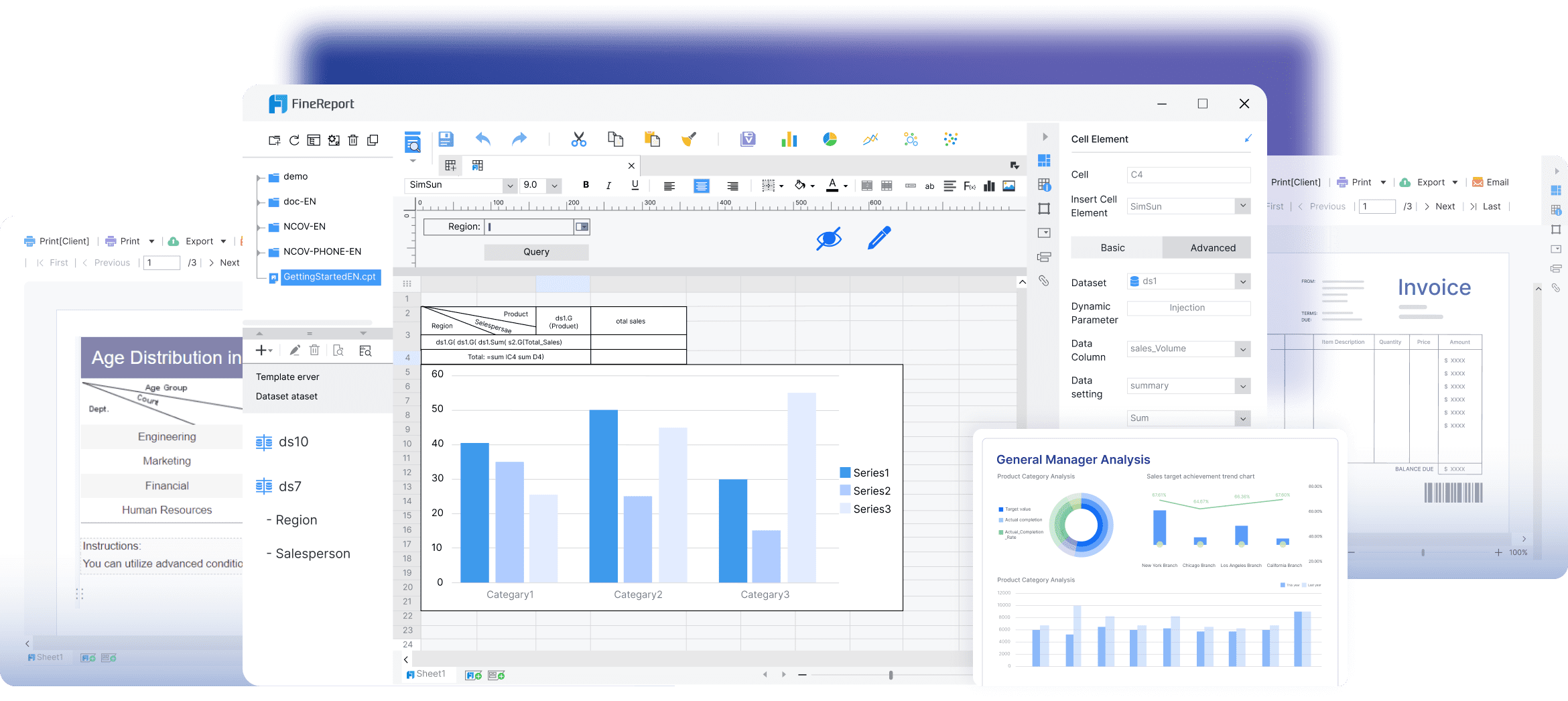

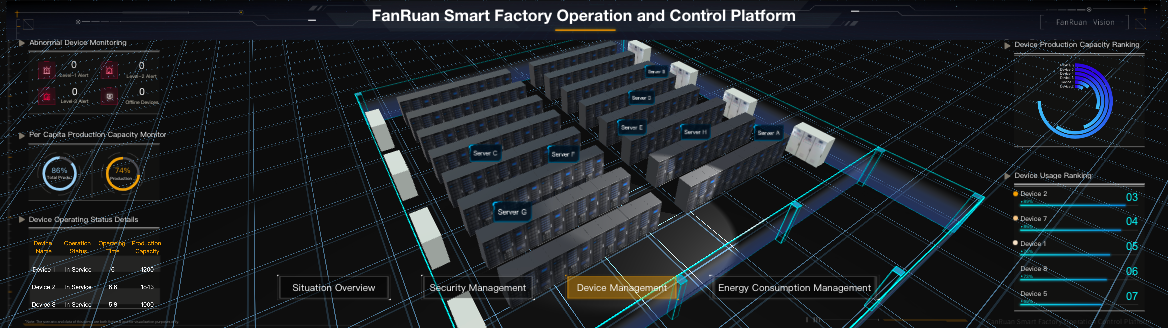

Building this manually is complex. FineReport provides ready-made templates that can automate the entire workflow.

Most plants do not struggle with the OEE formula. They struggle with the enterprise reality around it:

FineReport helps solve that operational gap by turning OEE from a fragile spreadsheet exercise into a governed, enterprise-grade reporting workflow.

With FineReport, manufacturers can:

This matters because OEE only improves when the metric becomes actionable. A plant leader should not have to wait days for analysts to merge logs, reconcile counts, and rebuild charts in Excel before a review meeting.

A strong deployment pattern looks like this:

Connect production, maintenance, quality, and schedule data into a unified reporting model. Standardize time logic, loss definitions, and count rules centrally.

Give each stakeholder the right lens:

Use scheduled reports and dashboards so shift leaders, plant managers, and operations directors receive the latest OEE status without manual compilation.

When OEE drops, teams should be able to move immediately from the headline KPI to the exact stop reason, time window, product, or defect category behind the loss.

Use FineReport templates to standardize daily loss review meetings, weekly trend analysis, and monthly performance summaries across plants.

For enterprise decision-makers, the value is not just prettier reporting. It is:

That is the difference between “having an OEE dashboard” and actually managing Overall Equipment Effectiveness as a trusted operational system.

If your current OEE process depends on disconnected logs, spreadsheet math, and inconsistent rules, the next step is not another cosmetic dashboard. The next step is a governed reporting architecture. FineReport gives you the templates, automation, and cross-system visibility to build that foundation faster and scale it with confidence.

OEE, or Overall Equipment Effectiveness, measures how much planned production time becomes good output at the right speed while equipment is actually running. It combines Availability, Performance, and Quality into one metric.

OEE is calculated as Availability × Performance × Quality. To do it correctly, you need consistent definitions for planned production time, stop time, ideal cycle time, total count, and good count.

Misleading OEE usually comes from inconsistent time rules, incorrect ideal cycle times, missing stop reasons, or mixing units such as seconds and minutes. Scores above 100% are a strong sign that the inputs are wrong.

A good OEE score depends on your process, product mix, and operating model, so there is no universal target that fits every plant. OEE should be used with context rather than treated as a standalone benchmark.

OEE can show where productivity is being lost through downtime, speed loss, and defects. It cannot by itself tell you whether your product mix, labor plan, schedule, or profitability is optimized.

The Author

Yida Yin

FanRuan Industry Solutions Expert
