What kind of big data platform to build (in English)


Building a Big Data Platform

A big data platform is essential for organizations that deal with large volumes of data and require advanced analytics to derive meaningful insights. Setting up a big data platform involves several key steps and considerations to ensure its effectiveness and scalability. Here are five key aspects to consider when building a big data platform:

- Define your objectives and requirements: Before embarking on the journey of building a big data platform, it's crucial to clearly define your objectives and requirements. What are the specific use cases you want to address with the platform? What types of data sources will you be dealing with? Understanding these aspects will help in choosing the right technologies and tools for your platform.

- Choose the right technologies: Building a big data platform involves integrating various technologies for data ingestion, storage, processing, and analytics. Some of the popular technologies used in big data platforms include Hadoop, Spark, Kafka, Hive, and others. It's important to evaluate these technologies based on your requirements and choose the ones that best suit your needs.

- Design a scalable architecture: Scalability is a key consideration when designing a big data platform. As the volume of data grows, the platform should be able to handle the increased load without compromising performance. Designing a scalable architecture involves setting up clusters, data replication, and resource management to ensure smooth operations even with large workloads.

- Ensure data security and compliance: Data security and compliance are critical aspects of a big data platform, especially when dealing with sensitive data. Implementing robust security measures such as encryption, access controls, and monitoring tools helps in safeguarding the data from unauthorized access or breaches. Compliance with data protection regulations such as GDPR and CCPA is also essential to avoid legal issues.

- Monitor and optimize performance: Once the big data platform is up and running, it's important to continuously monitor its performance and optimize it for better efficiency. Monitoring tools can help in identifying bottlenecks, resource constraints, or other issues that may impact the platform's performance. By analyzing performance metrics and making necessary adjustments, you can ensure that the platform meets the required service level agreements (SLAs).
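As a rough illustration of checking performance metrics against an SLA, the sketch below computes a nearest-rank percentile over job latencies and compares it with a threshold. The function names, the sample latencies, the 95th percentile, and the 500 ms threshold are all assumptions made for this example, not values taken from any particular platform.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct in (0, 100])."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def meets_sla(latencies_ms, pct=95, threshold_ms=500):
    """True when the chosen percentile latency is within the threshold."""
    return percentile(latencies_ms, pct) <= threshold_ms


# Hypothetical latencies for ten jobs, in milliseconds.
job_latencies_ms = [120, 135, 150, 180, 210, 240, 260, 300, 420, 900]
p95 = percentile(job_latencies_ms, 95)
sla_ok = meets_sla(job_latencies_ms)
```

A percentile is usually a better SLA signal than the mean here, because a single slow job (the 900 ms outlier) is hidden by an average but shows up clearly in the tail.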

In conclusion, building a big data platform requires careful planning, choosing the right technologies, designing a scalable architecture, ensuring data security, and monitoring performance. By paying attention to these key aspects, organizations can build a robust big data platform that meets their analytical needs and drives business growth.

1 year ago

Building a big data platform involves a series of steps and technologies. The process can be broken down into several key components, including data storage, data processing, data analysis, and data visualization. Let's delve into each of these components in detail.


Data Storage:

The first step in building a big data platform is to set up a robust and scalable data storage solution. This typically involves using distributed file systems like Hadoop Distributed File System (HDFS) or cloud-based storage systems such as Amazon S3, Google Cloud Storage, or Azure Blob Storage. These platforms provide the ability to store large volumes of data across multiple nodes or servers, ensuring fault tolerance and high availability.
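For a self-managed cluster, replication has a direct cost in raw disk. The sketch below estimates raw capacity and node count from the logical data size. It assumes a replication factor of 3 (the HDFS default) and a 25% free-space headroom; the headroom figure, the function names, and the 48 TB per node are illustrative assumptions. Managed object stores handle durability internally, so this arithmetic applies mainly to HDFS-style deployments.

```python
import math


def raw_capacity_tb(logical_tb, replication_factor=3, headroom=0.25):
    """Raw disk (TB) needed to hold logical_tb of data.

    replication_factor: copies kept of every block (assumed 3).
    headroom: fraction of raw disk kept free (assumed 25%).
    """
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    return logical_tb * replication_factor / (1 - headroom)


def nodes_needed(logical_tb, disk_per_node_tb, **kwargs):
    """Number of storage nodes, rounded up to a whole node."""
    return math.ceil(raw_capacity_tb(logical_tb, **kwargs) / disk_per_node_tb)


# 100 TB of logical data on hypothetical nodes with 48 TB of disk each.
raw_tb = raw_capacity_tb(100)
nodes = nodes_needed(100, disk_per_node_tb=48)
```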

Data Processing:

Once the data storage layer is in place, the next step is to implement a data processing framework that can handle the massive volumes of data. Technologies such as Apache Spark, Apache Flink, and Apache Hadoop MapReduce are commonly used for parallel processing of big data. These frameworks allow for distributed processing of large datasets, enabling tasks such as data ingestion, transformation, and cleansing.
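The map, shuffle, and reduce phases that these frameworks distribute across a cluster can be sketched on a single machine in plain Python. This is only a teaching model of the MapReduce idea, not the API of Hadoop, Spark, or Flink; all function names here are made up for the example.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Group all values by key, as the framework does between map and reduce."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Combine all values for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    mapped = [pair for line in lines for pair in map_phase(line)]
    return dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())


counts = word_count(["big data platform", "Big data pipeline"])
```

In a real cluster, each map call runs on the node that holds its input split, and the shuffle moves data over the network so that every key ends up at one reducer.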

Data Analysis:

With the data processing layer established, the focus shifts to performing advanced analytics on the processed data. This is often accomplished using tools like Apache Hive, Apache Pig, or Apache Impala for querying the data and extracting meaningful insights. Additionally, integrating machine learning frameworks such as TensorFlow, PyTorch, or Apache Mahout enables the development of predictive models and data-driven analytics.
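Query engines such as Hive and Impala expose a SQL-like interface, so a typical analysis step is an aggregate query. The sketch below uses Python's built-in `sqlite3` module purely as a local stand-in to show the shape of such a query; it is not Hive or Impala, and the `page_views` table, its columns, and the sample rows are hypothetical.

```python
import sqlite3


def views_by_country(rows):
    """Aggregate page views per country with a GROUP BY query."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "CREATE TABLE page_views (user_id TEXT, country TEXT, duration_s INTEGER)"
        )
        conn.executemany("INSERT INTO page_views VALUES (?, ?, ?)", rows)
        return conn.execute(
            """
            SELECT country, COUNT(*) AS views, AVG(duration_s) AS avg_duration_s
            FROM page_views
            GROUP BY country
            ORDER BY views DESC, country
            """
        ).fetchall()
    finally:
        conn.close()


sample = [("u1", "DE", 30), ("u2", "DE", 50), ("u3", "US", 20)]
report = views_by_country(sample)
```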

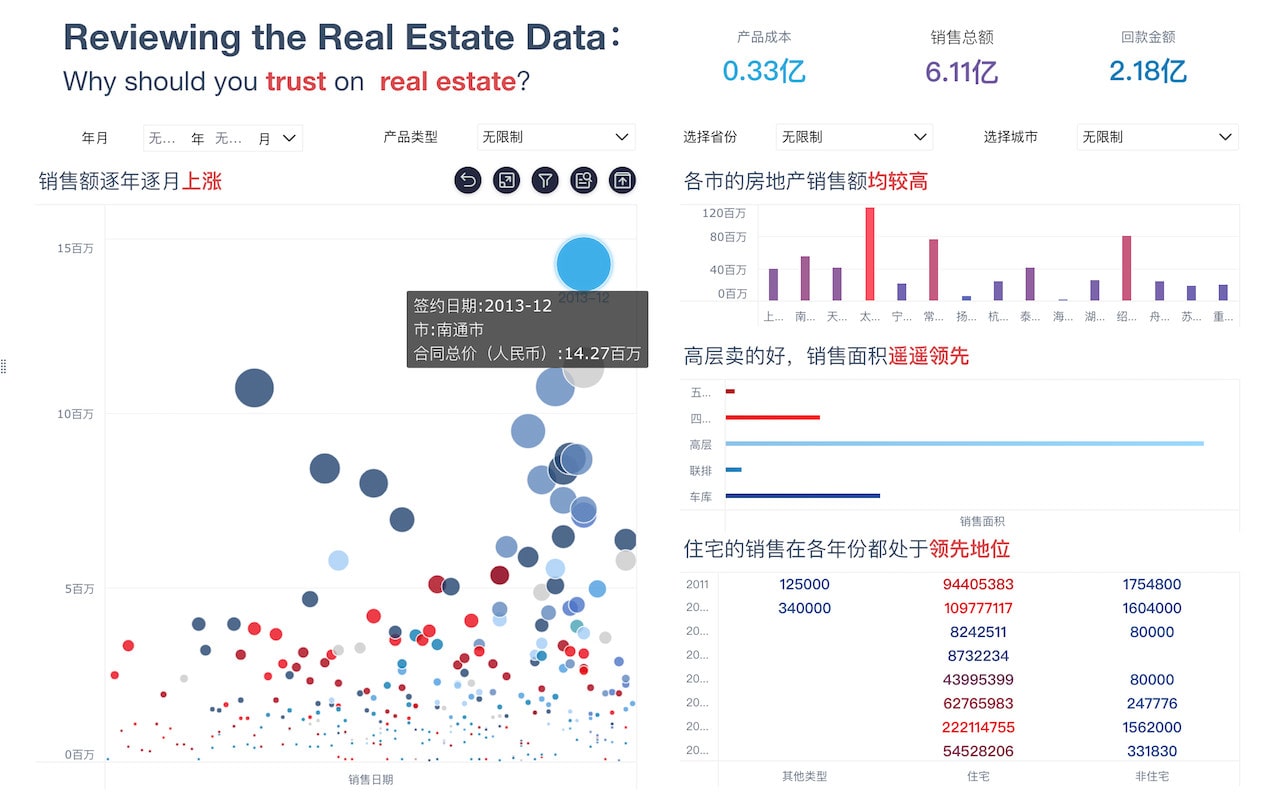

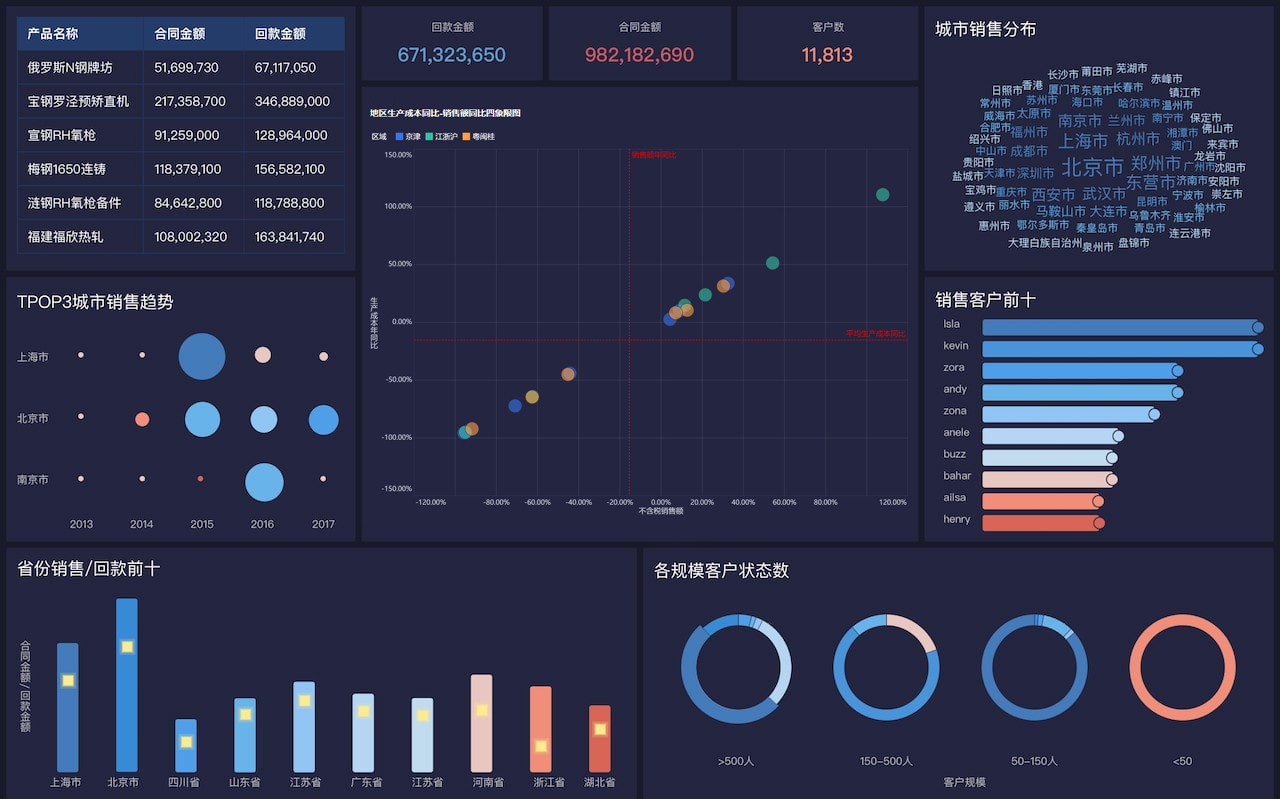

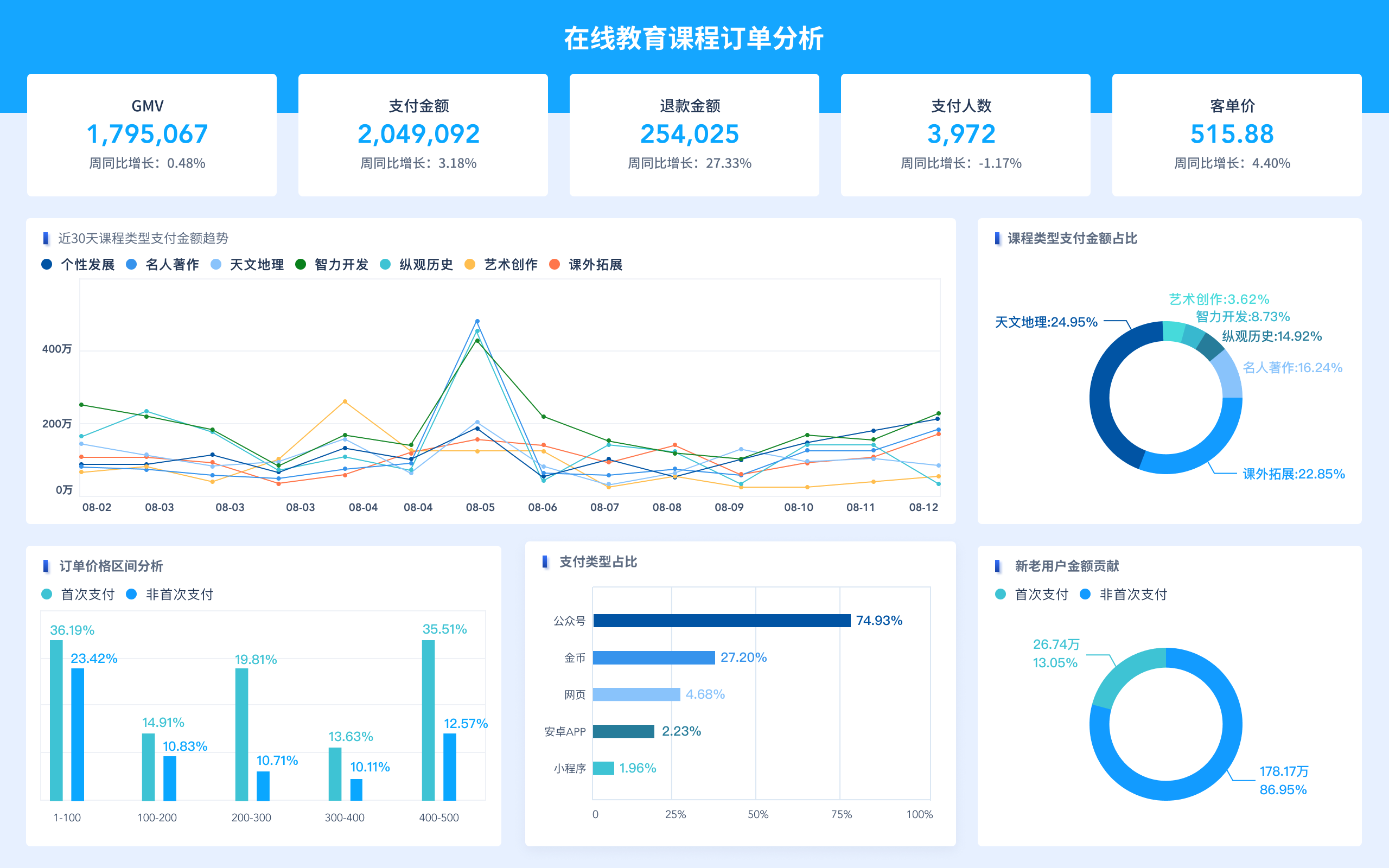

Data Visualization:

The final component of a big data platform involves data visualization tools that allow users to interact with and interpret the analyzed data. Platforms such as Tableau, Power BI, and Apache Superset provide rich visualization capabilities for creating interactive dashboards, charts, and reports. These tools help in communicating the findings and insights derived from the big data analysis to stakeholders and decision-makers.

In summary, building a big data platform entails setting up a scalable data storage infrastructure, implementing a robust data processing framework, performing advanced analytics, and visualizing the results. By carefully designing and integrating these components, organizations can harness the power of big data to gain valuable business insights and drive informed decision-making.

1 year ago

Building a Big Data Platform

In the era of big data, organizations are constantly looking for innovative ways to store, process, and analyze massive amounts of data to gain actionable insights and make informed decisions. Building a Big Data Platform is essential for organizations to effectively manage their data and derive value from it. In this article, we will discuss the methods and processes involved in setting up a Big Data Platform.

1. Define the Objectives

The first step in building a Big Data Platform is to clearly define the objectives. Understand the purpose of the platform, the types of data that will be stored and analyzed, and the use cases for the data. This will help in determining the requirements and design of the platform.

2. Choose the Right Technologies

Selecting the appropriate technologies is crucial for the success of a Big Data Platform. The choice of technologies will depend on factors such as the volume, velocity, variety, and veracity of the data. Common technologies used in Big Data platforms include Hadoop, Spark, Kafka, Cassandra, and Elasticsearch.

3. Design the Architecture

Designing a scalable and robust architecture is key to building a successful Big Data Platform. Consider factors such as data storage, data processing, data ingestion, data security, and data governance. Create a layered architecture that separates storage and compute for greater scalability.

4. Set up Data Ingestion

Data ingestion is the process of collecting and importing data from various sources into the Big Data Platform. Implement data pipelines using tools such as Apache NiFi, Kafka, or Flume to ingest data in real-time or batch mode. Ensure data quality and integrity during the ingestion process.
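To make the data quality point concrete, here is a minimal sketch of an ingestion step that validates records and groups the valid ones into batches. It uses only the standard library and does not represent the NiFi, Kafka, or Flume APIs; the required fields, the batch size, and the function names are assumptions for illustration.

```python
from itertools import islice

# Hypothetical schema: fields every record must carry.
REQUIRED_FIELDS = ("event_id", "timestamp", "payload")


def is_valid(record):
    """A record is valid when every required field is present and non-empty."""
    return all(record.get(field) not in (None, "") for field in REQUIRED_FIELDS)


def batched(iterable, size):
    """Yield lists of at most `size` items."""
    iterator = iter(iterable)
    while batch := list(islice(iterator, size)):
        yield batch


def ingest(records, batch_size=2):
    """Split records into batches of valid records plus a list of rejects."""
    valid, rejected = [], []
    for record in records:
        (valid if is_valid(record) else rejected).append(record)
    return list(batched(valid, batch_size)), rejected


records = [
    {"event_id": "e1", "timestamp": 1, "payload": "a"},
    {"event_id": "e2", "timestamp": 2, "payload": "b"},
    {"event_id": "e3", "timestamp": 3, "payload": ""},
    {"event_id": "e4", "timestamp": 4, "payload": "d"},
]
batches, rejected = ingest(records)
```

Keeping rejected records in a separate list, rather than dropping them, mirrors the common practice of routing bad input to a quarantine location for later inspection.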

5. Implement Data Storage

Choose appropriate data storage solutions based on the nature of the data. Use distributed file systems like HDFS or object storage solutions like Amazon S3 for storing large volumes of raw data. Utilize NoSQL databases like Cassandra (wide-column) or MongoDB (document) for semi-structured data or data whose schema changes often.
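How files are laid out matters as much as where they are stored. One widely used convention on HDFS and object stores is `key=value` partition directories, which lets query engines skip partitions that a query does not touch. The sketch below builds such a path from an event timestamp; the bucket name, dataset name, and year/month/day partitioning scheme are assumptions for the example.

```python
from datetime import datetime, timezone


def partition_path(base, dataset, event_time):
    """Build a key=value partition directory for a timezone-aware timestamp.

    The timestamp is converted to UTC so that one event always maps
    to the same partition regardless of where the job runs.
    """
    t = event_time.astimezone(timezone.utc)
    return f"{base}/{dataset}/year={t:%Y}/month={t:%m}/day={t:%d}"


path = partition_path(
    "s3://example-bucket/raw",  # hypothetical bucket
    "clickstream",              # hypothetical dataset
    datetime(2024, 3, 7, 15, 30, tzinfo=timezone.utc),
)
```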

6. Develop Data Processing

Data processing involves transforming and analyzing data to extract valuable insights. Use tools like Apache Spark or Apache Flink for data processing tasks such as batch processing, stream processing, and machine learning. Implement data pipelines for data transformation and analysis.
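Stream processing typically aggregates events over time windows. The sketch below shows a tumbling (fixed-size, non-overlapping) window count in plain Python. It illustrates the concept only and is not the Spark or Flink API; it also assumes events arrive as `(epoch_seconds, key)` pairs and ignores late or out-of-order data, which real engines handle with mechanisms such as watermarks.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_s=60):
    """Count events per (window_start, key).

    events: iterable of (epoch_seconds, key) pairs.
    window_s: window length in seconds (assumed 60 here).
    """
    counts = defaultdict(int)
    for ts, key in events:
        window_start = ts - ts % window_s  # align to the window boundary
        counts[(window_start, key)] += 1
    return dict(counts)


events = [(0, "login"), (15, "login"), (59, "click"), (60, "login"), (125, "click")]
windowed = tumbling_window_counts(events)
```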

7. Ensure Data Security

Security is paramount when dealing with sensitive data in a Big Data Platform. Implement encryption, access controls, and data governance policies to protect data at rest and in transit. Regularly monitor and audit the platform for security vulnerabilities.
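One simple form of access control is column-level filtering by role. The sketch below is a toy policy check, assuming a hypothetical role-to-columns mapping and a deny-by-default rule for unknown roles; production platforms usually enforce such policies in the query engine or a dedicated authorization service rather than in application code.

```python
# Hypothetical policy: which columns each role may read.
ROLE_COLUMNS = {
    "analyst": {"order_id", "country", "amount"},
    "support": {"order_id", "email"},
}


def visible_columns(record, role):
    """Return only the fields the role may see; unknown roles see nothing."""
    allowed = ROLE_COLUMNS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}


order = {"order_id": 1, "email": "a@example.com", "country": "DE", "amount": 9.5}
analyst_view = visible_columns(order, "analyst")
guest_view = visible_columns(order, "guest")
```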

8. Monitor and Maintain the Platform

Continuous monitoring and maintenance are essential for the smooth operation of a Big Data Platform. Use monitoring tools such as Prometheus (metrics collection and alerting) together with Grafana (dashboards) to track system performance, resource utilization, and data quality. Perform regular backups and updates to ensure platform reliability.

9. Scale the Platform

Scalability is a critical aspect of a Big Data Platform, as data volumes and processing requirements may vary over time. Design the platform to be horizontally scalable by adding more nodes or resources as needed. Implement auto-scaling mechanisms to dynamically adjust resources based on workload.
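An auto-scaling decision can be as simple as a proportional rule: scale the node count by the ratio of observed to target utilization, then clamp it to configured bounds. The Kubernetes Horizontal Pod Autoscaler uses the same proportional formula for replicas. The target utilization of 0.7 and the node limits below are assumptions chosen for the example.

```python
import math


def desired_nodes(current_nodes, current_util, target_util=0.7,
                  min_nodes=3, max_nodes=50):
    """Proportional scaling: ceil(current * observed / target), clamped.

    current_util and target_util are fractions of capacity (0.9 = 90%).
    """
    if current_nodes < 1 or not 0 < target_util <= 1:
        raise ValueError("need current_nodes >= 1 and 0 < target_util <= 1")
    wanted = math.ceil(current_nodes * current_util / target_util)
    return max(min_nodes, min(max_nodes, wanted))


scale_up = desired_nodes(10, 0.9)    # cluster is running hot
capped = desired_nodes(40, 1.0)      # would exceed max_nodes
floor = desired_nodes(4, 0.1)        # nearly idle, held at min_nodes
```

Real auto-scalers usually add a cooldown period or tolerance band around this rule so that short spikes do not cause the cluster to grow and shrink repeatedly.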

10. Optimize Performance

Optimizing the performance of a Big Data Platform is essential for efficient data processing and analysis. Tune data processing jobs, optimize data storage configurations, and fine-tune hardware resources to improve platform performance. Monitor and analyze performance metrics to identify bottlenecks and optimize resource utilization.

In conclusion, building a Big Data Platform involves a series of steps ranging from defining objectives to optimizing performance. By following these methods and processes, organizations can create a scalable, reliable, and high-performance platform for managing and analyzing big data.

1 year ago