How to Build a Large Database on the Internet

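Building a large database means using internet technologies to store and manage large volumes of data. The key steps and considerations are outlined below.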

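- Data collection and storage: Building a large database starts with collecting large volumes of data from different sources, such as user information, transaction records, and sensor data. Once collected, the data needs to be stored in a scalable database system. Common database management systems include MySQL, PostgreSQL, and Oracle. There are also solutions designed specifically for big data storage and processing, such as Hadoop, Cassandra, and MongoDB.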

-

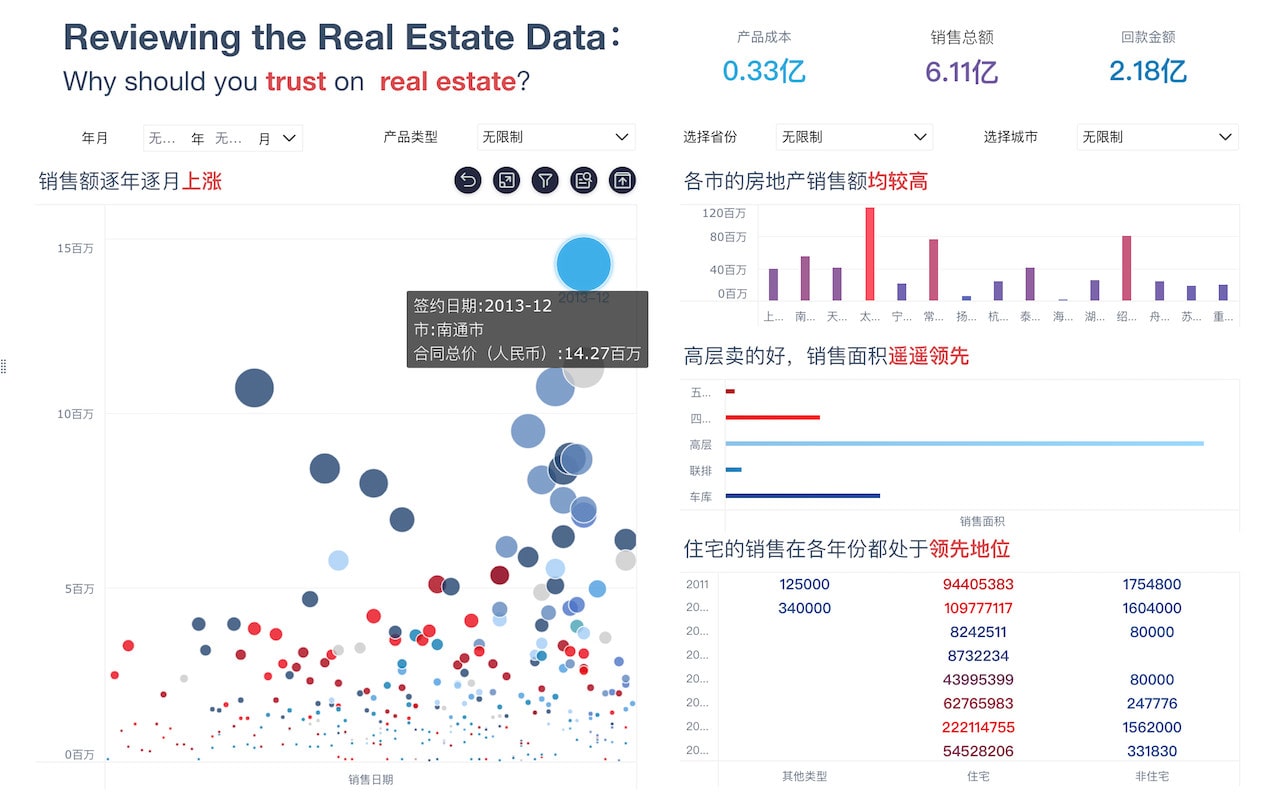

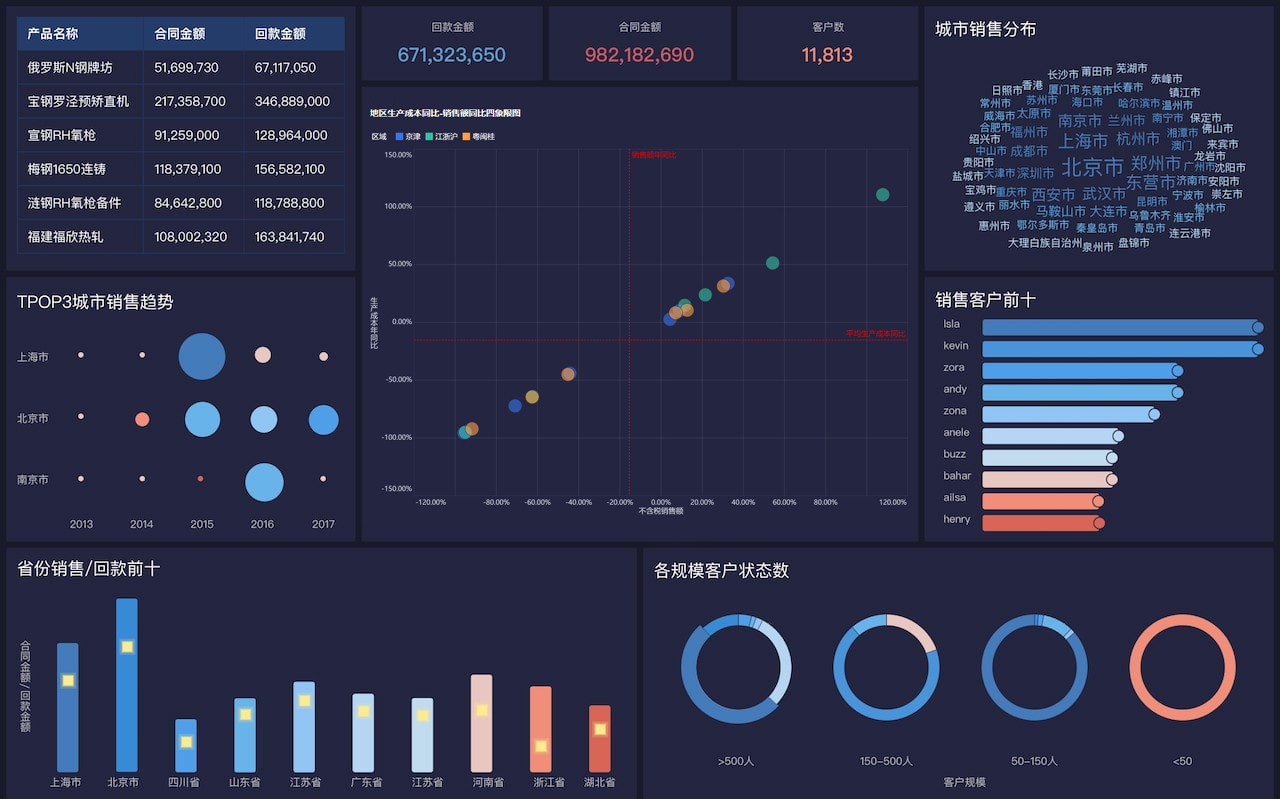

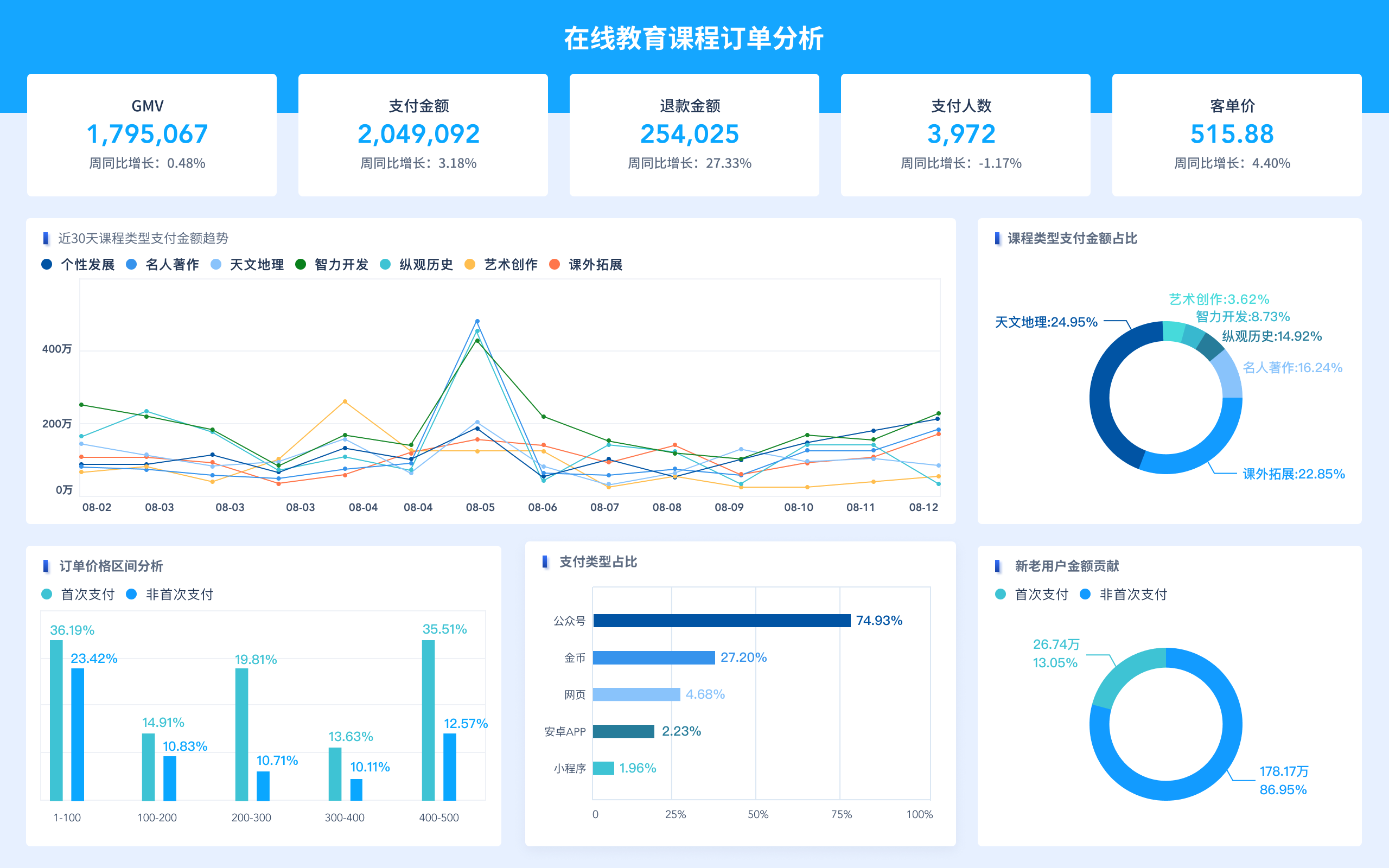

数据分析和处理:建立大数据库后,需要对数据进行分析和处理。数据分析可以帮助用户了解数据的意义和价值,同时也可以提供更准确的预测和决策依据。为了进行数据分析,可以使用各种数据挖掘和机器学习算法,以及可视化工具来发现数据中的模式和规律。

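- Data security and privacy: A large database may hold a great deal of sensitive information, so protecting data security and privacy is essential. Security measures such as encryption, authentication, and access control are needed to prevent unauthorized access and modification.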

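- Data backup and recovery: To guard against data loss or corruption, a detailed backup and recovery strategy should be defined when the database is built. This includes backing up the database regularly and storing the backup files securely.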

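- Performance optimization: A large database typically has to handle a high volume of requests, so performance optimization is essential. Sound index design, query optimization, and load balancing can improve response time and throughput.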
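As a rough illustration of the index design point above, the sketch below uses Python's built-in `sqlite3` module as a stand-in for a production database. The `events` table, the `idx_events_user` index, and the `query_plan` helper are hypothetical names chosen for this example. It asks SQLite for its query plan before and after the index is created.

```python
import sqlite3

# In-memory database with a hypothetical events table (illustration only).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE events (id INTEGER PRIMARY KEY, user_id INTEGER, action TEXT)"
)
conn.executemany(
    "INSERT INTO events (user_id, action) VALUES (?, ?)",
    [(i % 100, "click") for i in range(1000)],
)


def query_plan(connection, sql, params=()):
    """Return SQLite's plan description for a query as one string."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The last column of each plan row holds the human-readable detail.
    return " | ".join(row[-1] for row in rows)


QUERY = "SELECT COUNT(*) FROM events WHERE user_id = ?"

plan_before = query_plan(conn, QUERY, (42,))  # full table scan expected
conn.execute("CREATE INDEX idx_events_user ON events (user_id)")
plan_after = query_plan(conn, QUERY, (42,))   # index lookup expected

print(plan_before)
print(plan_after)
```

The exact plan wording differs between SQLite versions, and other database systems expose similar information through their own `EXPLAIN` variants.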

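In short, building a large database takes more than technical skill; it also requires attention to data collection, storage, analysis, security, and performance. Only by weighing all of these together can you build a stable, secure, and efficient large database system.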

1 year ago

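Building a large database involves collecting, storing, managing, and analyzing large volumes of data, and it draws on internet technologies, database management systems, and data processing. Below I describe the approach and steps in terms of data collection, storage, management, and analysis.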

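Data Collection

The first step in building a large database is collecting data at scale. Sources are very broad, and data can be collected in the following ways:

- Scraping data from the internet: Use web crawlers to collect data from websites, social media, news sites, and other public data sources.

- Data produced by sensors and devices: This includes IoT devices, production-line sensors, drones, smartphones, and other sensor-equipped devices.

- User-generated content: Users' online behavior, purchase records, comments, likes, and similar data can all serve as sources.

- Internal enterprise data: A company's internal sales records, customer data, and financial data are important sources for a large database.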
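To make the web scraping item above more concrete, here is a minimal sketch of the parsing half of a crawler, using only Python's standard library. `LinkCollector`, `SAMPLE_PAGE`, and the `example.com` URLs are hypothetical and exist only for illustration. A real crawler would also fetch pages over the network, respect `robots.txt`, and throttle its requests.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect absolute URLs from the <a href="..."> tags of one page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative links against the page's base URL.
            self.links.append(urljoin(self.base_url, href))


# A hard-coded page stands in for a downloaded document.
SAMPLE_PAGE = """<html><body>
<a href="/news/1">First story</a>
<a href="https://example.com/about">About</a>
<a name="no-href">Ignored</a>
</body></html>"""

collector = LinkCollector("https://example.com")
collector.feed(SAMPLE_PAGE)
links = collector.links
print(links)
```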

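Data Storage

The collected data needs to be stored effectively so that it can be accessed and analyzed later. Common storage options are:

- Data warehouse: Build a data warehouse, for example on a relational database or a dedicated data warehouse system, to store structured data and safeguard its security and integrity.

- Big data storage technologies: Use distributed file systems (such as Hadoop's HDFS) and NoSQL databases (such as MongoDB and Cassandra) to store semi-structured and unstructured data.

- Cloud storage: Store data on cloud platforms such as AWS S3 or Azure Blob Storage for elastic scaling and cost efficiency.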

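Data Management

Managing data at this scale is a critical step. It covers data cleaning, data integration, and data security:

- Data cleaning: Remove redundant records, fill in missing values, and resolve inconsistent formats to improve data quality.

- Data integration: Combine data from different sources to build a complete and consistent database.

- Data security: Apply the necessary safeguards, including encryption, access control, backups, and a disaster recovery strategy, to keep the data secure and reliable.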
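The cleaning steps listed above can be sketched in a few lines of plain Python. Everything here is an assumption made for illustration: the record layout, the three date formats, and the choice to fill a missing age with the median of the known ages. Production pipelines typically use dedicated tools and rules agreed with the data owners.

```python
from datetime import datetime
from statistics import median

# Hypothetical raw records with a duplicate, a missing value, and mixed dates.
RAW_RECORDS = [
    {"id": 1, "name": " alice ", "signup": "2023-01-05", "age": 30},
    {"id": 2, "name": "Bob", "signup": "05/02/2023", "age": None},
    {"id": 1, "name": " alice ", "signup": "2023-01-05", "age": 30},
    {"id": 3, "name": "carol", "signup": "2023/03/09", "age": 41},
]

# Assumed input formats; day/month order is an assumption, not a given.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y/%m/%d")


def normalize_date(text):
    """Convert any of the assumed formats to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {text!r}")


def clean(records):
    """Drop duplicate ids, trim names, unify dates, fill missing ages."""
    unique = {}
    for record in records:
        unique.setdefault(record["id"], record)  # keep first occurrence
    known_ages = [r["age"] for r in unique.values() if r["age"] is not None]
    fallback_age = median(known_ages) if known_ages else None
    return [
        {
            "id": r["id"],
            "name": r["name"].strip().title(),
            "signup": normalize_date(r["signup"]),
            "age": r["age"] if r["age"] is not None else fallback_age,
        }
        for r in unique.values()
    ]


cleaned = clean(RAW_RECORDS)
for row in cleaned:
    print(row)
```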

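Data Analysis

Once the database is built, the data needs to be analyzed to uncover the information and value it contains.

- Data mining: Use data mining algorithms and tools to dig into the data, find patterns and associations, and derive useful information and predictions.

- Machine learning and artificial intelligence: Apply machine learning and AI techniques to learn from the data and perform pattern recognition, classification, clustering, and similar tasks, revealing latent patterns and trends.

- Data visualization: Use visualization tools to analyze the data visually, making the information it reflects easier to grasp.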
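As one small example of the clustering mentioned above, the following is a toy one-dimensional k-means written in plain Python. The order amounts and the two starting centers are made-up values, and `kmeans_1d` is a hypothetical helper rather than a library function. Real workloads would normally rely on an established machine learning library.

```python
def kmeans_1d(values, centers, iterations=10):
    """Group numbers around the nearest center, then recompute the centers."""
    centers = list(centers)
    clusters = [[] for _ in centers]
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for value in values:
            nearest = min(
                range(len(centers)), key=lambda i: abs(value - centers[i])
            )
            clusters[nearest].append(value)
        # An empty cluster keeps its previous center.
        centers = [
            sum(cluster) / len(cluster) if cluster else centers[i]
            for i, cluster in enumerate(clusters)
        ]
    return centers, clusters


# Hypothetical order amounts with two visible groups (small and large orders).
order_amounts = [12, 15, 14, 90, 95, 88, 13, 92]
centers, clusters = kmeans_1d(order_amounts, centers=[0, 100])
print(centers)
print(clusters)
```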

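In short, building a large database requires a sequence of work covering data collection, storage, management, and analysis. It relies on internet technologies, big data technologies, and data processing tools to handle the data effectively and create more value for businesses and organizations.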

1 year ago

If you want to establish a large database on the internet, there are several approaches and technologies you can consider. Below, I'll outline a comprehensive guide on how to build a large database on the internet, including various methods and operational procedures.

Understanding the Requirements

Before starting to build a large database on the internet, it's essential to understand the scale and purpose of the database. Consider the following aspects:

- Data Volume: Estimate the volume of data that needs to be stored, and projected growth over time.

- Data Structure: Determine the structure and type of data to be stored. Is it mostly text, multimedia, or a combination of both?

- Access Pattern: Understand how the data will be accessed. Will there be mostly read operations, write operations, or a balanced mix of both?

- Scalability: Plan for future scalability. How will the database handle increased load and data over time?

- Security: Consider the security measures needed to protect the data from unauthorized access.

- Compliance: If the data includes personal or sensitive information, ensure compliance with relevant regulations such as GDPR, HIPAA, etc.
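The data volume estimate above can be turned into simple arithmetic. The sketch below is only a back-of-the-envelope model: `estimate_storage_bytes` is a hypothetical helper, and the row count, row size, growth rate, and replication factor are illustrative assumptions, not recommendations. It ignores indexes, compression, and backups.

```python
def estimate_storage_bytes(
    rows_per_day, avg_row_bytes, years, yearly_growth=0.0, replication_factor=1
):
    """Estimate raw storage, growing the daily row count once per year."""
    total = 0
    daily_rows = rows_per_day
    for _ in range(years):
        total += daily_rows * 365 * avg_row_bytes
        daily_rows *= 1 + yearly_growth
    return total * replication_factor


# Assumed workload: 1M rows/day, 500 bytes/row, 50% yearly growth, 3 copies.
estimate = estimate_storage_bytes(
    rows_per_day=1_000_000,
    avg_row_bytes=500,
    years=3,
    yearly_growth=0.5,
    replication_factor=3,
)
print(f"{estimate / 1e12:.2f} TB over 3 years")
```

Even a crude figure like this helps decide whether a single server is realistic or whether partitioning and horizontal scaling need to be planned from the start.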

Choosing the Right Database Technology

Once the requirements are clear, it's important to choose the right database technology. There are several options to consider:

SQL Databases

- Relational Databases: Suitable for structured data with complex relationships. Common options include MySQL, PostgreSQL, and Microsoft SQL Server.

- NewSQL Databases: These databases are designed to provide the scalability of NoSQL systems while maintaining ACID guarantees, e.g., CockroachDB, Google Spanner, etc.

NoSQL Databases

- Document Stores: Ideal for semi-structured data, e.g., MongoDB, Couchbase, etc.

- Key-Value Stores: Great for high-speed data retrieval, e.g., Redis, Amazon DynamoDB, etc.

- Wide-Column Stores: Suited to very large datasets spread across many nodes with high write throughput, e.g., Apache Cassandra, Scylla, etc.

- Graph Databases: Suitable for analyzing and traversing relationships in the data, e.g., Neo4j, Amazon Neptune, etc.

New Technologies

- Blockchain Databases: These are suitable for decentralized and secure data storage, e.g., BigchainDB, IBM Blockchain, etc.

- Time-Series Databases: Ideal for handling time-series data, e.g., InfluxDB, Prometheus, etc.

Designing the Database Schema

Once the database technology is chosen, the next step is to design the database schema. Consider the following factors during the schema design:

- Normalization: Normalize the database to reduce redundancy and improve data integrity.

- Indexing: Plan which fields should be indexed for efficient querying.

- Partitioning: If your data volume is large, consider how to partition the data to improve performance and scalability.
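The three schema design factors above can be illustrated with a small sketch that uses Python's built-in `sqlite3` module. The `customers` and `orders` tables, the `idx_orders_customer` index, and the `total_spent` and `partition_for` helpers are hypothetical names chosen for this example. SQLite has no native table partitioning, so `partition_for` only shows how a month-based partition name might be derived.

```python
import sqlite3

# Normalized layout: customer details live in one place, orders reference them.
SCHEMA = """
CREATE TABLE customers (
    id    INTEGER PRIMARY KEY,
    email TEXT NOT NULL UNIQUE
);
CREATE TABLE orders (
    id          INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(id),
    placed_on   TEXT NOT NULL,
    amount      REAL NOT NULL
);
-- Index the column used to look up a customer's orders.
CREATE INDEX idx_orders_customer ON orders (customer_id);
"""

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces FKs only when enabled
conn.executescript(SCHEMA)
conn.execute("INSERT INTO customers (id, email) VALUES (1, 'a@example.com')")
conn.executemany(
    "INSERT INTO orders (customer_id, placed_on, amount) VALUES (?, ?, ?)",
    [(1, "2024-01-03", 20.0), (1, "2024-02-11", 35.5)],
)


def total_spent(connection, email):
    """Sum a customer's order amounts by joining the two tables."""
    row = connection.execute(
        "SELECT COALESCE(SUM(o.amount), 0) FROM customers c "
        "LEFT JOIN orders o ON o.customer_id = c.id WHERE c.email = ?",
        (email,),
    ).fetchone()
    return row[0]


def partition_for(placed_on):
    """Derive a month-based partition name from an ISO date string."""
    return "orders_" + placed_on[:7].replace("-", "_")


print(total_spent(conn, "a@example.com"))
print(partition_for("2024-02-11"))
```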

Storage and Infrastructure Setup

The storage and infrastructure setup for the database play a critical role in its performance and availability.

- Cloud vs. On-Premises: Decide whether to use a cloud-based solution like AWS, Azure, or Google Cloud, or set up your own servers on-premises.

- High Availability: Utilize multiple servers and redundancy to ensure high availability. This may involve setting up replication, clustering, or employing cloud services that provide high availability configurations.

- Backup and Recovery: Establish a robust backup and recovery strategy to prevent data loss in case of failures.
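A backup is only useful if it can be read back, so a backup routine usually pairs the copy with a verification step. The sketch below shows the idea with Python's built-in `sqlite3` module, whose `Connection.backup` method copies a live database. The `orders` table, the file name, and the `backup_database` and `verify_backup` helpers are hypothetical; server databases ship their own backup tooling.

```python
import os
import sqlite3
import tempfile


def backup_database(source, target_path):
    """Copy a live SQLite database into a backup file."""
    target = sqlite3.connect(target_path)
    try:
        source.backup(target)  # online backup API, Python 3.7+
    finally:
        target.close()


def verify_backup(target_path, table, expected_rows):
    """Reopen the backup and check that the row count matches."""
    conn = sqlite3.connect(target_path)
    try:
        # `table` is a trusted identifier here, not user input.
        (count,) = conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()
    finally:
        conn.close()
    return count == expected_rows


# Hypothetical source database held in memory.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
source.executemany(
    "INSERT INTO orders (amount) VALUES (?)", [(9.5,), (20.0,), (3.25,)]
)
source.commit()

backup_path = os.path.join(tempfile.mkdtemp(), "orders_backup.db")
backup_database(source, backup_path)
backup_ok = verify_backup(backup_path, "orders", expected_rows=3)
print(backup_ok)
```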

Implementation and Development

Once the setup is ready, the database needs to be implemented:

- Database Initialization: Set up the database environment, including creating tables, indexes, and necessary configurations.

- Data Ingestion: Ingest initial data into the database, ensuring that the data is consistent and correctly structured.

- API Development: If the database is going to be accessed by external applications, develop APIs to facilitate interaction with the database.

- Monitoring and Logging: Implement monitoring and logging to track the database's performance and identify potential issues.
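The initialization and ingestion steps above might look roughly like the following sketch, which validates rows and loads them in batches with one transaction per batch. It uses Python's built-in `sqlite3` module; the `readings` table, the validation rule, and the `batched`, `is_valid`, and `ingest` helpers are assumptions made for illustration.

```python
import sqlite3
from itertools import islice


def batched(iterable, size):
    """Yield lists of at most `size` items from `iterable`."""
    iterator = iter(iterable)
    while chunk := list(islice(iterator, size)):
        yield chunk


def is_valid(row):
    """Accept rows with a non-empty sensor id and a numeric reading."""
    sensor_id, reading = row
    return (
        isinstance(sensor_id, str)
        and sensor_id != ""
        and isinstance(reading, (int, float))
    )


def ingest(connection, rows, batch_size=500):
    """Insert valid rows batch by batch; return (inserted, rejected) counts."""
    inserted = rejected = 0
    for chunk in batched(rows, batch_size):
        good = [row for row in chunk if is_valid(row)]
        rejected += len(chunk) - len(good)
        with connection:  # commits the batch, or rolls it back on error
            connection.executemany(
                "INSERT INTO readings (sensor_id, reading) VALUES (?, ?)", good
            )
        inserted += len(good)
    return inserted, rejected


# Initialization: create the table, then load sample data with two bad rows.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE readings (sensor_id TEXT NOT NULL, reading REAL NOT NULL)"
)
sample = [(f"sensor-{i % 10}", i * 0.5) for i in range(1200)]
sample += [("", 1.0), ("sensor-1", None)]
inserted, rejected = ingest(conn, sample)
print(inserted, rejected)
```

Counting rejected rows instead of silently dropping them gives monitoring something to report on.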

Securing the Database

Security is paramount for a large database. Implement the following security measures:

- Access Control: Set up user roles and permissions, and limit access based on the principle of least privilege.

- Data Encryption: Encrypt data at rest and in transit to protect it from unauthorized access.

- Security Patching: Regularly update the database system and associated software to patch security vulnerabilities.

- Auditing and Compliance: Implement auditing mechanisms to track access and changes to the data. Ensure compliance with relevant regulations.
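Least-privilege access control and auditing, as described above, can be sketched at the application level in a few lines. The role names, the permission sets, and the in-memory `audit_log` are hypothetical; real database systems provide their own role and audit facilities, and an audit trail would be written to durable, tamper-resistant storage.

```python
from datetime import datetime, timezone

# Each role gets only the actions it needs (illustrative assumption).
ROLE_PERMISSIONS = {
    "analyst": frozenset({"select"}),
    "engineer": frozenset({"select", "insert", "update"}),
    "admin": frozenset({"select", "insert", "update", "delete"}),
}

audit_log = []  # stand-in for a durable audit store


def authorize(user, role, action, table):
    """Check a request against the role's permissions and record the attempt."""
    allowed = action in ROLE_PERMISSIONS.get(role, frozenset())
    audit_log.append(
        {
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "table": table,
            "allowed": allowed,
        }
    )
    return allowed


read_ok = authorize("dana", "analyst", "select", "orders")
delete_ok = authorize("dana", "analyst", "delete", "orders")
print(read_ok, delete_ok, len(audit_log))
```

Unknown roles fall through to an empty permission set, so the default is to deny.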

Scaling and Performance Optimization

As the database grows, scaling and performance optimization become crucial:

- Vertical Scaling: Increase the resources (CPU, RAM, storage) of individual database servers.

- Horizontal Scaling: Distribute the data across multiple servers with sharding, and use replication to spread read load across copies.

- Query Optimization: Analyze and optimize frequently executed queries to improve performance.

- Caching: Implement caching mechanisms to reduce the load on the database for frequently accessed data.
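Two of the techniques above, sharding and caching, can be sketched with Python's standard library. The shard names and the `shard_for`, `load_profile`, and `get_profile` functions are hypothetical, and `load_profile` merely simulates a database read. Hash-modulo routing is the simplest scheme, not the only one.

```python
import hashlib
from functools import lru_cache

# Hypothetical shard names; a real deployment would map these to servers.
SHARDS = ("db-shard-0", "db-shard-1", "db-shard-2", "db-shard-3")


def shard_for(key):
    """Route a key to a shard using a stable hash (same key, same shard)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return SHARDS[int.from_bytes(digest[:8], "big") % len(SHARDS)]


backend_reads = 0  # counts simulated database reads


def load_profile(user_id):
    """Simulate an expensive read from the shard that owns the key."""
    global backend_reads
    backend_reads += 1
    return f"profile for {user_id} from {shard_for(user_id)}"


@lru_cache(maxsize=1024)
def get_profile(user_id):
    """Serve repeated lookups from an in-process cache."""
    return load_profile(user_id)


first = get_profile("user:42")
second = get_profile("user:42")  # answered by the cache, no backend read
print(first)
print(get_profile.cache_info())
```

With modulo routing, changing the number of shards remaps most keys, which is why larger systems often use consistent hashing instead. A cache also needs an invalidation strategy once the underlying data can change.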

Conclusion

Building a large database on the internet entails a multi-faceted approach, encompassing technology selection, schema design, infrastructure setup, development, security, and ongoing maintenance. By carefully considering the requirements and following best practices, a robust and scalable database can be established to meet the needs of diverse applications and organizations. Remember that the process does not end with the database construction; ongoing monitoring, maintenance, and adaptation to changing requirements are essential for the long-term success of the database.

1 year ago