What are the characteristics of big data analysis? (English essay)

Big data analysis refers to the process of examining large and varied data sets to uncover hidden patterns, unknown correlations, market trends, customer preferences, and other useful information that can help organizations make more informed decisions. There are several key characteristics that define big data analysis:

- Volume: Big data analysis deals with vast amounts of data, often ranging from terabytes to petabytes in size. This sheer volume requires specialized tools and technologies to store, process, and analyze.

- Velocity: Data is generated at an unprecedented speed in today's digital world. Big data analysis must be able to process and analyze data in real time or near real time to derive insights quickly.

- Variety: Big data comes in various forms, including structured data (e.g., databases), unstructured data (e.g., text, images, videos), and semi-structured data (e.g., XML, JSON). Big data analysis tools must be able to handle this variety of data types.

- Veracity: Big data is often noisy, incomplete, or inconsistent. Ensuring the accuracy and reliability of the data used for analysis is crucial to obtaining meaningful insights.

- Value: The ultimate goal of big data analysis is to extract value from data to drive better decision-making, improve operational efficiency, enhance customer experiences, and gain a competitive edge in the market.

In conclusion, big data analysis is characterized by its volume, velocity, variety, veracity, and value. By leveraging advanced analytics techniques and technologies, organizations can harness the power of big data to gain valuable insights and drive business success.

1 year ago

Big data analytics has changed how businesses and organizations operate, providing insights that drive decision-making, optimize operations, and foster innovation. Several characteristics distinguish it from traditional data analysis, and understanding them is essential for using it well. The key features and their implications are described below.

One of the primary characteristics of big data analytics is volume. The sheer amount of data generated daily is staggering, coming from various sources such as social media, sensors, transaction records, and more. This massive volume of data requires advanced storage solutions and sophisticated processing techniques to handle effectively. Traditional data management tools often fall short in managing such vast quantities, necessitating the use of distributed storage systems like Hadoop Distributed File System (HDFS) and cloud-based solutions that can scale dynamically with data growth.
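The idea behind distributed processing can be sketched on a single machine: split the data into chunks, summarize each chunk independently, then merge the partial results. The following is only a minimal illustration of that map-and-reduce pattern, not how HDFS or Hadoop is implemented. The helper names (`chunked`, `map_chunk`, `reduce_counts`) and the sample records are assumptions made for the example.

```python
from collections import Counter
from functools import reduce


def chunked(records, size):
    """Yield successive slices of `records` with at most `size` items."""
    for start in range(0, len(records), size):
        yield records[start:start + size]


def map_chunk(chunk):
    """Map step: count events per source within one chunk."""
    return Counter(source for source, _payload in chunk)


def reduce_counts(left, right):
    """Reduce step: merge two partial counts."""
    return left + right


# Hypothetical records: (source, payload) pairs.
records = [
    ("social", "post"), ("sensor", "21.5"), ("sensor", "21.7"),
    ("transactions", "order-1"), ("social", "like"), ("sensor", "21.6"),
]

partials = [map_chunk(chunk) for chunk in chunked(records, size=2)]
totals = reduce(reduce_counts, partials, Counter())
print(dict(totals))
```

Because each chunk is summarized without looking at the others, the map step could in principle run on separate machines, which is what makes this pattern attractive for large volumes.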

Velocity is another defining feature. Data is generated at an unprecedented speed, requiring real-time or near-real-time processing to extract meaningful insights. This continuous influx of data demands robust processing frameworks that can handle streams of information with low latency. Technologies such as Apache Kafka and Apache Storm are designed for high-velocity data, enabling businesses to make timely decisions and respond swiftly to market changes or operational issues.
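A common building block in stream processing is the sliding window: instead of storing every event, the system keeps only the events from the last few seconds and updates its answer as each new event arrives. The sketch below shows the idea in plain Python. It is not the Kafka or Storm API; the class name `SlidingWindowCounter` and the event times are assumptions, and events are assumed to arrive in time order.

```python
from collections import deque


class SlidingWindowCounter:
    """Count events seen within the last `window_seconds` seconds."""

    def __init__(self, window_seconds):
        self.window_seconds = window_seconds
        self.timestamps = deque()

    def add(self, timestamp):
        """Record an event and return how many events are still in the window."""
        self.timestamps.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Drop events that have fallen out of the window.
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()
        return len(self.timestamps)


counter = SlidingWindowCounter(window_seconds=10)
# Hypothetical event times in seconds, assumed to arrive in order.
counts = [counter.add(t) for t in [0, 3, 4, 12, 13, 25]]
print(counts)  # [1, 2, 3, 3, 3, 1]
```

Each call does a small, bounded amount of work, so the count stays current without reprocessing the whole history.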

Variety refers to the different types of data that are now available for analysis. Unlike traditional data, which was mostly structured and stored in relational databases, big data comes in multiple formats, including structured, semi-structured, and unstructured data. This variety includes text, images, videos, sensor data, and more. Tools like NoSQL databases (e.g., MongoDB, Cassandra) and big data processing frameworks (e.g., Apache Spark) are essential for integrating and analyzing diverse data types, providing a more comprehensive view of the information landscape.
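Handling variety usually starts with mapping each input format onto one common record shape so that later steps can treat all sources alike. The following sketch does this for one structured source (CSV) and one semi-structured source (JSON) using only the Python standard library. The field names and sample data are hypothetical.

```python
import csv
import io
import json

# Hypothetical inputs: one structured (CSV) and one semi-structured (JSON) source.
csv_text = "user_id,amount\n1,9.50\n2,20.00\n"
json_text = '[{"user": {"id": 3}, "amount": "5.25", "tags": ["promo"]}]'


def from_csv(text):
    """Turn CSV rows into the common record shape."""
    for row in csv.DictReader(io.StringIO(text)):
        yield {"user_id": int(row["user_id"]), "amount": float(row["amount"]), "tags": []}


def from_json(text):
    """Flatten nested JSON objects into the same record shape."""
    for item in json.loads(text):
        yield {
            "user_id": int(item["user"]["id"]),
            "amount": float(item["amount"]),
            "tags": list(item.get("tags", [])),
        }


records = list(from_csv(csv_text)) + list(from_json(json_text))
total = sum(record["amount"] for record in records)
print(len(records), total)
```

Unstructured data such as images or free text needs a further extraction step before it fits a shape like this, which is outside the scope of this sketch.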

Veracity pertains to the trustworthiness and quality of the data. With the explosion of data sources, ensuring data accuracy and reliability becomes a significant challenge. Inaccurate data can lead to faulty analyses and poor decision-making. Techniques such as data cleaning, validation, and real-time monitoring are crucial to maintain high data quality. Moreover, advanced machine learning algorithms can help identify and correct anomalies, enhancing the overall veracity of the data being analyzed.
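Data cleaning and validation can be as simple as a set of explicit rules applied to every record, with rejected rows kept alongside a reason so they can be reviewed later. The sketch below is one minimal way to do that. The rules (required fields, unique ids, a plausible temperature range) and the sample readings are assumptions chosen for illustration.

```python
def clean(records):
    """Split records into valid rows and rejected rows with a reason."""
    valid, rejected, seen_ids = [], [], set()
    for record in records:
        record_id = record.get("id")
        temperature = record.get("temperature")
        if record_id is None or temperature is None:
            rejected.append((record, "missing field"))
        elif record_id in seen_ids:
            rejected.append((record, "duplicate id"))
        elif not -50.0 <= temperature <= 60.0:  # assumed plausible range, in Celsius
            rejected.append((record, "out of range"))
        else:
            seen_ids.add(record_id)
            valid.append(record)
    return valid, rejected


# Hypothetical sensor readings.
readings = [
    {"id": 1, "temperature": 21.5},
    {"id": 2, "temperature": None},
    {"id": 1, "temperature": 21.5},
    {"id": 3, "temperature": 999.0},
    {"id": 4, "temperature": 19.0},
]
valid, rejected = clean(readings)
print(len(valid), [reason for _record, reason in rejected])
```

Keeping the rejection reasons makes it possible to monitor data quality over time rather than silently dropping bad rows.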

Value is the ultimate goal of big data analytics. The purpose of collecting, processing, and analyzing large volumes of data is to derive actionable insights that drive business value. This could be in the form of improved customer experience, increased operational efficiency, better risk management, or new revenue opportunities. Data analytics helps organizations uncover hidden patterns, correlations, and trends that were previously invisible, enabling them to make informed decisions and stay competitive in their respective industries.

Scalability is a critical feature due to the growing nature of big data. Analytical systems must be able to scale up or down seamlessly as data volumes increase or decrease. This scalability is often achieved through cloud computing platforms and distributed computing frameworks that can handle varying data loads without compromising performance. Scalability ensures that businesses can continue to derive insights from their data regardless of its size.

Big data analytics also depends on advanced analytical techniques. Traditional statistical methods alone often do not scale to massive, heterogeneous datasets, so big data analytics also draws on machine learning, artificial intelligence, and other advanced algorithms. These techniques support predictive analytics, anomaly detection, and pattern recognition on data that would be impractical to examine by hand.
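Anomaly detection can be illustrated with a very simple statistical rule: flag any value that lies more than a chosen number of standard deviations from the mean. This z-score check is a basic stand-in for the machine learning methods mentioned above, not an example of them. The threshold of 2.0 and the sample amounts are assumptions.

```python
from statistics import mean, pstdev


def find_anomalies(values, threshold=2.0):
    """Return values whose absolute z-score exceeds `threshold`."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [v for v in values if abs(v - mu) / sigma > threshold]


# Hypothetical daily transaction amounts with one unusual value.
amounts = [10, 12, 11, 13, 12, 11, 10, 95]
print(find_anomalies(amounts))  # [95]
```

A single extreme value inflates both the mean and the standard deviation, so on real data a more robust rule (for example one based on the median) is often preferable.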

Data privacy and security are paramount in big data analytics. With large volumes of sensitive information being processed, ensuring data protection is crucial. Regulations like the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) impose strict requirements on how personal data is handled and protected. Implementing robust security measures such as encryption, access controls, and audit trails is essential to safeguard data against breaches and unauthorized access.
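One protective measure that fits well into an analytics pipeline is pseudonymization: replacing a direct identifier with a keyed hash before the data reaches analysts. The sketch below uses HMAC with SHA-256 from the Python standard library. The key value and record fields are hypothetical, and this is one technique among many rather than a compliance solution in itself, since pseudonymized data can still count as personal data.

```python
import hashlib
import hmac


def pseudonymize(value, secret_key):
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical key; in practice it would come from a secrets manager, not source code.
SECRET_KEY = b"example-key-do-not-use"

record = {"email": "alice@example.com", "purchase_total": 42.0}
safe_record = {
    "user_token": pseudonymize(record["email"], SECRET_KEY),
    "purchase_total": record["purchase_total"],
}
print(safe_record)
```

The same email always maps to the same token, so records can still be grouped per user, while someone without the key cannot easily recompute the mapping.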

Big data analytics fosters innovation by providing a sandbox for experimentation and hypothesis testing. Businesses can simulate different scenarios, test hypotheses, and measure outcomes using big data. This iterative process enables organizations to innovate continuously, adapt to changes, and develop new products or services that meet evolving market demands.

Collaboration is significantly enhanced through big data analytics. The ability to share data and insights across departments and with external partners fosters a collaborative environment. Data democratization ensures that relevant information is accessible to all stakeholders, facilitating better coordination and decision-making. Platforms that support data sharing and collaborative analytics play a crucial role in breaking down silos and promoting a data-driven culture.

Another notable characteristic is the integration capability of big data analytics. Modern businesses operate on a plethora of systems and platforms, each generating its own set of data. Integrating these disparate data sources to provide a unified view is essential for comprehensive analysis. Big data tools and technologies facilitate this integration, enabling seamless data flow across different systems and enhancing the accuracy of insights derived from the analysis.

Real-time analytics is increasingly becoming a necessity in today's fast-paced environment. The ability to analyze data as it is generated allows businesses to respond to opportunities and threats immediately. Real-time analytics supports use cases such as fraud detection, personalized marketing, and dynamic pricing, where timely insights are crucial. Technologies like stream processing and real-time data warehouses enable organizations to achieve real-time analytics, providing a competitive edge.

Cost efficiency is another important aspect. Traditional data processing methods can be expensive and resource-intensive, especially with large data volumes. Big data analytics offers more cost-effective solutions through distributed computing and cloud-based services. These technologies reduce the need for expensive hardware and allow for pay-as-you-go models, making it more affordable for businesses of all sizes to leverage big data.

Finally, big data analytics drives better decision-making. The insights gained from analyzing large and complex datasets empower decision-makers with a deeper understanding of their operations, market trends, and customer behavior. This informed decision-making leads to improved strategies, optimized processes, and enhanced performance across various business functions.

In summary, big data analytics is characterized by volume, velocity, variety, veracity, value, scalability, advanced analytical techniques, data privacy and security, innovation, collaboration, integration capability, real-time analytics, cost efficiency, and improved decision-making. Together, these features let organizations harness the potential of big data to drive growth, efficiency, and competitive advantage. Understanding and applying them is crucial for any organization that wants to thrive in a data-driven business landscape.

1 year ago

Characteristics of Big Data Analysis

Big Data analysis has become a pivotal tool in modern business and research, allowing organizations to harness vast amounts of data for actionable insights. In this essay, we will explore the characteristics of Big Data analysis, detailing the methods, operational processes, and various aspects that define this crucial field. With a structured approach, we will delve into the following topics:

- Introduction to Big Data Analysis

- Characteristics of Big Data

- Methods of Big Data Analysis

- Operational Processes in Big Data Analysis

- Challenges and Solutions in Big Data Analysis

- Applications of Big Data Analysis

- Future Trends in Big Data Analysis

- Conclusion

1. Introduction to Big Data Analysis

Big Data analysis refers to the process of examining large and varied datasets to uncover hidden patterns, unknown correlations, market trends, customer preferences, and other useful business information. This analysis helps organizations make more informed decisions, optimize operations, and enhance their competitive edge. The volume, variety, and velocity of data have significantly increased with the advent of digital technologies, making Big Data analysis more relevant than ever.

2. Characteristics of Big Data

Big Data is commonly described by the "3 Vs" (Volume, Variety, and Velocity), often extended with two more: Veracity and Value.

Volume

The sheer amount of data generated every second is enormous. From social media interactions to IoT sensor data, the volume of data that needs to be analyzed is constantly growing. Big Data tools and technologies are designed to handle this massive scale.

Variety

Data comes in various forms: structured, semi-structured, and unstructured. Structured data is highly organized and easily searchable, while unstructured data (such as videos, images, and social media posts) requires more sophisticated processing to be useful.

Velocity

The speed at which data is generated and needs to be processed is another critical factor. Real-time data processing allows businesses to respond quickly to market changes and emerging trends.

Veracity

Data accuracy and reliability are crucial for meaningful analysis. As data volumes grow, ensuring veracity, meaning the authenticity and trustworthiness of the data, becomes increasingly difficult.

Value

The ultimate goal of Big Data analysis is to derive value. This involves turning large volumes of data into actionable insights that can drive business decisions and strategies.

3. Methods of Big Data Analysis

Various methods and techniques are employed in Big Data analysis to extract meaningful insights. These include:

Descriptive Analytics

This method focuses on summarizing past data to understand what has happened. It uses techniques such as data aggregation and data mining to provide insights into historical data trends.
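Data aggregation, the core of descriptive analytics, amounts to grouping records and computing summary statistics per group. The following is a minimal sketch with the Python standard library. The regions and revenue figures are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical historical sales records: (region, revenue).
sales = [("north", 120.0), ("south", 80.0), ("north", 100.0), ("south", 60.0), ("east", 50.0)]

by_region = defaultdict(list)
for region, revenue in sales:
    by_region[region].append(revenue)

summary = {
    region: {"count": len(values), "total": sum(values), "average": mean(values)}
    for region, values in by_region.items()
}
for region, stats in sorted(summary.items()):
    print(region, stats)
```

At larger scale the same group-and-summarize step is typically expressed as a SQL `GROUP BY` or a dataframe aggregation.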

Diagnostic Analytics

This goes a step further to determine why something happened. By examining data more closely, it helps identify root causes and contributing factors to past outcomes.

Predictive Analytics

Predictive analytics uses statistical models and machine learning techniques to forecast future outcomes based on historical data. This is particularly useful for anticipating trends and behaviors.
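The simplest statistical model of this kind is a straight line fitted to historical data and extended one step into the future. The sketch below fits the line by ordinary least squares and forecasts the next period. The monthly sales figures are hypothetical, and real forecasting would also need to validate the model on held-out data.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical history: month number and units sold.
months = [1, 2, 3, 4, 5]
units = [100, 110, 120, 130, 140]

slope, intercept = fit_line(months, units)
forecast = slope * 6 + intercept  # predicted units for month 6
print(round(forecast, 2))  # 150.0
```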

Prescriptive Analytics

This method suggests actions that can help achieve desired outcomes. It uses advanced algorithms and optimization techniques to recommend the best course of action based on predictive insights.
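A small example shows how a prediction turns into a recommendation: given a model of how demand responds to price, evaluate each candidate price and recommend the one with the highest predicted profit. The linear demand model, the unit cost, and the candidate prices below are all assumptions made for illustration. A real system would use a fitted model and a proper optimizer.

```python
def predicted_demand(price):
    """Assumed demand model: units sold fall linearly as price rises."""
    return max(0.0, 200.0 - 8.0 * price)


def profit(price, unit_cost=5.0):
    """Predicted profit at a given price under the assumed demand model."""
    return (price - unit_cost) * predicted_demand(price)


# Candidate actions: whole-number prices from 5 to 25.
candidates = range(5, 26)
best_price = max(candidates, key=profit)
print(best_price, profit(best_price))  # 15 800.0
```

The recommendation is only as good as the demand model behind it, which is why prescriptive analytics is usually built on top of validated predictive models.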

Real-Time Analytics

With the rise of IoT and streaming data, real-time analytics has become essential. It involves processing and analyzing data as it is generated, enabling immediate decision-making.

4. Operational Processes in Big Data Analysis

The process of Big Data analysis involves several key steps, each critical for transforming raw data into valuable insights.

Data Collection

The first step involves gathering data from various sources such as databases, data lakes, sensors, social media, and transactional systems. This data can be structured, semi-structured, or unstructured.

Data Storage

Once collected, data needs to be stored efficiently. Technologies like Hadoop, NoSQL databases, and cloud storage solutions are commonly used to handle large volumes of data.

Data Processing

Data processing involves cleaning, transforming, and preparing data for analysis. This step is crucial for ensuring data quality and consistency. Techniques such as ETL (Extract, Transform, Load) and data wrangling are commonly used.
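The three ETL stages can be shown end to end in a few lines. The sketch below extracts rows from a CSV export, transforms them by trimming whitespace, normalizing case, and dropping rows without an amount, and loads the result into an in-memory SQLite table. The column names and sample rows are hypothetical.

```python
import csv
import io
import sqlite3

# Extract: hypothetical raw export with inconsistent formatting.
raw = "name,city,amount\n Alice ,paris,10.5\nBob,PARIS,\ncarol,Berlin,7\n"
rows = list(csv.DictReader(io.StringIO(raw)))

# Transform: trim whitespace, normalize case, drop rows without an amount.
cleaned = [
    (row["name"].strip().title(), row["city"].strip().title(), float(row["amount"]))
    for row in rows
    if row["amount"].strip()
]

# Load: write the cleaned rows into an in-memory SQLite table.
connection = sqlite3.connect(":memory:")
connection.execute("CREATE TABLE orders (name TEXT, city TEXT, amount REAL)")
connection.executemany("INSERT INTO orders VALUES (?, ?, ?)", cleaned)

totals = connection.execute(
    "SELECT city, SUM(amount) FROM orders GROUP BY city ORDER BY city"
).fetchall()
print(totals)
connection.close()
```

Once the data is loaded in a consistent form, the analysis step can rely on simple queries instead of repeating the cleanup logic.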

Data Analysis

This is the core step where various analytical methods (descriptive, diagnostic, predictive, prescriptive) are applied to extract insights from the processed data. Tools like Apache Spark, R, Python, and machine learning frameworks are widely used.

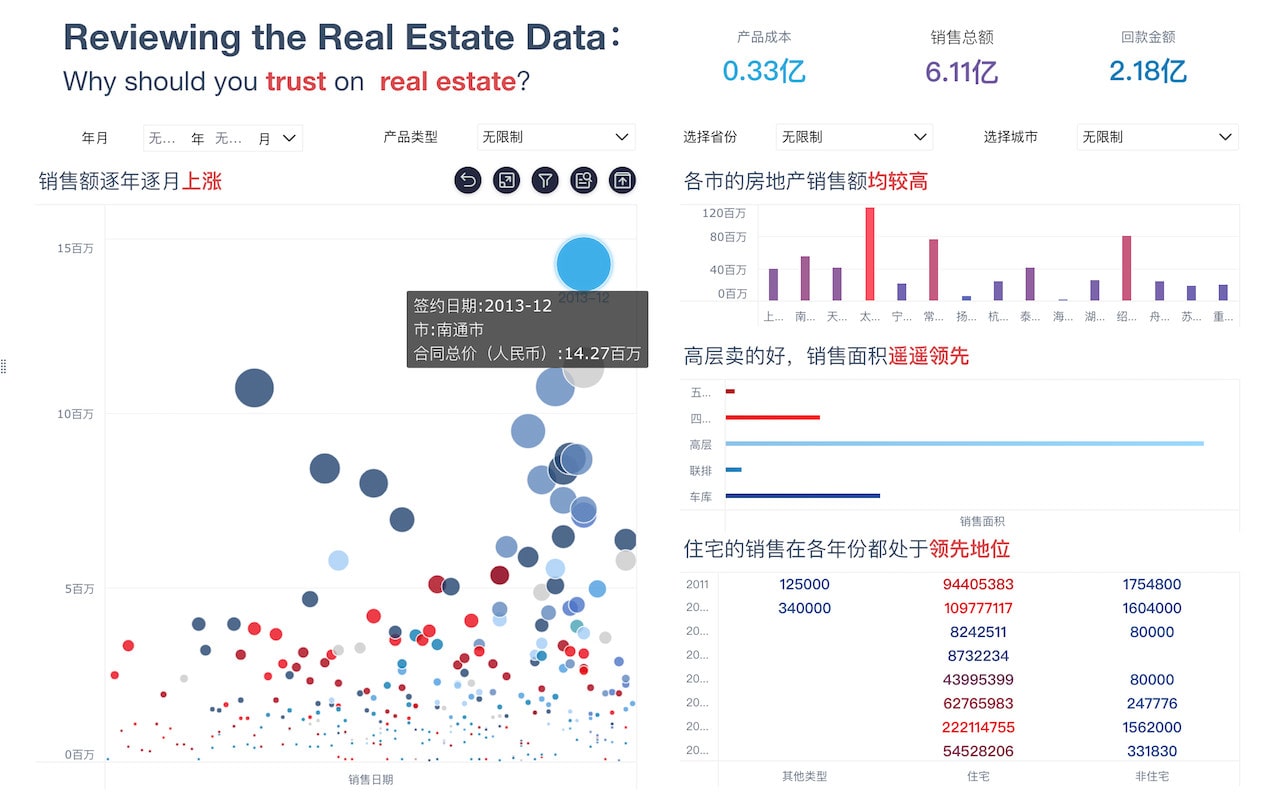

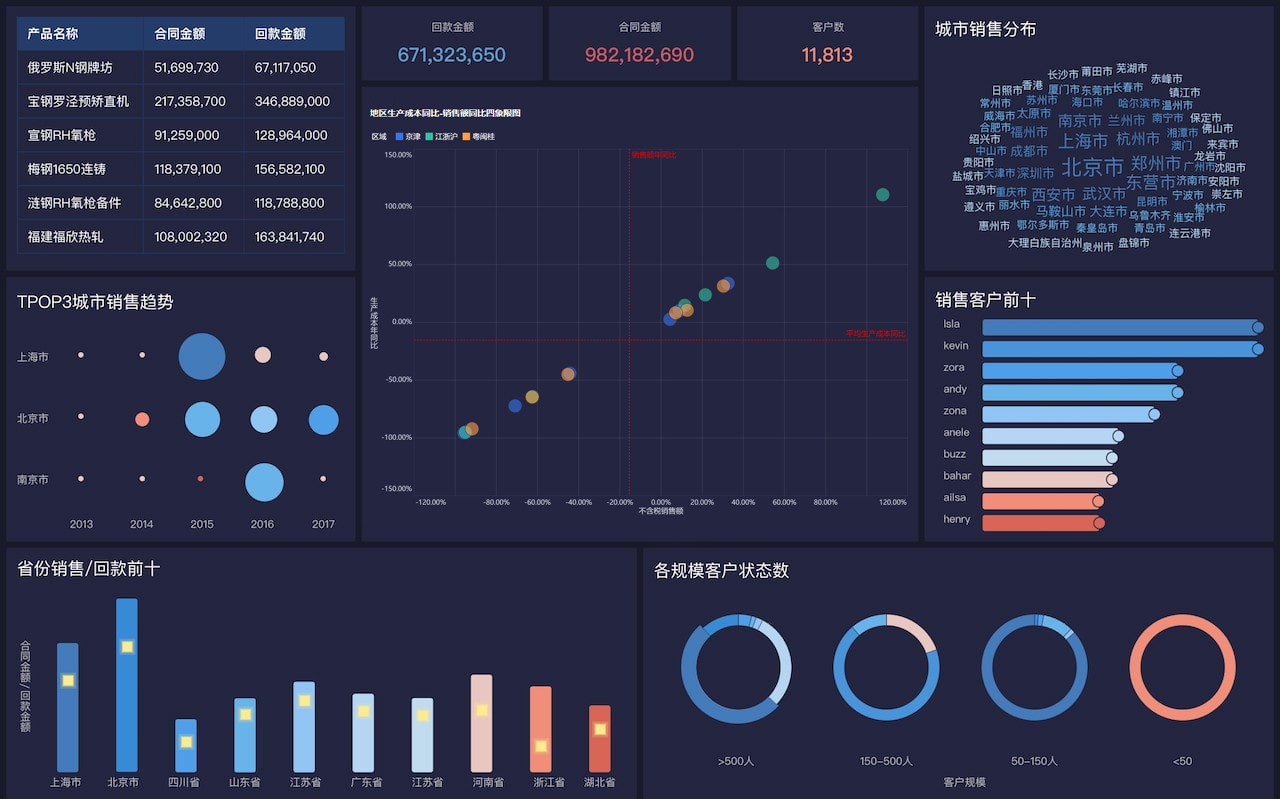

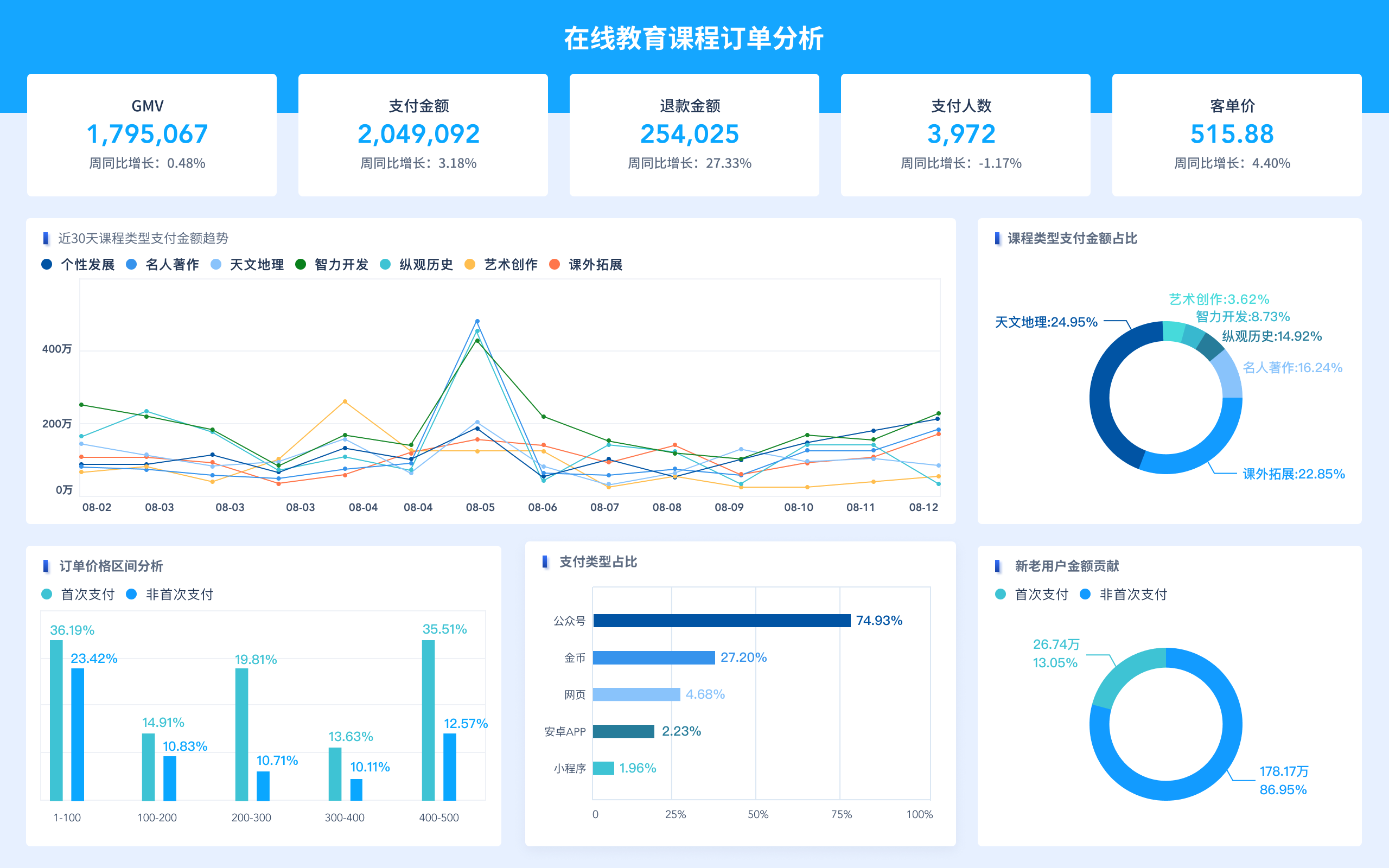

Data Visualization

The final step involves presenting the analysis results in a comprehensible format. Data visualization tools like Tableau, Power BI, and D3.js help create interactive and intuitive visualizations that make it easier to interpret data insights.

5. Challenges and Solutions in Big Data Analysis

Despite its advantages, Big Data analysis comes with several challenges that need to be addressed.

Data Quality and Integration

Ensuring high data quality and integrating data from diverse sources can be complex. Implementing robust data governance and using advanced data integration tools can help mitigate these issues.

Scalability

Handling the exponential growth of data requires scalable solutions. Cloud-based platforms and distributed computing frameworks like Apache Hadoop and Apache Spark provide the necessary scalability.

Data Privacy and Security

With the increasing amount of sensitive data, ensuring data privacy and security is paramount. Strong encryption, access controls, and compliance with data protection regulations such as GDPR are essential.

Skilled Workforce

There is a significant demand for skilled data scientists and analysts. Investing in training and development programs can help bridge this skills gap.

6. Applications of Big Data Analysis

Big Data analysis has a wide range of applications across various industries:

Healthcare

In healthcare, Big Data analysis is used for predictive analytics to improve patient outcomes, optimize hospital operations, and support personalized medicine.

Finance

Financial institutions use Big Data for fraud detection, risk management, customer segmentation, and personalized financial services.

Retail

Retailers leverage Big Data to enhance customer experience, optimize inventory management, and develop targeted marketing campaigns.

Manufacturing

In manufacturing, Big Data helps in predictive maintenance, quality control, and optimizing supply chain operations.

Transportation

Big Data is used in transportation for route optimization, predictive maintenance of vehicles, and improving logistics efficiency.

Government

Governments use Big Data for improving public services, monitoring social trends, and enhancing security and law enforcement efforts.

7. Future Trends in Big Data Analysis

The field of Big Data analysis is continually evolving. Some future trends include:

Artificial Intelligence and Machine Learning

AI and ML will play an increasingly significant role in automating data analysis processes and providing more accurate predictions and recommendations.

Edge Computing

With the proliferation of IoT devices, edge computing will become more important, enabling real-time data processing closer to the source.

Data Privacy Enhancements

As data privacy concerns grow, advancements in techniques like differential privacy and federated learning will help protect sensitive data.
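Differential privacy can be illustrated with its best-known building block, the Laplace mechanism: add random noise to a query result so that the presence or absence of any single person changes the output distribution only slightly. The sketch below applies it to a counting query, whose sensitivity is 1, so the noise scale is `1 / epsilon`. The data, the value of `epsilon`, and the function names are assumptions, and a production system would need a secure random source and careful handling of floating-point effects.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon, rng):
    """Return a noisy count; a counting query has sensitivity 1, so scale = 1 / epsilon."""
    true_count = sum(1 for value in values if predicate(value))
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)  # fixed seed only so the example is repeatable
ages = [23, 35, 41, 29, 52, 60, 38]  # hypothetical data
noisy = private_count(ages, lambda age: age >= 40, epsilon=0.5, rng=rng)
print(round(noisy, 2))
```

A smaller `epsilon` means more noise and stronger privacy, while a larger one gives more accurate answers at the cost of weaker guarantees.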

Increased Adoption of Cloud-Based Solutions

Cloud platforms will continue to be a popular choice for Big Data storage and processing, offering scalability and flexibility.

Advanced Data Visualization

Interactive and immersive data visualization techniques, such as augmented reality (AR) and virtual reality (VR), will enhance data interpretation.

8. Conclusion

Big Data analysis is a powerful tool that can drive significant business value and innovation across various sectors. Understanding its characteristics, methods, and operational processes is crucial for effectively leveraging Big Data. While challenges exist, advancements in technology and methodologies are continually improving the field. As Big Data continues to grow, staying abreast of future trends will be essential for organizations aiming to maintain a competitive edge.

In summary, Big Data analysis offers immense potential, but harnessing it requires a comprehensive understanding of its complexities and a strategic approach to overcome its challenges. By doing so, organizations can unlock new opportunities and drive forward their strategic goals with data-driven insights.

1 year ago