What should big data analysis be based on? (in English)


Big data analysis is based on a variety of foundational principles and technologies. Here are five key elements that serve as the foundation for big data analysis:

- Data Collection and Storage: Big data analysis relies on collecting and storing large volumes of structured and unstructured data from various sources, such as business transactions, social media, and sensors. Technologies such as Hadoop, distributed file systems, and NoSQL databases are commonly used to store and manage big data.

- Data Processing and Analysis: Big data analysis involves processing and analyzing large datasets to extract valuable insights. MapReduce, Apache Spark, and other distributed computing frameworks are used to process and analyze big data in parallel across many machines. Data processing may involve tasks such as data cleaning, transformation, and statistical analysis.

- Machine Learning and AI: Big data analysis often incorporates machine learning and artificial intelligence techniques to identify patterns, make predictions, and automate decision-making. Machine learning algorithms, including neural networks and deep learning models, are used to make sense of complex and unstructured datasets.

-

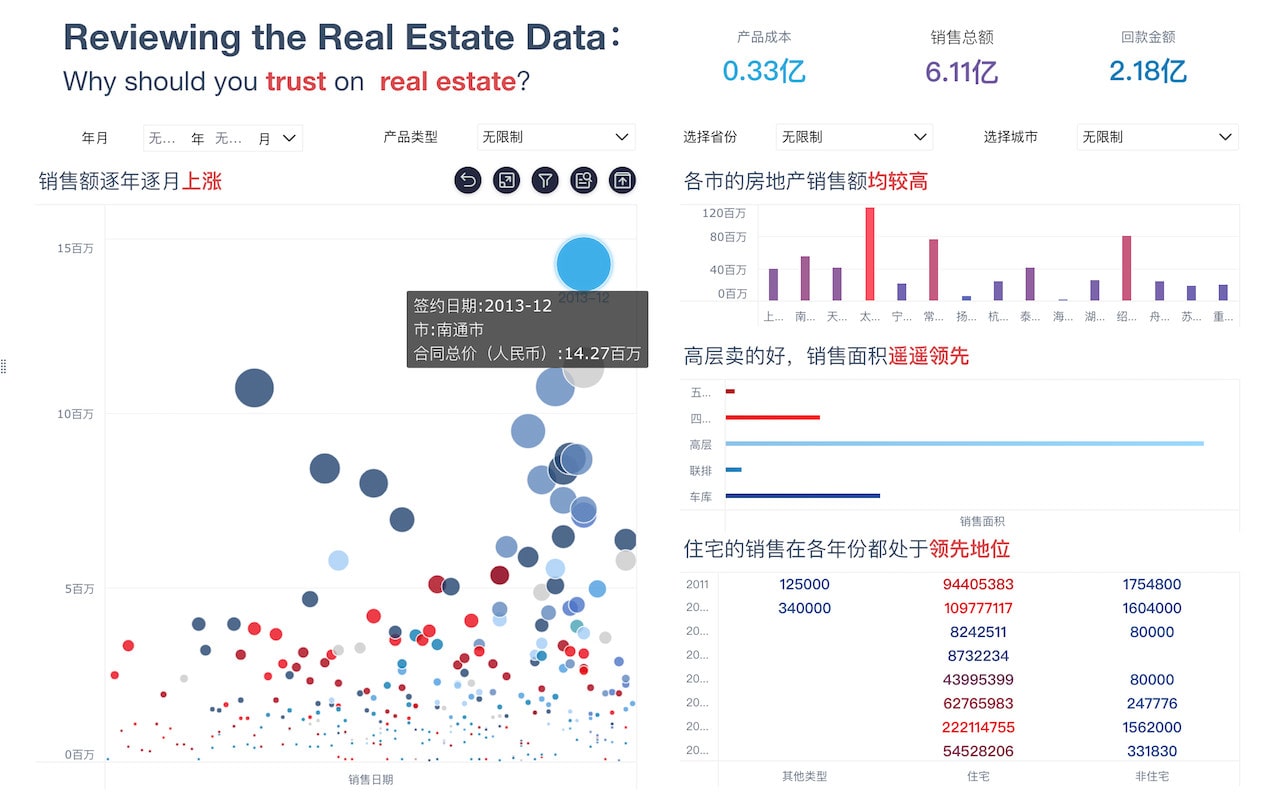

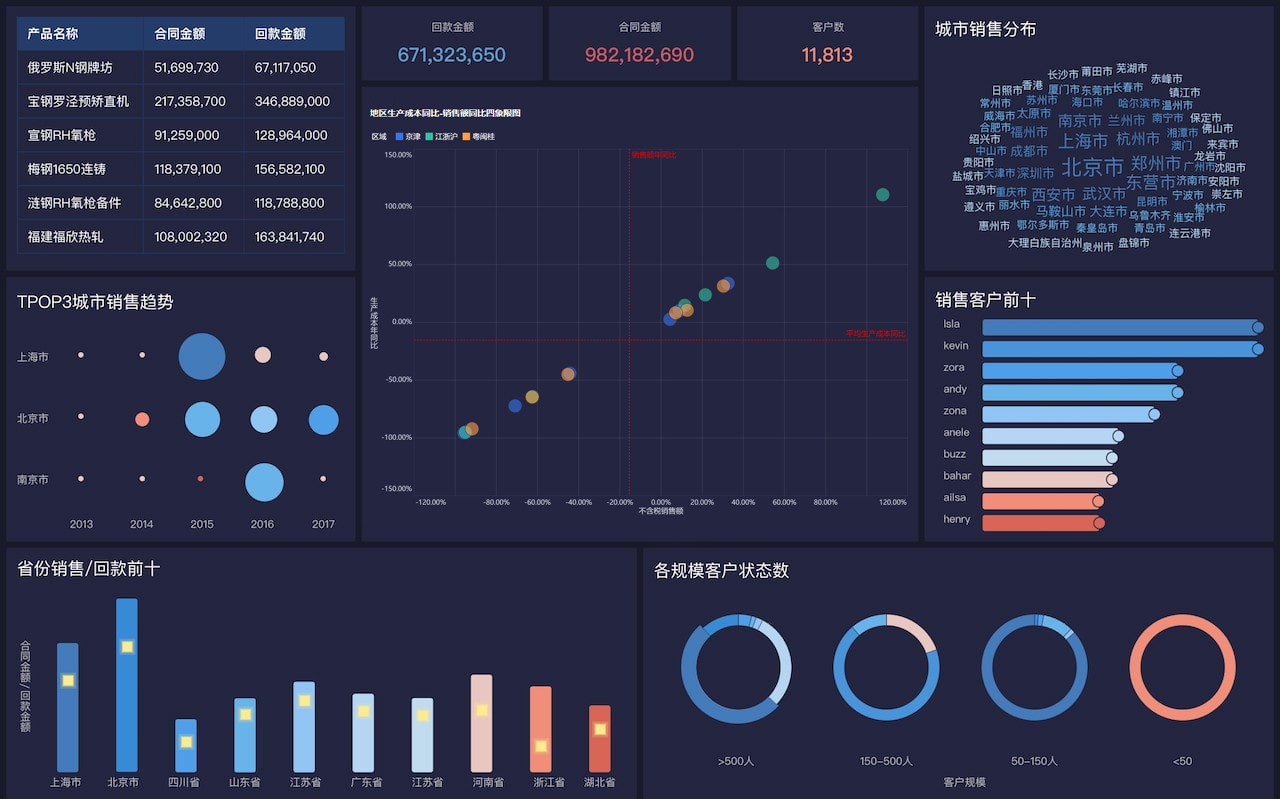

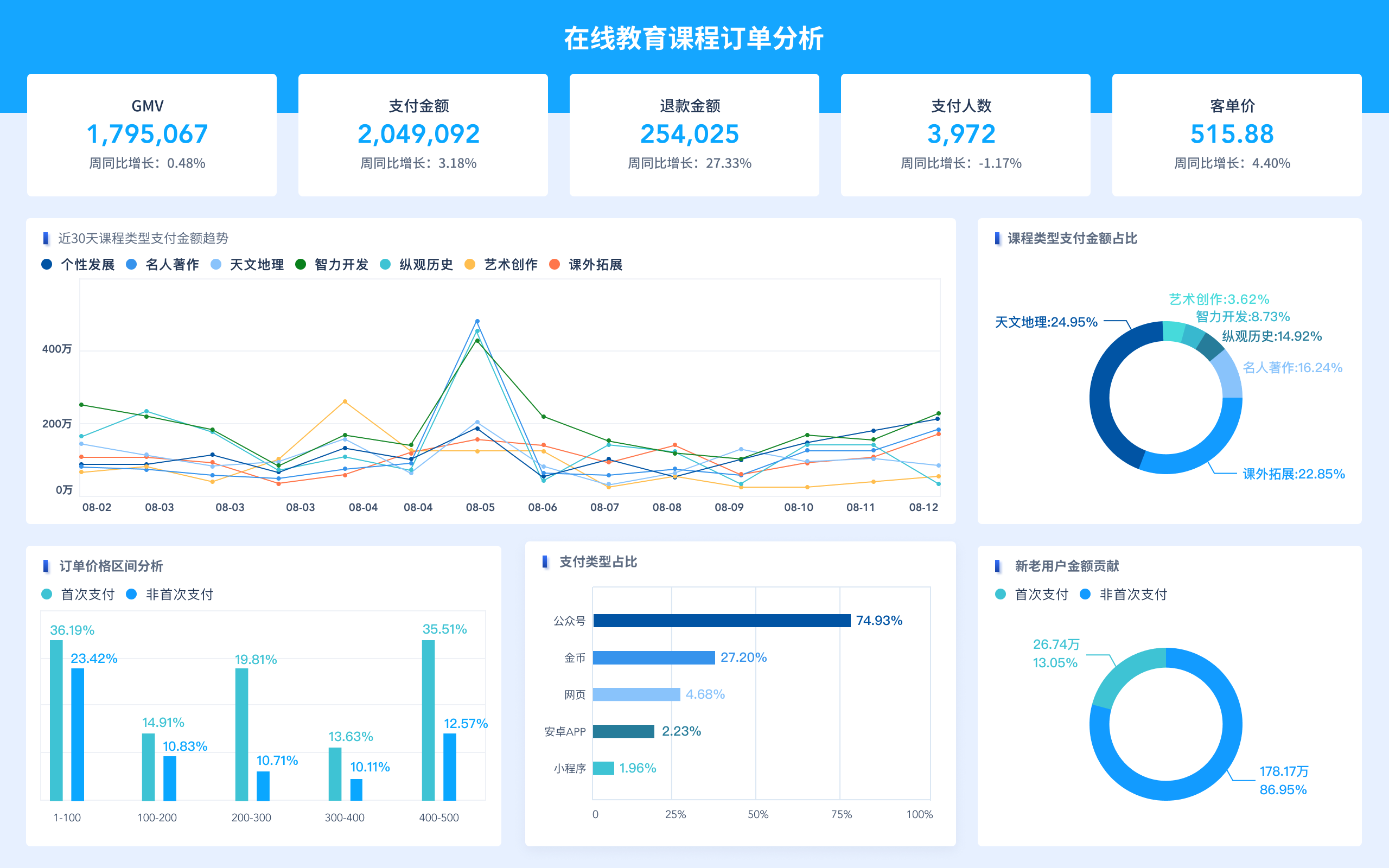

Data Visualization: Visual representation of data is crucial for understanding and communicating insights from big data analysis. Data visualization tools and techniques are used to create interactive and informative visualizations that help users comprehend complex patterns and trends within large datasets.

- Scalable Infrastructure: Big data analysis requires a scalable and distributed infrastructure to handle the volume, velocity, and variety of data. Technologies such as cloud computing, containerization, and scalable storage systems are essential for building a robust infrastructure that can handle the demands of big data analysis.
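The MapReduce model mentioned in the list above splits a job into a map phase, a shuffle (grouping) phase, and a reduce phase. Below is a minimal single-process Python sketch of that model, counting words across documents. The function names are hypothetical, and the sketch assumes the input fits in memory; Hadoop MapReduce or Apache Spark would run the same phases in parallel across a cluster.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(document: str) -> Iterator[Tuple[str, int]]:
    """Map: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle: group every emitted value under its key."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups: Dict[str, List[int]]) -> Dict[str, int]:
    """Reduce: combine the values for each key, here by summing them."""
    return {key: sum(values) for key, values in groups.items()}


def word_count(documents: Iterable[str]) -> Dict[str, int]:
    # On a real cluster, each document (or file block) would be mapped on a
    # different node, and the shuffle would move data over the network.
    pairs = (pair for doc in documents for pair in map_phase(doc))
    return reduce_phase(shuffle_phase(pairs))


if __name__ == "__main__":
    docs = ["big data needs big storage", "data processing at scale"]
    print(word_count(docs))
```

Because each map call looks at only one document and each reduce call looks at only one key, both phases can be parallelized independently, which is what makes the model suitable for very large datasets.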

In conclusion, big data analysis is based on five elements: data collection and storage, data processing and analysis, machine learning, visualization, and scalable infrastructure. Together, they make it possible to turn big data into valuable insights and informed decisions.

1 year ago

Big data analysis is based on three key characteristics of the data, often called the "three Vs": volume, velocity, and variety. Volume refers to the enormous amount of data generated and collected from various sources. Velocity refers to the speed at which data is generated and often needs to be analyzed, sometimes in real time. Variety refers to the different types and formats of data, including structured, unstructured, and semi-structured data. These three characteristics form the foundation for big data analysis and shape how valuable insights are extracted to support informed decisions.
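Velocity changes how analysis is done: results must be updated as data arrives instead of being computed once over a finished dataset. A common pattern for this is a sliding time window. The following is a hypothetical single-machine Python sketch (the class name and the 60-second window are assumptions, not part of any specific streaming product) that keeps a running count and average of the events seen in the last N seconds.

```python
from collections import deque
from typing import Deque, Tuple


class SlidingWindow:
    """Keeps events from the last `window_seconds` and their running sum."""

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self.events: Deque[Tuple[float, float]] = deque()  # (timestamp, value)
        self.total = 0.0

    def add(self, timestamp: float, value: float) -> None:
        # Events are assumed to arrive in timestamp order.
        self.events.append((timestamp, value))
        self.total += value
        self._evict(timestamp)

    def _evict(self, now: float) -> None:
        # Drop events whose timestamp is at or before now - window_seconds.
        while self.events and self.events[0][0] <= now - self.window_seconds:
            _, old_value = self.events.popleft()
            self.total -= old_value

    def count(self) -> int:
        return len(self.events)

    def average(self) -> float:
        return self.total / len(self.events) if self.events else 0.0


if __name__ == "__main__":
    window = SlidingWindow(window_seconds=60)
    for ts, reading in [(0, 10.0), (30, 20.0), (70, 30.0)]:
        window.add(ts, reading)
        print(ts, window.count(), window.average())
```

In this sketch, old events are evicted only when a new event arrives. Real stream processors also have to cope with late or out-of-order data, which is ignored here.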

1 year ago

Big Data Analysis: Foundations and Methods

Introduction

Big data analysis has become an essential part of modern business and scientific research. It involves the use of advanced tools and techniques to analyze and interpret large and complex data sets. In this article, we will explore the foundations and methods that form the basis of big data analysis.

Foundations of Big Data Analysis

Big data analysis is built on several key foundations that provide the groundwork for the methods and techniques used in the process. These foundations include:

- Data Collection: The first step in big data analysis is the collection of large volumes of data from various sources such as sensors, social media, web logs, and more. This data can be structured, semi-structured, or unstructured.

- Storage and Management: Big data requires specialized storage and management solutions to handle the massive volumes of data. Technologies such as Hadoop Distributed File System (HDFS) and NoSQL databases are commonly used for this purpose.

- Data Processing: Once the data is collected and stored, it must be processed before insights can be extracted. This involves data cleaning, transformation, and integration to make it suitable for analysis.

- Analysis and Interpretation: The final foundation of big data analysis involves the application of various analytical methods and algorithms to derive meaningful patterns, trends, and insights from the data.
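The data processing foundation above (cleaning, transformation, and integration) can be sketched on a toy dataset. The record layout, the field names, the upper-case normalization rule, and the use of a device ID as the join key are all hypothetical; a production pipeline would typically run equivalent steps in a distributed engine rather than in plain Python.

```python
from typing import Any, Dict, List


def clean(raw: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Cleaning: drop records without a device ID or with a missing/non-numeric value."""
    cleaned = []
    for record in raw:
        device_id = (record.get("device_id") or "").strip()
        try:
            value = float(record.get("value"))
        except (TypeError, ValueError):
            continue  # value is missing (None) or not a number
        if device_id:
            cleaned.append({"device_id": device_id, "value": value})
    return cleaned


def transform(records: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Transformation: bring device IDs into one canonical (upper-case) form."""
    return [{**r, "device_id": r["device_id"].upper()} for r in records]


def integrate(records: List[Dict[str, Any]], metadata: Dict[str, str]) -> List[Dict[str, Any]]:
    """Integration: join each reading with a second source (device -> location)."""
    return [{**r, "location": metadata.get(r["device_id"], "unknown")} for r in records]


if __name__ == "__main__":
    raw = [
        {"device_id": " s1 ", "value": "21.5"},
        {"device_id": "s2", "value": "n/a"},  # dropped: non-numeric value
        {"device_id": "", "value": "19.0"},   # dropped: no device ID
        {"device_id": "s3", "value": "18.25"},
    ]
    locations = {"S1": "warehouse", "S3": "office"}
    print(integrate(transform(clean(raw)), locations))
```

The steps are kept as separate functions so that each one can be tested, reordered, or replaced on its own.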

Methods of Big Data Analysis

There are several methods and techniques used in big data analysis, each serving a specific purpose in extracting valuable insights from large and complex data sets. Some of the key methods include:

- Descriptive Analytics: This method involves summarizing the main characteristics of the data, such as mean, median, mode, and standard deviation. Visualization techniques, such as histograms and scatter plots, are often used to present the descriptive statistics.

- Predictive Analytics: Predictive analytics uses statistical models and machine learning algorithms to forecast future trends and behaviors based on historical data. It helps identify patterns and predict future outcomes.

- Prescriptive Analytics: This method focuses on providing recommendations and decision support based on the analysis of large data sets. It helps determine the best course of action to achieve specific goals or objectives.

- Text Analytics: Text analytics involves the analysis of unstructured textual data, such as social media posts, customer reviews, and survey responses. Natural language processing (NLP) techniques are used to extract valuable insights from text data.

- Machine Learning: Machine learning algorithms play a crucial role in big data analysis by automating the process of identifying patterns and making predictions. Supervised learning, unsupervised learning, and reinforcement learning are common approaches used in machine learning.
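Descriptive and predictive analytics, the first two methods above, can be illustrated on a small series. This sketch uses Python's standard `statistics` module for the summary statistics named in the text, and a hand-written ordinary least-squares trend line as a deliberately simple stand-in for a predictive model. The monthly sales figures are made up for illustration, and the functions assume at least two data points.

```python
import statistics
from typing import Dict, List, Tuple


def describe(values: List[float]) -> Dict[str, float]:
    """Descriptive analytics: summarize the main characteristics of the data."""
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "mode": statistics.mode(values),
        "stdev": statistics.stdev(values),  # sample standard deviation
    }


def fit_trend(values: List[float]) -> Tuple[float, float]:
    """Fit y = intercept + slope * t by ordinary least squares, with t = 0, 1, 2, ..."""
    n = len(values)
    t_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(values))
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    return intercept, slope


def forecast(values: List[float], steps_ahead: int = 1) -> float:
    """Predictive analytics (simplified): extend the fitted trend line into the future."""
    intercept, slope = fit_trend(values)
    return intercept + slope * (len(values) - 1 + steps_ahead)


if __name__ == "__main__":
    monthly_sales = [12, 15, 15, 18, 20]  # hypothetical data
    print(describe(monthly_sales))
    print(forecast(monthly_sales))
```

A straight-line trend is only the simplest possible predictive model; in practice it would be replaced by a statistical or machine learning model chosen for the data at hand.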

Operational Flow of Big Data Analysis

The operational flow of big data analysis involves a series of steps that are followed to process and analyze large data sets. The typical operational flow includes:

- Data Collection: The process begins with the collection of large volumes of data from various sources. This can include structured data from databases, unstructured data from social media, and semi-structured data from logs and documents.

- Data Storage and Management: The collected data is then stored in specialized big data storage systems, such as Hadoop clusters or NoSQL databases. These systems provide the scalability and fault tolerance required to handle large data volumes.

- Data Processing: The next step involves processing the data to clean, transform, and integrate it for analysis. This can include tasks such as data normalization, deduplication, and data enrichment.

- Analysis and Interpretation: Once the data is prepared, it is analyzed using various methods such as descriptive analytics, predictive analytics, and machine learning. The goal is to extract valuable insights and patterns from the data.

- Visualization and Reporting: The results of the analysis are then visualized using charts, graphs, and dashboards to make them understandable and actionable. Reporting tools are used to present the insights to stakeholders.
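The five-step operational flow above can be sketched as a toy pipeline in which each function stands for one stage. Everything here is a hypothetical in-memory stand-in: the two "sources" are hard-coded lists, the "store" is a Python list rather than a Hadoop cluster or NoSQL database, and the report is plain text rather than a dashboard.

```python
from collections import Counter
from typing import Dict, List


def collect() -> List[Dict[str, str]]:
    """Step 1: gather events from several (hypothetical) sources."""
    web_logs = [{"user": "Alice", "action": "view"}, {"user": "bob", "action": "buy"}]
    app_events = [{"user": "alice ", "action": "VIEW"}, {"user": "carol", "action": "view"}]
    return web_logs + app_events


def store(events: List[Dict[str, str]], repository: List[Dict[str, str]]) -> None:
    """Step 2: persist raw events (a list stands in for a distributed store)."""
    repository.extend(events)


def process(repository: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Step 3: normalize field values, then remove duplicate events."""
    seen = set()
    processed = []
    for event in repository:
        normalized = {k: v.strip().lower() for k, v in event.items()}
        key = tuple(sorted(normalized.items()))
        if key not in seen:
            seen.add(key)
            processed.append(normalized)
    return processed


def analyze(events: List[Dict[str, str]]) -> Counter:
    """Step 4: a simple descriptive analysis, counting events per action."""
    return Counter(event["action"] for event in events)


def report(counts: Counter) -> str:
    """Step 5: format the results for stakeholders (a dashboard in practice)."""
    return "\n".join(f"{action}: {n}" for action, n in counts.most_common())


if __name__ == "__main__":
    repository: List[Dict[str, str]] = []
    store(collect(), repository)
    print(report(analyze(process(repository))))
```

Note that normalization runs before deduplication: "Alice"/"view" and "alice "/"VIEW" only become recognizable as the same event once they are normalized.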

Conclusion

In conclusion, big data analysis is built on the foundations of data collection, storage, processing, and analysis. Various methods and techniques, such as descriptive analytics, predictive analytics, and machine learning, are used to extract valuable insights from large and complex data sets. The operational flow of big data analysis involves a series of steps, including data collection, storage, processing, analysis, visualization, and reporting. By understanding the foundations and methods of big data analysis, organizations can harness the power of big data to make informed decisions and gain a competitive edge.

1 year ago