You need a clear process for preparing data for AI. Start by gathering data that matches your project goals. Focus on high-quality data, because it shapes the accuracy and reliability of your AI project.

As Andrew Ng says, “If 80 percent of our work is data preparation, then ensuring data quality is the most critical task for a machine learning team.”

Automated tools like FineDataLink and FineChatBI simplify AI data preparation and help you build efficient workflows. Follow a structured approach to achieve better results and avoid costly mistakes.

Setting clear goals is the foundation of AI data preparation. You need to know what you want to achieve before you start preparing data. When you define your objectives, the entire process becomes more efficient and focused. This approach helps you avoid collecting unnecessary datasets and ensures that your data supports your business needs.

You should align your AI project with your organization’s goals so that your data preparation delivers real value. The most common objectives for enterprise AI projects include:

| Objective | Description |
|---|---|
| Aligning AI initiatives with goals | Ensures that AI projects are directly contributing to the overarching business objectives. |
| Ensuring data readiness | Focuses on the necessity of having quality data available for effective AI implementation. |
| Addressing compliance challenges | Highlights the importance of adhering to regulations and standards in AI deployment. |
| Performance at scale | Emphasizes the need for AI systems to function efficiently as they are scaled up in enterprise environments. |

You should also consider clear business cases, good data practices, and a plan to scale. These elements help you stay focused and make your AI project successful.

After you set your objectives, you need to identify the datasets that match your goals. Start by creating a data inventory. List all available datasets and check their relevance and quality. You should include both structured and unstructured datasets, such as databases, spreadsheets, images, and text files.

You should always check data availability before you begin sourcing. Good data sourcing practices help you build a strong foundation for your AI project and ensure your datasets support your goals.
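
As a rough illustration of the data inventory described above, the following sketch walks a directory and records each file with a coarse category and size. The extension sets and the `InventoryEntry` and `build_inventory` names are hypothetical choices for this example, not part of any tool mentioned in this article.

```python
from dataclasses import dataclass
from pathlib import Path

# Hypothetical mapping from file extension to a coarse data category.
STRUCTURED = {".csv", ".xlsx", ".parquet", ".db"}
UNSTRUCTURED = {".txt", ".png", ".jpg", ".pdf"}


@dataclass
class InventoryEntry:
    path: str        # path relative to the inventory root
    kind: str        # "structured", "unstructured", or "unknown"
    size_bytes: int


def classify(suffix: str) -> str:
    """Map a file extension to a coarse category."""
    suffix = suffix.lower()
    if suffix in STRUCTURED:
        return "structured"
    if suffix in UNSTRUCTURED:
        return "unstructured"
    return "unknown"


def build_inventory(root: Path) -> list[InventoryEntry]:
    """List every file under `root` with its category and size."""
    entries = []
    for path in sorted(root.rglob("*")):
        if path.is_file():
            entries.append(
                InventoryEntry(
                    path=path.relative_to(root).as_posix(),
                    kind=classify(path.suffix),
                    size_bytes=path.stat().st_size,
                )
            )
    return entries
```

An inventory like this only tells you what exists. Judging relevance and quality still needs a human review of each entry.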

You need a solid strategy for data collection and integration. This step ensures you gather the right datasets and combine them efficiently. When you focus on proper sourcing and collection, you build a strong foundation for your AI project and avoid common pitfalls in data preparation.

You can collect datasets from databases, APIs, files, and even sensors. Each method has strengths and challenges. In-house data collection gives you control and privacy, but it can be expensive and slow. Off-the-shelf datasets save time and money, but sometimes lack relevance. Automated tools make collection efficient and reduce errors, but they may require ongoing maintenance.
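
To make the idea of collecting from several kinds of sources concrete, here is a minimal sketch that reads rows from CSV text and from a SQLite database into the same list-of-dicts shape. It uses only the Python standard library; the function names are illustrative assumptions.

```python
import csv
import io
import sqlite3


def rows_from_csv(text: str) -> list[dict]:
    """Parse CSV text into a list of dicts (all values arrive as strings)."""
    return list(csv.DictReader(io.StringIO(text)))


def rows_from_sqlite(conn: sqlite3.Connection, query: str) -> list[dict]:
    """Run a query and return rows as dicts keyed by column name."""
    conn.row_factory = sqlite3.Row
    return [dict(row) for row in conn.execute(query)]
```

Note that CSV values are always strings while SQLite preserves column types, so a type-conversion step is usually needed before the two sources can be combined.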

Tesla collects real-time driving data from its fleet of vehicles using onboard sensors and cameras. This proprietary dataset trains its AI models to navigate complex traffic scenarios.

You should consider sourcing datasets from multiple channels to ensure diversity and quality. For example, Alibaba uses automated sensors, GPS, and traffic cameras to collect real-time urban data. This system helps optimize traffic light timing and reduces congestion. When you combine datasets from different sources, you increase the value and accuracy of your data.

Many organizations discover tangled data sources only when they start integrating data for AI, revealing years of poor management. Scattered datasets can hinder your ability to build effective models.
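
One way to surface tangled sources early is to join two datasets on a shared key and report the keys that appear in only one of them. The sketch below assumes each source is a list of dicts and that key values are unique within each source; `merge_sources` and the sample field names are hypothetical.

```python
def merge_sources(left, right, key):
    """Inner-join two lists of dicts on `key` and report unmatched keys."""
    right_by_key = {row[key]: row for row in right}
    left_keys = {row[key] for row in left}
    right_keys = set(right_by_key)

    merged = [
        {**row, **right_by_key[row[key]]}
        for row in left
        if row[key] in right_by_key
    ]
    only_left = sorted(left_keys - right_keys)
    only_right = sorted(right_keys - left_keys)
    return merged, only_left, only_right


# Illustrative data: vehicle counts from sensors, timings from signal logs.
sensor_counts = [{"intersection": "A", "cars": 12}, {"intersection": "B", "cars": 7}]
signal_timings = [{"intersection": "B", "signal_s": 45}, {"intersection": "C", "signal_s": 30}]

merged, only_sensors, only_signals = merge_sources(
    sensor_counts, signal_timings, "intersection"
)
```

Keys that show up in `only_sensors` or `only_signals` are candidates for the kind of integration gap described above.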

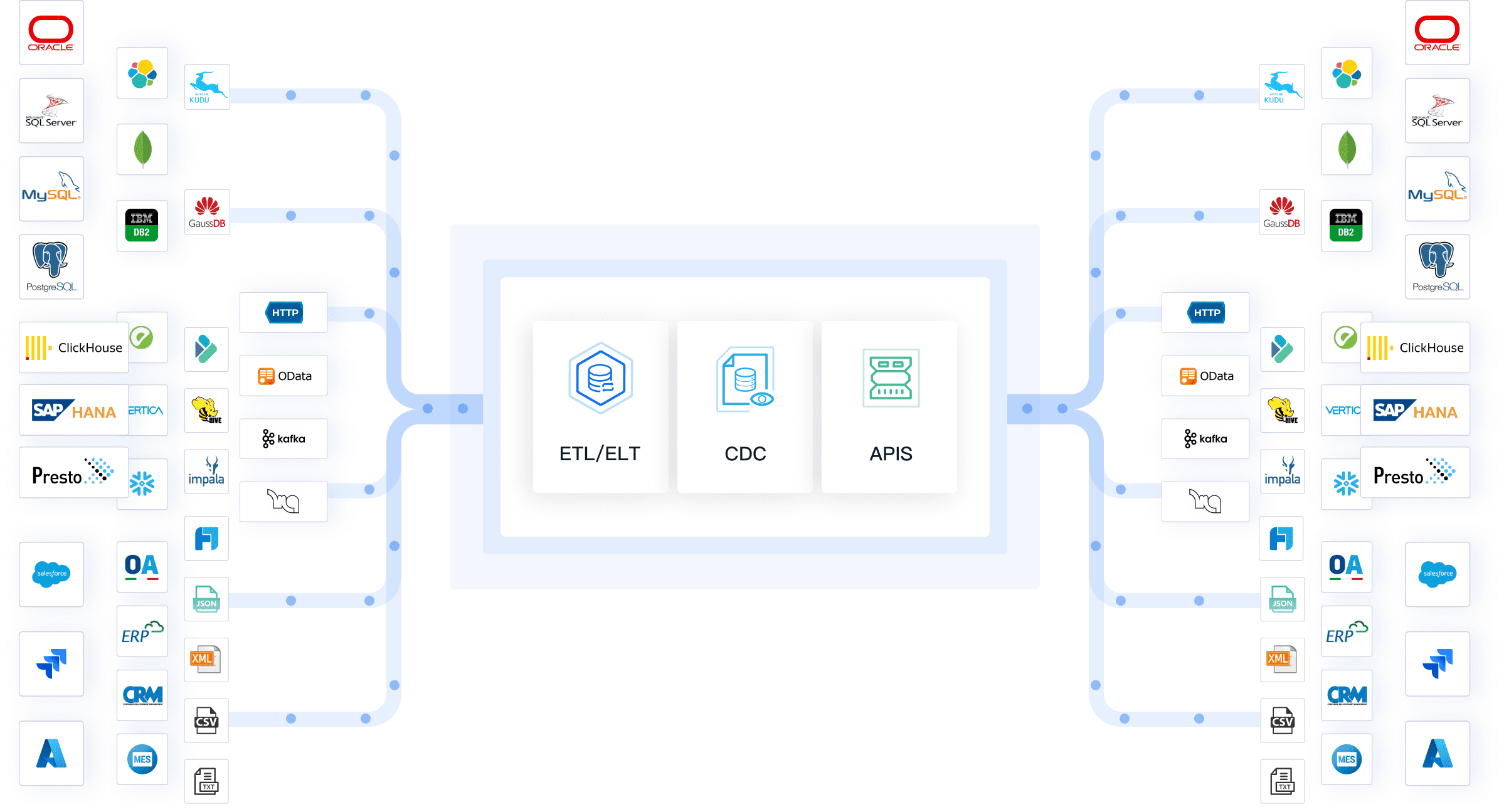

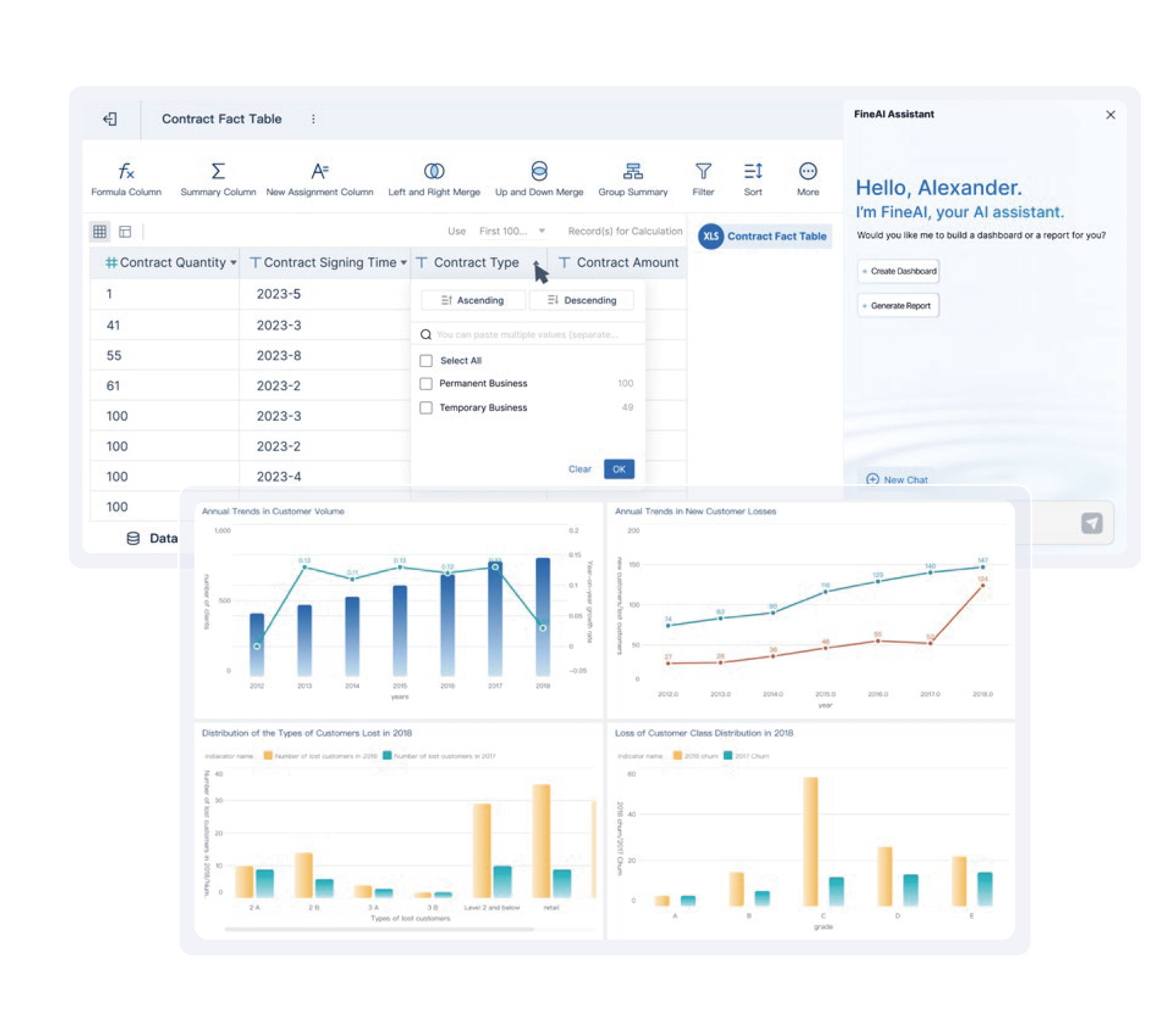

FineDataLink streamlines data integration and synchronization. You can use FineDataLink to connect, transform, and synchronize datasets from over 100 sources. The platform automates data collection and keeps your data consistent and reliable.

| Feature | Description |
|---|---|
| Data Integration from Multiple Sources | FineDataLink integrates data from various sources, ensuring comprehensive access to relevant data. |
| Data Consistency | It guarantees that data from different sources is consistent and reliable, reducing error risks. |
| Automated Data Synchronization | The tool automates data synchronization, keeping reports and dashboards updated with the latest data. |
| Seamless Data Transformation | FineDataLink transforms data during integration, ensuring it is in the correct format for analysis. |

You can automate sourcing and collection, reduce manual errors, and maintain real-time data accuracy. FineDataLink supports your AI project by providing a unified platform for all your datasets, making data preparation efficient and scalable.

Data preparation transforms raw datasets into high-quality data that supports reliable model training. When you prepare data carefully, you improve consistency and reduce errors. Most datasets require cleaning and annotation before you can use them for machine learning. Only about 20% of raw datasets meet the standards for AI data preparation, so you must address quality issues early.

Data cleaning is a critical part of AI data preparation. You must identify and fix problems in your datasets to ensure consistency and accuracy. Around 80% of datasets need cleaning before you can use them for AI training.

You should focus on data governance and enforce privacy and security measures. Addressing poor data quality and integrity helps you avoid model failures. Data preparation tools streamline this process, saving time and resources. Clean, relevant datasets allow you to spend more time improving your AI project.
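
The cleaning step can be sketched in a few lines. The example below handles three issues that are typical in practice (stray whitespace, missing required values, and exact duplicates). That choice of issues is an assumption made for illustration, and `clean_records` is a hypothetical helper rather than a function from any specific tool.

```python
def clean_records(records, required):
    """Normalize strings, drop rows missing required fields, drop duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        normalized = {}
        for key, value in record.items():
            if isinstance(value, str):
                # Strip whitespace; treat empty strings as missing values.
                value = value.strip() or None
            normalized[key] = value

        # Skip rows where any required field is missing.
        if any(normalized.get(field) is None for field in required):
            continue

        # Skip rows identical to one already kept (after normalization).
        fingerprint = tuple(sorted(normalized.items()))
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        cleaned.append(normalized)
    return cleaned


raw = [
    {"id": 1, "email": " a@x.com "},
    {"id": 1, "email": "a@x.com"},   # duplicate once whitespace is stripped
    {"id": 2, "email": ""},          # missing required value
    {"id": 3, "email": "b@x.com"},
]
clean = clean_records(raw, required=("id", "email"))
```

Dropping incomplete rows is the simplest policy. Depending on the dataset, imputing missing values may be a better fit.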

Data annotation and labeling are essential for AI data preparation. You must label datasets accurately to train effective models. High-quality labeling improves model performance and keeps your data consistent.

Accurate data labeling is vital. Mislabeled datasets can undermine your AI project and lead to poor predictions. For example, incorrect labels in e-commerce datasets may cause algorithms to misinterpret customer preferences. Legal AI tools may make wrong decisions if their training labels are inconsistent. You must treat data labeling as a foundation for successful AI data preparation.
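
One common way to check label consistency is to have two annotators label the same items and measure their agreement. The sketch below computes Cohen's kappa, which corrects raw agreement for agreement expected by chance. The metric is chosen here as an illustration; the article does not prescribe a specific one.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")

    n = len(labels_a)
    # Fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)

    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)
```

A kappa near 1 suggests consistent labeling, while a value near 0 means agreement is no better than chance and the labeling guidelines probably need revisiting.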

Transforming and splitting datasets is a crucial step in AI data preparation. You need to convert raw data into meaningful features and divide it into proper sets for model development. This process ensures your AI project uses high-quality training data and produces reliable results. When you focus on effective data preparation, you improve model accuracy and avoid common pitfalls.

Feature engineering helps you extract valuable information from datasets. You create new features or modify existing ones to make your training data more useful for machine learning. You can use several statistical methods to transform your data. The table below shows common techniques:

| Technique | Description |
|---|---|
| Log Transformation | Converts skewed distributions into more normal distributions by applying the logarithm to values. |
| One-Hot Encoding | Represents categorical variables as binary vectors, creating a unique column for each category. |
| Scaling | Adjusts the range of features to ensure they are on a similar scale. |
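
The first two techniques in the table can be sketched with the standard library alone. This example uses `log(1 + x)` rather than a plain logarithm so that zero values stay valid, which is a choice made for this illustration; both function names are hypothetical.

```python
import math


def log_transform(values):
    """Apply log(1 + x) to compress a right-skewed feature.

    Assumes non-negative inputs; log1p keeps zeros valid.
    """
    return [math.log1p(v) for v in values]


def one_hot(categories):
    """Encode categories as binary vectors, one column per distinct value."""
    vocabulary = sorted(set(categories))
    rows = [[1 if c == v else 0 for v in vocabulary] for c in categories]
    return vocabulary, rows


incomes = [0, 1_000, 10_000, 1_000_000]
log_incomes = log_transform(incomes)       # spread shrinks from 1e6 to about 14

columns, encoded = one_hot(["red", "blue", "red"])
```

In a real pipeline the vocabulary should be learned from the training data and reused for new data, so that the column order stays stable.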

Normalization is essential for large-scale datasets. You need to choose the right technique based on your data. The following table summarizes popular normalization methods:

| Technique | Description | Best Use Case | Sensitivity to Outliers |
|---|---|---|---|
| Robust Scaling | Uses median and interquartile range to scale data. | Datasets with many outliers. | Low |
| Z-score Normalization | Transforms data to have mean 0 and standard deviation 1. | Algorithms using distance metrics. | Moderate |
| Min-Max Scaling | Scales features to a fixed range, usually [0, 1]. | When outliers are minimal and a fixed range is required. | High |
| Log Transformation | Applies natural logarithm to each value, effective for right-skewed data. | Right-skewed distributions or data with long tails. | Moderate |
| Quantile Normalization | Aligns distributions of multiple samples by adjusting their quantiles. | Biology and bioinformatics datasets. | Low |
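
To see why outlier sensitivity differs between these methods, the following sketch implements min-max, z-score, and robust scaling with Python's `statistics` module and applies them to a small sample containing one outlier. The function names are illustrative, and each assumes the input is not constant (otherwise the denominator would be zero).

```python
import statistics


def min_max(values):
    """Scale to [0, 1] using the observed minimum and maximum."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


def z_score(values):
    """Center on the mean and divide by the population standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]


def robust(values):
    """Center on the median and divide by the interquartile range."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return [(v - median) / (q3 - q1) for v in values]


data = [1, 2, 3, 4, 100]   # 100 is an outlier

mm = min_max(data)   # the outlier squeezes the other values toward 0
zs = z_score(data)
rb = robust(data)    # the typical values stay evenly spaced around 0
```

With min-max scaling the four typical values all land below 0.04, while robust scaling keeps them spread between -1 and 0.5 because the median and interquartile range ignore the extreme value.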

You must split your datasets to evaluate your AI project accurately. Proper splitting prevents data leakage and ensures your model generalizes well.

If you do not split your data correctly, your model may learn patterns it should not. This mistake can inflate performance metrics and cause poor results in real-world scenarios. You need to avoid data leakage to maintain the integrity of your training data.

Effective splitting is a key part of AI data preparation. It lets you build models that perform well on new data and support your business goals.
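
A minimal sketch of splitting without leakage might look like the following. The 70/15/15 ratio is an assumption chosen for this example, not a value taken from the article, and `split_dataset` and `fit_standardizer` are hypothetical helpers. The key point is that scaling statistics are computed from the training split only and then reused unchanged on the held-out splits.

```python
import random
import statistics


def split_dataset(rows, train=0.7, val=0.15, seed=42):
    """Shuffle once with a fixed seed, then cut into train/validation/test."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * val)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


def fit_standardizer(train_values):
    """Learn mean and standard deviation from the training split only."""
    mean = statistics.fmean(train_values)
    std = statistics.pstdev(train_values)
    return lambda v: (v - mean) / std


rows = [float(x) for x in range(100)]
train, val, test = split_dataset(rows)

scale = fit_standardizer(train)            # statistics come from train only
train_scaled = [scale(v) for v in train]
val_scaled = [scale(v) for v in val]       # held-out data never influences them
test_scaled = [scale(v) for v in test]
```

A random shuffle suits independent rows. Time series or grouped data (for example, several rows per customer) usually need a chronological or group-aware split instead, otherwise related rows can end up on both sides.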

You need strong documentation and version control for AI data preparation. This step ensures you can track every change, maintain transparency, and reproduce results. When you document your data preparation steps, you make it easier to share knowledge, troubleshoot issues, and comply with privacy and security standards. You also protect your data and support data privacy throughout the entire workflow.

Tracking data changes is a key part of AI data preparation. You should document every step, from sourcing datasets to transforming them. This practice helps you maintain data privacy and data security. The table below shows recommended documentation standards:

| Documentation Practice | Description |
|---|---|
| Thorough Documentation | Ensures transparency and reproducibility. |
| Documenting Sources and Processes | Keep detailed records of data sources, transformations, and processing steps. |
| Version Control | Use tools like Git to track changes and manage data versions. |

You can use automated tools to track changes and manage datasets. Popular options include ClickUp, Forecast, and Wrike. These tools help you automate tasks, optimize workflows, and maintain privacy. Version control allows you to trace every modification, share workflows, and ensure reproducibility. You gain auditability, compliance, and scalability for your AI project. Reproducible workflows help prevent code failures and support consistent results across environments.

Tip: Always document your data preparation steps and use version control to protect data privacy and security.
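
One lightweight way to track data changes alongside code is to record a content hash for every data file and compare manifests between versions. The sketch below is an illustration under that assumption; `build_manifest` and `diff_manifests` are hypothetical names, and dedicated data versioning tools offer much more.

```python
import hashlib
from pathlib import Path


def file_sha256(path):
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file under `root` (by relative path) to its SHA-256 hash."""
    root = Path(root)
    return {
        path.relative_to(root).as_posix(): file_sha256(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def diff_manifests(old, new):
    """Report which files were added, removed, or changed between manifests."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }
```

A manifest like this is small enough to save as JSON and commit to Git next to the preparation scripts, which ties each code version to the exact data it was run on.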

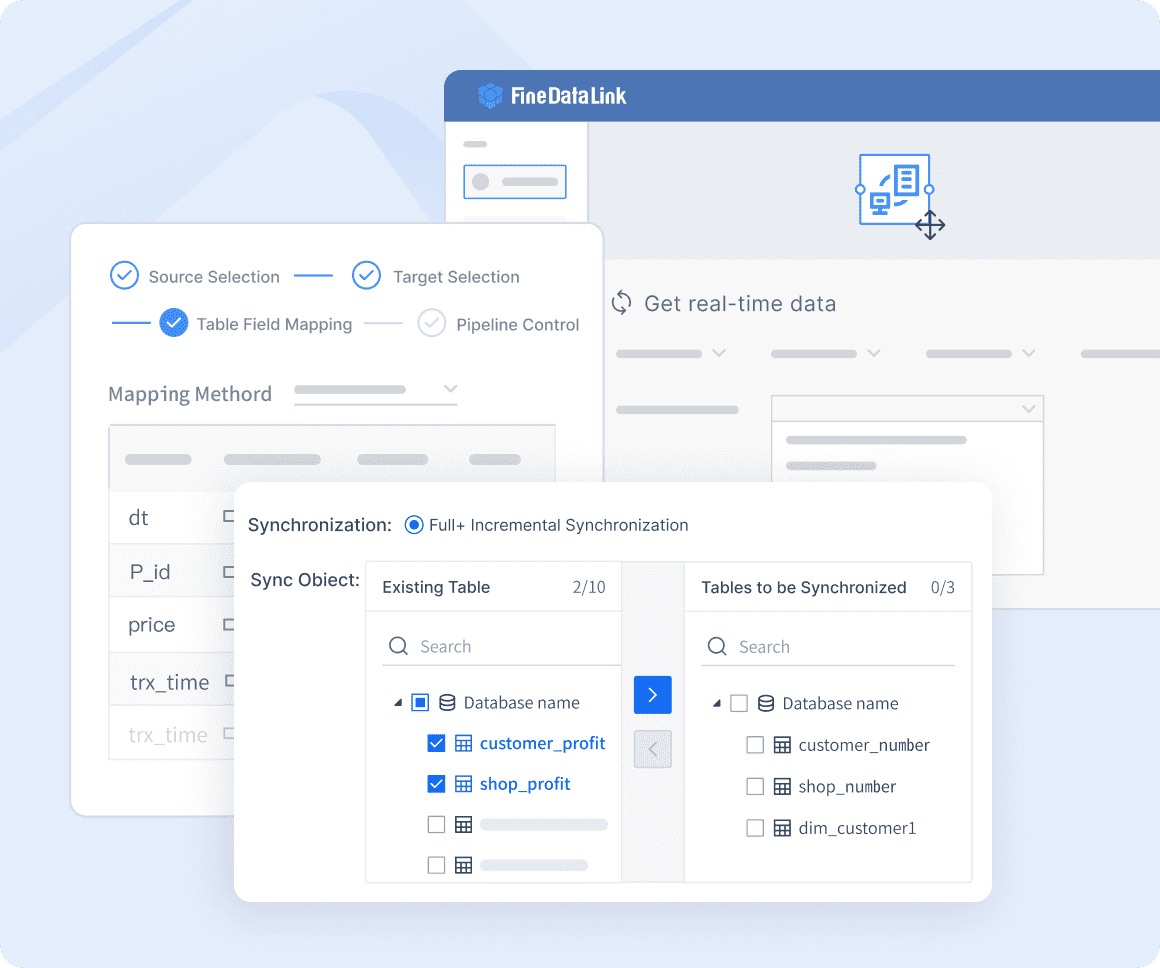

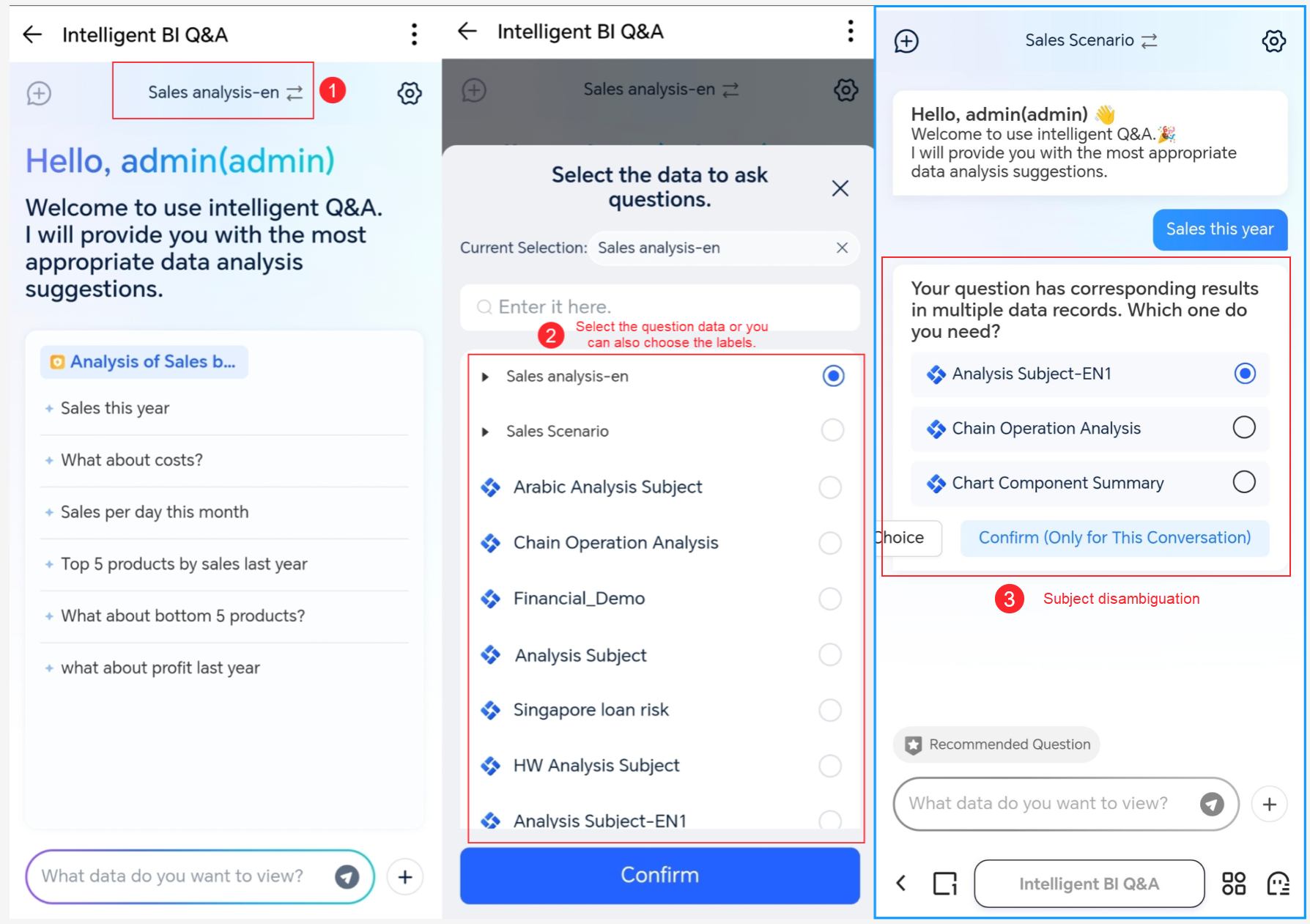

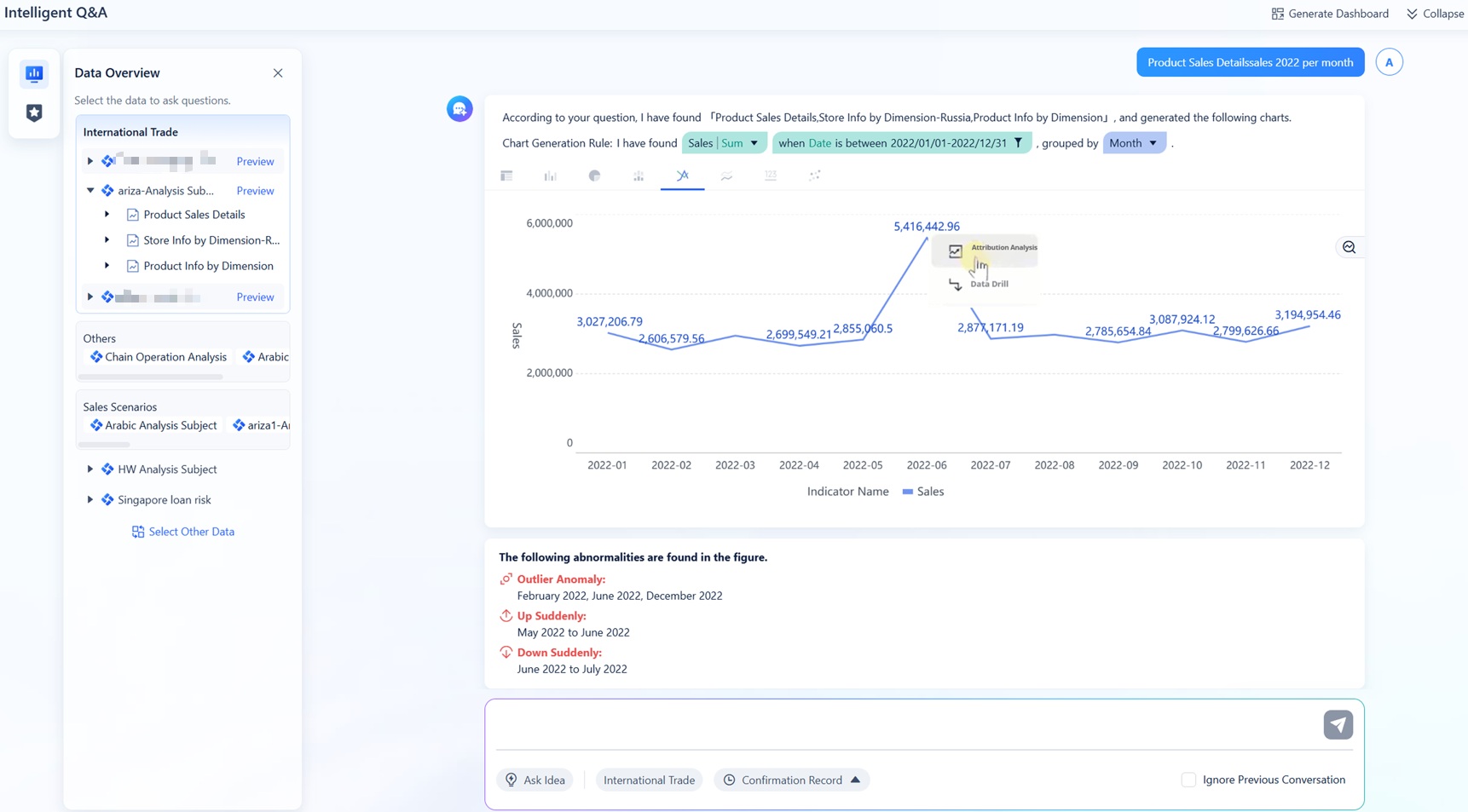

FineChatBI supports AI data preparation by simplifying data analysis and validation. You can use FineChatBI’s natural language interface to query datasets and validate your data without technical barriers. This tool bridges the gap between business users and IT teams, delivering quick and accurate responses. FineChatBI updates analytics in real time, connects to over 100 data sources, and provides a user-friendly interface for all users. The table below highlights its main features and benefits:

| Feature | Benefit |
|---|---|
| Real-time analytics | Updates as data changes for timely insights |
| Natural language processing | Simplifies interaction with data |
| Integration with multiple sources | Connects over 100 data sources |
| User-friendly interface | Accessible for all users |
| High-performance engine | Efficiently processes large datasets |
| Visual data preparation tools | Helps clean and organize data |
| Intelligent permission inheritance | Simplifies user access management |
| Preview data changes in real time | Catches issues early |

You can validate prepared datasets, catch errors early, and maintain data privacy. FineChatBI helps you analyze and organize your data, ensuring your data preparation supports privacy, security, and business goals.

You can achieve successful AI data preparation by following a structured approach. Start with clear goals for your AI project and collect high-quality data.

You improve your results when you use tools like FineDataLink and FineChatBI. These platforms help you manage data preparation, reduce errors, and validate your data. The table below shows why structured data preparation matters:

| Statistic | Description |
|---|---|
| 96% of AI and ML projects encounter problems related to data quality | Structured data helps you overcome these challenges. |
| 80% of the time spent on AI projects is devoted to data preparation tasks | Managing data well leads to better outcomes. |
| Nearly 85% of AI projects fail due to poor data quality | Thorough preparation increases your chances of success. |

Apply these best practices to your next AI project. You will build reliable models and unlock the full potential of your data.


The Author

Lewis

Senior Data Analyst at FanRuan
