An incident management dashboard should help teams detect risk early, prioritize response, and communicate service health with confidence. In practice, many dashboards do the opposite. They look polished, contain plenty of charts, and still leave operations teams unsure what needs attention first.

The problem is rarely the existence of reporting. It is the design logic behind it. When teams track the wrong metrics, blend incompatible data, or build one dashboard for every audience, the result is misleading visibility rather than operational clarity.

This article explains why incident reporting breaks down, identifies 10 common reporting mistakes, and shows how to fix them so your dashboard becomes a practical decision tool instead of a static reporting surface.

A dashboard often fails not because teams lack data, but because they expect the dashboard to answer questions it was never designed to support.

Most teams expect an incident management dashboard to reveal:

What many dashboards actually show is a loose collection of generic metrics:

These figures are not useless, but they do not automatically support action. A dashboard becomes effective only when each metric is connected to a specific decision, such as whether to reassign resources, trigger escalation, investigate a service, or review a process bottleneck.

Even good metrics become noise when reporting discipline is weak. Common patterns include:

Over time, teams stop reading the dashboard critically. They scan it, reference it in meetings, and quietly maintain side spreadsheets because the official view is not trusted enough for real decisions.

A weak dashboard creates more than analytical inconvenience. It directly affects service performance.

| Area | What poor reporting causes |
|---|---|
| Response time | High-priority incidents are harder to detect early |
| Service quality | Repeating issues remain hidden behind aggregate trends |
| Backlog control | Unresolved workload is masked by closure activity |
| Leadership decisions | Resource allocation is based on incomplete context |
| Stakeholder trust | Executives and customers question the reliability of status reporting |

When visibility is poor, incident management becomes reactive. Teams spend more time explaining what happened than preventing recurrence or accelerating recovery.

Many dashboards fail because they attempt to display everything the service desk system can produce. More metrics do not create more insight. They often create hesitation.

An overloaded dashboard forces users to search for meaning under time pressure. During incident review meetings, this leads to delays, conflicting interpretations, and missed warning signs.

Common symptoms include:

If a dashboard user cannot answer “What decision does this chart support?” the metric is likely unnecessary.

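A better approach is to narrow each dashboard view to a small set of operational signals, such as active incident load, priority mix, SLA risk, backlog age, affected services, and recurrence trends.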

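A practical rule is to keep only metrics that directly support an action, such as reassigning resources, triggering escalation, investigating a service, or reviewing a process bottleneck.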

Ticket volume is easy to measure, which is why many dashboards overuse it. But incident count alone says very little about business risk.

A service with 200 low-impact incidents may be less critical than a single high-severity outage affecting revenue, compliance, or customer access. When dashboards prioritize volume without context, leaders may direct attention to noisy areas instead of business-critical ones.
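
The contrast between volume and impact can be made concrete with a minimal sketch. It assumes each incident carries a `service` and a `severity` field, and the severity weights are hypothetical placeholders rather than a recommended scale; a real impact model would draw on revenue, compliance, or customer-access data.

```python
from collections import defaultdict

# Hypothetical severity weights; tune these to your own impact model.
SEVERITY_WEIGHTS = {"low": 1, "medium": 5, "high": 25, "critical": 250}


def rank_services_by_impact(incidents):
    """Return (service, count, weighted_impact) tuples, highest impact first."""
    counts = defaultdict(int)
    impact = defaultdict(int)
    for inc in incidents:
        counts[inc["service"]] += 1
        impact[inc["service"]] += SEVERITY_WEIGHTS[inc["severity"]]
    return sorted(
        ((svc, counts[svc], impact[svc]) for svc in counts),
        key=lambda row: row[2],
        reverse=True,
    )


# Illustrative data: 200 low-impact tickets versus one critical outage.
incidents = [{"service": "intranet-wiki", "severity": "low"}] * 200
incidents.append({"service": "checkout", "severity": "critical"})

for service, count, score in rank_services_by_impact(incidents):
    print(f"{service}: {count} incidents, impact score {score}")
```

Sorted by count, the wiki would lead the chart. Sorted by weighted impact, the single outage does, which is the ordering a prioritization view needs.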

Volume-based reporting often misses:

To improve reporting, include measures such as:

This is also where modern BI tools can help. With FineBI, teams can combine service desk data with business system data to visualize incident impact beyond ticket volume alone, making the dashboard more relevant to both IT and business stakeholders.

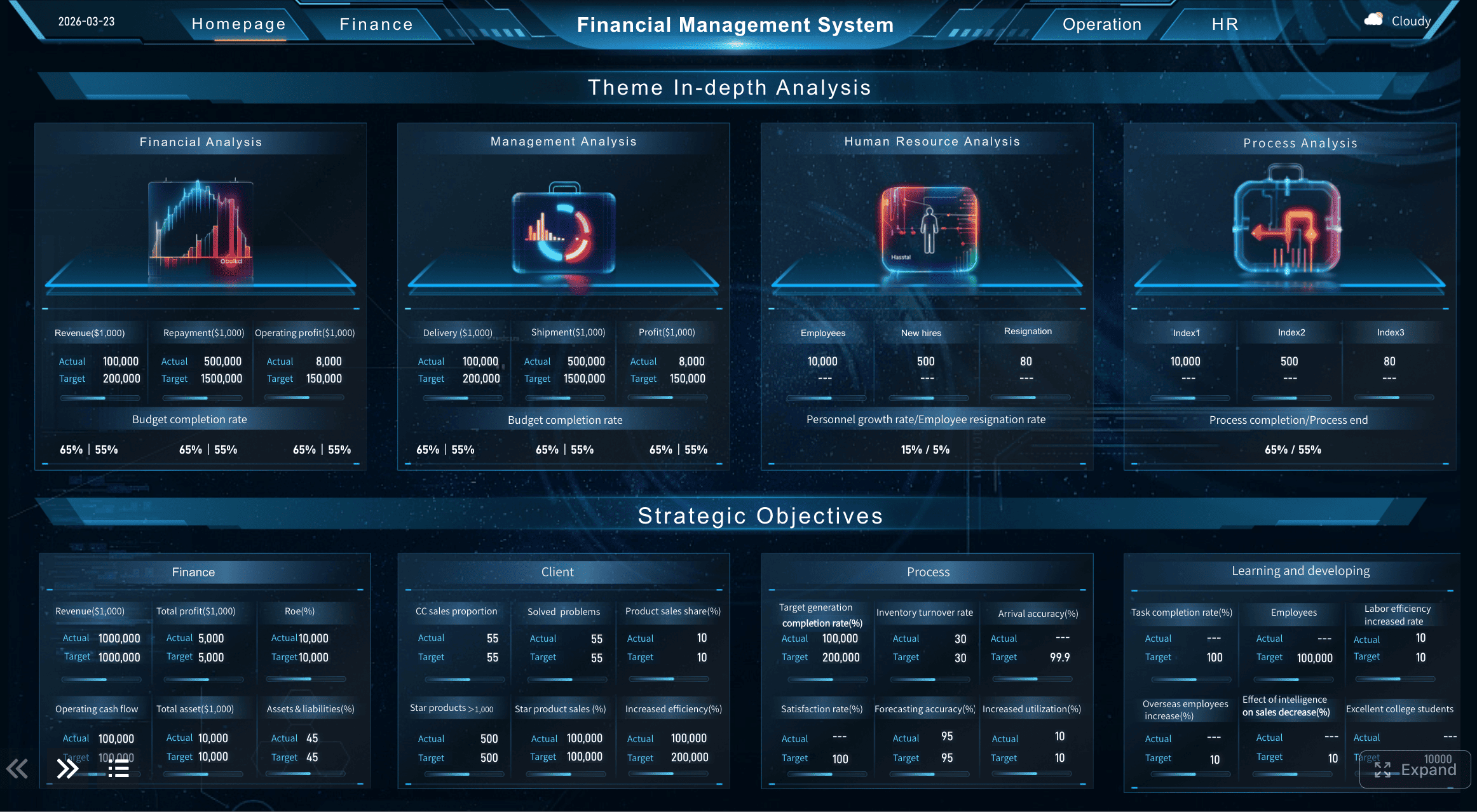

A Dashboard Example with Data Combination created by FineBI

This is one of the most common reasons an incident management dashboard looks healthy when operations are actually under strain.

When open and closed incidents are shown together without distinction, recent closure volume can create the illusion of control even while unresolved backlog is increasing. Teams may celebrate throughput while active risk quietly accumulates.

This affects visibility into:

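Use separate views for separate purposes: an open-incident view for managing current risk, and a closed-incident view for trend review and performance analysis.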

This distinction is essential. Incident commanders need immediate state awareness; managers and leaders need trend interpretation.
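
The way closure volume can mask a growing backlog is easy to see in a small sketch. The weekly counts below are invented for illustration, and `backlog_summary` is a hypothetical helper, not part of any service desk tool.

```python
def backlog_summary(opened_per_week, closed_per_week, starting_backlog=0):
    """Track the open backlog week by week from opened and closed counts."""
    backlog = starting_backlog
    rows = []
    weekly = zip(opened_per_week, closed_per_week)
    for week, (opened, closed) in enumerate(weekly, start=1):
        backlog += opened - closed
        rows.append({"week": week, "closed": closed, "open_backlog": backlog})
    return rows


# Illustrative data: closures rise every week, yet intake rises faster.
opened = [50, 60, 70, 80]
closed = [45, 50, 55, 60]

rows = backlog_summary(opened, closed, starting_backlog=20)
for row in rows:
    print(row)
```

A chart of `closed` alone would show steady improvement. Plotting `open_backlog` next to it shows that unresolved work keeps accumulating.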

No dashboard design can compensate for poor source data. If incident records are inconsistently tagged, incomplete, or duplicated, reporting will remain unreliable.

Data quality problems create misleading outputs such as:

For example, if one team labels incidents by symptom and another by root service, service-level reporting becomes structurally inconsistent.

Start with simple, enforceable rules:

Trust in the dashboard rises when users see that definitions are stable and exceptions are controlled.
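
As a rough illustration of enforceable rules, the sketch below checks records for missing fields, unknown priority values, and duplicate IDs before they reach a dashboard. The field names and the allowed priority set are assumptions made for this example, not a standard schema.

```python
REQUIRED_FIELDS = ("id", "service", "category", "priority")  # hypothetical schema
ALLOWED_PRIORITIES = {"P1", "P2", "P3", "P4"}  # hypothetical value set


def find_quality_issues(records):
    """Return (record_id, problem) pairs for records that break basic rules."""
    issues = []
    seen_ids = set()
    for rec in records:
        rec_id = rec.get("id") or "<missing id>"
        for field in REQUIRED_FIELDS:
            if not rec.get(field):
                issues.append((rec_id, f"missing {field}"))
        priority = rec.get("priority")
        if priority and priority not in ALLOWED_PRIORITIES:
            issues.append((rec_id, f"unknown priority {priority!r}"))
        if rec.get("id"):
            if rec["id"] in seen_ids:
                issues.append((rec_id, "duplicate id"))
            seen_ids.add(rec["id"])
    return issues


records = [
    {"id": "INC-1", "service": "email", "category": "outage", "priority": "P1"},
    {"id": "INC-2", "service": "", "category": "outage", "priority": "P2"},
    {"id": "INC-3", "service": "vpn", "category": "access", "priority": "urgent"},
    {"id": "INC-1", "service": "email", "category": "outage", "priority": "P1"},
]

for rec_id, problem in find_quality_issues(records):
    print(rec_id, "->", problem)
```

Running a check like this on a schedule, and publishing the exception count, is one way to show users that definitions are being held stable.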

Average-based reporting is convenient, but incidents are rarely distributed evenly. Outliers matter because they often represent the most severe service failures.

A mean resolution time may appear acceptable even when a subset of critical incidents took far too long to resolve. This is dangerous because the dashboard appears compliant while the customer experience tells a different story.

For example:

The average may still look reasonable, while the operational reality is not.

Use more informative measures such as:

These formats reveal whether performance is consistently strong or merely cosmetically acceptable.
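
A short sketch shows how far an average can drift from the experience it is meant to summarize. The resolution times and the 8-hour SLA threshold are invented for illustration, and the percentile uses a simple nearest-rank method chosen for readability.

```python
import statistics


def resolution_summary(hours, sla_hours):
    """Summarize resolution times with mean, median, p90, max, and breach rate."""
    ordered = sorted(hours)
    # Nearest-rank 90th percentile, computed with integer arithmetic.
    rank = (90 * len(ordered) + 99) // 100
    breaches = sum(1 for h in ordered if h > sla_hours)
    return {
        "mean": statistics.mean(ordered),
        "median": statistics.median(ordered),
        "p90": ordered[rank - 1],
        "max": ordered[-1],
        "breach_rate": breaches / len(ordered),
    }


# Illustrative data: 17 incidents resolved in 2 hours, 3 that took far longer.
resolution_hours = [2] * 17 + [20, 40, 60]

summary = resolution_summary(resolution_hours, sla_hours=8)
for name, value in summary.items():
    print(f"{name}: {value}")
```

With this data the mean stays under the assumed 8-hour target, while the 90th percentile, the maximum, and the breach rate all expose the three incidents that did not.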

A dashboard is not a design exercise. In incident management, readability under pressure matters more than visual novelty.

The following often reduce speed and clarity:

If users need several seconds to decode a chart, it is too complex for operational use.

Prefer visual forms that support immediate comparison:

Good visualization is not about making data impressive. It is about making signals unmistakable.

A single global dashboard may look comprehensive, but it rarely helps the people responsible for action.

Frontline responders need to know what belongs to them. Service owners need to know which services are deteriorating. Executives need a summarized risk picture. A universal view often satisfies none of these audiences.

Without segmentation, teams struggle to identify:

Add filters and segmented views by:

This helps transform broad reporting into accountable reporting. It also supports targeted improvement, since recurring issues usually emerge in specific combinations of service, owner, and incident type.
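
One way to surface those combinations is to count incidents by service, owner, and type together. The sketch below assumes each record has `service`, `owner`, and `type` fields; the names and sample data are hypothetical.

```python
from collections import Counter


def recurring_combinations(incidents, min_count=2):
    """Return (service, owner, type) combinations seen at least min_count times."""
    combos = Counter(
        (inc["service"], inc["owner"], inc["type"]) for inc in incidents
    )
    return [(combo, n) for combo, n in combos.most_common() if n >= min_count]


incidents = [
    {"service": "payments", "owner": "team-a", "type": "timeout"},
    {"service": "payments", "owner": "team-a", "type": "timeout"},
    {"service": "payments", "owner": "team-a", "type": "timeout"},
    {"service": "payments", "owner": "team-b", "type": "timeout"},
    {"service": "search", "owner": "team-c", "type": "latency"},
    {"service": "search", "owner": "team-c", "type": "latency"},
]

for combo, count in recurring_combinations(incidents):
    print(combo, count)
```

A global total would report six incidents. The segmented count points to one team and one failure type on one service as the place to start.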

A point-in-time dashboard can show what is happening now, but not whether the situation is getting better or worse.

Current-state metrics are necessary, but insufficient. A backlog of 120 incidents may be acceptable or alarming depending on the recent pattern. If the dashboard lacks historical comparison, teams lose the ability to detect deterioration early.

This limits insight into:

Useful trend analysis includes:
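
One such comparison, sketched under simple assumptions, measures the current backlog against the average of the preceding weeks. The four-week window and the sample series are illustrative choices, not recommended thresholds.

```python
def backlog_trend(weekly_backlog, window=4):
    """Compare the latest backlog with the mean of the preceding `window` weeks."""
    if len(weekly_backlog) < window + 1:
        raise ValueError("need at least window + 1 weekly observations")
    current = weekly_backlog[-1]
    previous = weekly_backlog[-(window + 1):-1]
    baseline = sum(previous) / window
    if baseline == 0:
        raise ValueError("baseline backlog is zero; percentage change is undefined")
    change_pct = (current - baseline) / baseline * 100
    return current, baseline, change_pct


# Two illustrative histories that both end at a backlog of 120.
improving = [180, 165, 150, 135, 120]
worsening = [60, 75, 90, 105, 120]

for label, series in (("improving", improving), ("worsening", worsening)):
    current, baseline, change = backlog_trend(series)
    print(f"{label}: current={current}, baseline={baseline:.1f}, change={change:+.1f}%")
```

Both series end at the same number, so a point-in-time tile would look identical. Only the comparison against recent history distinguishes recovery from deterioration.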

This is where an analytical layer becomes especially valuable. Platforms such as FineBI can support period comparison, interactive drill-down, and cross-source trend analysis, helping teams move beyond static status reporting into deeper operational learning.

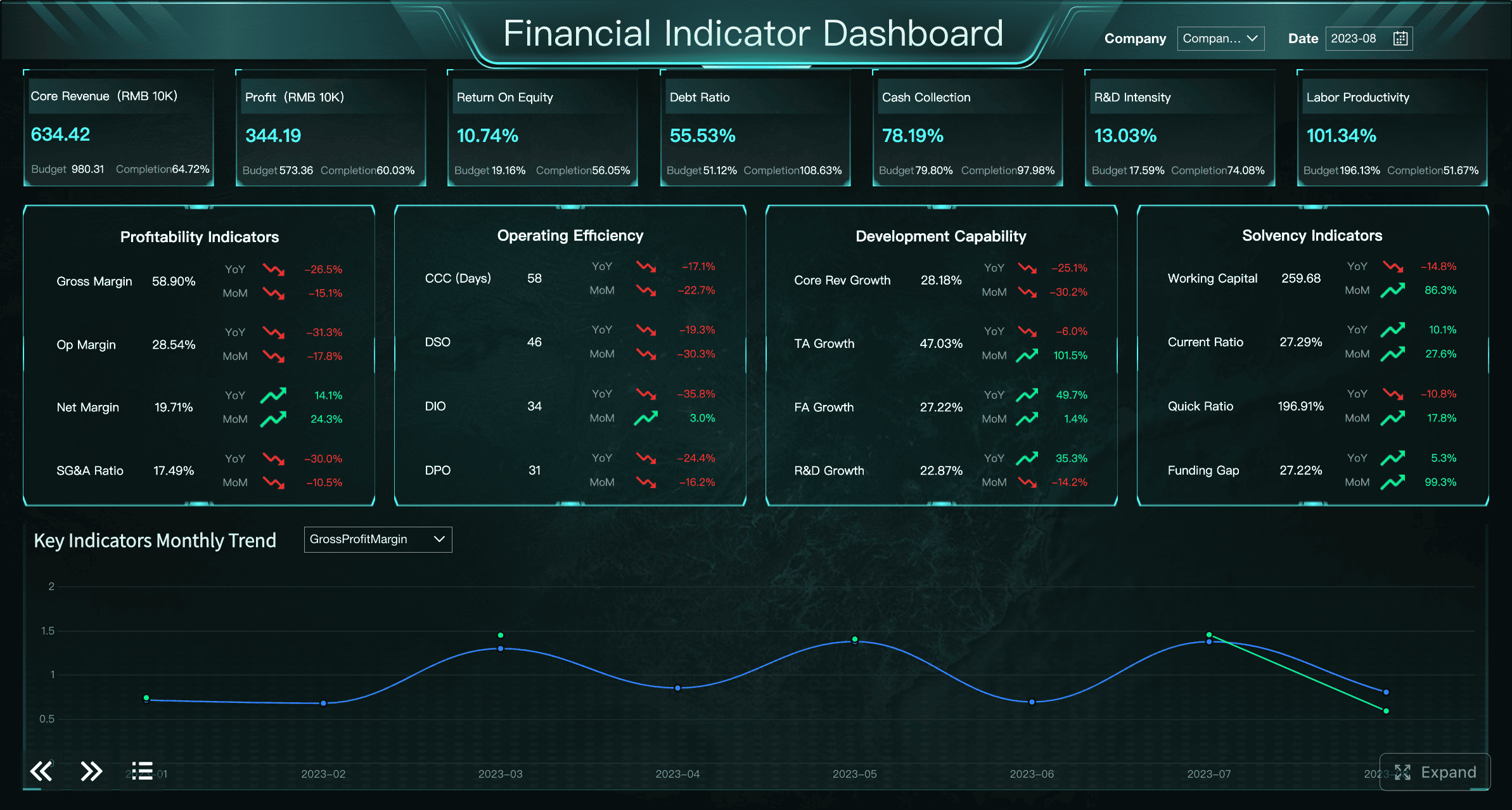

A Dashboard Example created by FineBI

A dashboard cannot communicate effectively if it tries to serve all stakeholders with the same level of detail.

Different audiences ask different questions:

When these needs are merged into one dashboard, the result is either oversimplified for operators or too detailed for leadership.

Use a layered model:

| Audience | Best dashboard focus |
|---|---|
| Executives | High-level service risk, major incident trend, SLA summary |
| Service owners | Service-specific trends, recurring incident categories, breach analysis |
| Operations teams | Active incidents, aged backlog, response queue, ownership status |

The key is not building separate reporting systems, but creating role-based views from the same governed data model.
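
The idea of several views over one data model can be sketched as plain functions that read the same records. Everything here is an assumption made for illustration: the field names, the sample incidents, and the three view functions do not correspond to any particular BI tool.

```python
from collections import Counter
from datetime import date

# Hypothetical incident records; field names are illustrative only.
INCIDENTS = [
    {"id": "INC-1", "service": "checkout", "category": "timeout",
     "priority": "P1", "status": "open", "owner": "team-a",
     "opened": date(2024, 3, 1)},
    {"id": "INC-2", "service": "checkout", "category": "timeout",
     "priority": "P3", "status": "closed", "owner": "team-a",
     "opened": date(2024, 3, 3)},
    {"id": "INC-3", "service": "search", "category": "latency",
     "priority": "P2", "status": "open", "owner": "team-b",
     "opened": date(2024, 3, 8)},
]


def operations_view(incidents, today):
    """Active incidents with ownership and age, oldest first."""
    rows = [
        {"id": i["id"], "owner": i["owner"],
         "age_days": (today - i["opened"]).days}
        for i in incidents
        if i["status"] == "open"
    ]
    return sorted(rows, key=lambda r: r["age_days"], reverse=True)


def service_owner_view(incidents, service):
    """Incident counts per category for one service, open and closed."""
    return dict(
        Counter(i["category"] for i in incidents if i["service"] == service)
    )


def executive_view(incidents):
    """High-level risk summary: open incidents and open P1 incidents."""
    open_incidents = [i for i in incidents if i["status"] == "open"]
    return {
        "open_incidents": len(open_incidents),
        "open_p1": sum(1 for i in open_incidents if i["priority"] == "P1"),
    }


today = date(2024, 3, 10)
print(operations_view(INCIDENTS, today))
print(service_owner_view(INCIDENTS, "checkout"))
print(executive_view(INCIDENTS))
```

Because all three views read the same list, a correction to one record changes every audience's numbers at once, which is the practical benefit of a shared governed model.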

Dashboards decline in value when teams treat them as a one-time build rather than a living part of incident operations.

If metrics remain unchanged while workflows, services, and escalation paths evolve, the dashboard becomes outdated. Users notice this quickly. They begin to rely on side conversations, screenshots, or manually compiled reports.

Warning signs include:

Establish a reporting governance rhythm:

A dashboard should evolve with the operating model. Otherwise, its relevance decays even if the underlying system remains active.

Before choosing charts, define the actual operational questions.

Examples include:

If a metric does not support a real decision, remove it.
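
This rule can be applied as a simple audit. The sketch below maps each metric to the decision it supports and flags any metric with no decision attached; the metric names are hypothetical, and the decisions are the examples used earlier in this article.

```python
# Hypothetical metric inventory: metric name -> decision it supports (or None).
METRICS = {
    "aged_backlog": "reassign resources",
    "sla_breach_risk": "trigger escalation",
    "incidents_by_service": "investigate a service",
    "reopen_rate": "review a process bottleneck",
    "total_tickets_all_time": None,
}


def audit_metrics(metric_to_decision):
    """Split metrics into those tied to a decision and those to remove."""
    keep = {m: d for m, d in metric_to_decision.items() if d}
    remove = [m for m, d in metric_to_decision.items() if not d]
    return keep, remove


keep, remove = audit_metrics(METRICS)
print("keep:", sorted(keep))
print("candidates for removal:", remove)
```

Keeping the inventory in a reviewable form like this makes it harder for a chart to stay on the dashboard only because it has always been there.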

A strong incident management dashboard should align reporting with outcomes such as:

This keeps the dashboard practical rather than merely descriptive.

Dashboard improvement should begin with data governance, not chart cosmetics.

At minimum, standardize:

Useful controls include:

Without these controls, even well-designed reporting will degrade.

Operational monitoring and retrospective analysis should not compete for the same screen.

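A practical structure is a live operations dashboard for active response, kept separate from a retrospective view used for trend analysis during review cycles.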

During active response, users should not need to interpret long-term analytical views. Keep immediate work signals prominent and reserve trend analysis for review cycles.

A dashboard should not only describe the environment. It should help users decide what to do next.

Action-oriented features include:

Each dashboard section should imply an action:

This is where BI maturity matters. With tools like FineBI, organizations can create drill-down dashboards that connect overview metrics to underlying incident detail, improving both executive visibility and operational follow-through.

A drill-down dashboard example created by FineBI

A practical dashboard usually needs the following components:

These components support both daily control and longer-term process review.

The best structure depends on the scale and maturity of your operations.

A simpler dashboard is often sufficient when:

In such environments, a clean overview with strong filtering may be enough.

Larger organizations often require:

In these cases, a more flexible analytics layer is valuable. FineBI can support enterprise teams that need self-service analysis, role-based views, and the ability to combine incident data with operational or business data for broader decision support.

Templates can accelerate dashboard creation, but they should never replace reporting logic.

Templates are useful for:

Adapt templates by asking:

A template should be a starting point, not a reporting strategy.

A strong incident management dashboard is not a final deliverable. It is part of an ongoing operating system for service reliability.

To keep reporting useful:

The most effective teams use dashboards not only to monitor incidents, but to improve the way incidents are managed, escalated, and learned from.

Use the checklist below to evaluate whether your current reporting is helping or misleading:

If several boxes remain unchecked, the issue is not only dashboard design. It is reporting discipline. Fix that foundation, and your incident management dashboard can become what it was meant to be: a trusted tool for faster response, clearer accountability, and continuous service improvement.

Many dashboards show available metrics instead of decision-focused signals. When charts are not tied to actions like escalation, prioritization, or resource allocation, teams get visibility without clarity.

Focus on metrics that help teams act quickly, such as active incident load, priority mix, SLA risk, backlog age, affected services, and recurrence trends. These indicators are more useful than broad totals alone.

Ticket count shows activity, but it does not explain severity, customer disruption, or business risk. A smaller number of high-impact incidents can matter far more than a large volume of low-priority tickets.

Usually they should be separated or clearly segmented because they answer different questions. Open incidents help teams manage current risk, while closed incidents are better for trend review and performance analysis.

Start by cleaning category definitions, removing unused KPIs, and tailoring views for different audiences. Tools like FineBI can also help combine operational and business data so the dashboard reflects real service impact.

The Author

Yida Yin

FanRuan Industry Solutions Expert
