A benchmark dashboard is a decision-making tool that helps operations leaders compare performance across teams, locations, and time periods in one place. Its business value is simple: it turns scattered KPIs into a fair, structured view of who is performing well, where gaps exist, and what to improve next.

If you manage multiple teams, sites, shifts, or business units, you already know the pain points. Raw reports make every group look different for reasons that may have nothing to do with actual performance. One site handles more volume. One team supports harder cases. One region has staffing shortages. Without benchmarking, leaders end up debating the numbers instead of acting on them.

A well-built benchmark dashboard solves that. It normalizes data, adds context, and highlights meaningful differences so leaders can make faster, better decisions.

In practical terms, a benchmark dashboard is a visual analytics layer that compares performance against a reference point, such as a target, a peer average, or a top performer.

For operations directors, plant managers, service leaders, and BI teams, this matters because comparison is what makes metrics useful. Seeing a team hit 92% SLA compliance is not enough. You need to know whether 92% is above average, below target, improving over time, or lagging behind similar teams.

A benchmark dashboard differs from a standard reporting dashboard in a few important ways:

That distinction is critical. Reporting tells you the score. Benchmarking tells you whether the score is good, bad, improving, or misleading.
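To make the difference between reporting and benchmarking concrete, here is a minimal Python sketch. It takes the 92% SLA compliance reading from the example above and describes it against a target, a peer average, and the prior period. The helper name and the target, peer-average, and prior-period values are hypothetical and chosen only for illustration.

```python
def describe_against_benchmarks(value: float, target: float,
                                peer_average: float, previous: float) -> dict[str, str]:
    """Describe one KPI reading relative to several reference points (hypothetical helper)."""
    if value > previous:
        trend = "improving"
    elif value < previous:
        trend = "declining"
    else:
        trend = "flat"
    return {
        "vs_target": "meets target" if value >= target else "below target",
        "vs_peers": "above peer average" if value > peer_average else "at or below peer average",
        "trend": trend,
    }

# Illustrative values: 92% SLA compliance vs. an assumed 95% target,
# a 90% peer average, and 89% in the prior period.
print(describe_against_benchmarks(0.92, target=0.95, peer_average=0.90, previous=0.89))
```

The same 92% reads as a success against peers and as a shortfall against target. A raw report leaves out exactly this context.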

For example, a service center leader may want to compare:

A strong benchmark dashboard puts all of those comparisons into one view, with filters and drill-downs that explain why differences exist.

The most effective benchmark dashboard balances breadth and focus. It should cover the few metrics that truly drive business outcomes while allowing fair comparisons across entities and time.

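Most benchmark dashboards should cover a core set of KPI categories: productivity, quality, compliance, cost efficiency, service level, utilization, throughput, and trend metrics.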

These metrics help leaders answer the most important benchmarking question: Who is performing better, under what conditions, and by how much?

Team-level benchmarking is often the fastest path to performance improvement because managers can directly influence coaching, staffing, workflows, and execution.

Useful team-to-team metrics include:

The key is normalization. If one team handles twice the volume or more complex work, raw totals will distort the picture. A benchmark dashboard should support normalized views such as per-unit metrics (for example, output per labor hour) and indexed scores.

This lets leaders compare execution quality, not just workload size.
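Here is a minimal Python sketch of normalization. It assumes each team's raw totals include cases closed, labor hours, and a complexity weight. The complexity weight is a hypothetical field where 1.0 means a typical caseload. The multiplicative complexity adjustment is one simple convention among several, not a standard formula.

```python
from dataclasses import dataclass

@dataclass
class TeamPeriod:
    # Hypothetical record: raw totals for one team over one period.
    team: str
    cases_closed: int
    labor_hours: float
    complexity_weight: float  # assumed average case difficulty; 1.0 = typical

def normalized_views(row: TeamPeriod) -> dict:
    """Convert raw totals into per-unit metrics so teams of different size compare fairly."""
    if row.labor_hours <= 0:
        raise ValueError("labor_hours must be positive")
    per_hour = row.cases_closed / row.labor_hours
    # Scale output by complexity so harder caseloads are not penalized.
    adjusted = per_hour * row.complexity_weight
    return {
        "team": row.team,
        "cases_per_labor_hour": round(per_hour, 2),
        "complexity_adjusted_per_hour": round(adjusted, 2),
    }

teams = [
    TeamPeriod("A", cases_closed=1200, labor_hours=800, complexity_weight=1.0),
    TeamPeriod("B", cases_closed=600, labor_hours=400, complexity_weight=1.3),
]
for t in teams:
    print(normalized_views(t))
```

Team A closes twice as many cases as Team B, yet both resolve 1.5 cases per labor hour. Once complexity is factored in, Team B's harder workload puts it ahead.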

Site benchmarking is more complex because location differences can be structural, not just operational. A high-performing urban facility may not be directly comparable to a rural site with different labor availability, customer mix, or logistics constraints.

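That is why a benchmark dashboard should account for factors such as workload, labor hours, case complexity, labor availability, customer mix, logistics constraints, and other local operating conditions.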

Fair comparison methods include peer groups of similar sites, indexed scores, and per-unit metrics.

This prevents misleading rankings and helps leaders identify truly replicable best practices.
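One way to compare sites fairly is to index each site against its own peer group, as in the Python sketch below. Each site is scored against the median of its peer group, where 100 means "at peer median." The site names, peer groups, and on-time rates are hypothetical. Using the median rather than the mean is a design choice that limits the influence of a single extreme site.

```python
from statistics import median

# Hypothetical site data: (site, peer_group, on_time_rate)
sites = [
    ("North", "urban", 0.94),
    ("Central", "urban", 0.90),
    ("Harbor", "urban", 0.86),
    ("Valley", "rural", 0.82),
    ("Ridge", "rural", 0.78),
]

def indexed_scores(rows):
    """Index each site against its own peer-group median (100 = at peer median)."""
    groups = {}
    for _site, group, value in rows:
        groups.setdefault(group, []).append(value)
    peer_medians = {g: median(vals) for g, vals in groups.items()}
    return {
        site: round(100 * value / peer_medians[group], 1)
        for site, group, value in rows
    }

print(indexed_scores(sites))
```

Valley's raw rate (82%) is below Harbor's (86%). Yet Valley indexes above its rural peers, while Harbor indexes below its urban peers. A raw ranking hides this kind of reversal.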

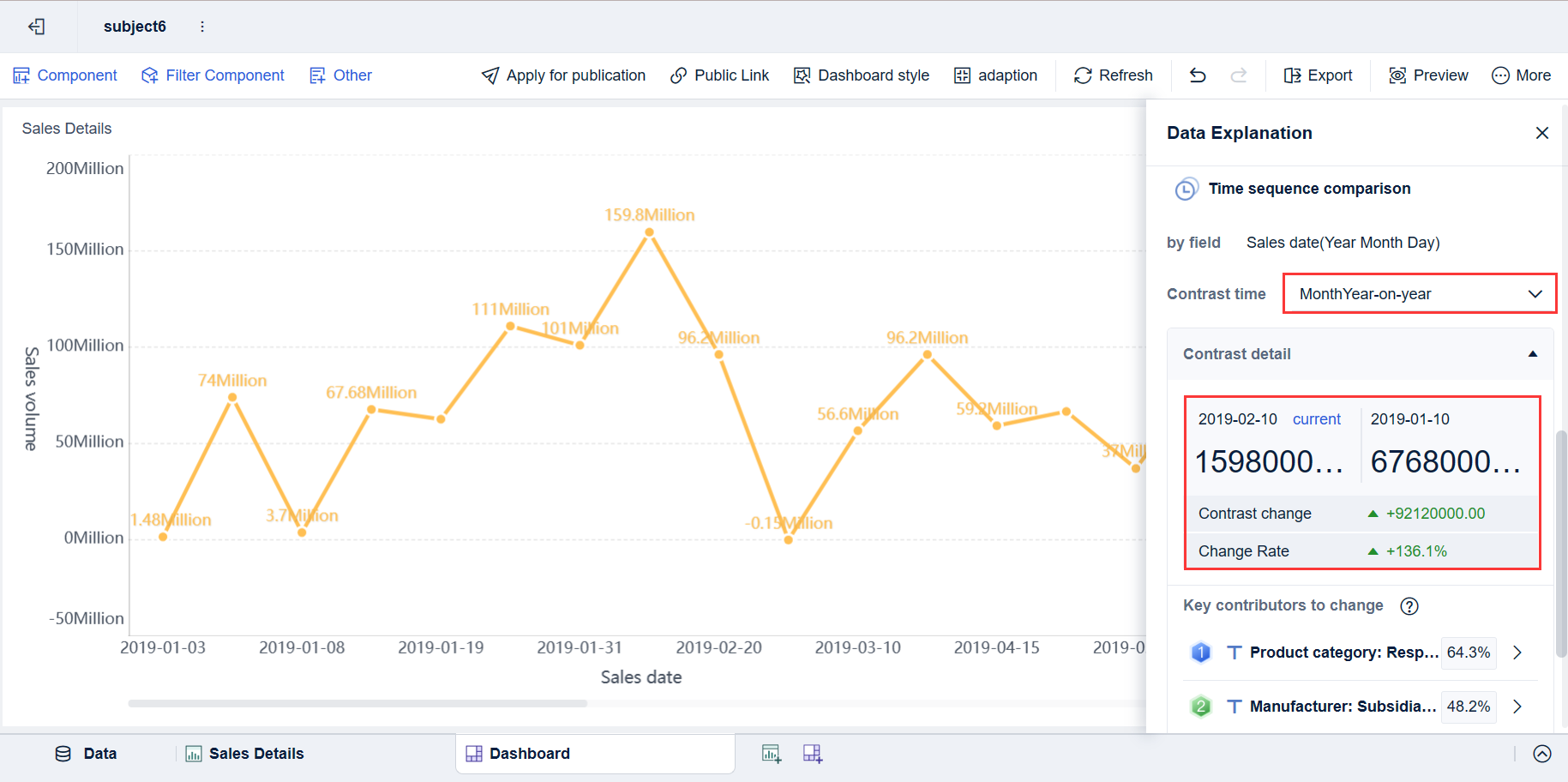

Time-based benchmarking helps leaders distinguish one-off variance from sustained change. It adds the context needed to avoid overreacting to short-term noise.

A practical benchmark dashboard should support:

To make those views useful, include:

For example, if customer wait time rises in December, the dashboard should help leaders see whether that is an unusual operational issue or a predictable seasonal spike.
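A simple way to separate a seasonal spike from an unusual issue is to compare the current period with the same period in prior years, not only with recent months. The Python sketch below assumes a z-score threshold of 2 and uses made-up December wait times. Both the threshold and the data are illustrative assumptions.

```python
from statistics import mean, stdev

def seasonal_check(current: float, same_period_history: list[float],
                   threshold: float = 2.0) -> str:
    """Compare a period with the same period in prior years (threshold is an assumed cutoff)."""
    if len(same_period_history) < 2:
        return "insufficient history"
    baseline = mean(same_period_history)
    spread = stdev(same_period_history)
    if spread == 0:
        return "in line with season" if current == baseline else "unusual"
    z = (current - baseline) / spread
    return "unusual" if abs(z) > threshold else "in line with season"

# Hypothetical average customer wait times, in minutes.
prior_decembers = [14.0, 15.0, 16.0]
recent_months = [9.0, 10.0, 11.0]  # September to November of the current year
this_december = 15.5

print(f"vs. trailing 3 months: {this_december - mean(recent_months):+.1f} min")
print("vs. prior Decembers:", seasonal_check(this_december, prior_decembers))
```

Against the last three months, December looks like a 5.5-minute deterioration. Against prior Decembers, it falls within the normal seasonal range. A reading of 19 minutes would instead be flagged as unusual.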

A benchmark dashboard only creates value if leaders trust it and can act on it quickly. The biggest design mistake is trying to show everything at once. The goal is not maximum data density. The goal is faster, fairer decisions.

Not all benchmarks are equally useful. The right benchmark depends on the decision you want to support.

Common benchmark types include internal targets, peer averages, and top performers.

You should also decide when to benchmark against top performers versus the median.
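The following Python sketch illustrates that choice. It computes each team's gap, in percentage points, to both the peer median and the top quartile. Treating the 75th percentile as the "top performer" benchmark is an assumption made here for illustration. Some organizations use the single best team or a different percentile instead. Team names and SLA rates are hypothetical.

```python
from statistics import median, quantiles

def benchmark_gaps(values: dict[str, float]) -> dict[str, dict[str, float]]:
    """Gap to the median and to the top quartile, in percentage points."""
    scores = list(values.values())
    med = median(scores)
    # method="inclusive" keeps the cut point within the observed range of values.
    top_quartile = quantiles(scores, n=4, method="inclusive")[2]
    return {
        team: {
            "vs_median_pts": round((v - med) * 100, 1),
            "vs_top_quartile_pts": round((v - top_quartile) * 100, 1),
        }
        for team, v in values.items()
    }

sla = {"A": 0.92, "B": 0.88, "C": 0.95, "D": 0.85, "E": 0.90}
for team, gaps in benchmark_gaps(sla).items():
    print(team, gaps)
```

Against the median, Team B is only 2 points behind. Against the top quartile, it is 4 points behind. The median gap suits questions about which teams need support. The top-quartile gap suits stretch goals.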

A seasoned consultant’s rule: if the benchmark does not support a decision, it should not be on the dashboard.

Leaders reject dashboards when comparisons feel unfair. To make benchmarking credible, segment the data before ranking it.

Key segmentation dimensions include:

This matters because two teams with the same KPI score may be operating in very different conditions.
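Segmenting before ranking takes only a few lines of Python. In the sketch below, teams are grouped by a segment label and ranked only against others in the same segment. The segment label here is a hypothetical split between "complex" and "standard" caseloads. The team names and resolution rates are invented for illustration.

```python
from collections import defaultdict

# Hypothetical data: (team, segment, resolution_rate)
rows = [
    ("Alpha", "complex", 0.71),
    ("Bravo", "complex", 0.66),
    ("Charlie", "standard", 0.84),
    ("Delta", "standard", 0.88),
    ("Echo", "standard", 0.80),
]

def rank_within_segments(rows):
    """Return {team: (segment, rank, segment_size)}, ranking only within each segment."""
    by_segment = defaultdict(list)
    for team, segment, value in rows:
        by_segment[segment].append((team, value))
    ranks = {}
    for segment, members in by_segment.items():
        ordered = sorted(members, key=lambda m: m[1], reverse=True)  # higher is better
        for position, (team, _value) in enumerate(ordered, start=1):
            ranks[team] = (segment, position, len(ordered))
    return ranks

for team, (segment, rank, size) in rank_within_segments(rows).items():
    print(f"{team}: #{rank} of {size} in {segment}")
```

Ranked globally, Alpha would sit fourth of five. Ranked within its segment, it leads the complex-case teams.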

To make outliers explainable, use:

When leaders can click from a red scorecard into workload mix, staffing levels, or process adherence, the benchmark dashboard becomes operationally useful instead of politically risky.

The best benchmark dashboard designs are visually simple but analytically rich. Each visual should answer a specific business question.

Recommended visual elements include:

A practical design principle is to combine summary and explanation in the same experience. Start with high-level comparisons, then allow drill-down to root causes.

A benchmark dashboard becomes especially valuable when it is tied to recurring management workflows.

Team reviews often suffer from anecdotal management. One supervisor says a team is overloaded. Another says a different team is underperforming. Without benchmarking, these discussions become subjective.

A benchmark dashboard improves team reviews by helping leaders:

This creates a more disciplined review process and reduces reliance on opinion.

In multi-site environments, leaders need a fast way to spot process drift and performance inconsistency. A benchmark dashboard helps compare locations side by side while accounting for differences in scale and context.

Common outcomes include:

This is especially valuable in manufacturing, retail, logistics, healthcare operations, and shared service environments where local execution varies widely.

Continuous improvement efforts fail when prioritization is weak. Teams generate too many ideas and act on the wrong ones.

A benchmark dashboard helps leaders prioritize actions based on gap size, business materiality, and fixability.

This makes improvement planning more rigorous. Instead of asking, "What should we work on?" leaders can ask, "Which benchmark gap is largest, most material, and most fixable?"
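One way to operationalize that question is a simple weighted score. The Python sketch below scales gap size and materiality against the largest candidate and takes fixability as a 0 to 1 judgment. It then combines the three with weights of 0.4, 0.4, and 0.2. The weights, scales, and example gaps are all assumptions for illustration, not a standard prioritization formula.

```python
from dataclasses import dataclass

@dataclass
class BenchmarkGap:
    name: str
    gap_size: float     # shortfall vs. benchmark, e.g. in index points
    materiality: float  # hypothetical estimated annual value at stake
    fixability: float   # 0.0-1.0 judgment of how addressable the gap is

# Assumed weights; adjust them to reflect your own priorities.
WEIGHTS = {"gap_size": 0.4, "materiality": 0.4, "fixability": 0.2}

def prioritize(gaps: list[BenchmarkGap]) -> list[tuple[str, float]]:
    """Rank gaps by a weighted blend of size, materiality, and fixability."""
    max_gap = max(g.gap_size for g in gaps) or 1.0  # guard against division by zero
    max_mat = max(g.materiality for g in gaps) or 1.0
    scored = [
        (g.name, round(WEIGHTS["gap_size"] * g.gap_size / max_gap
                       + WEIGHTS["materiality"] * g.materiality / max_mat
                       + WEIGHTS["fixability"] * g.fixability, 3))
        for g in gaps
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)

candidates = [
    BenchmarkGap("Site C scrap rate", gap_size=12, materiality=250_000, fixability=0.5),
    BenchmarkGap("Team B handle time", gap_size=6, materiality=400_000, fixability=0.9),
    BenchmarkGap("Region East on-time delivery", gap_size=9, materiality=100_000, fixability=0.3),
]
for name, score in prioritize(candidates):
    print(f"{score:.2f}  {name}")
```

The largest raw gap (Site C scrap rate) ranks second here. The Team B gap comes first because it carries more value at stake and is easier to close.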

Even experienced teams make avoidable errors when building a benchmark dashboard. These mistakes usually damage trust more than they damage analytics.

The most common issues are:

A good rule is to treat every ranking as the beginning of an investigation, not the end of one.

Choosing the right platform matters because benchmarking requires more than charts. It requires governed definitions, flexible slicing, reliable refreshes, and enough interactivity for leaders to explain outliers without leaving the dashboard.

If you are evaluating technology for a benchmark dashboard, prioritize these capabilities:

Without these capabilities, teams often fall back to spreadsheet-based benchmarking, which is slow, inconsistent, and difficult to govern.

Before launching a benchmark dashboard, leadership should align on a few operational questions:

To make rollout successful, follow these implementation best practices:

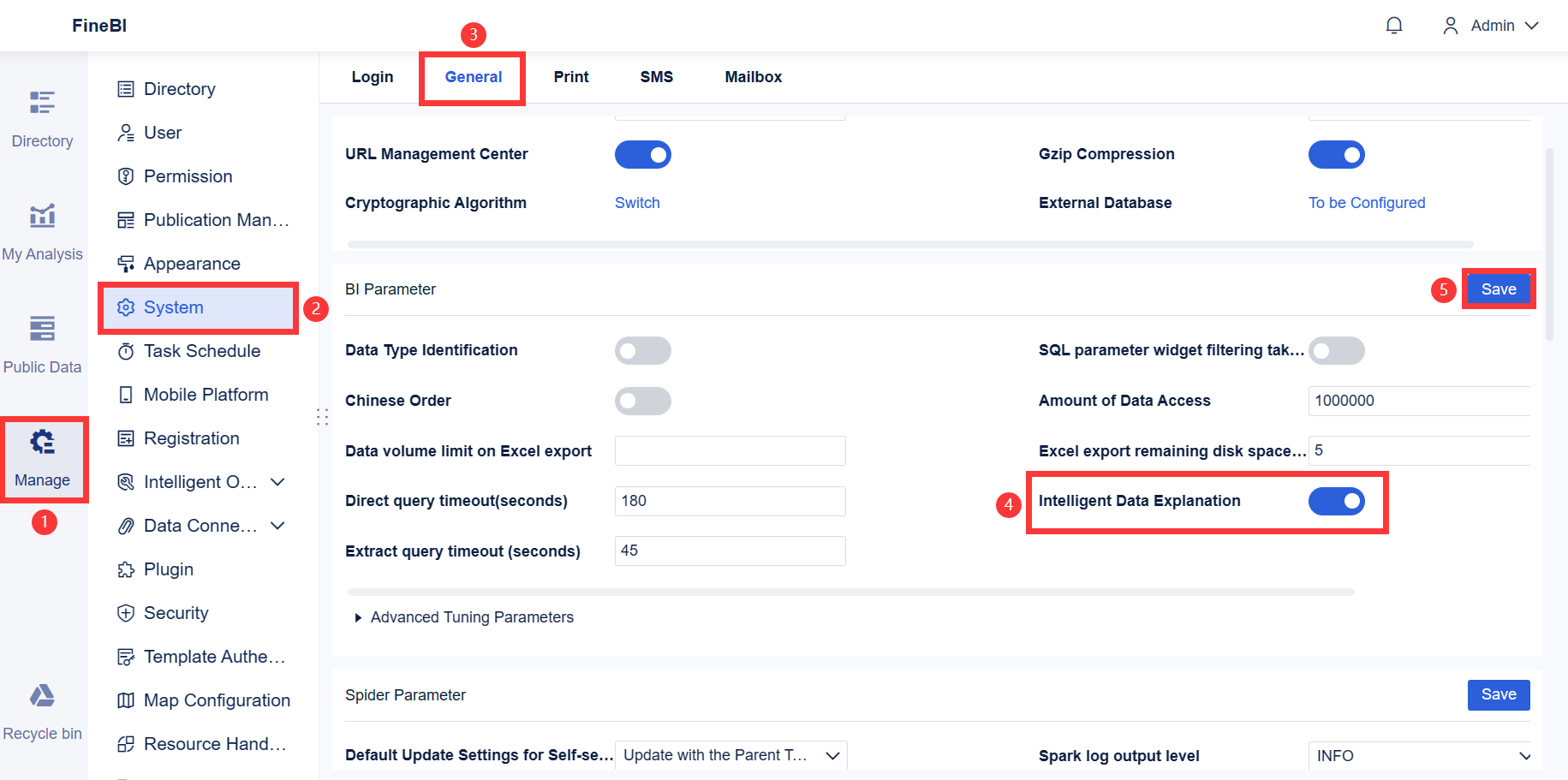

Building this manually is complex; use FineBI's ready-made templates to automate this entire workflow.

A modern benchmark dashboard is not just a collection of charts. It requires integrated data, benchmark logic, segmentation, historical analysis, permissions, and repeatable refresh processes. Trying to manage all of that through spreadsheets or one-off BI builds usually creates bottlenecks for both analysts and business users.

FineBI helps solve that by enabling teams to:

For enterprise decision-makers, the value is straightforward: less manual reporting, faster insight generation, and more consistent performance reviews across the business.

If your current reporting setup tells you what happened but not how one group compares to another, it is time to move beyond static dashboards. A well-designed benchmark dashboard gives leaders the context to prioritize action, replicate best practices, and improve performance with confidence. FineBI is the practical way to get there faster.

A benchmark dashboard is used to compare performance across teams, sites, or time periods against a reference point such as a target, peer average, or top performer. It helps leaders see where results are strong, where gaps exist, and where action is needed.

A regular KPI dashboard mainly shows current results, while a benchmark dashboard shows how those results compare to something meaningful. That extra context makes it easier to judge whether performance is actually good, poor, or improving.

Most benchmark dashboards include productivity, quality, compliance, cost efficiency, service level, utilization, throughput, and trend metrics. The right mix depends on the business process being compared and the decisions leaders need to make.

Fair comparisons require normalization for factors like workload, labor hours, case complexity, and local operating conditions. Using peer groups, indexed scores, and per-unit metrics helps prevent misleading rankings.

Operations leaders, plant managers, service managers, and BI teams benefit most because they need to compare performance across multiple groups and periods. It is especially useful in organizations managing several teams, locations, shifts, or business units.

The Author

Yida Yin

FanRuan Industry Solutions Expert
