A call center KPI dashboard should do one job exceptionally well: help leaders make faster, better operational decisions without digging through fragmented reports.

For operations directors, call center managers, workforce planners, and team leads, the pain is familiar. You have call data everywhere, but not enough clarity anywhere. One report shows volume. Another shows agent activity. A third shows CSAT. By the time someone connects the dots, the service-level miss has already happened, overtime has been approved, and customers have felt the impact.

A dashboard leaders actually use is not a wall of metrics. It is a decision system. It helps you answer questions like:

That is the business value. A well-built dashboard reduces reaction time, improves accountability, and ties frontline execution to executive outcomes.

Before choosing charts or metrics, define the decisions the dashboard must support. This is where most dashboard projects fail. Teams start with available data instead of operational use cases.

A high-value call center KPI dashboard should support daily, weekly, and monthly decisions across service delivery, staffing, coaching, and performance management.

At the daily level, leaders need to manage immediate operational risk. The dashboard should help answer:

This view is about control and speed. It should be built for intraday action, not postmortem analysis.

At the weekly level, the dashboard should support pattern recognition and performance management. Leaders should be able to see:

This is where operational management moves from monitoring to diagnosis.

At the monthly level, senior leaders need the dashboard to summarize business performance, resource efficiency, and customer outcomes. It should support decisions such as:

One of the most common mistakes is forcing all audiences onto the same screen.

Executives need:

Frontline managers need:

If your dashboard tries to satisfy both audiences equally on one page, it will satisfy neither. Build role-based views with a shared metric definition layer underneath.

Monitoring activity, diagnosing issues, and improving performance are three distinct dashboard functions.

Monitoring activity tells you what is happening now.

Examples:

Diagnosing issues tells you why performance moved.

Examples:

Improving performance tells you what to do next.

Examples:

The best call center dashboards are designed across all three layers, not just the first.

A dashboard becomes useful when it prioritizes a small number of metrics with clear operational meaning. Too many teams add every available KPI and end up with noise, not insight.

Focus on KPIs that connect directly to three areas:

You also need a balance between leading indicators and lagging indicators.

A mature call center KPI dashboard uses both. Leading indicators drive intervention. Lagging indicators validate whether those interventions worked.

Avoid vanity metrics that look busy but do not improve decisions. Total calls handled, for example, may matter in context, but on its own it says little about efficiency, quality, or customer outcome.

Below are the core KPI categories most operations teams should evaluate and define consistently.

Service level is the headline metric for service operations. If demand rises and staffing does not keep up, it will typically show the damage first.
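Below is a minimal Python sketch of one common service-level calculation: the share of answered calls picked up within a threshold. The 20-second default reflects the widely used 80/20 convention. Excluding abandoned calls from the denominator is an assumption. Operations differ on both choices, so record yours in the KPI definition.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Call:
    """One offered call: wait_seconds is time in queue; answered is False if the caller abandoned."""
    wait_seconds: float
    answered: bool


def service_level(calls: List[Call], threshold_seconds: float = 20.0) -> Optional[float]:
    """Percent of answered calls picked up within threshold_seconds.

    Assumption: abandoned calls are excluded from the denominator.
    Including them instead produces a lower figure.
    """
    answered = [c for c in calls if c.answered]
    if not answered:
        return None
    within = sum(1 for c in answered if c.wait_seconds <= threshold_seconds)
    return 100.0 * within / len(answered)


calls = [Call(5, True), Call(15, True), Call(45, True), Call(30, False)]
print(round(service_level(calls), 1))  # 66.7
```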

Leaders should monitor:

Average speed of answer (ASA) is one of the clearest signals of customer wait friction. It works best when viewed alongside call volume and staffing coverage, not in isolation.
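ASA is usually calculated as total queue time of answered calls divided by the number of answered calls. The sketch below assumes a hypothetical `(interval, wait_seconds, answered)` record shape. It returns ASA next to offered volume for each interval, so the two are always read together.

```python
from collections import defaultdict


def asa_by_interval(records):
    """records: iterable of (interval_label, wait_seconds, answered) tuples (hypothetical shape).

    Returns {interval: (offered_calls, asa_seconds)} so ASA is always read next to volume.
    ASA uses answered calls only; abandoned calls still count toward offered volume.
    """
    offered = defaultdict(int)
    wait_total = defaultdict(float)
    answered = defaultdict(int)
    for interval, wait, was_answered in records:
        offered[interval] += 1
        if was_answered:
            wait_total[interval] += wait
            answered[interval] += 1
    return {
        interval: (offered[interval],
                   wait_total[interval] / answered[interval] if answered[interval] else None)
        for interval in offered
    }


records = [
    ("09:00", 10, True), ("09:00", 30, True),
    ("09:30", 90, True), ("09:30", 120, False), ("09:30", 60, True),
]
for interval, (volume, asa) in sorted(asa_by_interval(records).items()):
    print(interval, volume, asa)
# 09:00 2 20.0
# 09:30 3 75.0
```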

Use it to:

Abandonment should trigger operational investigation, not just reporting. High abandonment can reflect poor service speed, but also channel mismatch or poor IVR design.
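Here is a hedged sketch of abandonment rate as abandoned calls over offered calls. Many centers treat very short abandons as noise and remove them from both sides; this example uses a 5-second cutoff, which is an assumption. The threshold is a parameter so the definition stays explicit.

```python
def abandonment_rate(abandoned_wait_seconds, offered_calls, short_abandon_seconds=5.0):
    """Abandoned calls as a percent of offered calls.

    Assumption: callers who hang up in under short_abandon_seconds are treated as
    short abandons and removed from both numerator and denominator.
    Pass short_abandon_seconds=0 to count every abandon.
    """
    short = sum(1 for w in abandoned_wait_seconds if w < short_abandon_seconds)
    counted_abandons = len(abandoned_wait_seconds) - short
    counted_offered = offered_calls - short
    if counted_offered <= 0:
        return None
    return 100.0 * counted_abandons / counted_offered


waits = [2, 40, 95, 130]  # queue time in seconds for each abandoned call (hypothetical)
print(round(abandonment_rate(waits, 50), 1))              # 6.1
print(abandonment_rate(waits, 50, short_abandon_seconds=0))  # 8.0
```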

Look at:

Average handle time (AHT) is useful, but easily misused. Shorter is not always better. If agents rush interactions and create repeat contacts, the operation becomes less efficient, not more.
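The usual AHT formula is talk time plus hold time plus after-call work, divided by handled contacts. The sketch below computes it. As an illustrative check on the rushing problem, it also flags agents whose low AHT comes with a high repeat-contact rate. The `repeat_within_7d` flag and both thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    agent: str
    talk_s: float
    hold_s: float
    wrap_s: float            # after-call work
    repeat_within_7d: bool   # hypothetical flag: same customer contacted again within 7 days


def aht_seconds(contacts):
    """AHT = (talk + hold + after-call work) / handled contacts."""
    if not contacts:
        return None
    total = sum(c.talk_s + c.hold_s + c.wrap_s for c in contacts)
    return total / len(contacts)


def fast_but_repeating(contacts, max_aht=300.0, min_repeat_rate=0.2):
    """Agents whose low AHT comes with a high repeat-contact rate (illustrative thresholds)."""
    by_agent = {}
    for c in contacts:
        by_agent.setdefault(c.agent, []).append(c)
    flagged = []
    for agent, items in sorted(by_agent.items()):
        repeat_rate = sum(c.repeat_within_7d for c in items) / len(items)
        if aht_seconds(items) < max_aht and repeat_rate >= min_repeat_rate:
            flagged.append(agent)
    return flagged


contacts = [
    Contact("ana", 120, 10, 20, True), Contact("ana", 140, 0, 30, False),
    Contact("ben", 300, 40, 60, False), Contact("ben", 280, 20, 50, False),
]
print(aht_seconds(contacts))         # 267.5
print(fast_but_repeating(contacts))  # ['ana']
```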

Use AHT in context with:

First contact resolution (FCR) is one of the most valuable indicators because it captures both efficiency and customer experience.
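FCR can be measured through post-contact surveys or inferred from repeat contacts. This sketch uses the repeat-contact proxy: a contact counts as resolved if the same customer does not contact the center again about the same reason within a window. The 7-day window and the tuple layout are assumptions.

```python
from datetime import datetime, timedelta


def first_contact_resolution(contacts, window=timedelta(days=7)):
    """Percent of contacts NOT followed by another contact from the same customer
    about the same reason within `window`.

    contacts: list of (customer_id, reason, timestamp) tuples (assumed layout).
    """
    if not contacts:
        return None
    ordered = sorted(contacts, key=lambda c: c[2])
    resolved = 0
    for i, (cust, reason, ts) in enumerate(ordered):
        repeated = any(
            c2 == cust and r2 == reason and ts < t2 <= ts + window
            for c2, r2, t2 in ordered[i + 1:]
        )
        if not repeated:
            resolved += 1
    return 100.0 * resolved / len(ordered)


d = datetime(2024, 5, 1)
contacts = [
    ("c1", "billing", d),
    ("c1", "billing", d + timedelta(days=2)),   # repeat inside the window
    ("c2", "outage", d),
    ("c3", "billing", d),
    ("c3", "billing", d + timedelta(days=10)),  # outside a 7-day window
]
print(first_contact_resolution(contacts))  # 80.0
```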

A rising FCR typically means:

Occupancy and schedule adherence are essential for workforce control.

Together, they often explain why service level misses happen even when headcount looks sufficient on paper.
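Below is a minimal sketch of occupancy and schedule adherence, assuming simplified inputs:

- **Occupancy** is handle time over handle time plus available idle time.
- **Adherence** is the share of scheduled minutes in which an agent's actual state matched the schedule.

The per-minute state lists are a hypothetical representation of workforce management data.

```python
def occupancy(handle_minutes, available_idle_minutes):
    """Share of logged-in, available time spent handling contacts, in percent."""
    staffed = handle_minutes + available_idle_minutes
    return None if staffed == 0 else 100.0 * handle_minutes / staffed


def adherence(schedule, actual):
    """Percent of scheduled minutes where the actual state matched the schedule.

    schedule / actual: per-minute state labels such as "phone" or "break",
    assumed to cover the same minutes (hypothetical representation).
    """
    if not schedule:
        return None
    matched = sum(1 for s, a in zip(schedule, actual) if s == a)
    return 100.0 * matched / len(schedule)


# One agent's 10-minute slice: scheduled on the phone, then a break, but the break was taken early.
schedule = ["phone"] * 8 + ["break"] * 2
actual = ["phone"] * 5 + ["break"] * 3 + ["phone"] * 2
print(adherence(schedule, actual))                               # 50.0
print(occupancy(handle_minutes=340, available_idle_minutes=60))  # 85.0
```

In the example the agent is logged in the whole time, so headcount looks fine on paper. Even so, half of the interval is out of adherence.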

High transfer or escalation rates usually point to a root-cause issue somewhere else in the system.

Common causes include:

These are diagnostic metrics with direct coaching and process implications.
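One way to act on this is to break transfer rate down by contact reason before looking at individual agents. The `(reason, transferred)` record shape and the reason labels below are hypothetical.

```python
from collections import Counter


def transfer_rate_by_reason(contacts):
    """contacts: list of (reason, transferred) tuples (hypothetical shape).

    Returns {reason: transfer_rate_percent}, highest first, so the reasons that
    drive most transfers surface before any single agent is blamed.
    """
    handled = Counter(reason for reason, _ in contacts)
    transferred = Counter(reason for reason, t in contacts if t)
    rates = {r: 100.0 * transferred[r] / handled[r] for r in handled}
    return dict(sorted(rates.items(), key=lambda kv: kv[1], reverse=True))


contacts = ([("billing", False)] * 8 + [("billing", True)] * 2
            + [("password reset", True)] * 6 + [("password reset", False)] * 4)
print(transfer_rate_by_reason(contacts))
# {'password reset': 60.0, 'billing': 20.0}
```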

A call center cannot be judged by speed alone. If service level improves while CSAT drops, the dashboard should make that contradiction visible immediately.
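The following minimal sketch makes that contradiction visible. It compares each period with the one before and flags periods where service level rose while CSAT fell. The 1-point thresholds and the weekly figures are illustrative.

```python
def speed_quality_conflicts(periods, min_sl_gain=1.0, min_csat_drop=1.0):
    """periods: ordered list of (label, service_level_pct, csat_pct).

    Flags each period where service level rose by at least min_sl_gain points
    while CSAT fell by at least min_csat_drop points versus the prior period.
    """
    conflicts = []
    for (_, sl_prev, csat_prev), (label, sl, csat) in zip(periods, periods[1:]):
        if sl - sl_prev >= min_sl_gain and csat_prev - csat >= min_csat_drop:
            conflicts.append(label)
    return conflicts


weeks = [("W1", 78.0, 86.0), ("W2", 84.0, 85.5), ("W3", 88.0, 82.0)]
print(speed_quality_conflicts(weeks))  # ['W3']
```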

Leaders should compare:

Cost per contact is critical for executive reporting because it connects operating performance to financial efficiency.
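Cost per contact is typically total operating cost for a period divided by contacts handled in that period. Which cost buckets count is an assumption in this sketch: labor only, or labor plus technology and overhead. Fix that choice in the KPI definition, because it changes the number substantially.

```python
def cost_per_contact(labor_cost, technology_cost, overhead_cost, contacts_handled):
    """Fully loaded cost divided by handled contacts.

    The set of cost buckets included is an assumption; labor-only versions
    produce noticeably lower numbers.
    """
    if contacts_handled <= 0:
        return None
    return (labor_cost + technology_cost + overhead_cost) / contacts_handled


# Hypothetical monthly figures
print(cost_per_contact(410_000, 45_000, 25_000, 96_000))      # 5.0
print(round(cost_per_contact(410_000, 0, 0, 96_000), 2))      # 4.27 (labor only)
```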

Use cost per contact to assess:

Forecast accuracy and staffing variance help determine whether service problems were preventable.

If forecast accuracy is poor, planning assumptions are weak.

If staffing variance is high, execution is weak.

If both are strong and service still misses, the problem may be process design or demand complexity.
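The three-branch logic above can be sketched as a small triage function. Two definitions here are assumptions, not standards:

- **Forecast accuracy** is measured as MAPE (mean absolute percentage error, lower is better).
- **Staffing variance** is the mean absolute gap between staffed and required FTE.

The 10% and 5% thresholds are also assumptions. The function is meant for intervals that already missed service level.

```python
def mape(actual, forecast):
    """Mean absolute percentage error of the volume forecast, in percent (skips zero-volume intervals)."""
    pairs = [(a, f) for a, f in zip(actual, forecast) if a > 0]
    return 100.0 * sum(abs(a - f) / a for a, f in pairs) / len(pairs)


def staffing_variance(staffed_fte, required_fte):
    """Mean absolute gap between staffed and required FTE per interval, in percent of required."""
    gaps = [abs(s - r) / r for s, r in zip(staffed_fte, required_fte)]
    return 100.0 * sum(gaps) / len(gaps)


def diagnose_service_miss(forecast_mape, staffing_var, max_mape=10.0, max_staffing_var=5.0):
    """Triage for an interval that missed service level (illustrative thresholds)."""
    findings = []
    if forecast_mape > max_mape:
        findings.append("planning: forecast assumptions are weak")
    if staffing_var > max_staffing_var:
        findings.append("execution: staffing did not match the plan")
    if not findings:
        findings.append("process design or demand complexity")
    return findings


m = mape([100, 120, 80], [95, 130, 80])
v = staffing_variance([10, 11, 9], [10, 12, 10])
print(round(m, 1), round(v, 1))     # 4.4 6.1
print(diagnose_service_miss(m, v))  # ['execution: staffing did not match the plan']
```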

Even the right KPIs will fail if the dashboard layout forces leaders to hunt for meaning.

A strong call center KPI dashboard should be organized around operational workflow, not around whatever the source systems happen to output.

This structure works because it mirrors how leaders think through problems.

Start with incoming pressure:

This tells leaders whether the operating environment changed.

Next, show what customers experienced:

This tells leaders whether demand was absorbed effectively.

Then show how the workforce performed:

This helps isolate whether the issue is staffing, execution, or process friction.

Finally, show outcome quality:

This prevents teams from optimizing speed while harming experience.

A metric tile with one number is rarely enough. Leaders need context.

Use:

Good dashboard design reduces interpretation time. If a leader has to click four layers deep to realize service level is deteriorating, the design failed.

The dashboard should emphasize what requires attention now.

That means:

Executives and managers do not need all numbers equally visible. They need the numbers that indicate action.
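Here is a hedged sketch of a tile that carries its own context (target and prior period), with tiles ordered so that misses surface first. Gaps are expressed relative to target, so metrics in different units, such as seconds and percentages, can be ranked together. The metric names and values are hypothetical, and targets are assumed to be nonzero.

```python
from dataclasses import dataclass


@dataclass
class Tile:
    name: str
    value: float
    target: float
    prior: float
    higher_is_better: bool = True

    def relative_gap(self) -> float:
        """Gap to target as a fraction of target; negative always means worse than target."""
        gap = (self.value - self.target) / self.target
        return gap if self.higher_is_better else -gap

    def change_vs_prior(self) -> float:
        return self.value - self.prior


def attention_order(tiles):
    """Worst relative miss first; tiles at or better than target sort last."""
    return sorted(tiles, key=lambda t: t.relative_gap())


tiles = [
    Tile("Service level %", 74.0, 80.0, 81.0),
    Tile("Abandonment %", 4.0, 5.0, 3.5, higher_is_better=False),
    Tile("ASA (s)", 48.0, 30.0, 33.0, higher_is_better=False),
]
# Prints ASA first, then service level, then abandonment.
for t in attention_order(tiles):
    status = "ACTION" if t.relative_gap() < 0 else "ok"
    print(f"{status:6} {t.name}: {t.value} (target {t.target}, {t.change_vs_prior():+} vs prior)")
```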

This should be the control tower for supervisors and real-time analysts.

Include:

Primary actions:

This view should summarize what happened today and where manager action is needed.

Include:

Primary actions:

This should be diagnostic, comparative, and management-oriented.

Include:

Primary actions:

This should be concise and outcome-focused.

Include:

Primary actions:

A dashboard is not complete when the visuals are done. It only becomes operationally valuable when it is embedded in a repeatable review process.

Without workflow, dashboards become passive screens that everyone glances at and nobody uses to drive accountability.

Not every metric should refresh at the same speed.

Use refresh logic like this:

This matters because stale data destroys trust, while unnecessary refresh rates add complexity without decision value.
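Below is a minimal sketch of tiered refresh logic with a staleness check. The tiers follow the split between intraday operational views (queue health, staffing, agent availability) and slower trend views. The specific intervals, metric names, and the 1.5x grace factor are hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical refresh tiers; the intervals are illustrative, not a standard.
REFRESH_TIERS = {
    "intraday": timedelta(minutes=1),  # queue health, staffing, agent availability
    "daily": timedelta(days=1),        # daily summaries
    "weekly": timedelta(weeks=1),      # trend, coaching, and planning views
}

# Hypothetical metric-to-tier assignments.
METRIC_TIER = {
    "calls_in_queue": "intraday",
    "agents_available": "intraday",
    "aht": "daily",
    "csat_trend": "weekly",
}


def stale_metrics(last_refreshed, now, grace=1.5):
    """Metrics whose data is older than grace x their tier's refresh interval."""
    stale = []
    for metric, tier in METRIC_TIER.items():
        age = now - last_refreshed[metric]
        if age > REFRESH_TIERS[tier] * grace:
            stale.append(metric)
    return stale


now = datetime(2024, 5, 1, 10, 0)
last = {
    "calls_in_queue": now - timedelta(seconds=30),
    "agents_available": now - timedelta(minutes=5),
    "aht": now - timedelta(hours=20),
    "csat_trend": now - timedelta(days=3),
}
print(stale_metrics(last, now))  # ['agents_available']
```

Surfacing staleness explicitly supports trust: a frozen intraday tile that still looks live is more misleading than one marked as stale.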

Every KPI should have a documented owner and a clear definition.

At minimum, define:

For example, if one team defines AHT differently from another, your dashboard becomes politically contested rather than operationally useful.
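The following sketches a shared metric definition layer. Each KPI is registered once with its formula, owner, source, and refresh cadence, and any dashboard metric without a registered owner is flagged. The field list, the `ACD` source name, and the example owner are assumptions, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiDefinition:
    name: str
    formula: str   # human-readable calculation rule
    owner: str     # accountable person or team
    source: str    # system of record (hypothetical name below)
    refresh: str   # e.g. "intraday", "daily", "weekly"


REGISTRY = {
    "aht": KpiDefinition(
        name="Average handle time",
        formula="(talk + hold + after-call work) / handled contacts",
        owner="Workforce planning",
        source="ACD",
        refresh="daily",
    ),
}


def undefined_metrics(dashboard_metrics, registry=REGISTRY):
    """Metrics shown on a dashboard that lack a registered definition or owner."""
    return [m for m in dashboard_metrics
            if m not in registry or not registry[m].owner.strip()]


print(undefined_metrics(["aht", "fcr"]))  # ['fcr']
```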

A practical operating rhythm might look like this:

- Intraday review
- Daily stand-up
- Weekly operations review
- Monthly leadership review

This turns the dashboard into a management system rather than a reporting artifact.

Most dashboards stop at presentation. Strong operations teams go one step further and make movement explainable.

A KPI should be interpreted from at least three angles:

This three-way comparison helps separate performance failure from planning failure.
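This sketch assumes the three angles are:

- actual versus target
- actual versus forecast (the value the plan projected)
- actual versus prior period

Under that assumption, a miss that roughly matches the forecast suggests the plan itself was insufficient. A result well below forecast suggests execution fell short. The prior-period delta is kept for trend context only. The 1-point tolerance is illustrative, and the logic assumes a higher-is-better KPI such as service level.

```python
def three_way_view(actual, target, forecast, prior):
    """Deltas of one KPI against target, forecast (plan), and prior period."""
    return {
        "vs_target": actual - target,
        "vs_forecast": actual - forecast,
        "vs_prior": actual - prior,
    }


def classify_miss(view, tolerance=1.0):
    """Rough triage for a higher-is-better KPI (illustrative tolerance)."""
    if view["vs_target"] >= 0:
        return "on target"
    if view["vs_forecast"] < -tolerance:
        return "performance failure"  # result fell well short of what the plan projected
    return "planning failure"         # result roughly matched a plan that already missed target


print(classify_miss(three_way_view(actual=75.0, target=80.0, forecast=75.5, prior=79.0)))  # planning failure
print(classify_miss(three_way_view(actual=72.0, target=80.0, forecast=82.0, prior=81.0)))  # performance failure
```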

Numbers without context often lead to bad decisions.

Examples:

Your dashboard should support annotations, commentary, or contextual filters so leaders do not misread the story.

Every KPI change should lead to one of three outcomes:

This is how dashboards stop being descriptive and start becoming operationally transformative.

The fastest way to lose dashboard adoption is to treat the first version as final. Call center operations change constantly. New channels appear. Staffing models shift. Business priorities evolve. The dashboard must be governed as a living asset.

Do not begin with a blank page unless you have strong internal BI maturity. A focused template accelerates alignment because it gives teams a baseline structure and common language.

Start with a template that covers:

Then adapt it based on:

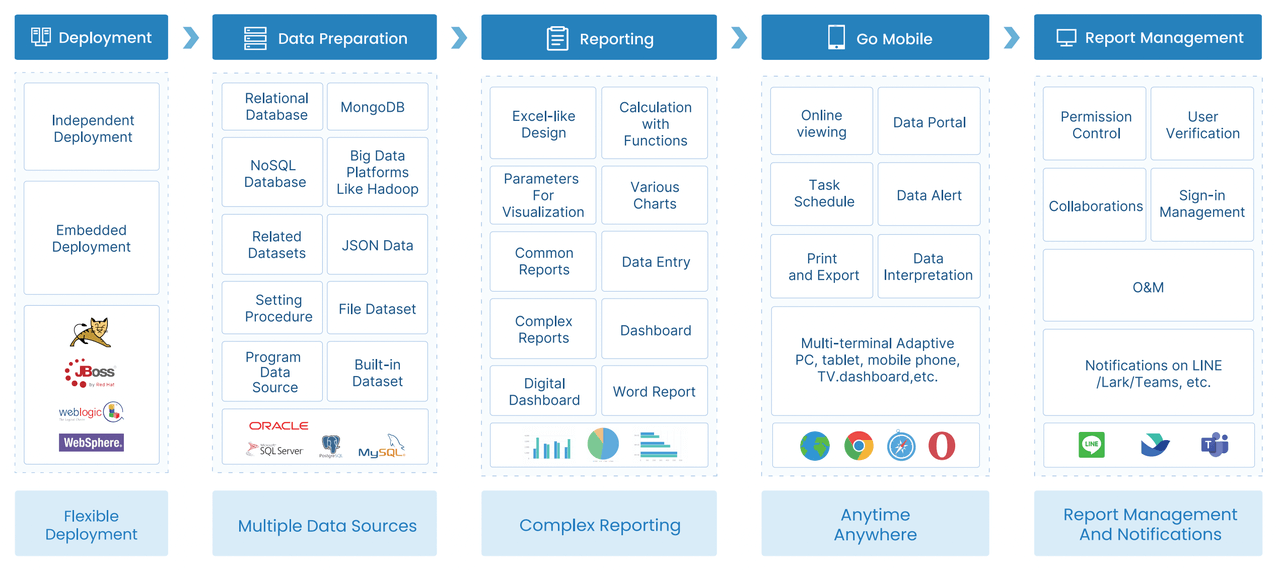

The right dashboard software should reduce manual effort and increase trust in the data.

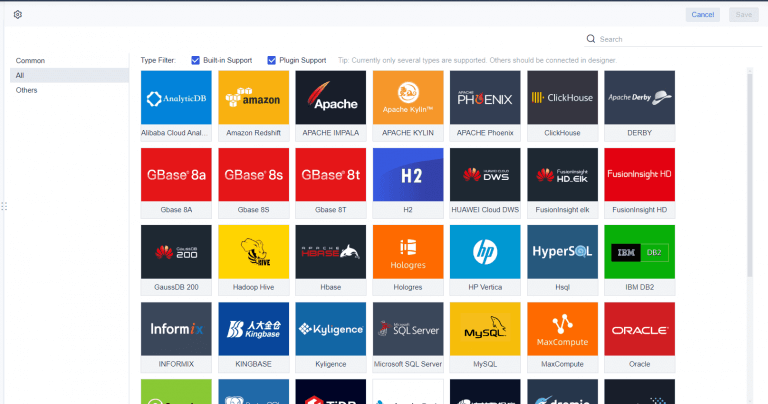

Key evaluation criteria include:

A quarterly dashboard audit is a smart operating discipline.

Review:

A dashboard with fewer, sharper metrics usually performs better than one that keeps expanding.

When everything is visible, nothing is obvious. Keep each dashboard view focused on a specific decision-making context.

If nobody owns the metric, nobody trusts the number. Define every KPI and assign accountability.

If you overemphasize AHT and underweight FCR, quality, or CSAT, agents will optimize for speed at the expense of resolution.

A dashboard is only valuable if it changes behavior. If your review process does not convert insight into action, the dashboard becomes decorative.

At this point, the pattern should be clear: building an effective call center KPI dashboard is not just about choosing charts. It requires KPI definition, role-based design, workflow alignment, governance, refresh logic, drill-down capability, and ongoing maintenance.

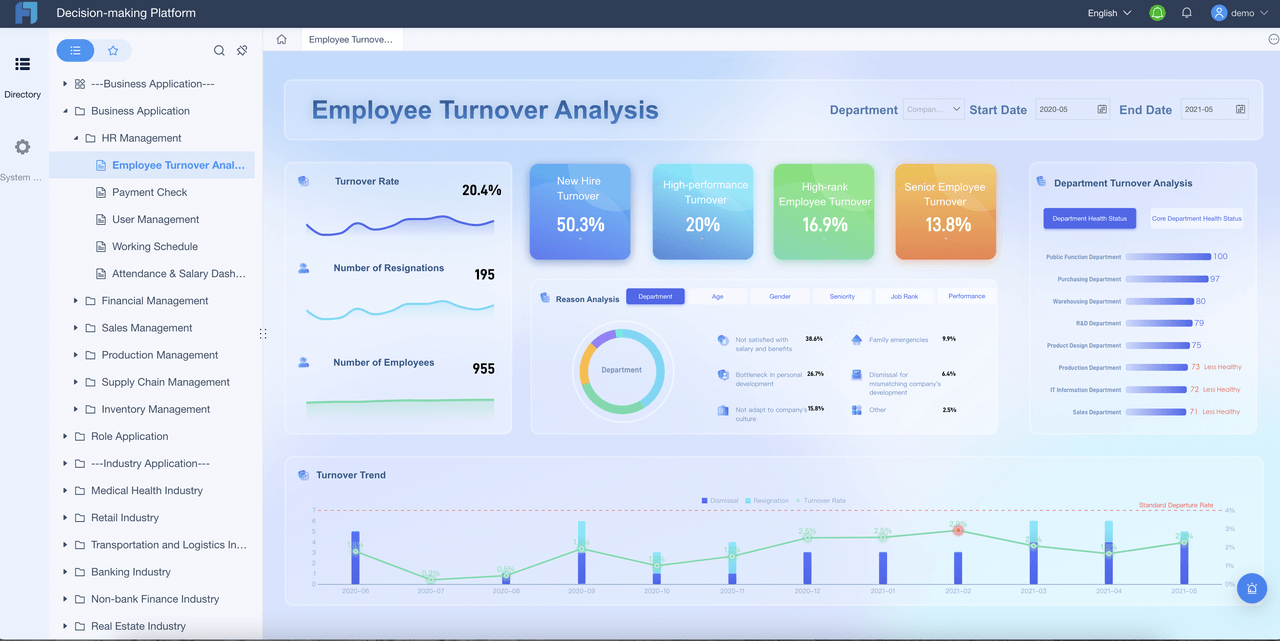

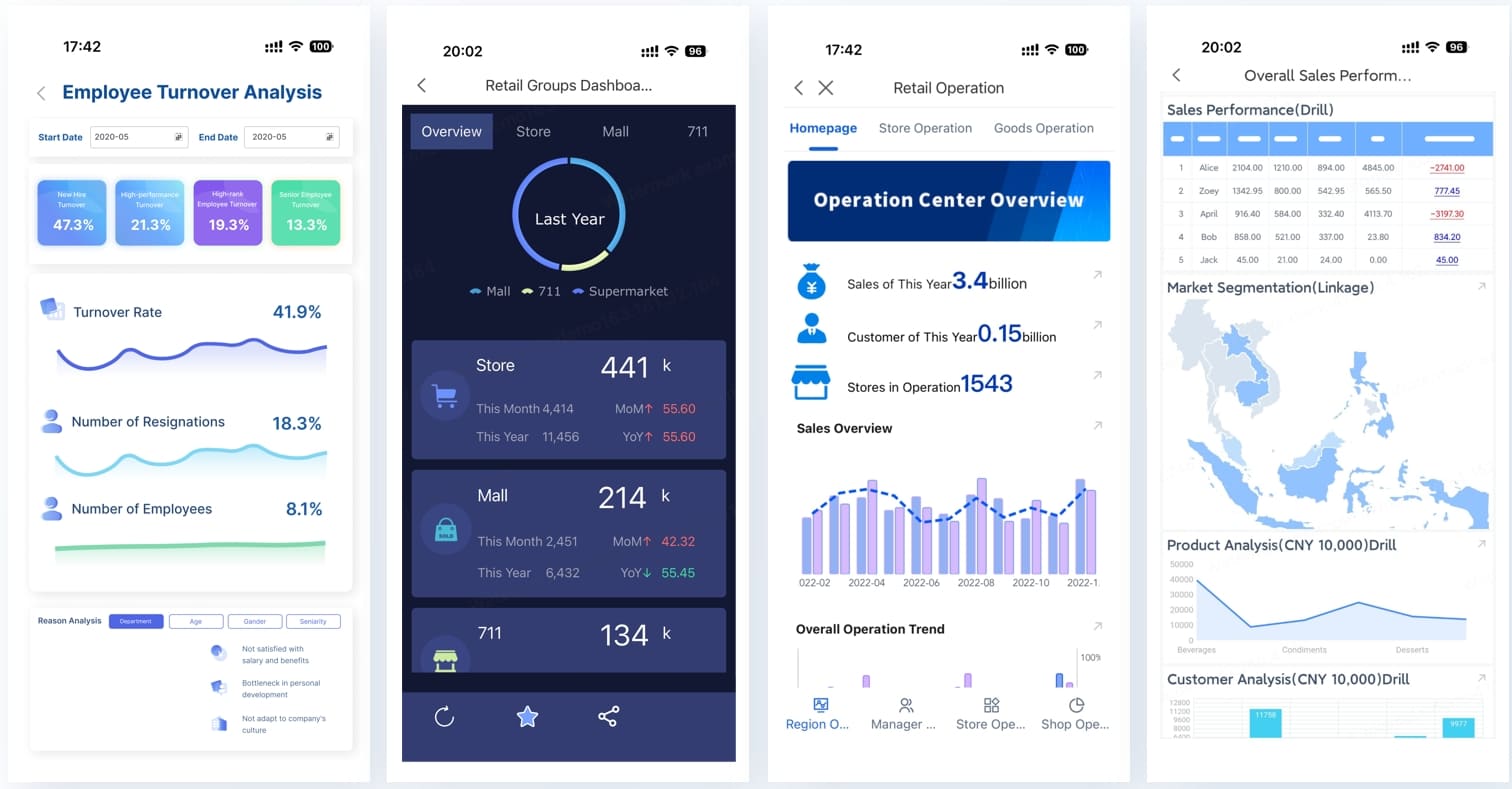

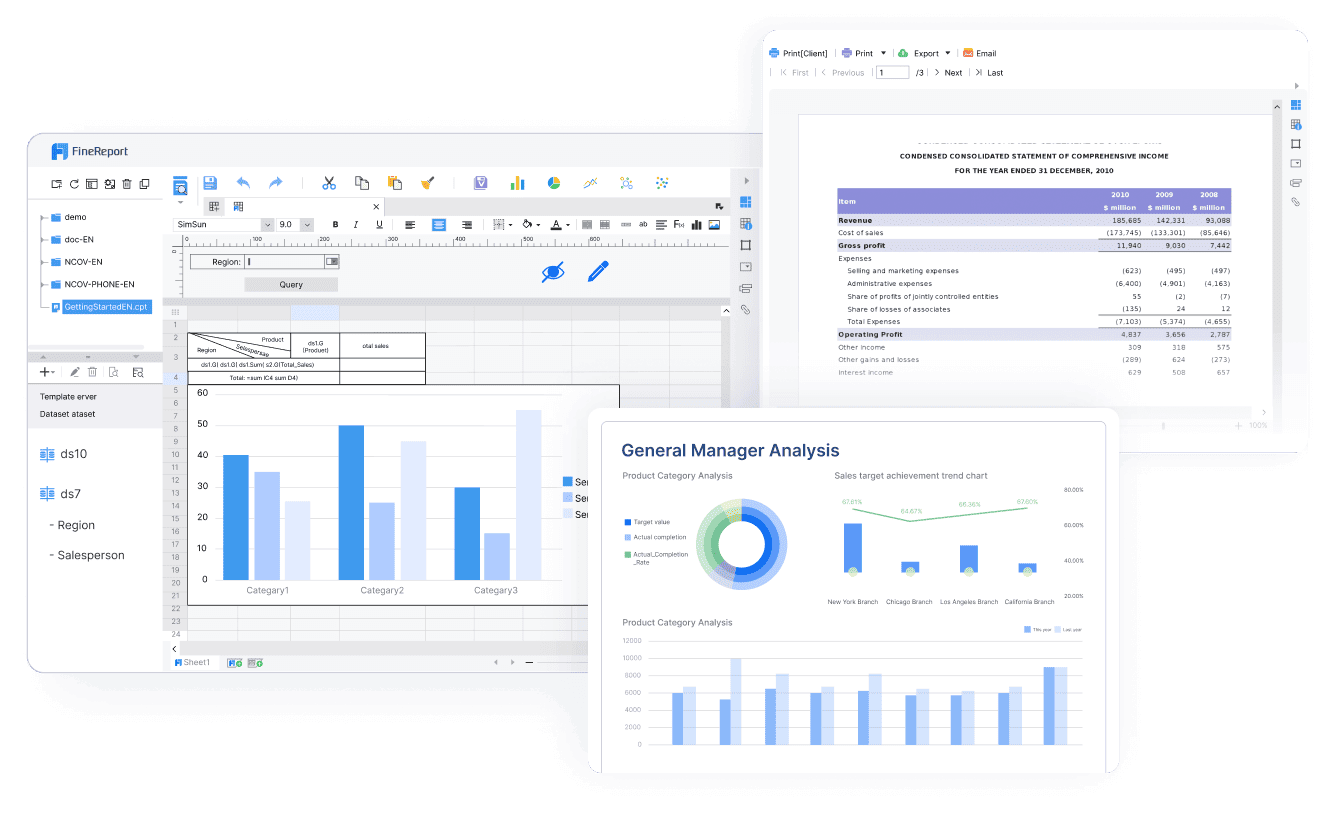

Building all of this manually is complex. FineBI lets you start from ready-made templates and automate this entire workflow.

FineBI helps operations leaders move faster by giving them a practical way to:

For enterprise teams, this is the difference between having reporting and having a true management system. Instead of spending cycles assembling numbers, leaders can focus on staffing decisions, coaching priorities, service recovery, and continuous improvement.

If your current reporting process depends on exported files, inconsistent definitions, and static presentations, you do not need more dashboard meetings. You need a better platform.

FineBI is the practical next step for teams that want to build a call center KPI dashboard operations leaders actually use, trust, and act on.

A useful call center dashboard should focus on service level, average speed of answer, abandonment rate, queue volume, occupancy, adherence, average handle time, and customer outcomes such as CSAT or first contact resolution. The best mix combines leading indicators for fast action with lagging indicators that confirm long-term impact.

A dashboard is built for decisions, not just visibility. It brings real-time and historical metrics into one view so leaders can spot risk quickly, diagnose the cause, and respond before service levels slip further.

Operations leaders, call center managers, workforce planners, supervisors, and team leads all benefit from it, but they should not all use the same view. Executives usually need trend summaries and business impact, while frontline managers need queue-level detail and coaching signals.

Real-time or near-real-time updates are best for intraday management, especially for queue health, staffing, and agent availability. Weekly and monthly views should also be included to support trend analysis, coaching, and planning.

It should be designed around the decisions leaders need to make, not around every metric available in the system. A strong dashboard stays focused, separates monitoring from diagnosis, and makes it clear what action to take next.

The Author

Yida Yin

FanRuan Industry Solutions Expert
