Executive dashboards fail when they look impressive but do not help leaders decide what to do next. The real purpose of reviewing interactive dashboard examples is not to copy layouts. It is to understand how strong dashboards reduce executive friction, surface risk earlier, and connect top-line performance to fast action.

For CIOs, operations directors, finance leaders, and business unit heads, the pain points are familiar: too many disconnected reports, endless status meetings, conflicting KPI definitions, and no easy way to move from a red metric to the reason behind it. An executive dashboard should solve those problems in one place.

A strong executive dashboard delivers business value in four ways:

If you are evaluating interactive dashboard examples, use this guide as a practical framework for building one that executives will actually trust and use.

Most executives do not need more data. They need a better decision environment. The best dashboard examples succeed because they are built around decisions first, visuals second.

Before selecting charts, filters, or sources, define what the dashboard is supposed to support. Is the CEO reviewing growth and forecast confidence? Is the COO monitoring delivery risk and capacity strain? Is the CFO watching margin erosion and cash flow pressure? The dashboard should reflect those recurring decisions clearly.

The next lesson is hierarchy. Executive teams need a fast, strategic scan before they need detail. That means separating top-level outcomes from operational diagnostics. The first view should answer, “Are we on track?” Only then should users drill into “What changed?” and “Where do we need to act?”

Interactivity is what turns a dashboard from a static status board into a decision tool. A leader should be able to investigate a trend, compare periods, filter segments, and isolate drivers without opening three more reports or waiting for an analyst.

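Clear success criteria matter too. A dashboard initiative should not be judged by visual polish alone. It should be measured by outcomes such as faster decision cycles, shorter time to root cause, higher adoption, fewer manual reporting meetings, and quicker response to alerts.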

When assessing interactive dashboard examples, these are the operational and business KPIs that matter most:

These KPIs help determine whether the dashboard is creating business value, not just generating screen views.

The best executive dashboards do not start with available data. They start with repeated business questions.

Ask what leaders regularly need to know in board meetings, weekly reviews, and monthly planning sessions. Common examples include:

Once those questions are clear, map each KPI to four things: a clear owner, a review cadence, a threshold, and an expected action.

This mapping prevents a common dashboard mistake: showing numbers that generate discussion but not action.
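As a rough illustration, the mapping can be kept as structured data and checked for gaps. The sketch below assumes the four mapped fields are owner, review cadence, threshold, and expected action, which is what this guide asks of each metric elsewhere. The class name, function name, and sample KPIs are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiMapping:
    """One KPI and the four things it is mapped to (hypothetical structure)."""
    name: str
    owner: str
    review_cadence: str
    threshold: str
    expected_action: str


def incomplete_mappings(mappings):
    """Return the names of KPIs that have at least one blank mapping field."""
    incomplete = []
    for m in mappings:
        fields = (m.owner, m.review_cadence, m.threshold, m.expected_action)
        if any(not value.strip() for value in fields):
            incomplete.append(m.name)
    return incomplete


# Sample entries are invented for illustration only.
mappings = [
    KpiMapping("Gross margin", "CFO", "monthly", "below 40%",
               "review pricing and cost drivers"),
    KpiMapping("Website visits", "Marketing", "weekly", "", ""),
]

print(incomplete_mappings(mappings))
```

A KPI that shows up in this list has no threshold or no agreed response, which makes it a candidate for removal from the first view.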

Keep the first view limited. Executives should see a small set of metrics that reveal performance, risk, and momentum. In most cases, that means a focused summary rather than an overloaded command center.

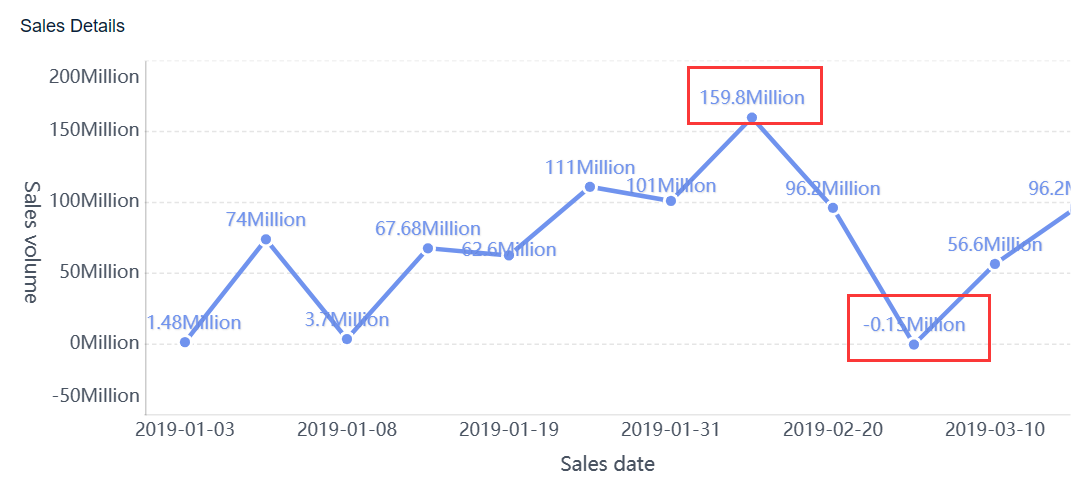

A dashboard that only shows lagging results tells leaders what already happened. A decision-ready dashboard also includes signals that predict what is likely to happen next.

For example:

This combination gives leaders both confirmation and warning. A growth dashboard may show current revenue above target while pipeline conversion quality drops. An operations dashboard may show acceptable service levels today while backlog and cycle time signal future disruption.

Always present targets, thresholds, and trends alongside actual performance. A KPI without context is easy to misread.
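One possible way to attach that context is to compute status and trend next to the raw number. This is a minimal sketch for a higher-is-better KPI. The 95% warning ratio, the color names, and the function names are assumptions, not a standard.

```python
def kpi_status(actual, target, warning_ratio=0.95):
    """Classify a higher-is-better KPI as green, amber, or red against its target."""
    if target <= 0:
        raise ValueError("target must be positive")
    attainment = actual / target
    if attainment >= 1.0:
        return "green"
    if attainment >= warning_ratio:
        return "amber"
    return "red"


def kpi_trend(current, previous):
    """Describe the direction of movement since the previous period."""
    if current > previous:
        return "up"
    if current < previous:
        return "down"
    return "flat"


def describe_kpi(name, actual, target, previous):
    """Combine actual, target, status, and trend into one readable line."""
    status = kpi_status(actual, target)
    trend = kpi_trend(actual, previous)
    return (f"{name}: {actual:,.0f} vs target {target:,.0f} "
            f"({actual / target:.0%}), {status}, trend {trend}")


# Figures are invented for illustration.
summary_line = describe_kpi("Revenue", 970_000, 1_000_000, 1_010_000)
print(summary_line)
```

The same actual value reads very differently once the target and the prior period sit beside it, which is the point of showing context on the first view.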

Many executive dashboard failures are really governance failures. If teams argue over formulas during review meetings, the dashboard has already lost credibility.

Before design starts, standardize formulas, owners, thresholds, and refresh timing.

Add plain-language metric definitions for anything that could be interpreted in multiple ways. Terms like “active customer,” “qualified pipeline,” “gross retention,” or “on-time delivery” often vary across departments. If the logic is unclear, adoption will be low no matter how polished the interface looks.

The value of interactive dashboard examples becomes most obvious in drill-down design. Executives need a quick path from summary performance to the specific cause of deviation.

Every top-line KPI should have a logical investigation path. A red revenue number should not force the user to guess where to click next.

A good drill-down flow typically moves through levels such as:

This progression helps leaders go from “performance is off” to “here is the source of the issue” in minutes.

Keep filters persistent throughout the journey. If a leader selects a quarter, region, or channel, that context should remain in place while drilling deeper. Losing filter state creates confusion and slows interpretation.
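A minimal sketch of persistent filter state, assuming the dashboard tracks the current drill level and the active filters together. `ViewState` and its methods are hypothetical names, not part of any specific BI tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ViewState:
    """Current drill level plus every filter the user has selected so far."""
    level: str
    filters: tuple = ()  # (dimension, value) pairs

    def with_filter(self, dimension, value):
        """Return a new state with one filter added or replaced."""
        kept = tuple((d, v) for d, v in self.filters if d != dimension)
        return ViewState(self.level, kept + ((dimension, value),))

    def drill_to(self, level):
        """Move to a deeper level while carrying every active filter along."""
        return ViewState(level, self.filters)


summary = (ViewState("summary")
           .with_filter("quarter", "Q3")
           .with_filter("region", "EMEA"))
detail = summary.drill_to("product line")

print(detail.level, dict(detail.filters))
```

Because drilling never rebuilds the filter set, the quarter and region chosen on the summary view still apply on the detail view.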

Strong executive dashboards do more than display numbers. They explain movement.

Useful interactive patterns include:

The best dimensions depend on the business model, but common ones include:

This is what separates decorative dashboards from operationally useful ones. Leaders should not have to ask analysts for a second report to understand why a KPI moved.
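One simple way to explain movement is to rank segments by how much each one changed the total between two periods. The sketch below assumes an additive KPI, such as revenue by region. The function name and the figures are illustrative only.

```python
def contribution_to_change(previous, current):
    """Rank segments by their change between two periods, largest move first.

    previous and current map a segment name to its value for an additive KPI.
    """
    segments = set(previous) | set(current)
    deltas = {s: current.get(s, 0) - previous.get(s, 0) for s in segments}
    # Sort by size of the move, then by name so ties are ordered predictably.
    return sorted(deltas.items(), key=lambda item: (-abs(item[1]), item[0]))


# Invented regional figures for two periods.
previous = {"North": 400, "South": 300, "West": 300}
current = {"North": 410, "South": 220, "West": 310}

ranked = contribution_to_change(previous, current)
total_change = sum(delta for _, delta in ranked)

for segment, delta in ranked:
    print(f"{segment}: {delta:+d}")
print(f"Total change: {total_change:+d}")
```

Here the total fell even though two of three regions grew, and the ranking points straight at the region responsible.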

Executives use dashboards under time pressure. The interface must support the most common actions immediately.

Make these actions obvious:

Avoid cluttered layouts, buried controls, and dense menu systems. If users need training just to find a regional filter or comparison toggle, the design is too complex for executive use.

A pragmatic rule: if the dashboard cannot be used effectively during a live leadership meeting, it is not ready.

A dashboard becomes much more valuable when it does not wait for scheduled review meetings to surface problems.

Alerts should fire when something requires attention, not when data simply changes. High-value alert triggers include:

Each alert should include enough context to support a decision. A useful executive alert answers:

Without context, alerts create noise instead of action.
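The difference between "something requires attention" and "data simply changed" can be sketched as alerting only when a KPI crosses into breach, rather than on every new reading. The threshold, the context fields, and the sample readings below are assumptions for illustration.

```python
def breach_alerts(readings, threshold):
    """Return one alert each time a reading drops below the threshold.

    Consecutive readings that stay in breach do not raise further alerts.
    """
    alerts = []
    in_breach = False
    for period, value in readings:
        breached = value < threshold
        if breached and not in_breach:
            alerts.append({
                "period": period,
                "value": value,
                "threshold": threshold,
                "shortfall": threshold - value,
            })
        in_breach = breached
    return alerts


# Invented weekly on-time delivery percentages.
readings = [("W1", 96), ("W2", 94), ("W3", 89), ("W4", 87), ("W5", 92), ("W6", 88)]
alerts = breach_alerts(readings, threshold=90)

for alert in alerts:
    print(alert)
```

Six readings changed, but only two alerts fire: one when the metric first falls below the threshold and one when it falls again after recovering.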

Not every KPI shift deserves the same urgency. Executive dashboards should separate strategic risk from operational exceptions and informational updates.

A simple model works well:

Routing matters as much as severity. Alerts should go to the people who can act, not just the people who requested visibility. An at-risk retention trend may go to customer success leadership, while a forecast miss may route to sales and finance owners.
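A minimal routing sketch, using the three categories and the two routing examples mentioned above. The alert type keys, the default recipient, and the delivery channels ("immediate" versus "digest") are assumptions rather than a prescribed model.

```python
from enum import Enum


class Severity(Enum):
    STRATEGIC_RISK = "strategic risk"
    OPERATIONAL_EXCEPTION = "operational exception"
    INFORMATIONAL = "informational update"


# Hypothetical routing table: alert type -> people who can act on it.
ROUTES = {
    "retention_at_risk": ["customer success leadership"],
    "forecast_miss": ["sales owner", "finance owner"],
}
DEFAULT_ROUTE = ["dashboard owner"]


def route_alert(alert_type, severity):
    """Pick recipients by alert type and a delivery channel by severity."""
    recipients = ROUTES.get(alert_type, DEFAULT_ROUTE)
    # Assumption: informational updates are batched, everything else is pushed.
    channel = "digest" if severity is Severity.INFORMATIONAL else "immediate"
    return {
        "recipients": recipients,
        "channel": channel,
        "severity": severity.value,
    }


print(route_alert("forecast_miss", Severity.STRATEGIC_RISK))
print(route_alert("data_refresh_complete", Severity.INFORMATIONAL))
```

Keeping recipients and urgency as separate decisions makes it easier to change who gets an alert without changing how loudly it arrives.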

Poorly designed alerting destroys trust quickly. If executives receive too many low-value notifications, they will ignore the important ones too.

Use these controls to keep alerts effective:

Alert quality should be reviewed like any other KPI. If alerts do not drive faster action, they need redesign.
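Alert quality can be tracked with two simple measures: the share of alerts that led to action and the typical time to that action. The sketch below assumes each alert record stores the hours until someone acted, or `None` if nobody did. The field and function names are hypothetical.

```python
from statistics import median


def alert_quality(alerts):
    """Summarize how often alerts were acted on and how quickly."""
    if not alerts:
        raise ValueError("at least one alert is required")
    acted = [a["hours_to_action"] for a in alerts
             if a["hours_to_action"] is not None]
    return {
        "action_rate": len(acted) / len(alerts),
        "median_hours_to_action": median(acted) if acted else None,
    }


# Invented alert history for illustration.
history = [
    {"id": "A1", "hours_to_action": 2.0},
    {"id": "A2", "hours_to_action": None},
    {"id": "A3", "hours_to_action": 6.0},
    {"id": "A4", "hours_to_action": None},
]

quality = alert_quality(history)
print(quality)
```

A falling action rate is an early sign that thresholds are too sensitive or that alerts are reaching people who cannot act on them.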

Reviewing interactive dashboard examples is useful when you know what to borrow and what to ignore. The goal is not imitation. It is pattern recognition.

Several dashboard types consistently work well for leadership teams because they align with recurring executive decisions.

Revenue and growth dashboards

These typically track:

These are useful for CEOs, CROs, and finance leaders because they combine current performance with forward-looking confidence.

Operations dashboards

These often include:

These are valuable for COOs and operations heads who need to detect execution bottlenecks before customer or financial impact compounds.

Customer dashboards

These usually focus on:

These help leaders understand both current customer health and future revenue stability.

Not all polished dashboards are useful. Evaluate examples with an executive lens, not a design lens.

Ask these questions:

Many templates look good in demos but fail in practice because they ignore governance, refresh timing, and actionability.

Borrow interaction patterns, not generic content. A good template can give you structure for hierarchy, drill-down, and layout. But your metrics, thresholds, and decision rules must reflect your business model.

Use this adaptation process:

A dashboard should be proven in live decision moments before broad rollout. If executives cannot use it naturally in a real review, refine it before scaling.

Many executive dashboards fail for predictable reasons. They prioritize visual density over decision clarity.

Common mistakes include:

These failures usually show up in behavior before they show up in feedback. Executives stop opening the dashboard. Analysts keep rebuilding ad hoc reports. Meetings still revolve around arguing over the numbers.

If you want a practical implementation model, follow these consultant-grade steps:

Define the top 5 to 8 executive decisions first

Do not begin with charts or source systems. Begin with the decisions leadership must make repeatedly.

Build a KPI dictionary before the first mockup

Standardize formulas, owners, thresholds, and refresh timing so trust is built into the design.

Design the first page for scanning in under 30 seconds

The opening view should show performance, risk, and momentum immediately, with drill-down available only where needed.

Create guided drill paths for every major KPI

Ensure users can move from summary to cause logically, without resetting filters or opening external reports.

Launch with alerts, annotations, and feedback loops

Dashboards improve when leaders use them in real review cycles and provide direct input on friction points and missing context.

These steps sound simple, but execution is not. The complexity usually comes from data alignment, governance, usability design, and workflow automation.

Studying interactive dashboard examples is a smart starting point, but building an executive dashboard manually across multiple systems is difficult. You need trusted KPI logic, interactive drill-down, alerting, permissions, data refresh orchestration, and a layout executives can use without training.

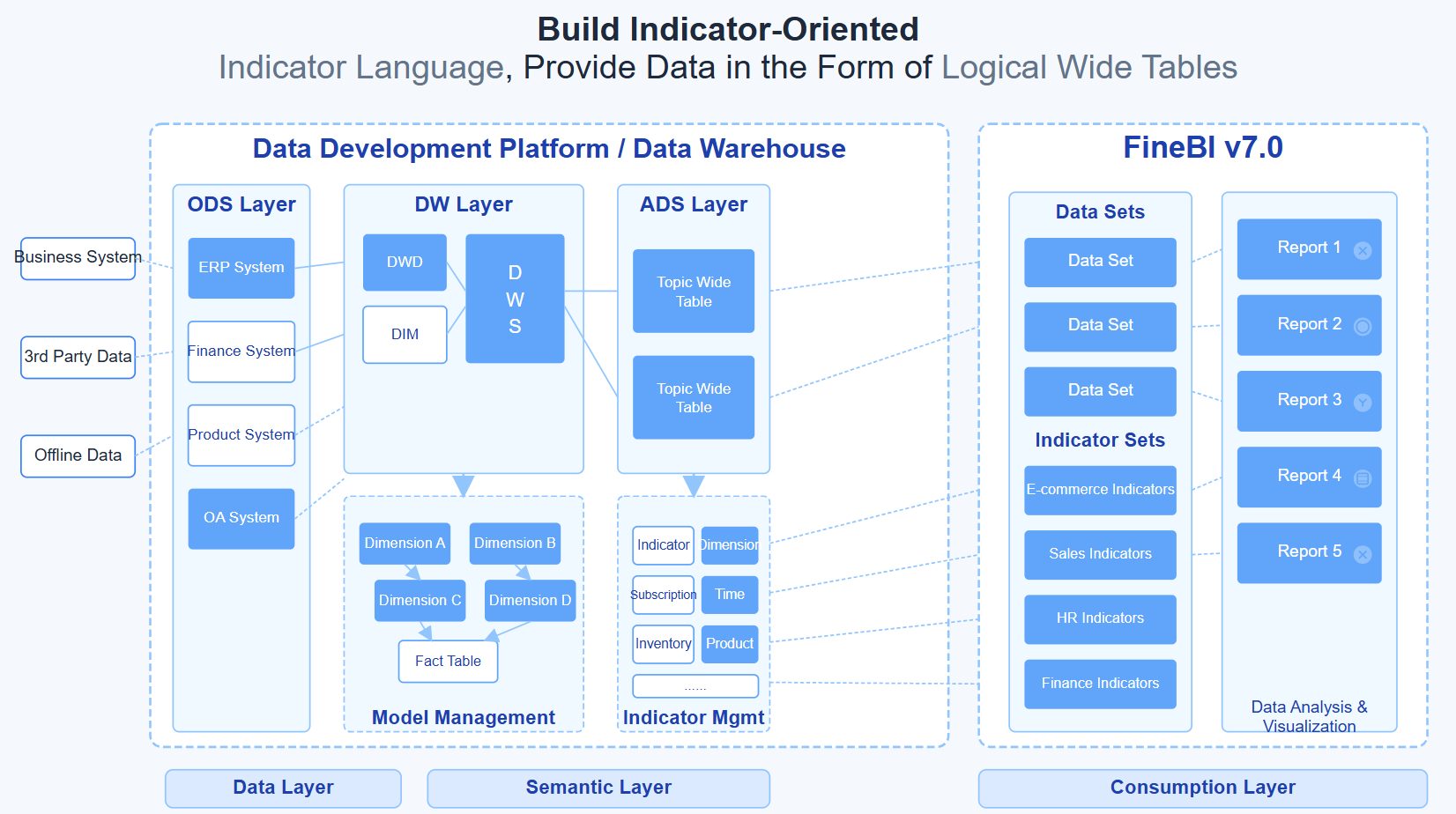

This is where FineBI becomes the practical solution.

Rather than building all of this by hand, teams can start from FineBI's ready-made templates and automate much of the workflow.

With FineBI, organizations can:

For enterprise decision-makers, the advantage is straightforward: less manual reporting, faster insight-to-action cycles, and stronger trust in the numbers. Instead of spending months stitching together a dashboard framework from scratch, teams can use FineBI to operationalize best practices from proven interactive dashboard examples and turn them into a working executive system faster.

If your current dashboard still behaves like a prettier spreadsheet, it is time to upgrade the model. The right executive dashboard should not just show performance. It should help leadership decide, act, and follow through with confidence.

An interactive executive dashboard lets leaders filter data, compare time periods, drill into problem areas, and trace KPI changes to likely causes without needing separate reports. The goal is faster investigation and action, not just better-looking charts.

Most executive dashboards work best with a focused set of high-priority KPIs rather than a crowded screen. Start with the few metrics tied directly to strategic decisions, then allow drill-down for supporting detail.

The right KPIs depend on the decisions leaders make most often, such as revenue pace, margin pressure, delivery risk, cash flow, or churn exposure. Each metric should have a clear owner, review cadence, threshold, and expected action.

Executive dashboards usually fail when they prioritize visual complexity over decision support, or when KPI definitions are inconsistent across teams. Dashboards also lose trust when data is stale or users cannot move quickly from a red metric to root cause.

Success should be measured by business outcomes such as faster decision cycles, shorter time to root cause, higher adoption, fewer manual reporting meetings, and quicker response to alerts. A dashboard is effective when it improves decisions and follow-through, not just when it gets viewed.

The Author

Yida Yin

FanRuan Industry Solutions Expert
