A cyber security dashboard only matters to executives if it converts technical noise into business decisions. Boards, CEOs, CFOs, CIOs, and operating leaders do not need another feed of alerts, blocked attacks, or scan logs. They need to understand one thing clearly: where cyber risk can disrupt revenue, operations, compliance, and customer trust, and what leadership should do next.

This is the gap many organizations struggle with. Security teams report activity. Executives are accountable for business outcomes. When those two worlds are not connected, funding gets delayed, priorities become reactive, and accountability stays vague.

A strong executive dashboard solves that problem. It gives leadership a concise, repeatable view of exposure, resilience, response capability, and business impact. It helps answer practical questions such as:

An operational security report is not the same thing as an executive dashboard. Operational reporting is built for analysts and engineering teams. It includes event volume, tool telemetry, ticket queues, and technical detail needed for daily action. An executive cyber security dashboard is different by design. It is built for decision-making, resource allocation, and governance.

The executive team does not manage firewalls, SIEM alerts, endpoint telemetry, or identity exceptions directly. But they are responsible for the consequences when those controls fail. That is why a cyber security dashboard must bridge security activity and business risk.

For executives, the value is straightforward:

The most effective dashboards also improve alignment between the CISO, CIO, risk leaders, legal, finance, and operations. Instead of debating isolated technical facts, leaders can discuss measurable exposure and resilience.

The first design rule is simple: start with executive decisions, not tool outputs.

Executives care about:

They do not need to see raw alert counts, log volume spikes, or screenshots from five security platforms. Those details may be useful in the SOC, but they rarely help a board member decide whether the organization is underinvesting in identity security, overexposed through third parties, or missing recovery targets for critical services.

A strong cyber security dashboard should therefore map security data to business questions. For example:

That is the level where executive action begins.

A useful KPI does not just report status. It shows whether risk is changing, why it matters, and who is accountable.

Every metric on an executive dashboard should ideally do three things:

This matters because static percentages without context create weak governance. A metric becomes decision-ready only when leadership can interpret it quickly.

Board and executive updates should be instantly scannable. If a dashboard requires a long verbal translation, it is too technical or too crowded.

Use these design principles:

Below are the core characteristics every KPI on the dashboard should meet:

This KPI shows what percentage of business-critical systems are protected by essential controls such as MFA, EDR, secure backups, vulnerability scanning, and logging.

Executives should care because control gaps on critical assets create outsized business risk. If a core ERP platform, payment environment, manufacturing controller, or customer-facing application lacks baseline protection, the issue is not merely technical. It is operational and financial.

What this KPI answers:

Best practice: break coverage down by control family and by critical service, not just enterprise-wide average.
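As a minimal sketch of that breakdown, the Python below computes coverage by control family and by critical service. The inventory, service names, and control labels are hypothetical placeholders, not data from any real asset system.

```python
# Hypothetical baseline controls and critical-service inventory (illustrative only).
CONTROLS = ["mfa", "edr", "secure_backups", "vuln_scanning", "logging"]

inventory = [
    {"service": "ERP", "controls": {"mfa", "edr", "secure_backups", "vuln_scanning", "logging"}},
    {"service": "Payments", "controls": {"mfa", "edr", "logging"}},
    {"service": "Customer portal", "controls": {"mfa", "vuln_scanning", "logging"}},
    {"service": "Plant controller", "controls": {"secure_backups"}},
]


def coverage_by_control(assets, controls):
    """Percentage of critical services that have each control in place."""
    total = len(assets)
    return {c: 100.0 * sum(c in a["controls"] for a in assets) / total for c in controls}


def coverage_by_service(assets, controls):
    """Percentage of baseline controls in place on each critical service."""
    wanted = set(controls)
    return {a["service"]: 100.0 * len(a["controls"] & wanted) / len(wanted) for a in assets}


by_control = coverage_by_control(inventory, CONTROLS)
by_service = coverage_by_service(inventory, CONTROLS)
enterprise_average = sum(by_service.values()) / len(by_service)

print(by_control)
print(by_service)
print(f"Enterprise average: {enterprise_average:.0f}%")
```

In this made-up data the enterprise average is 60%, which hides the fact that the plant controller has only one of five baseline controls. That is the reason to report by service as well as by control.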

Not all vulnerabilities are equal. The executive dashboard should prioritize unresolved high-risk vulnerabilities based on:

This KPI helps leaders understand where vulnerability debt could materially affect operations, customer trust, or contractual obligations.

What this KPI answers:

A mature view avoids reporting giant backlog numbers without context. What matters is the exposure on critical systems.

Mean time to detect measures how quickly threats affecting key systems are identified.

For executives, this is not a SOC vanity metric. It is a measure of how long the business may remain exposed before action begins. Shorter detection windows generally reduce downstream impact.

What this KPI answers:

Trend lines matter more than isolated numbers. The board should see whether detection capability is strengthening over time.
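One way to produce that trend, sketched here under simple assumptions, is to average detection delay per month. The incident records and field names (`occurred`, `detected`) are hypothetical. In practice the start time of an intrusion is often an estimate.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean

# Hypothetical incident records; timestamps are ISO 8601 strings.
incidents = [
    {"occurred": "2024-01-03T08:00", "detected": "2024-01-03T20:00"},  # 12 hours
    {"occurred": "2024-01-15T10:00", "detected": "2024-01-16T10:00"},  # 24 hours
    {"occurred": "2024-02-02T09:00", "detected": "2024-02-02T15:00"},  # 6 hours
    {"occurred": "2024-02-20T00:00", "detected": "2024-02-20T10:00"},  # 10 hours
]


def mttd_hours_by_month(records):
    """Mean time to detect, in hours, grouped by the month of detection."""
    buckets = defaultdict(list)
    for record in records:
        occurred = datetime.fromisoformat(record["occurred"])
        detected = datetime.fromisoformat(record["detected"])
        hours = (detected - occurred).total_seconds() / 3600
        buckets[detected.strftime("%Y-%m")].append(hours)
    return {month: mean(values) for month, values in sorted(buckets.items())}


trend = mttd_hours_by_month(incidents)
for month, hours in trend.items():
    print(f"{month}: {hours:.1f} h")
```

With these sample records the monthly figure falls from 18 hours to 8 hours, which is the kind of direction-of-travel signal a board can read at a glance.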

Mean time to contain shows how quickly the organization can isolate affected assets, stop lateral movement, or reduce attacker access after a threat is identified.

This KPI directly reflects the duration of business exposure during an incident. The longer containment takes, the more opportunity an attacker has to expand impact.

What this KPI answers:

For executive reporting, frame this as exposure duration, not just security team speed.

Mean time to recover tracks how long critical services take to return to normal after an incident.

This is one of the clearest measures of cyber resilience because it ties directly to continuity, customer commitments, service-level expectations, and financial performance.

What this KPI answers:

Recovery should be measured at the service level, not as a generic IT average.
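The sketch below illustrates why a service-level view matters. It compares mean recovery time per service against an assumed recovery target. The services, durations, and targets are all invented for illustration.

```python
from statistics import mean

# Hypothetical recovery durations in hours for past incidents, per critical service.
recoveries = {
    "Payments": [2.0, 4.0, 3.0],
    "ERP": [10.0, 14.0],
    "Customer portal": [1.0, 1.5],
}

# Assumed recovery targets in hours; real targets come from business continuity planning.
targets_hours = {"Payments": 4.0, "ERP": 8.0, "Customer portal": 2.0}


def mttr_report(durations, targets):
    """Mean time to recover per service, flagged against that service's target."""
    report = {}
    for service, hours in durations.items():
        average = mean(hours)
        report[service] = {
            "mttr_hours": average,
            "target_hours": targets[service],
            "within_target": average <= targets[service],
        }
    return report


report = mttr_report(recoveries, targets_hours)
overall = mean([h for hours in recoveries.values() for h in hours])

for service, row in report.items():
    flag = "ok" if row["within_target"] else "MISSING TARGET"
    print(f"{service}: {row['mttr_hours']:.2f} h (target {row['target_hours']:.0f} h) {flag}")
print(f"Generic average across all incidents: {overall:.1f} h")
```

In this example the generic average looks acceptable, while the ERP service is missing its target by several hours. Only the per-service view exposes that gap.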

This KPI breaks incidents down by severity and business impact rather than reporting only total count.

Total incident volume often misleads executives. A rise in low-level events may not matter. A small increase in severe incidents absolutely does.

What this KPI answers:

A practical severity model should incorporate:

This metric should track both employee failure rates and employee reporting rates for suspicious messages.

The executive value here is human risk, not training completion. A business with a moderate click rate but strong reporting behavior may be more resilient than one with high completion statistics and weak real-world reporting.

What this KPI answers:

The dashboard should segment this by high-risk populations such as finance, executives, privileged users, and customer support.
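A rough sketch of that segmentation follows. The campaign numbers are hypothetical, and "failure" is assumed here to mean clicking a simulated phishing link. Your own definition may differ.

```python
# Hypothetical results from one simulated phishing campaign, by population.
campaign = [
    {"segment": "finance", "sent": 200, "clicked": 18, "reported": 120},
    {"segment": "executives", "sent": 40, "clicked": 6, "reported": 10},
    {"segment": "privileged users", "sent": 50, "clicked": 2, "reported": 40},
    {"segment": "customer support", "sent": 300, "clicked": 45, "reported": 90},
]


def segment_rates(rows):
    """Failure and reporting rates, as percentages of messages sent, per segment."""
    return {
        row["segment"]: {
            "failure_rate": 100 * row["clicked"] / row["sent"],
            "reporting_rate": 100 * row["reported"] / row["sent"],
        }
        for row in rows
    }


rates = segment_rates(campaign)
for segment, r in rates.items():
    print(f"{segment:18} failed {r['failure_rate']:.1f}%  reported {r['reporting_rate']:.1f}%")
```

Showing both rates side by side makes it visible when a group, such as executives in this invented data, combines a high failure rate with weak reporting.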

Identity is often the shortest path to material compromise. This KPI should summarize:

What this KPI answers:

For executive teams, identity risk should be linked to crown-jewel systems and sensitive business processes.

Many organizations inherit cyber risk through vendors, service providers, software dependencies, and outsourced operations. This KPI should show exposure created by critical third parties, open findings, and concentration risk.

What this KPI answers:

This is especially important in regulated sectors and complex service environments where third-party outages or breaches can create compliance and customer fallout.

Executives do not just need to know whether controls exist. They need to know whether those controls reduce top risks effectively.

This KPI should connect priority risks to:

What this KPI answers:

This is one of the strongest KPIs for budget discussions because it links security spend to measurable risk reduction.

This KPI tracks overdue remediation items, repeat exceptions, open policy waivers, and findings tied to legal, regulatory, audit, or contractual obligations.

Executives should not be flooded with minor administrative findings. They should see only the exceptions that create meaningful business exposure.

What this KPI answers:

The most useful view separates low-impact housekeeping issues from material non-compliance.

This KPI translates cyber exposure into business language by estimating likely impact across top loss scenarios.

Scenario examples may include:

What this KPI answers:

This metric is especially effective with finance and board audiences because it reframes cyber security dashboard reporting around probable loss, not technical complexity.
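One simple way to express probable loss, shown here as a sketch rather than a prescribed method, is expected annual loss: estimated impact multiplied by estimated annual likelihood. The scenario names, likelihoods, and impact figures below are invented for illustration. Single-point estimates like these are a simplification, and many risk teams prefer ranges.

```python
# Hypothetical loss scenarios with assumed likelihood (percent per year) and impact.
scenarios = [
    {"name": "Customer-facing application outage", "annual_likelihood_pct": 10, "estimated_impact": 2_000_000},
    {"name": "Critical third-party breach", "annual_likelihood_pct": 5, "estimated_impact": 5_000_000},
    {"name": "ERP platform outage", "annual_likelihood_pct": 2, "estimated_impact": 20_000_000},
]


def expected_annual_loss(scenario):
    """Expected annual loss = estimated impact x annual likelihood."""
    return scenario["estimated_impact"] * scenario["annual_likelihood_pct"] / 100


ranked = sorted(scenarios, key=expected_annual_loss, reverse=True)
total_exposure = sum(expected_annual_loss(s) for s in scenarios)

for s in ranked:
    print(f"{s['name']}: {expected_annual_loss(s):,.0f}")
print(f"Total expected annual loss: {total_exposure:,.0f}")
```

Ranking scenarios this way shows that the least likely event in this sample carries the largest expected loss, which is a useful prompt for a funding conversation.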

A clean executive dashboard should group KPIs into four decision-oriented views:

This view shows where the organization is vulnerable today.

Include metrics such as:

This view shows whether the organization is prepared to resist and absorb disruption.

Include metrics such as:

This view shows how well the organization detects, contains, and recovers from real incidents.

Include metrics such as:

This view shows the likely consequence of cyber events in terms executives already use.

Include metrics such as:

This structure makes the dashboard easier to scan and discuss in leadership meetings.

A dashboard without targets is just a scorecard. Executives need to know what “good” looks like, when to escalate, and what action is expected.
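A minimal sketch of turning targets into a red, amber, or green status might look like the following. The `Threshold` class, its field names, and the sample values are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Threshold:
    """Hypothetical threshold definition for one KPI."""
    target: float                 # the value that counts as "good"
    escalate: float               # beyond this value, the KPI turns red
    higher_is_better: bool = True


def status(value: float, threshold: Threshold) -> str:
    """Map a KPI value to green, amber, or red."""
    if threshold.higher_is_better:
        if value >= threshold.target:
            return "green"
        return "amber" if value >= threshold.escalate else "red"
    if value <= threshold.target:
        return "green"
    return "amber" if value <= threshold.escalate else "red"


# Coverage-style KPI: higher is better.
coverage = Threshold(target=95.0, escalate=85.0)
# Time-style KPI such as recovery hours: lower is better.
recovery_hours = Threshold(target=8.0, escalate=10.0, higher_is_better=False)

print(status(92.0, coverage))        # amber
print(status(12.0, recovery_hours))  # red
```

Keeping the direction (`higher_is_better`) explicit avoids a common mistake where time-based metrics are colored with the same logic as percentage-based ones.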

Each KPI should include:

Also add short commentary, such as:

One of the most common design failures is trying to satisfy every audience with one dashboard.

A board package should stay focused on strategic risk and business impact. The CIO and CISO may need more detail. Security operations and engineering teams need much deeper technical drill-downs.

A good model is layered:

This prevents the executive cyber security dashboard from turning into a crowded SOC monitor.

Many dashboard initiatives fail not because of bad tools, but because of poor design logic. The usual mistakes are predictable.

If every available metric is included, nothing stands out. Executives stop engaging when dashboards become dense collections of security trivia.

The test is simple: if a metric does not support a funding, governance, or risk decision, it likely does not belong in the executive view.

Blocked attacks, patch counts, login attempts, and event volumes can be useful operationally. But they often say little about actual business exposure.

Executives need to see whether the organization is becoming safer, more resilient, and more accountable, not just busier.

Different stakeholders need different levels of abstraction. A board dashboard should not read like a SOC queue. Likewise, analysts cannot work effectively from high-level executive charts alone.

Build dashboards by audience and decision type.

Even a well-designed cyber security dashboard loses credibility if data is stale, definitions keep changing, or nobody owns the outcome.

Every KPI needs:

Dashboards should influence how the organization allocates budget, prioritizes remediation, and reviews accountability. If the dashboard is only produced for board meetings, it becomes performative rather than useful.

The best dashboards evolve with the business, threat landscape, operating model, and regulatory pressure.

A practical rollout beats a perfect theoretical model. Most organizations should begin with a minimum viable executive dashboard and mature it through monthly use.

Identify the services, processes, and obligations that matter most to the business. Then select a compact KPI set aligned to those risks.

In most cases, starting with 8 to 12 strong KPIs is enough.

Before you build visuals, agree on:

This avoids the common problem of dashboard disputes caused by inconsistent metric logic.
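One way to keep metric logic consistent is to record each KPI definition in a single shared structure before any chart is built. The sketch below assumes a small set of fields (formula, data source, owner, refresh interval). These are illustrative choices, not a required schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class KpiDefinition:
    """Hypothetical record describing how one KPI is calculated and maintained."""
    name: str
    formula: str        # plain-language calculation rule agreed by stakeholders
    data_source: str    # system the numbers come from
    owner: str          # role accountable for the KPI
    refresh_days: int   # maximum acceptable age of the data, in days

    def is_stale(self, last_refreshed: date, today: date) -> bool:
        """True when the data is older than the agreed refresh interval."""
        return today - last_refreshed > timedelta(days=self.refresh_days)


mttr = KpiDefinition(
    name="Mean time to recover (critical services)",
    formula="Average hours from incident declaration to service restoration",
    data_source="Incident management system",
    owner="Head of IT Operations",
    refresh_days=30,
)

print(mttr.is_stale(last_refreshed=date(2024, 1, 1), today=date(2024, 2, 15)))  # True
print(mttr.is_stale(last_refreshed=date(2024, 2, 1), today=date(2024, 2, 15)))  # False
```

Marking the record as frozen means a definition cannot be changed silently in code. A change requires creating a new definition, which makes it visible in review.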

A cyber security dashboard becomes valuable when it drives recurring management conversations. Monthly review is usually the right starting point for executive teams.

Use the review to answer:

If a KPI never influences action, revise or remove it. If leadership keeps asking a follow-up question, add that context to the dashboard.

The best dashboards are shaped by decision patterns, not by security tool capabilities.

External examples can be helpful, but they are often misleading without context. Your first benchmark should be your own target state, risk appetite, and critical service requirements.

Once internal baselines are stable, external benchmarking becomes more meaningful.

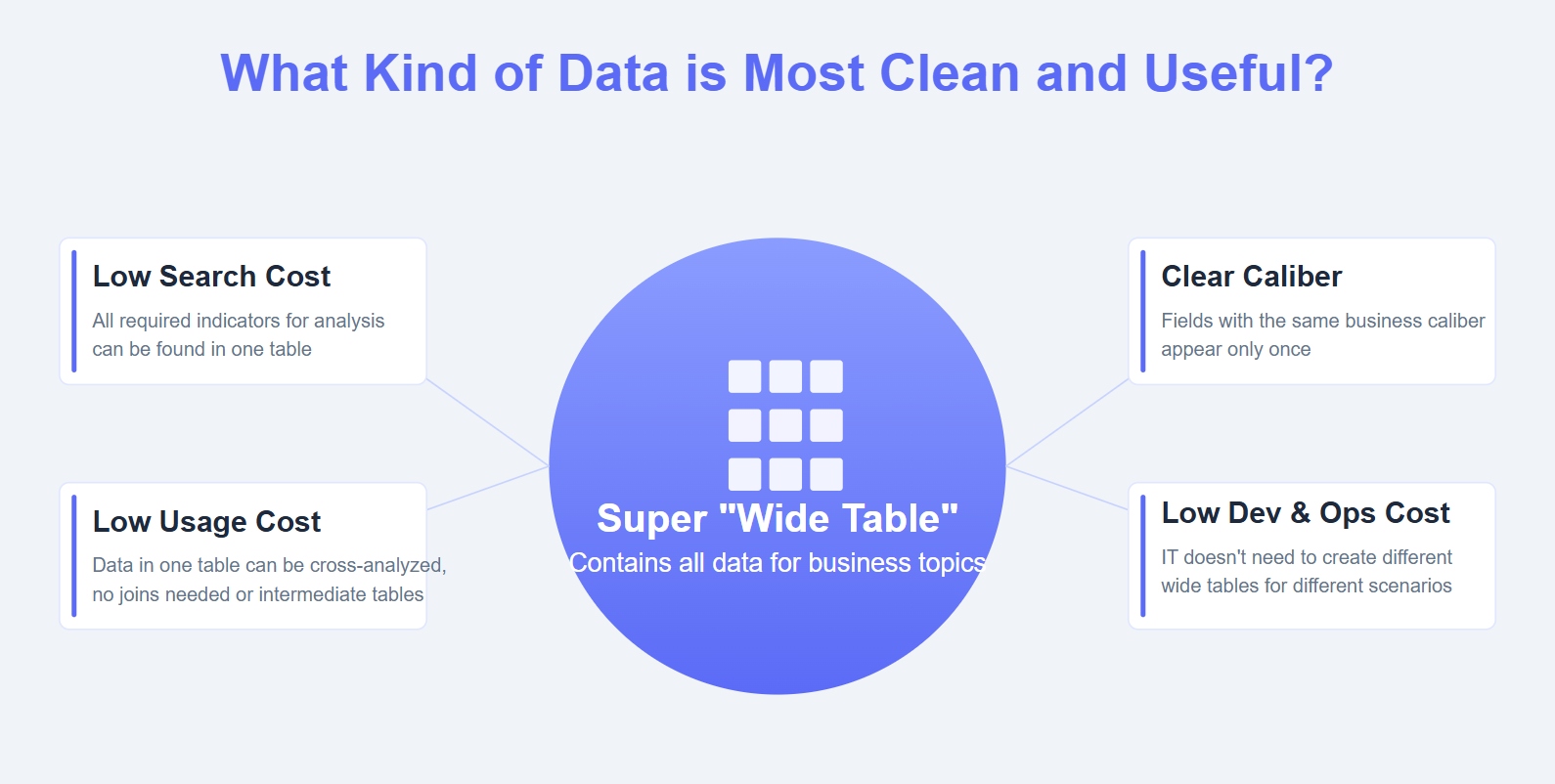

Building this manually is complex. You need to integrate multiple data sources, normalize KPI definitions, maintain refresh schedules, design executive-ready visuals, and keep reporting consistent across stakeholders. That is a heavy lift for already stretched security, IT, risk, and BI teams.

This is where FineBI becomes the practical enabler.

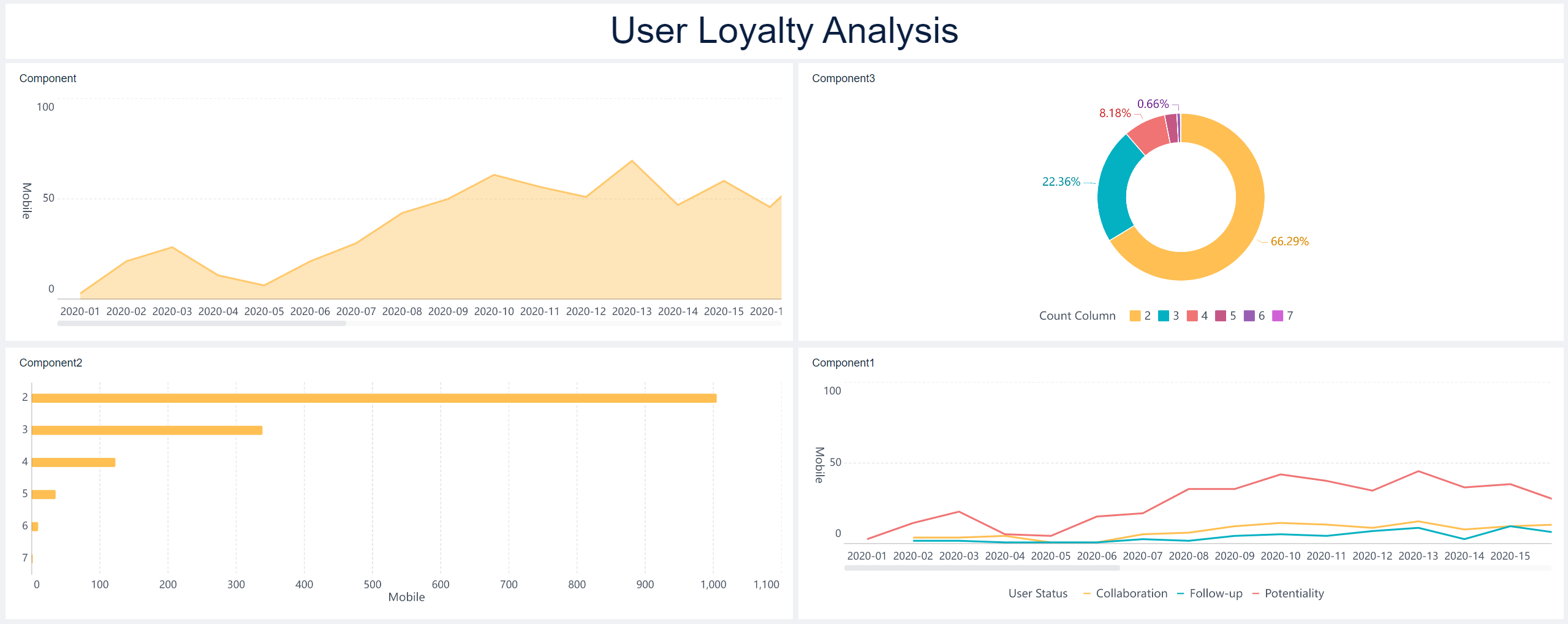

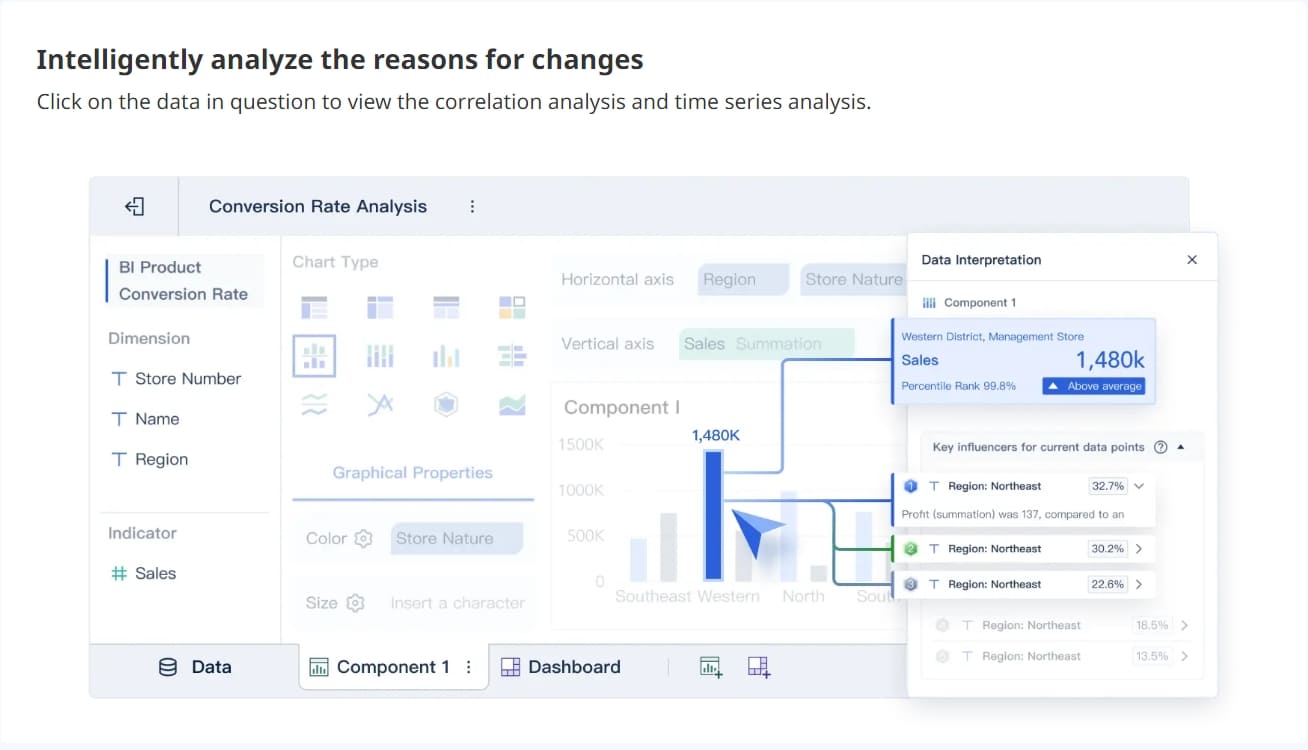

With FineBI, organizations can use ready-made templates and automate this entire workflow instead of assembling a fragile dashboard process from spreadsheets, slide decks, and disconnected security tools. For executive cyber security dashboard use cases, FineBI helps teams:

That matters because a high-value dashboard is not just a visualization project. It is an operational management system for cyber risk.

If your team is still stitching together board updates by hand, the process is likely slow, inconsistent, and difficult to scale. FineBI provides a more reliable model: centralize the data, automate the reporting logic, and deliver a cyber security dashboard that translates technical activity into business risk with far less manual effort.

The goal is not to show more data. The goal is to help leadership make better decisions, faster. FineBI is how you get there.

It should show business risk, resilience, and accountability rather than raw tool data. The most useful dashboards connect exposure to critical services, financial impact, compliance risk, and named owners.

An executive dashboard is built for decisions on funding, risk acceptance, and oversight, while an operational dashboard supports daily technical work. Executives need concise trends, thresholds, and business impact instead of alert volume and detailed telemetry.

The strongest KPIs usually measure exposure in critical assets, incident response speed, recovery readiness, unresolved high-risk vulnerabilities, third-party risk, and compliance gaps tied to business impact. Each one should also show trend and ownership so leaders know what action is needed.

Keep it limited to a small set of high-value KPIs that leaders can scan quickly and use to make decisions. Too many metrics usually create noise and make it harder to identify material risk.

Most organizations review it on a regular executive cadence such as monthly or quarterly, with immediate escalation for red-status issues. The right frequency depends on risk exposure, reporting obligations, and how fast conditions change in the business.

The Author

Yida Yin

FanRuan Industry Solutions Expert
